Apple looking to improve video quality with advanced sensors

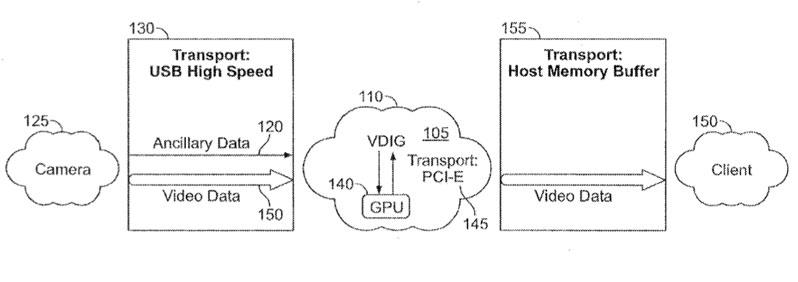

A new patent application revealed this week, entitled "Video Acquisition with Processing Based on Ancillary Data," describes advanced techniques for processing video and improving picture quality. It describes plugging a camera or mobile device into a computer, which would then read "ancillary data" recorded by a device such as an iPhone.

That data could be processed and used to help improve the image quality of the final product. Using the data, the computer could filter and/or blend the images, or use a whole range of other tools, to give even amateur videographers the best picture quality possible.

Tools such as image stabilization have long been part of Apple's video editing software, including Final Cut Pro and iMovie '09. But the newly described method would use sensors in a mobile device, like an iPhone, to improve picture quality even further.

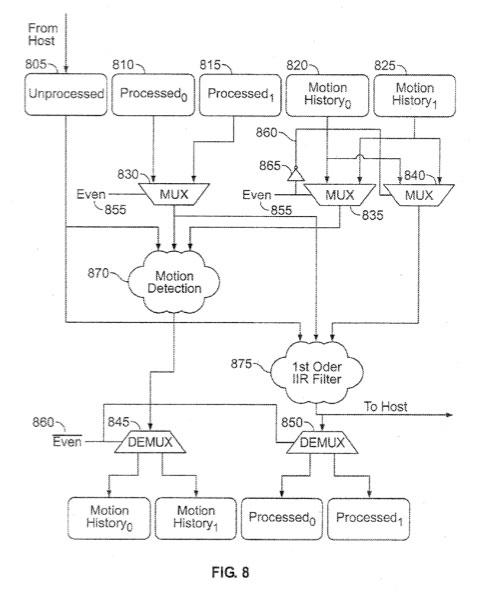

The system could detect motion or a change in lighting conditions, and accordingly blend frames from the video to lessen the effect. But it could also draw on less traditional inputs, such as location and temperature, that could have an effect on picture quality.
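
To make the frame-blending idea concrete, here is a minimal sketch in Python. It is not taken from the application: the function names, the normalized `motion` reading, and the `max_weight` cap are all assumptions chosen for illustration. It mixes each pixel of the current frame with the previous frame, leaning more on the previous frame as the recorded motion increases.

```python
def blend_weight(motion, max_weight=0.5):
    """Map a motion reading in [0, 1] to a blend weight in [0, max_weight].

    Both the normalized reading and the cap are hypothetical values,
    not figures from the patent application.
    """
    clamped = min(max(motion, 0.0), 1.0)
    return clamped * max_weight


def blend_frames(previous, current, motion):
    """Blend two grayscale frames (lists of rows of pixel values).

    With no motion the current frame passes through unchanged; with more
    motion, a larger share of the previous frame is mixed in.
    """
    w = blend_weight(motion)
    return [
        [(1.0 - w) * c + w * p for p, c in zip(prev_row, cur_row)]
        for prev_row, cur_row in zip(previous, current)
    ]


if __name__ == "__main__":
    previous = [[0.0, 100.0]]
    current = [[100.0, 100.0]]
    print(blend_frames(previous, current, motion=0.0))
    print(blend_frames(previous, current, motion=1.0))
```

A real pipeline would likely align the frames before blending and work on full-color images, but the weighting step would look broadly similar.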

"The image sensor and/or other components are subject to temperature outside of predetermined thermal specifications, which can cause some fixed pattern noise in the detected images," the application reads. "Such fixed pattern noise can show up as red and blue pixels at fixed locations that vary between sensors and can be exacerbated in low light and high heat."

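One way a recorded temperature reading could drive the cleanup of fixed pattern noise is sketched below. This is an illustration under stated assumptions, not the method in the application: the per-sensor list of noisy pixel locations, the `threshold_c` value, and the median-of-neighbors repair are all hypothetical choices. When the recorded temperature exceeds the threshold, each known noisy pixel is replaced with the median of its clean neighbors.

```python
from statistics import median


def correct_fixed_pattern(frame, noisy_pixels, temperature_c, threshold_c=45.0):
    """Return a copy of a single-channel frame with known noisy pixels repaired.

    frame         -- list of rows of pixel values
    noisy_pixels  -- (row, column) locations assumed known for this sensor
    temperature_c -- temperature recorded alongside the video (ancillary data)
    threshold_c   -- hypothetical limit above which correction is applied
    """
    result = [row[:] for row in frame]
    if temperature_c <= threshold_c:
        return result  # within the assumed thermal limit: leave frame as is

    height, width = len(frame), len(frame[0])
    noisy = set(noisy_pixels)
    for y, x in noisy:
        # Collect the surrounding pixels that are not themselves flagged.
        neighbors = [
            frame[ny][nx]
            for ny in range(max(0, y - 1), min(height, y + 2))
            for nx in range(max(0, x - 1), min(width, x + 2))
            if (ny, nx) not in noisy
        ]
        if neighbors:
            result[y][x] = median(neighbors)
    return result


if __name__ == "__main__":
    frame = [[10, 10, 10], [10, 255, 10], [10, 10, 10]]
    print(correct_fixed_pattern(frame, [(1, 1)], temperature_c=60.0))
```

Because the noisy locations are fixed for a given sensor, the map would only need to be measured once, while the temperature reading decides frame by frame whether the correction is worth applying.
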
The application states that charge-coupled device (CCD) sensors provide relatively high image quality, but are more expensive than complementary metal-oxide-semiconductor (CMOS) sensors. CMOS sensors also require less power, but produce a lower quality image than CCD sensors, particularly in low light.

"The described techniques and systems can be used to enable CMOS sensors to be used in place of relatively expensive CCD sensors, such as to achieve comparable quality from a CMOS sensor as from a CCD sensor," the application reads. "In particular, processing can be performed using software, hardware, or a combination of the two to filter out noise, which is increasingly present as light levels decrease; increase dynamic range; improve the overall image quality and/or color fidelity; and/or perform other image processing."

Revealed by the U.S. Patent and Trademark Office this week, the patent application was filed by Apple on May 3 of this year. The invention is credited to Alexei V. Ouzilevski, Fernando Urbina, Brett Bilbrey and Jay Zipnick.

Neil Hughes
