Apple exploring 3D frame-of-reference iOS interface based on eye, light location

Apple's concept was revealed this week in a new patent application discovered by AppleInsider. Entitled "Three Dimensional User Interface Effects on a Display by Using Properties of Motion," it describes a system that relies on a number of sensors, including eye tracking with a forward-facing camera, to display a user interface that automatically reacts to the world around it.

The application notes that devices like the iPhone can do many unique things thanks to the plethora of sensors built into them, such as compasses, accelerometers, gyroscopes, GPS and cameras. Face detection is also possible, allowing a device to detect when a user is positioned in front of it.

"However, current systems do not take into account the location and position of the device on which the virtual 3D environment is being rendered," the filing reads, "in addition to the location and position of the user of the device, as well as the physical and lighting properties of the user's environment in order to render a more interesting and visually appealing interactive virtual 3D environment on the device's display."

Apple's proposed invention is a user interface that would continuously track the movement of a portable device, such as an iPhone, along with the ambient lighting conditions and the position of the user's eyes. Using these inputs, Apple could create more realistic three-dimensional depictions of objects on the screen.

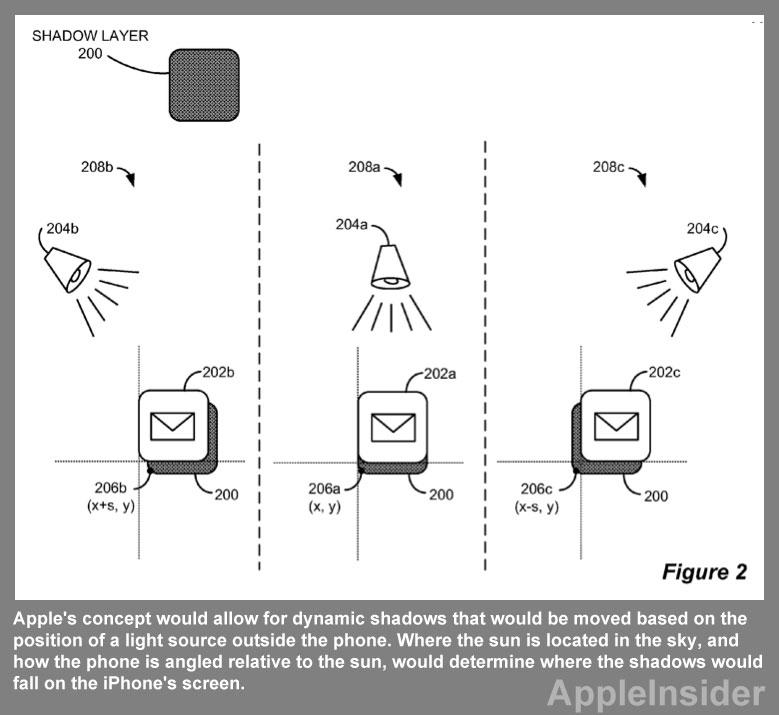

In one of the simpler examples presented in the filing, Apple's concept would allow for dynamic shadows that move based on the position of a light source outside the phone. Where the sun is located in the sky, and how the phone is angled relative to it, would determine where shadows fall on the iPhone's screen.

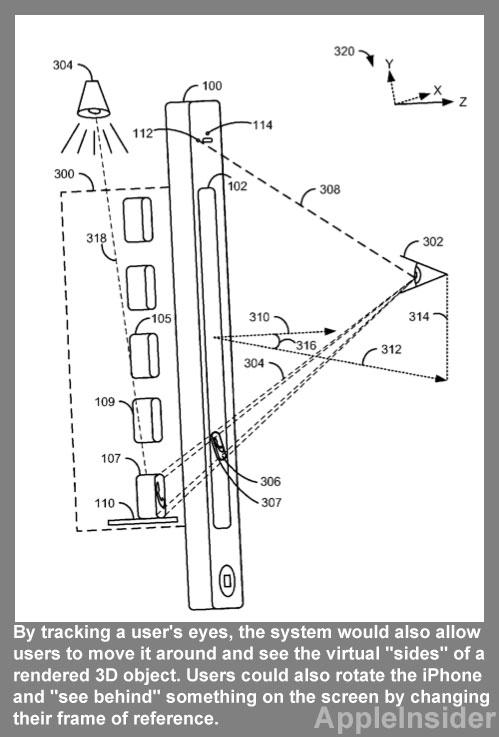

By tracking a user's eyes, the system would also let users move the device around to see the virtual "sides" of a rendered 3D object. Users could also rotate the iPhone to "see behind" something on the screen by changing their frame of reference.

In another example, the 3D operating system would feature a recessed "bento box" that could be well suited to modular interfaces. The user could rotate an iPhone to look into the various "cubby holes" within the virtual bento box.

"It would also be then possible, via the use of a front-facing camera, to have visual 'spotlight' effects follow the user's gaze, i.e., by having the spotlight effect 'shine' on the place in the display that the user is currently looking into," the filing reads.

The filing also takes into account the effect this operating system could have on the battery life of a portable iOS-based device. To prevent overuse of an iPhone's graphics processing unit, the 3D effects could be turned on or off quickly with a simple gesture.

By performing a gesture such as a "wave," made by quickly turning the iPhone, the user could have the 3D user interface "unfrozen" and enabled. Repeating the gesture would have the display "slowly transition back to a standard orientation."

The filing also notes that the 3D user interface could be implemented on desktop machines, even though such computers are not portable. In that case, a forward-facing camera would still track the user's head and eye movements.

The filing, made public this week by the U.S. Patent and Trademark Office, was originally filed in August of 2010. It is credited to inventors Mark Zimmer, Geoff Stahl, David Hayward, and Frank Doepke.

Neil Hughes