Apple's touchscreens can measure not only where you tap but also how hard you tap, and that velocity-sensing capability may become even more advanced in the future, according to a newly published patent application.

Apple already offers velocity sensitivity in its GarageBand app for iOS. With that feature, the sound produced by pressing a key on a virtual keyboard or hitting the snare on a virtual drum can be louder or softer depending on how hard the user strikes it.

Apple's current method relies on the accelerometers built into its iOS devices. But a patent application published this week by the U.S. Patent and Trademark Office suggests Apple could also determine touch velocity using a gyroscope, microphone, Hall effect sensor, compass, ambient light sensor, proximity sensor, camera, or positioning system.

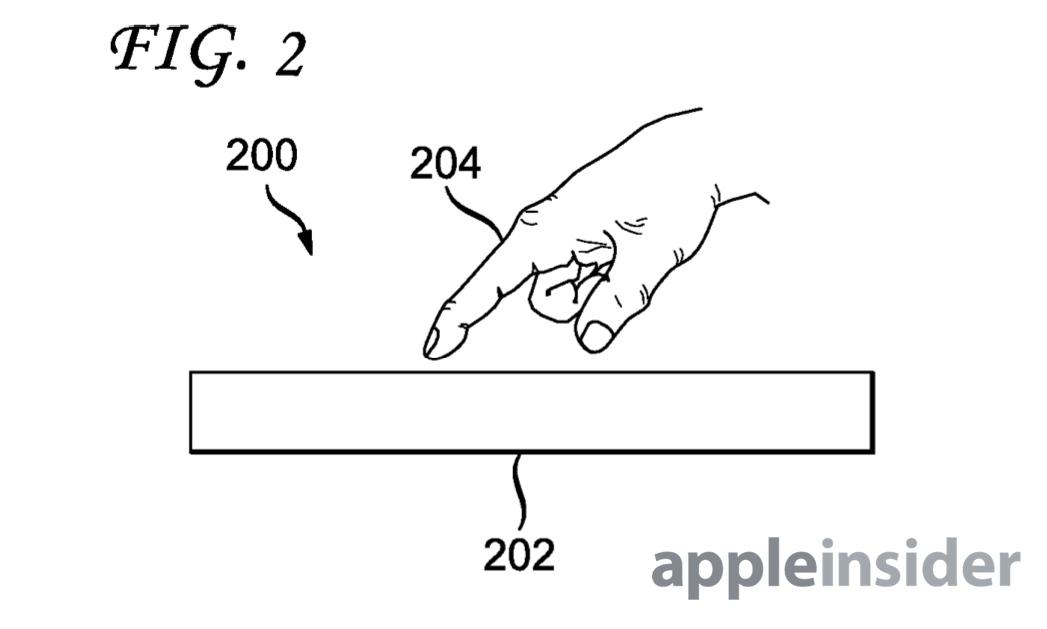

The filing, entitled "System and Method for Enhancing Touch Input," describes a processing algorithm that would estimate the velocity of a touch input. Sensors within an iOS device or another device would allow the system to determine that velocity even if the screen itself is not pressure-sensitive.

The application notes that touch-velocity sensing has uses well beyond music, the obvious case Apple has showcased in GarageBand. Other examples Apple gives include art applications, where the force of a brush stroke could be measured, and games, where the software could offer more advanced controls by sensing how hard the player taps the screen.

Apple notes that sensing velocity with a built-in accelerometer alone has limited accuracy because the calculation is based on a single dimension: the Z-axis. By drawing on additional sensors, Apple could provide a more accurate reading of touch velocity.
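To illustrate why extra sensors can help, here is a minimal Python sketch. It is not Apple's method; the filing's actual algorithm isn't described here. The function names, the idea of a per-sensor variance, and the sample numbers are all hypothetical. One function takes the peak Z-axis spike from accelerometer samples as a crude tap-intensity estimate. The other combines several independent estimates using inverse-variance weighting, a standard sensor-fusion technique in which the combined variance is always smaller than that of any single input.

```python
def peak_z_intensity(z_samples, baseline=0.0):
    """Hypothetical single-sensor estimate: the largest deviation of
    Z-axis acceleration from its resting baseline during a tap."""
    return max(abs(z - baseline) for z in z_samples)


def fuse_estimates(estimates):
    """Inverse-variance weighted average of independent estimates.

    `estimates` is a list of (value, variance) pairs, one per sensor
    (the variances are assumed to be known). Returns the fused value
    and its variance, which is never larger than the smallest input
    variance.
    """
    weights = [1.0 / var for _, var in estimates]
    total = sum(weights)
    fused = sum(w * val for w, (val, _) in zip(weights, estimates)) / total
    return fused, 1.0 / total


# Hypothetical example: an accelerometer reading plus a noisier
# second sensor (say, a microphone-based estimate).
accel = peak_z_intensity([0.02, -0.4, 0.9, 0.3])  # 0.9
value, variance = fuse_estimates([(accel, 0.04), (0.7, 0.09)])
print(value, variance)
```

The weighting gives the more trustworthy sensor more influence, and adding any independent sensor shrinks the combined variance. That is the intuition behind Apple's point that a single Z-axis reading is less accurate than a reading drawn from several sensors.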

The proposed invention, published this week by the USPTO, was first filed by Apple in April 2012. It is credited to Elliott Harris and Robert Michael Chin.