The details come from a handful of patent applications revealed this week by the U.S. Patent and Trademark Office. Discovered on Thursday by AppleInsider, they offer a glimpse of how Apple's rumored voice control system — which may be a secret, unannounced feature coming to iOS 5 later this year — could work.

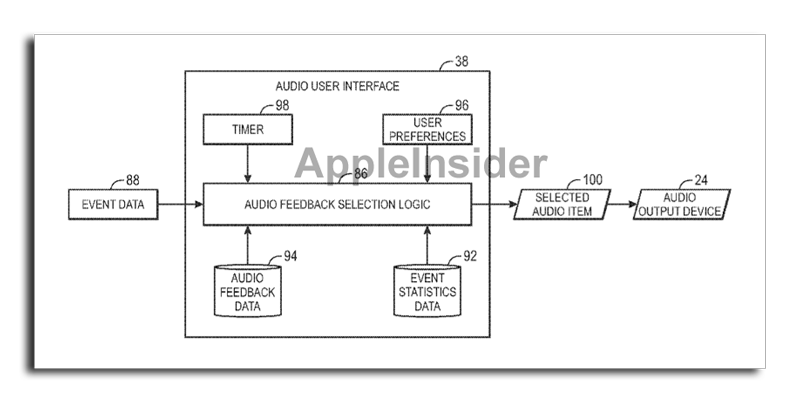

The first application, entitled Adaptive Audio Feedback System and Method, notes that current audio feedback systems are inefficient, particularly when dealing with items that may contain a large amount of information. Presenting so much to a user audibly can be overwhelming and result in a negative experience.

In addition, relying on audio prompts for a user interface can result in certain information not being appropriately conveyed. For example, audio feedback may not adequately distinguish between prompts of high importance and those that matter less.

Apple's solution looks to cut down on the verbosity, or "wordiness," of audio feedback systems, making them more efficient and less frustrating for the user. In one method, the system would recognize if a list of data — such as a selection of songs being presented to the user audibly — contained repetitive information, such as the artist or album name.

Such a system would recognize that a user has already been presented with certain information, whatever the context, and would spare them from hearing it again. This could apply to menu navigation, alerts and prompts, and more, in a method referred to as "stepping down."

"If a subsequent occurrence of the user interface event occurs in relatively close proximity to a previous occurrence of the user interface event, the audio user interface may devolve the audio feedback (e.g. by reducing verbosity), such as to avoid overwhelming a user with repetitive and highly verbose information," the application reads.

In one specific example provided, the audio prompt "Genius is not available" is replaced by a shorter, more efficient "No Genius." This could be made even more efficient in the form of an audible cue, such as a negative sounding tone or beep.
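The stepping-down behavior can be illustrated with a minimal sketch. This is not code from the filing: the class name `AdaptiveFeedback`, the 30-second proximity window, the `<negative tone>` placeholder, and the choice to reset to the full prompt once the window has elapsed are all assumptions made for illustration. Only the two spoken prompts come from the application's example.

```python
from dataclasses import dataclass
from typing import List, Optional

# Feedback for one event, ordered from most to least verbose.
# The two spoken prompts are from the application's example;
# "<negative tone>" is a hypothetical stand-in for a non-verbal cue.
GENIUS_LEVELS = ["Genius is not available", "No Genius", "<negative tone>"]


@dataclass
class AdaptiveFeedback:
    levels: List[str]
    step_down_window: float = 30.0  # seconds; an assumed value
    _level: int = 0
    _last_time: Optional[float] = None

    def feedback(self, now: float) -> str:
        """Return the feedback to present for an event occurring at `now`."""
        recent = (
            self._last_time is not None
            and now - self._last_time <= self.step_down_window
        )
        if recent:
            # Same event again in close proximity: reduce verbosity one step.
            self._level = min(self._level + 1, len(self.levels) - 1)
        else:
            # Assumed policy: return to the full prompt after a long gap.
            self._level = 0
        self._last_time = now
        return self.levels[self._level]
```

Calling `feedback` at 0, 5 and 8 seconds would walk from the full sentence to the short phrase to the tone; a call much later would start over with the full sentence.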

Such a system could also work in reverse. An iPhone could "step up" the verbosity of an item based on user preferences, such as to convey more important information or alerts to users.

This dynamic system would spare users the need to repeatedly hear the same audio prompts, particularly when they are performing a task on their iPhone that they already know well. Shorter prompts are not only less bothersome to the user, but could also make hands-free navigation faster.

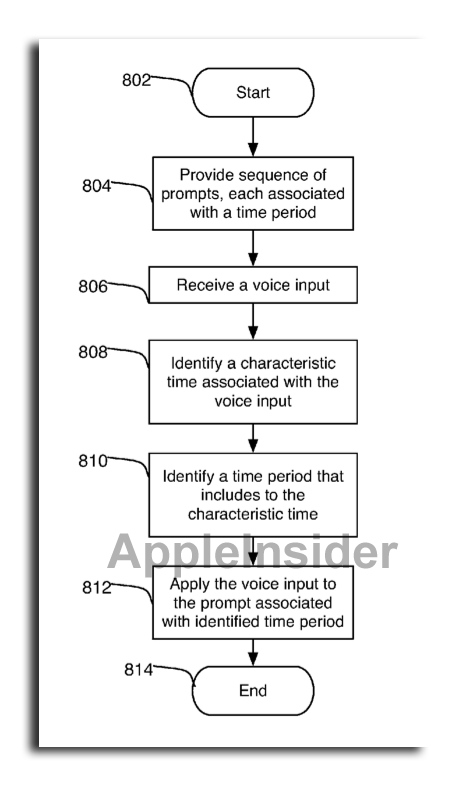

Apple also addressed the voice control side of hands-free systems in a second patent application published this week. Entitled Processing of Voice Inputs, it aims to address the user frustration that often emerges when using a voice control system.

The filing notes that sometimes it takes too long for a system to process and interpret a user's voice command. That delay not only leads to a less efficient system, but it can also lead to other frustrations for the user. For example, the system and the user may end up talking over one another because of timing issues.

"Because of the time required to receive an entire voice input, process the voice input, and determine the content of the voice input, a particular voice input that a user provided in response to a first prompt can be processed and understood after the first prompt has ended and while a second prompt is provided," the application reads. "The device can then have difficulty determining which prompt to associate with the received voice input."

Apple's solution would have dynamic time stamps or time ranges associated with system prompts, offering an appropriate window in which the user's voice input could be given. Prompts presented by the system could have any combination of time stamps or ranges.

"The time stamps or time ranges can be defined such that a voice input processed after a prompt ends can still be associated with a prior prompt," the application notes.
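One way to picture the idea is to match a voice input to a prompt by when the user began speaking, rather than by when processing finished. The sketch below is a rough illustration under that assumption. The `Prompt` type, its field names, and the tie-breaking rule for overlapping ranges are hypothetical and do not appear in the filing.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Prompt:
    name: str
    start: float       # time the prompt begins playing, in seconds
    window_end: float  # latest moment speech may begin and still count as a reply


def match_prompt(prompts: List[Prompt], speech_start: float) -> Optional[Prompt]:
    """Return the prompt whose time range contains the start of the voice input.

    The lookup uses the time the user started speaking, so an input that is
    only understood after a later prompt has begun can still be tied to the
    earlier prompt.
    """
    candidates = [p for p in prompts if p.start <= speech_start <= p.window_end]
    # If ranges overlap, prefer the most recently started prompt (an assumption).
    return max(candidates, key=lambda p: p.start, default=None)
```

With a first prompt covering 0 to 4 seconds and a second covering 4.5 to 9 seconds, speech that began at 3.5 seconds would be matched to the first prompt even if recognition finished at 6 seconds, while the second prompt was already playing.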

These windows for input could be increased or decreased dynamically based on a number of factors. In one example, the system would allow the user more or less time to respond to a prompt, based on their history of using the system, or even a user's individual voice characteristics.

The type of prompt can also be a factor in the system's input window. For example, something that may take the user longer to say or process may result in an automatically expanded time range.
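A simple way to sketch such a dynamic window is to combine a user's past response delays with an allowance for the kind of answer a prompt expects. The function below is purely illustrative: `response_window`, its parameters, and the 1.5x margin are assumptions, and the filing does not specify any particular formula.

```python
from typing import Sequence


def response_window(
    base_seconds: float,
    past_delays: Sequence[float],
    expected_answer_seconds: float = 0.0,
    margin: float = 1.5,
) -> float:
    """Estimate how long to keep a prompt's input window open.

    base_seconds: default lead time when there is no usage history.
    past_delays: how long this user previously took to start responding.
    expected_answer_seconds: extra allowance for prompts with longer answers.
    margin: multiplier applied to the user's typical delay (assumed value).
    """
    if past_delays:
        typical = sum(past_delays) / len(past_delays)
        lead = typical * margin  # quick users get less time, slow users more
    else:
        lead = base_seconds
    return lead + expected_answer_seconds
```

Under these assumptions, a user who typically answers within a second would get a shorter window than the default, while a prompt expecting a long spoken answer would extend the window for everyone.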

Both applications were filed by Apple in January of 2010. The proposed invention related to audio feedback is credited to Benjamin Andrew Rottler, Aram Lindahl, Allen Paul Haughay Jr., Shawn A. Ellis and Policarpo Wood. Wood and Lindahl are also credited on the voice input application.

Both The Wall Street Journal and The New York Times reported earlier this year that Apple was working on improved voice navigation in the next major update to iOS, the mobile operating system that powers the iPhone and iPad. And a later report claimed that voice commands would be "deeply integrated" into iOS 5.

The groundwork was laid for Apple's anticipated voice command overhaul when Apple acquired Siri, an iPhone personal assistant application heavily dependent on voice commands. With Siri, users can issue tasks to their iPhone using complete sentences, such as "What's happening this weekend around here?"

Last month, it was said that those rumored voice features weren't ready to be shown off at Apple's annual Worldwide Developers Conference. However, it was suggested that the features may be shown off this fall as part of the anticipated fifth-generation iPhone.

Neil Hughes

12 Comments

Don't get me wrong, Siri was sweet a year ago. Now it's too slow and goofy.

I was happy when Apple acquired Siri.

Let's hope the wait is worth it.

P.S. I used an HTC phone yesterday and the haptic feedback was extremely impressive.

Get on it, Apple! lol

It seems Apple has to add new features to sell every generation of iPhone.

I too am experimenting with hands-free control.

With hands tied behind my back, I now call my iPhone 4 to come hither ... Ok, still working out the kinks. But the app is free - 50% to "hands free".

My prediction is that ultimately the iPhone Market will erode more quickly than the iPad..

Has the iPad market eroded?