Touch panel stylus input explored

When the iPhone was introduced in 2007, Apple co-founder Steve Jobs criticized the stylus, which previously had been the primary input method for touchscreen devices. "We are all born with the ultimate pointing device — our fingers — and iPhone uses them to create the most revolutionary user interface since the mouse," he said.

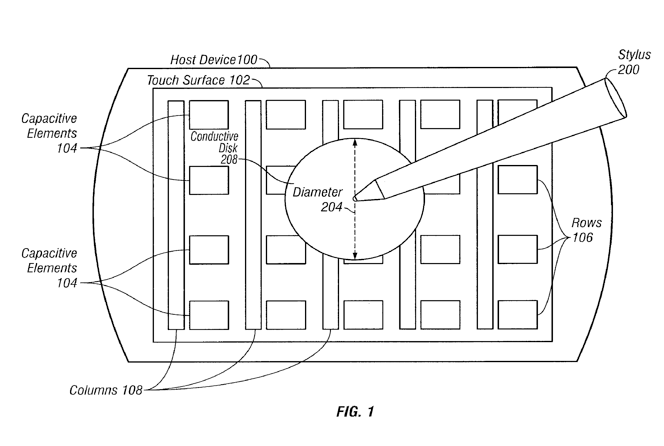

But in its current incarnation, the iPhone does not support stylus use, as the touch panel on its display requires a conductive pointer — like the human finger — to be recognized. Apple's patent application would address this with a conductive tip for a stylus that would be recognized by such a screen.

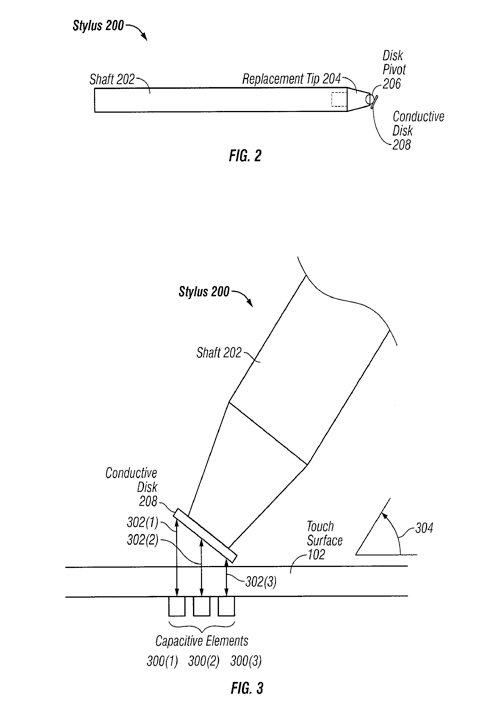

"A metallic or otherwise conductive disk may be attached to one end of the stylus," the application reads. "The disk may be sized so as to guarantee sufficient electrical interaction with at least one sensory element of the touch sensor panel."

The application also presents the option of a powered stylus that would itself provide the stimulus signal required by a capacitive touch screen. A powered stylus could also include sensors that measure properties such as force and angle, and transmit that additional information to the device.

"This additional data can be used for selecting various features in an application executing on the host device (e.g., selecting various colors, brushes, shading, line widths, etc.)," the application reads.
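To make the idea concrete, here is a minimal sketch of how an application might turn stylus force and angle readings into drawing settings. It is illustrative only and not taken from the application: the `StylusSample` type, the 0.0 to 1.0 force range, the 45-degree tilt threshold, and the brush names are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class StylusSample:
    """One hypothetical reading reported by a powered stylus."""
    force: float     # assumed normalized pressure: 0.0 (none) to 1.0 (maximum)
    tilt_deg: float  # assumed angle from vertical: 0 (upright) to 90 (flat)


def line_width(sample: StylusSample,
               min_width: float = 1.0,
               max_width: float = 12.0) -> float:
    """Map force linearly onto a stroke width, clamping out-of-range input."""
    force = min(max(sample.force, 0.0), 1.0)
    return min_width + force * (max_width - min_width)


def brush_for(sample: StylusSample) -> str:
    """Pick a brush from tilt: a steeply tilted stylus shades, otherwise it draws."""
    return "shading" if sample.tilt_deg >= 45.0 else "pen"


if __name__ == "__main__":
    sample = StylusSample(force=0.5, tilt_deg=60.0)
    print(line_width(sample), brush_for(sample))
```

A linear mapping is the simplest choice here; a real drawing application would likely apply a response curve so that light touches are easier to control.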

The invention is credited to John G. Elias, an Apple employee and co-founder of FingerWorks, the firm acquired by Apple during the development of the original iPhone. The application is titled "Stylus Adapted For Low Resolution Touch Sensor Panels." It was submitted to the U.S. Patent and Trademark Office on July 11, 2008.

Dynamic graphical user interface for mobile devices

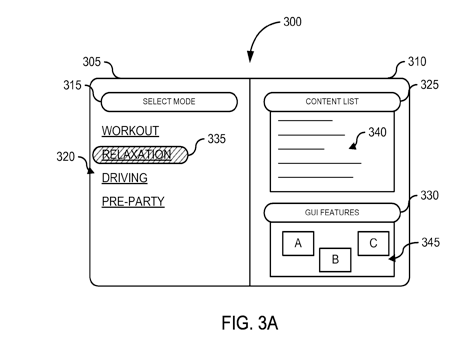

Future portable devices could have different input methods and user interfaces depending on where they are located, according to another Apple patent application.

For example, a device used in the car or at the gym could show a different design on the screen. Devices could also be controlled differently when they are docked and less portable, a situation in which a different design and input method might make more sense.

"Each mode may define different features and content that are customized for a particular mode," the application reads. "Based [on] a selected mode, the media player may provide access to only content, features, hardware, user interface elements, and the like that the user wishes to have access to when the mode is enabled. The media player may provide the user different experiences, looks, and feels for each mode."

Users would be able to customize each of the different GUIs available. The goal, the application states, is to create a "cleaner, more focused user experience."

Custom layouts and playlists could be created for use at the gym or while driving, and the device would automatically reconfigure itself to use them when a particular mode is enabled.

"The mode may further specify how applications relevant to the mode may be displayed, such as backgrounds, icons, style information, themes, or other information that provides a visual indicator of the active mode," the document reads.
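One way to picture the per-mode behavior described in the application is as a lookup table of mode definitions. The sketch below is an assumption-laden illustration, not Apple's design: the `Mode` type, the mode names, the app identifiers, the theme names, and the playlist names are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Mode:
    """A hypothetical mode: which apps are exposed and how the UI looks."""
    name: str
    allowed_apps: frozenset
    theme: str
    playlist: Optional[str] = None


# Illustrative mode table; every value here is made up for the example.
MODES = {
    "gym": Mode("gym", frozenset({"music", "stopwatch"}),
                theme="high-contrast", playlist="Workout"),
    "car": Mode("car", frozenset({"music", "maps", "phone"}),
                theme="large-buttons", playlist="Driving"),
    "default": Mode("default",
                    frozenset({"music", "maps", "phone", "mail", "stopwatch"}),
                    theme="standard"),
}


def visible_apps(installed, mode_name):
    """Return the installed apps the active mode exposes, in their original order."""
    mode = MODES.get(mode_name, MODES["default"])  # unknown modes fall back to default
    return [app for app in installed if app in mode.allowed_apps]


if __name__ == "__main__":
    print(visible_apps(["mail", "music", "maps", "stopwatch"], "gym"))
```

Keeping each mode as immutable data means switching modes is only a matter of looking up a different entry, which fits the application's description of the device being reconfigured when a mode is enabled.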

The application, entitled "Multi-Model Modes of One Device," is credited to William Bull and Ben Rottler. It was submitted on Sept. 9, 2008.

Neil Hughes


21 Comments

Wouldn't that make a little more sense in a tablet? Not that it would hurt the phone.

A stylus? I see no point for one unless you live in the frigid areas of the world where you wear gloves. Even then, don't they make gloves that work on the iPhone?

You can't get around the fact that if you want to do intricate work your finger is just too fat.


Hell for me just clicking on links is a pain in the ass on the iPhone.

A stylus already exists for the iPhone. Again, we have to go to a third party to get an Apple product to work correctly.

Ditto for the glossy screens.

This sounds like devolution. Why would we go back to a stylus? I know someone mentioned cold weather, which to a certain extent, I can understand, but I live in NYC where the winters can be really cold, and I just take my gloves off when I need to do something on the iPhone...mind you, that only happens when I'm on my way to the subway, so I can wait until I'm in a warm place to use the iPhone.