The U.S. Patent and Trademark Office on Thursday published an Apple patent application for a system that dynamically changes audio and video settings based on where a user is located in relation to the source device, thus allowing for the best viewing or listening experience.

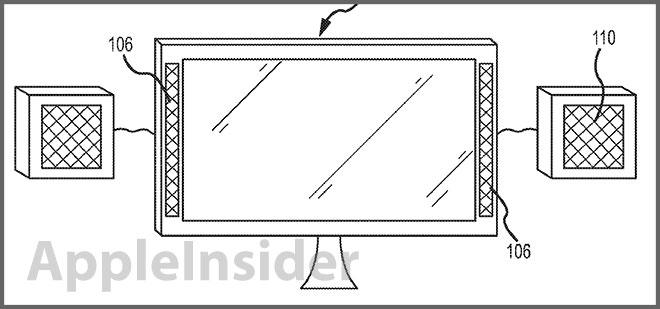

Apple's patent application for "Devices with enhanced audio" covers not only speaker settings, but video adjustments as well. By using various sensors, such as cameras and microphones, the system is able to provide an optimal experience with little to no input from the user.

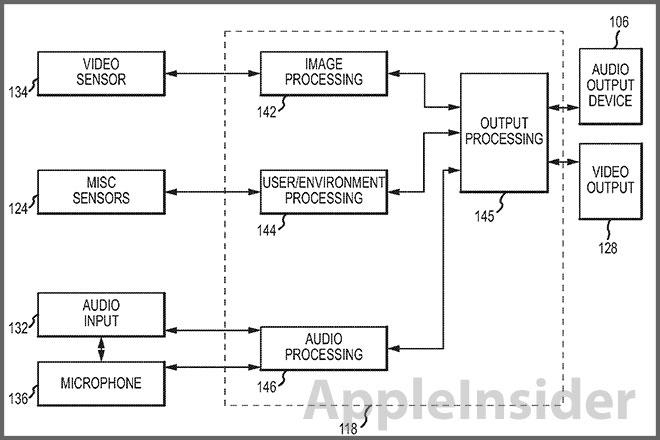

The method starts with data collected from a large variety of sensors, including imaging sensors, proximity sensors, microphones and infrared sensors, among others. By taking input from a given sensor array and processing the data, the system can determine the positioning of a user in relation to a computer screen or source device. Also taken into account is the user's environment, for example a large room with wooden floors, or a small room with drawn curtains.

A multitude of inputs are covered in the patent, including cameras that track eye movements (gaze tracking) or perform facial recognition to calculate where a user is looking. Microphones can gauge the level of reverberation in a room, and light sensors can measure ambient brightness.

Next, the data is processed to determine which output settings will produce the best result. Audio filters can be applied, the brightness or contrast of a display can be tweaked and speaker volumes can be altered. Essentially, any output parameter that can be controlled electrically is accounted for and calculated from the sensor data.
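To make the sensor-to-output step concrete, here is a minimal Python sketch of how readings might be mapped to settings. It is not taken from the patent: the `SensorReading` and `OutputSettings` types, the `choose_settings` function and every threshold and coefficient are hypothetical values chosen purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class SensorReading:
    distance_m: float   # estimated distance from user to display (hypothetical input)
    reverb_s: float     # estimated room reverberation time in seconds
    ambient_lux: float  # ambient light level from a light sensor


@dataclass
class OutputSettings:
    volume: float          # 0.0 (mute) to 1.0 (max)
    brightness: float      # 0.0 (dark) to 1.0 (max)
    dereverb_filter: bool  # whether to apply a reverb-reducing audio filter


def clamp(value: float, low: float, high: float) -> float:
    """Keep value inside the closed range [low, high]."""
    return max(low, min(high, value))


def choose_settings(reading: SensorReading) -> OutputSettings:
    """Map sensor readings to output settings with simple linear rules.

    All coefficients are illustrative assumptions, not values from the patent.
    """
    # A user sitting farther away gets a louder output.
    volume = clamp(0.3 + 0.15 * reading.distance_m, 0.0, 1.0)
    # A brighter room gets a brighter display.
    brightness = clamp(0.2 + reading.ambient_lux / 1000.0, 0.2, 1.0)
    # An echo-prone room (long reverberation time) enables the audio filter.
    dereverb = reading.reverb_s > 0.6
    return OutputSettings(volume, brightness, dereverb)


near_user = choose_settings(SensorReading(distance_m=0.5, reverb_s=0.3, ambient_lux=100))
far_user = choose_settings(SensorReading(distance_m=4.0, reverb_s=0.9, ambient_lux=800))
print(near_user)
print(far_user)
```

In this sketch, a user across a bright, echo-prone room ends up with higher volume, higher brightness and the filter switched on, while a user close to the screen in a dim, quiet room gets the opposite.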

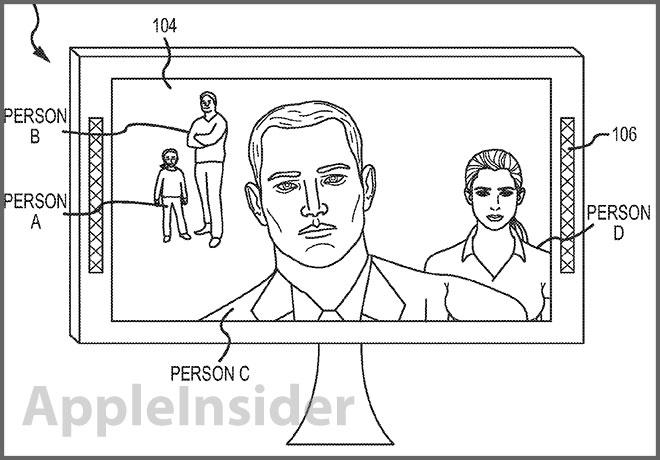

A significant portion of the patent is dedicated to video conferencing, where the system adjusts settings to improve two-way communication for both parties. For example, facial recognition can be used to determine where a microphone should be "beam steered" to achieve maximum voice quality even when a user is looking away from the computer screen. Also mentioned are controls that determine how far each person is from the camera and apply the corresponding settings to compensate for the difference.
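The article does not say how the patent implements beam steering. One common approach is delay-and-sum beamforming, in which each microphone's signal is delayed so that sound arriving from the chosen direction lines up across the array before the signals are summed. The Python sketch below computes those delays for a straight-line microphone array. The `steering_delays` function, the array geometry and the angle convention are assumptions made for illustration.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # approximate speed of sound in air at room temperature


def steering_delays(mic_positions_m, angle_deg):
    """Return per-microphone delays (seconds) for delay-and-sum steering.

    mic_positions_m: microphone positions along a straight line, in metres.
    angle_deg: direction of the talker measured from straight ahead
               (0 = directly in front, positive = toward larger positions).

    Assumes a distant talker, so the sound arrives as a plane wave.
    """
    theta = math.radians(angle_deg)
    # Microphones nearer the talker hear the sound earlier and so need
    # a longer delay for all channels to line up.
    raw = [x * math.sin(theta) / SPEED_OF_SOUND_M_S for x in mic_positions_m]
    # Shift so the smallest delay is zero (delays cannot be negative).
    earliest = min(raw)
    return [d - earliest for d in raw]


mics = [0.0, 0.05, 0.10]  # three microphones spaced 5 cm apart (hypothetical layout)
for angle in (0.0, 30.0, -30.0):
    delays_us = [round(d * 1e6, 1) for d in steering_delays(mics, angle)]
    print(angle, delays_us)
```

In a system like the one described, the steering angle would presumably come from the face or gaze tracking input, so the microphone array could follow the talker as they move or turn away.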

The patent application was first filed in July 2011 and credits Aleksandar Pance, Brett Bilbrey, Darby E. Hadley, Martin E. Johnson and Ronald Nadim Isaac as its inventors.