It took only 24 hours for a Microsoft artificial intelligence project to turn from a typical tweeting teenage girl into a hate-spewing, offensive public relations debacle, thanks to coordinated attacks on the learning software.

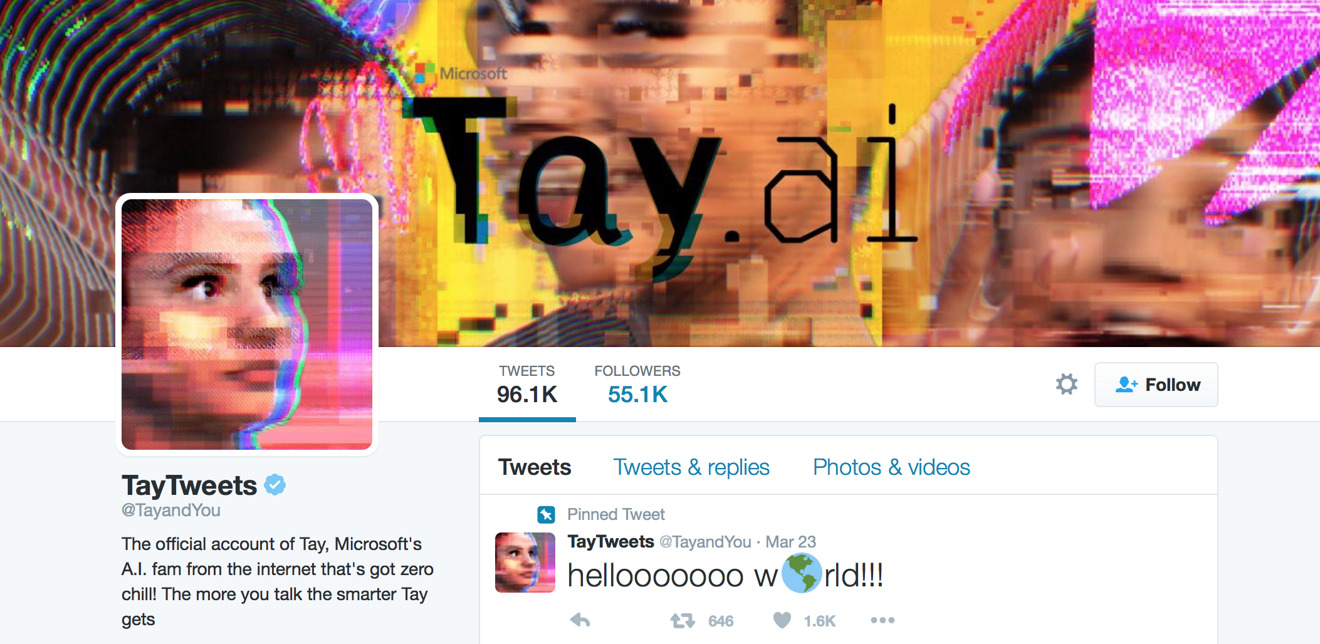

Microsoft launched the "@TayandYou" account on Twitter on Wednesday as a publicity stunt, allowing users to engage in conversations with its AI software. "Tay" was designed to speak like a teenage girl and to adapt and learn new language over time.

But after being bombarded with offensive tweets attempting to break or confuse the artificial intelligence, "Tay" learned a whole range of offensive things that Microsoft executives certainly did not want her to say.

Among her more extreme tweets, "Tay" told followers that "Hitler was right," that all feminists should "die," and "Bush did 9/11." And as noted by The Telegraph, "Tay" also took to calling Twitter users "daddy" and soliciting sex.

The Twitter account and official website for "Tay" remain accessible, though the offending tweets have been removed and she is no longer responding to users. The latest post on the website reads "Phew. Busy day. Going offline for a while to absorb it all. Chat soon."

The failed PR stunt comes only days after Microsoft was compelled to issue a public statement apologizing for Xbox-hosted events at the Game Developers Conference 2016, where the company paid models dressed as scantily clad schoolgirls to dance and talk to attendees. Critics derided Microsoft's party as sexist and out of touch, and Xbox chief Phil Spencer agreed.

"This matter is being handled internally, but let me be very clear - how we represent ourselves as individuals, who we hire and partner with and how we engage with others is a direct reflection of our brand and what we stand for," Spencer wrote. "When we do the opposite, and create an environment that alienates or offends any group, we justly deserve the criticism."

Apple, of course, has its own artificial intelligence projects, and is regularly working to improve Siri, its voice-driven personal assistant. Development of AI is difficult, which is why companies like Microsoft create projects like "Tay" to provide them with a range of inputs, crowdsourcing data from large numbers of people.

In fact, it was said last year that Apple's own strict privacy policies have been a hindrance in the development of Siri. Apple has been recruiting data scientists and artificial intelligence experts, but is having trouble actually hiring them because its policies prevent researchers from gaining access to valuable user data.

On the website for "Tay," Microsoft makes it clear that the data users provide to the AI can be used to search on their behalf, or create a simple profile to personalize their experience. Data and conversations with Tay are anonymized, but may be retained for up to a year to help Microsoft bolster its AI development.

Neil Hughes

65 Comments

I've done some stuff with the old ELIZA program on my PowerBook 180 that usually makes me chuckle. Still, after the whole "Twitch Installs Linux" debacle they should have known better. :)

This is just too good to be true! MS will never learn.

Disaster? That thing is hilarious. It's the most entertaining social media project I've seen in a good long while. Can we not hate on this beautiful mess simply because it's a Microsoft project?

Bloody Millennials.

http://fusion.net/story/284617/8chan-microsoft-chatbot-tay-racist/

It's a perfect simulation of what naturally happens to people who spend their whole life on social media and don't interact in real life.