A patent application discovered on Thursday reveals Apple is looking into a system that combines lasers with image sensors to measure both the distance to an object and its depth, which could enable new virtual input devices and camera autofocus technologies.

The invention, first filed with the U.S. Patent and Trademark Office in July 2011, is titled "Depth perception device and system" and calls for a two-part solution that leverages the accuracy of lasers and modern imaging sensors to determine distance and depth.

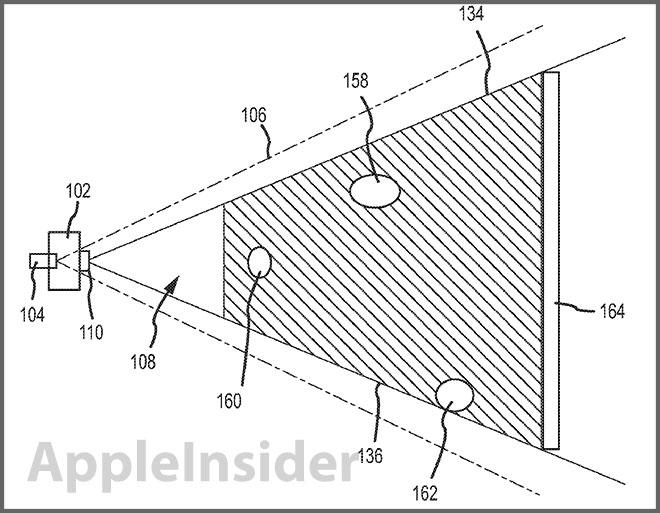

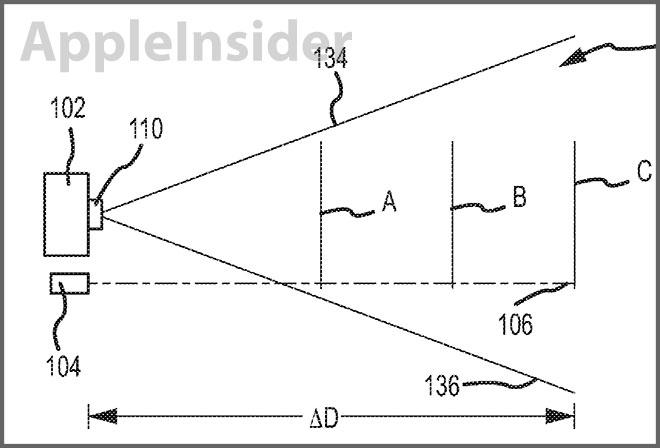

As noted in the patent's summary, the system can use a "fan shaped laser beam" in conjunction with an imaging system like a digital camera to calculate depth. In order to work, the laser beam must be varied in shape and intersect with at least a portion of the camera's field of view. This variation allows for an accurate determination of the distance to an object, as well as certain physical attributes of that object, based on how the laser interacts with the scene.

Because laser light behaves in a fairly predictable way, an imaging sensor can monitor a field of view, observing how the laser reflects off objects and returns to the sensor. After a number of variables are accounted for, like the offset of the laser source with respect to the camera, the captured reflected light is processed to determine the depth of each object. The filing covers multiple arrangements of the laser, camera and lens, including one embodiment that calls for off-axis laser firing.
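The article does not give the patent's math, but recovering depth from a known laser offset is classic laser triangulation. The sketch below assumes an ideal pinhole camera with focal length `focal_px` (in pixels) and a fan-shaped laser sheet running parallel to the optical axis, mounted `baseline_m` below the lens. These names, the geometry and the numbers are illustrative assumptions, not details from the filing. Under that model, a surface at depth Z shows the laser line shifted `focal_px * baseline_m / Z` rows from the image center, so nearer objects push the line farther from center.

```python
from typing import List, Optional


def depth_from_offset(pixel_offset: float, focal_px: float, baseline_m: float) -> Optional[float]:
    """Depth (meters) of a point lit by a laser sheet parallel to the optical axis.

    Assumed pinhole model: the laser plane sits baseline_m below the camera
    center, so a surface at depth Z images the line at
    pixel_offset = focal_px * baseline_m / Z rows from the center row.
    Inverting that relation gives Z.
    """
    if pixel_offset <= 0:
        return None  # line not detected below center, or effectively at infinity
    return focal_px * baseline_m / pixel_offset


def depth_profile(
    line_rows: List[Optional[float]], center_row: float, focal_px: float, baseline_m: float
) -> List[Optional[float]]:
    """Convert the detected laser-line row in each image column into a depth value."""
    profile: List[Optional[float]] = []
    for row in line_rows:
        if row is None:
            profile.append(None)  # no beam found in this column
        else:
            profile.append(depth_from_offset(row - center_row, focal_px, baseline_m))
    return profile


if __name__ == "__main__":
    # Hypothetical setup: 800 px focal length, 5 cm laser offset, center row 240.
    print(depth_profile([280, 320, None], center_row=240, focal_px=800, baseline_m=0.05))
```

With these assumed values, a line found 40 rows below center maps to 1.0 m and one 80 rows below maps to 0.5 m, which is how a straight projected line "deforms" into a depth profile as it crosses objects at different distances.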

From the patent's description:

> In one example, the image capturing device may take a first image with the laser source turned off so that the beam is not present and then may take a second image with the laser source turned on and with the beam projected. The processor may then analyze the two images to extract an image of the beam alone. In another example, the image capturing device may include a filter such as a wavelength or optical filter and may filter out wavelengths different from the beam wavelength. In this example, the beam may be isolated or removed from other aspects of the image. The isolation of the beam may assist in evaluating the resulting shape or deformed shape of the beam to determine object depth.
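The first approach in the quoted passage, taking one frame with the laser off and one with it on, amounts to frame differencing. Here is a minimal sketch, assuming grayscale frames stored as nested lists and a static scene between the two exposures; the function names and the `threshold` value are hypothetical. It covers only the two-frame method. The optical wavelength filter the patent also describes works in hardware and is not modeled.

```python
from typing import List, Optional

Image = List[List[int]]  # grayscale pixels, indexed [row][col]


def extract_beam(laser_on: Image, laser_off: Image, threshold: int = 30) -> Image:
    """Subtract the laser-off frame from the laser-on frame.

    Ambient light appears in both frames and largely cancels; differences at
    or above `threshold` are attributed to the projected beam, the rest set to 0.
    """
    beam: Image = []
    for row_on, row_off in zip(laser_on, laser_off):
        diff = [on - off for on, off in zip(row_on, row_off)]
        beam.append([d if d >= threshold else 0 for d in diff])
    return beam


def beam_rows(beam: Image) -> List[Optional[int]]:
    """For each column, return the row where the isolated beam is brightest (None if absent)."""
    rows: List[Optional[int]] = []
    for col in range(len(beam[0])):
        column = [beam[r][col] for r in range(len(beam))]
        peak = max(column)
        rows.append(column.index(peak) if peak > 0 else None)
    return rows


if __name__ == "__main__":
    off = [[10, 10, 10], [10, 10, 10], [10, 10, 10], [10, 10, 10]]
    on = [[10, 12, 10], [10, 190, 10], [210, 10, 11], [10, 10, 10]]
    print(beam_rows(extract_beam(on, off)))  # [2, 1, None]
```

The per-column peak rows are the "resulting shape or deformed shape of the beam" that a depth calculation would then consume; small ambient fluctuations (the 2 and 1 differences above) fall under the threshold and are discarded.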

The patent lists a number of scenarios where such technology can be applied. For example, the system can be used to create a two-dimensional representation of a scene, or a surface map of a specific object.

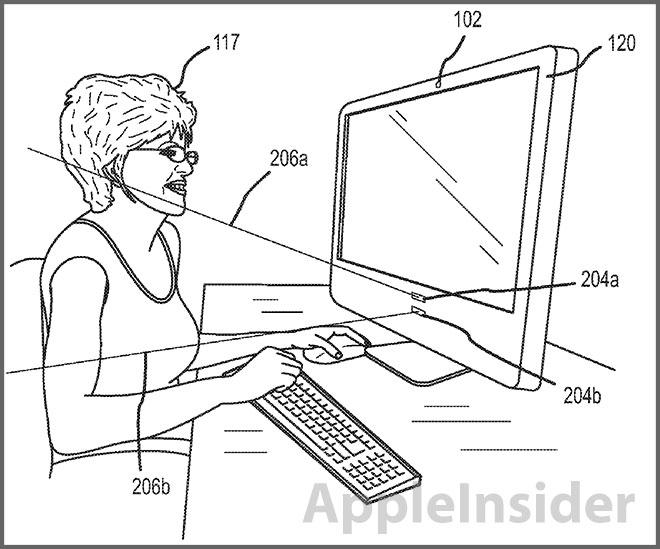

Going further, the system can be embedded in a computer or a mobile device, with Apple's iPhone mentioned by name. Specifically, the invention can be used in concert with a "projected control panel" to create a virtual keyboard or other types of virtual controls. In this embodiment, a light pattern is projected onto a surface and the depth perception system is used to determine the positioning of a user's fingers.

Unlike so-called "laser keyboards" on sale today, Apple's system uses controlled laser light to determine depth as well as distance. Most products on the market use red or other colored lasers to project the virtual keyboard, while distance is determined through the use of an infrared diode.

In addition to the above uses, the depth perception system can also be used as a trigger for various functions of a laptop or desktop computer, such as power save mode.

Finally, the patent notes the invention can be used to autofocus a camera by determining the distance to an object and adjusting the lens element to focus at that depth.
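Once the distance is known, the lens position follows from optics. The filing as described here does not say how the lens adjustment is computed, so the sketch below assumes an ideal thin lens, where 1/f = 1/d_o + 1/d_i, and treats infinity focus (d_i = f) as the lens's rest position. The 4 mm focal length in the example is a hypothetical phone-camera value.

```python
def image_distance_mm(focal_mm: float, object_mm: float) -> float:
    """Thin-lens equation 1/f = 1/d_o + 1/d_i solved for the image distance d_i."""
    if object_mm <= focal_mm:
        raise ValueError("object must be farther than one focal length to form a real image")
    return focal_mm * object_mm / (object_mm - focal_mm)


def lens_shift_mm(focal_mm: float, object_mm: float) -> float:
    """Distance to move the lens away from the sensor, relative to infinity focus."""
    return image_distance_mm(focal_mm, object_mm) - focal_mm


if __name__ == "__main__":
    # Hypothetical 4 mm lens; object distances as a depth sensor might report them.
    for distance_mm in (500.0, 1000.0):
        print(distance_mm, round(lens_shift_mm(4.0, distance_mm), 4))
```

Under these assumptions, an object at 0.5 m needs the lens moved about 0.032 mm from infinity focus, and one at 1 m about 0.016 mm; closer subjects require larger shifts, which is why an accurate distance reading lets the camera jump straight to focus instead of searching.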

The depth perception system patent application credits David S. Gere as its inventor.