The U.S. Patent and Trademark Office on Thursday published an Apple invention that replaces frames dropped during a low-bandwidth FaceTime call with pre-recorded or doctored images, thereby creating the illusion of a seamless feed.

Apple's patent application, titled "Video transmission using content-based frame search," looks to deal with dropped frames in a FaceTime video call, a problem some iPhone or iPad users may encounter when communicating over a low-bandwidth connection.

Currently, video communication over cellular data is spotty in many areas due to bandwidth restrictions and the limitations of existing wireless technology. In some cases, features like Apple's FaceTime are nearly unusable due to dropped frames, extremely low-resolution images and poor audio quality.

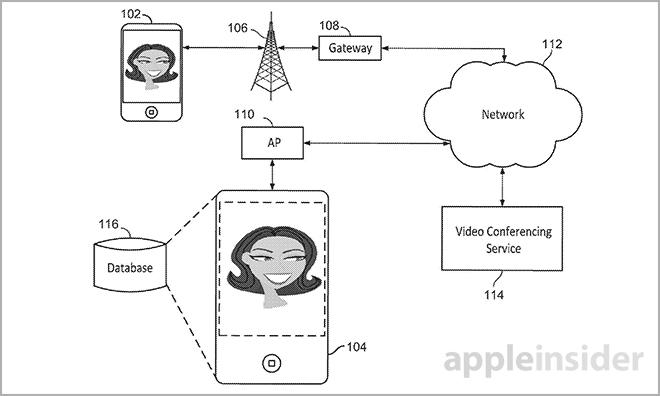

Detailed in the filing is a method in which previously transmitted video frames and "video frame content information" are used to reduce the amount of data being sent between two devices during a video call.

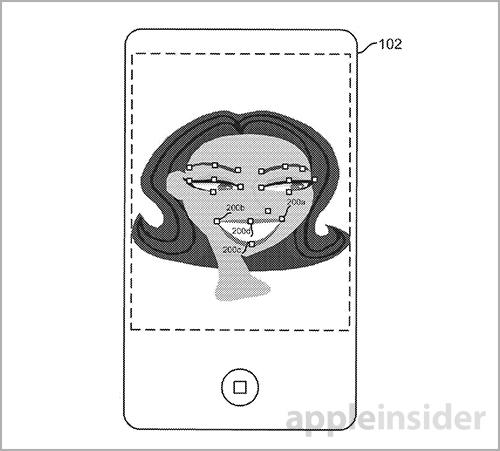

According to one method, a set of parameters, or coefficients, can be generated from and associated with a given video frame. For example, object landmark points such as facial features, along with orientation and scale, may serve as coefficients that are tracked and stored alongside the transmitted video. This data is processed into a searchable database from which individual frames can be retrieved.
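
The filing does not publish a data format, so the following is only a minimal sketch of the idea, assuming each frame's coefficients are flattened into one fixed-length vector (landmark x/y values, then orientation, then scale). The names `FrameRecord`, `make_coefficients` and `FrameDatabase` are hypothetical and illustrate the concept rather than Apple's implementation.

```python
from dataclasses import dataclass, field
from typing import List, Sequence, Tuple


# Hypothetical record layout; the patent filing does not specify one.
@dataclass
class FrameRecord:
    frame_id: int
    coefficients: Tuple[float, ...]  # landmark x/y values, then orientation, then scale
    frame_bytes: bytes               # the encoded frame as it was received


def make_coefficients(landmarks: Sequence[Tuple[float, float]],
                      orientation: float, scale: float) -> Tuple[float, ...]:
    """Flatten landmark points plus pose values into a single vector."""
    flat: List[float] = []
    for x, y in landmarks:
        flat.extend((x, y))
    flat.extend((orientation, scale))
    return tuple(flat)


@dataclass
class FrameDatabase:
    records: List[FrameRecord] = field(default_factory=list)

    def store(self, frame_id: int, coefficients: Sequence[float],
              frame_bytes: bytes) -> None:
        # Intended to hold only frames that arrived in full, so any record
        # can later be redisplayed in place of a dropped frame.
        self.records.append(FrameRecord(frame_id, tuple(coefficients), frame_bytes))

    def __len__(self) -> int:
        return len(self.records)


db = FrameDatabase()
coeffs = make_coefficients([(120.0, 80.0), (160.0, 80.0), (140.0, 110.0)],
                           orientation=0.0, scale=1.0)
db.store(0, coeffs, b"<encoded frame>")
```

Keeping the vector a fixed length matters because the later search step compares vectors element by element.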

During low-bandwidth scenarios, a device can compute and send a set of coefficients for a given live video feed instead of a video frame. When sufficient bandwidth is available, however, the full image may be sent and stored along with its associated coefficients.
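
The sender-side decision described above can be sketched as a simple switch. This assumes a single bandwidth threshold; the value of `FULL_FRAME_MIN_KBPS` and the function name `build_payload` are hypothetical, since the filing gives no cutoff.

```python
from typing import Any, Dict, Sequence

# Hypothetical cutoff chosen for illustration; the filing gives no number.
FULL_FRAME_MIN_KBPS = 300.0


def build_payload(frame_bytes: bytes, coefficients: Sequence[float],
                  bandwidth_kbps: float,
                  min_kbps: float = FULL_FRAME_MIN_KBPS) -> Dict[str, Any]:
    """Always send coefficients; attach the full frame only when bandwidth allows."""
    payload: Dict[str, Any] = {"coefficients": list(coefficients)}
    if bandwidth_kbps >= min_kbps:
        # Enough headroom: send the full frame so the receiver can store it
        # together with its coefficients for later reuse.
        payload["frame"] = frame_bytes
    return payload


low = build_payload(b"<frame>", [1.0, 2.0], bandwidth_kbps=120.0)
high = build_payload(b"<frame>", [1.0, 2.0], bandwidth_kbps=800.0)
```

On a poor link `low` carries only a handful of numbers, while `high` also carries the frame that seeds the receiver's database.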

When a connection is poor and frames begin to drop, the system is able to use the coefficients to search a database of previously stored video for similar objects. In the example given, the coefficients of a live feed are compared to the video frame database to find a best match. When a frame with similar object characteristics is found, it is retrieved and displayed on the receiving device.
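
The best-match step can be illustrated as a nearest-neighbour search. This sketch assumes Euclidean distance between coefficient vectors and a plain linear scan; the filing does not say which similarity measure Apple uses, and a production system might use an index structure instead. The function names are hypothetical.

```python
import math
from typing import Dict, Sequence, Tuple


def distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Euclidean distance between two coefficient vectors of equal length."""
    if len(a) != len(b):
        raise ValueError("coefficient vectors must have the same length")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def best_match(live: Sequence[float],
               stored: Dict[int, Sequence[float]]) -> Tuple[int, float]:
    """Return (frame_id, distance) of the stored frame closest to the live coefficients."""
    if not stored:
        raise ValueError("no stored frames to search")
    best_id = min(stored, key=lambda fid: distance(live, stored[fid]))
    return best_id, distance(live, stored[best_id])


stored = {0: (0.0, 0.0, 1.0), 1: (5.0, 5.0, 1.0), 2: (1.0, 0.5, 1.0)}
frame_id, dist = best_match((1.0, 0.0, 1.0), stored)
```

Here frame 2 wins with a distance of 0.5, so the receiver would display that stored frame in place of the dropped one.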

In another embodiment, the generated coefficients are used to intelligently morph an image in a previously recorded video frame. The result is inserted into the live feed in place of dropped frames.
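
The filing does not detail its morphing technique, so the sketch below swaps in a deliberately simple one: fit a least-squares scale and translation (no rotation) that maps the stored frame's landmarks onto the live landmarks, then warp the stored image with nearest-neighbour sampling. The image is assumed to be a small grid of integer pixel values, and `fit_scale_translation` and `warp_image` are hypothetical names.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]
Image = List[List[int]]  # image[y][x]


def fit_scale_translation(src: Sequence[Point],
                          dst: Sequence[Point]) -> Tuple[float, float, float]:
    """Least-squares (s, tx, ty) such that s * src_i + (tx, ty) approximates dst_i."""
    if len(src) != len(dst) or not src:
        raise ValueError("need matching, non-empty landmark lists")
    n = len(src)
    sx = sum(p[0] for p in src) / n
    sy = sum(p[1] for p in src) / n
    dx = sum(q[0] for q in dst) / n
    dy = sum(q[1] for q in dst) / n
    num = sum((p[0] - sx) * (q[0] - dx) + (p[1] - sy) * (q[1] - dy)
              for p, q in zip(src, dst))
    den = sum((p[0] - sx) ** 2 + (p[1] - sy) ** 2 for p in src)
    if den == 0:
        raise ValueError("source landmarks are all identical")
    s = num / den
    if s <= 0:
        raise ValueError("landmarks do not imply a positive scale")
    return s, dx - s * sx, dy - s * sy


def warp_image(image: Image, s: float, tx: float, ty: float, fill: int = 0) -> Image:
    """Inverse-map each output pixel into the stored frame (nearest-neighbour)."""
    h, w = len(image), len(image[0])
    out: Image = []
    for y in range(h):
        row = []
        for x in range(w):
            src_x = round((x - tx) / s)
            src_y = round((y - ty) / s)
            if 0 <= src_x < w and 0 <= src_y < h:
                row.append(image[src_y][src_x])
            else:
                row.append(fill)  # pixel falls outside the stored frame
        out.append(row)
    return out


# Live landmarks are the stored ones shifted one pixel to the right.
s, tx, ty = fit_scale_translation([(0, 0), (2, 0), (0, 2)], [(1, 0), (3, 0), (1, 2)])
morphed = warp_image([[1, 2, 3], [4, 5, 6], [7, 8, 9]], s, tx, ty)
```

A real system would likely use a richer, per-region warp; this version only shows how live coefficients can drive changes to a previously stored frame.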

Finally, objects deemed to be background content can be transmitted at a lower resolution than subject content. For example, the system could present a person's face at a higher resolution than the wall behind them.
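
The effect of sending background content at reduced resolution can be sketched on the receiving side. This assumes the subject is given as a rectangle (for example from a face detector, which is not shown) and that the background is decimated by keeping one sample per `factor` x `factor` block; `reduce_background` is a hypothetical name.

```python
from typing import List, Tuple

Image = List[List[int]]  # image[y][x]


def reduce_background(image: Image, subject_box: Tuple[int, int, int, int],
                      factor: int) -> Image:
    """Keep pixels inside subject_box (x0, y0, x1, y1, half-open) at full resolution;
    replace every other pixel with the top-left sample of its factor x factor block."""
    if factor < 1:
        raise ValueError("factor must be at least 1")
    x0, y0, x1, y1 = subject_box
    out: Image = []
    for y, row in enumerate(image):
        new_row = []
        for x, value in enumerate(row):
            if x0 <= x < x1 and y0 <= y < y1:
                new_row.append(value)  # subject: full resolution
            else:
                # Background: only one sample per block would need to be sent.
                new_row.append(image[(y // factor) * factor][(x // factor) * factor])
        out.append(new_row)
    return out


img = [[y * 4 + x for x in range(4)] for y in range(4)]
result = reduce_background(img, (1, 1, 3, 3), factor=2)
```

With `factor=2` each background block needs a single transmitted value instead of four, while the subject rectangle keeps every pixel.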

Aside from the obvious benefits in call quality, the technology may also be used to cut back on data consumption, as coefficients can be used to dynamically reproduce a scene from already transmitted video. Even if only one artificially generated frame were inserted for every three live frames, roughly a quarter of all frames would no longer need to be sent in full, a significant saving.
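
The one-in-four arithmetic can be made explicit. This sketch assumes every live frame costs one full frame, every generated frame costs only its coefficient payload, and coefficients sent alongside live frames are ignored; the frame and coefficient sizes in the example are illustrative, not measured.

```python
def data_savings(live_frames: int, generated_frames: int,
                 frame_bytes: float, coeff_bytes: float = 0.0) -> float:
    """Fraction of bytes saved versus sending every frame in full.

    Assumes each generated frame costs only its coefficients and each live
    frame costs one full frame (coefficients sent with live frames are ignored).
    """
    total = live_frames + generated_frames
    if total == 0 or frame_bytes <= 0:
        raise ValueError("need at least one frame and a positive frame size")
    baseline = total * frame_bytes
    actual = live_frames * frame_bytes + generated_frames * coeff_bytes
    return 1.0 - actual / baseline


ideal = data_savings(3, 1, frame_bytes=1000)                    # coefficients treated as free
with_overhead = data_savings(3, 1, frame_bytes=1000, coeff_bytes=40)
```

With free coefficients the saving is exactly 25 percent; a 40-byte coefficient payload against a 1,000-byte frame trims that to about 24 percent, which is why small coefficient vectors matter.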

As with any Apple patent application, it is unknown whether the invention will one day be implemented in a consumer product. With rising data costs and increasingly high-resolution camera modules, however, the feature would be useful for those who frequently make FaceTime video calls.

Apple's FaceTime frame-matching and morphing patent application was first filed in 2012 and credits Alex Tremain Nelson and Richard E. Crandall as its inventors.

Mikey Campbell

8 Comments

Good way of cheating with the video, to make it feel realtime on bad reception

Now if only they would add the conference call feature back into Facetime on the Mac we would be in business. It's really annoying that they replaced iChat and left us with no video conference option.

Why am I getting a visual of MMaaaaax-x-x Headd-rroom..?

I don't want to give myself any undue credit, but I remember way back in 2010 when the iPhone 4 keynote was presented and Steve Jobs initially said that FaceTime would be only over Wi-Fi for the time being. I immediately scoured the internet and found a company's website showing smooth video being played back over really slow bandwidth conditions. I emailed Steve to congratulate him on a great keynote and forwarded the website and details over to him. I never got a response back, unfortunately, but something tells me the email was not simply ignored. Check out GooderVideo's quote on their product page and check out this sample video: "VideoPhones and Web-Cams are notorious for very low frame-rates. This clip shows a 4 fps (frames per second) original along with the "mended" output at 22 fps. The motion is smooth and natural. Maybe these videophones will catch on some day?" http://goodervideo.com/products/MP.html http://www.shadowlabs.com/WebVideos/FrameFill.6x.TeleConference.avi

I was thinking Max Headroom, too. In fact, I'll be disappointed if it isn't like this or it's not an option...