Apple is exploring ways to improve 360-degree video content for VR headsets, with a method for stitching together multi-directional image data from videos so that they appear with less distortion than current stitching apps and encoders can produce.

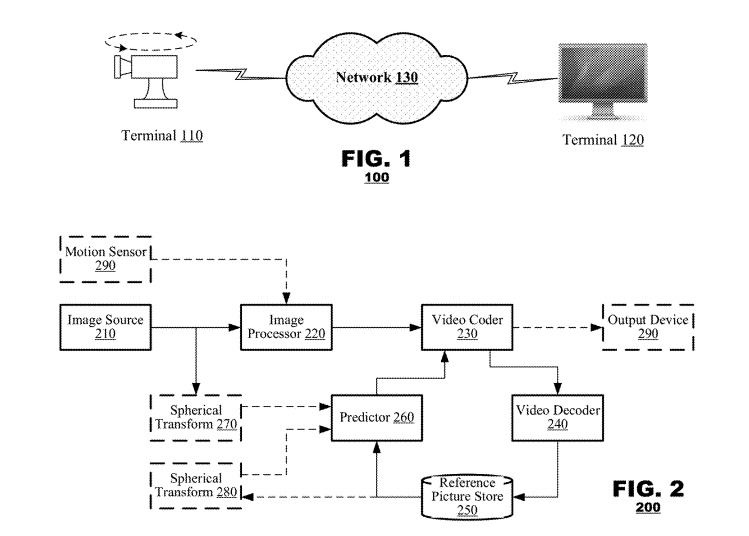

Published by the U.S. Patent and Trademark Office on Thursday and originally submitted on February 15, 2017, the patent application titled "Processing of Equirectangular Object Data to Compensate for Distortion by Spherical Projections" focuses on correcting the errors that arise when combining videos.

The filing notes that video can be produced so that a subject or scene is captured from multiple viewpoints, such as by using multiple cameras pointing at the same spot or, as a more recent development, cameras producing spherical video that can show all sides of a scene. For handheld video, or for shots that change the camera's position and viewpoint, the images could provide a lot of extra data that could be incorporated into a scene.

"Many modern coding applications are not designed to process such omnidirectional or multi-directional image content," the filing states, suggesting the applications are designed on an assumption the image data is "flat" or captured from a single view. This means such applications do not account for distortions that could appear when processing these types of videos, and so can fail to recognize redundancies in image content and in turn be inefficient.

In short, the encoder splits a video into pixel blocks and, for each block, may compare it against data about the scene held in a reference picture. Using a prediction search on the pixel block and the reference data, the encoder could apply different transformations to the pixel block, in order to make it look more appropriate for the viewer in the format in which it will be used.
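To give a rough idea of what comparing a pixel block against a reference picture involves, here is a minimal sketch of a generic block-matching search using the sum of absolute differences. It is not the method described in Apple's filing, which adds corrections for spherical distortion on top of this kind of search. The function names (`sad`, `get_block`, `best_match`) and the tiny 8x8 frame are hypothetical and exist only for illustration.

```python
def sad(block_a, block_b):
    """Sum of absolute differences between two equally sized pixel blocks."""
    return sum(
        abs(a - b)
        for row_a, row_b in zip(block_a, block_b)
        for a, b in zip(row_a, row_b)
    )


def get_block(frame, top, left, size):
    """Cut a size x size block out of a frame stored as a list of rows."""
    return [row[left:left + size] for row in frame[top:top + size]]


def best_match(block, reference, top, left, search_range):
    """Search the reference picture around (top, left) for the closest block.

    Returns (cost, dy, dx), where (dy, dx) is the offset of the best match.
    """
    size = len(block)
    height, width = len(reference), len(reference[0])
    best = None
    for dy in range(-search_range, search_range + 1):
        for dx in range(-search_range, search_range + 1):
            y, x = top + dy, left + dx
            # Skip candidates that fall outside the reference picture.
            if y < 0 or x < 0 or y + size > height or x + size > width:
                continue
            cost = sad(block, get_block(reference, y, x, size))
            if best is None or cost < best[0]:
                best = (cost, dy, dx)
    return best


# Hypothetical 8x8 reference picture in which every pixel value is unique.
reference = [[y * 8 + x for x in range(8)] for y in range(8)]

# Pretend the block now sitting at (2, 2) came from (3, 4) in the reference.
block = get_block(reference, 3, 4, 2)
cost, dy, dx = best_match(block, reference, top=2, left=2, search_range=2)
print(cost, dy, dx)
```

A conventional encoder assumes the matching block looks the same wherever it sits in the frame. In spherical video the same object changes shape as it moves across the projection, which is the kind of mismatch the filing says it aims to compensate for.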

For example, a spherical video could be created for viewing by someone wearing a VR headset, produced specifically with that format in mind. At the same time, an identical view of what the user is seeing could be shown on a second monitor as-live, but with changes made so it appears as if it's from a normal "flat" single-lens viewpoint, without any of the distortions required for it to appear correct in a spherical view.
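As an illustration of how a "flat" view can be pulled out of a spherical one, the sketch below maps a pixel in a conventional perspective view to a position in an equirectangular panorama, the projection named in the filing's title. It assumes a simple pinhole camera looking straight ahead at the center of the panorama. The function names, field of view and image sizes are hypothetical, and the filing does not say Apple would implement the conversion this way.

```python
import math


def flat_pixel_to_direction(px, py, view_w, view_h, fov_deg):
    """Turn a pixel in the flat view into a 3D viewing direction.

    Assumes a pinhole camera looking along +z with the given horizontal
    field of view; x points right and y points up.
    """
    focal = (view_w / 2) / math.tan(math.radians(fov_deg) / 2)
    x = px - (view_w - 1) / 2
    y = (view_h - 1) / 2 - py
    return x, y, focal


def direction_to_equirect(x, y, z, pano_w, pano_h):
    """Map a viewing direction to (u, v) coordinates in the panorama."""
    lon = math.atan2(x, z)                                 # -pi .. pi
    lat = math.asin(y / math.sqrt(x * x + y * y + z * z))  # -pi/2 .. pi/2
    u = (lon / (2 * math.pi) + 0.5) * pano_w
    v = (0.5 - lat / math.pi) * pano_h
    return u, v


def flat_to_equirect(px, py, view_w, view_h, fov_deg, pano_w, pano_h):
    """Find where a flat-view pixel should be sampled from in the panorama."""
    direction = flat_pixel_to_direction(px, py, view_w, view_h, fov_deg)
    return direction_to_equirect(*direction, pano_w, pano_h)


# The center of a 101x101 flat view lands at the center of a 2048x1024 panorama.
print(flat_to_equirect(50, 50, 101, 101, 90, 2048, 1024))  # (1024.0, 512.0)
```

Running the mapping for every pixel of the flat view and sampling the panorama at the resulting coordinates would produce the undistorted second-screen image. Going the other way, from several flat views to a spherical picture, inverts the same geometry.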

It could also be used to create spherical pictures from several flat views, with the same system able to make corrections to make it usable in a spherical video.

Apple files a large quantity of patent applications every week, but relatively few of the concepts make their way into the company's products or services. The USPTO filings are not a guarantee that the idea will make an appearance in a future consumer device.

The concept in the filing may already have a few good applications available. For a start, it could allow people recording with 360-degree cameras to properly stitch the video together and create clips from selected areas, converted so they appear as if they were originally recorded on "flat" cameras.

The second is for VR, both for creating spherical videos and for spectators viewing what the headset-clad user is seeing. Videos produced with 360-degree cameras are likely to be a major content source for VR users in the future, and the ability to correct distortions and artifacts from the filming of such videos would make the content much more acceptable to view.

Apple has been rumored to be working on its own VR headset for some time, with related patent filings bolstering the reports. Despite software-related AR and VR moves, the company has yet to indicate directly that it will be producing its own headset.