Apple is looking into ways to use ultrasonic technology for touch sensing, including new layers for touch screens and changes to the Apple Pencil that would add ultrasonic transducers to detect how it is being held.

The first of two patent applications recently published by the United States Patent and Trademark Office, "Composite cover material for sensitivity improvement of ultrasonic touch screens," discusses how an ultrasonic layer could complement the capacitive touch displays currently used in devices such as the iPhone and iPad, or replace them entirely.

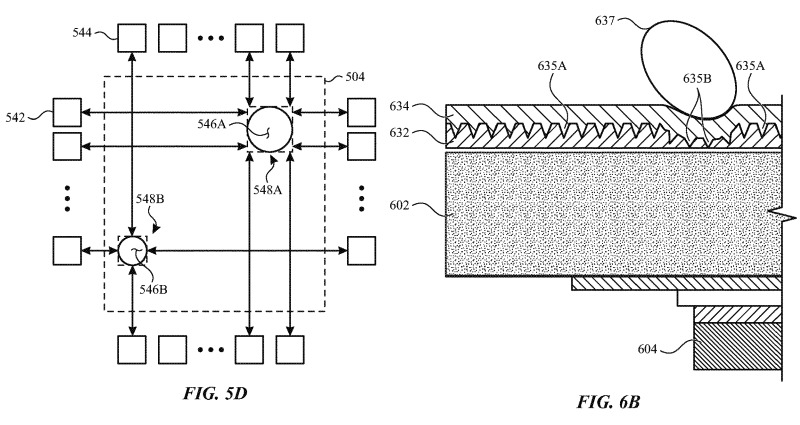

The concept calls for multiple intermediate layers on the surface of an acoustic touch screen's cover material. Acoustic touch sensing uses transducers to transmit ultrasonic waves along the surface of a device and through those layers, with sensors detecting the waves and any reflections.

A user's finger, a stylus, or another object in contact with the surface would disturb the wave and cause reflections. By examining those reflections, including their time of flight and where they are received, the system could determine the object's position and even its size.
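As a rough illustration of the time-of-flight principle, the sketch below converts echo delays into distances. It assumes a constant, known wave speed (the 3,000 m/s figure is a placeholder, not a value from the filing), one transducer per axis along the panel edges, and a single clean echo per contact. The size estimate from separate near-edge and far-edge echoes is a simplified assumption for illustration, not the method described in the patent application.

```python
# Minimal time-of-flight sketch for an acoustic touch surface.
# Assumptions (not from the filing): a constant wave speed, one transducer
# per axis at the panel edge, and one clean echo per contact.

# Placeholder wave speed in the cover material, in metres per second.
WAVE_SPEED_M_PER_S = 3000.0


def distance_from_echo(round_trip_s: float, speed: float = WAVE_SPEED_M_PER_S) -> float:
    """Distance from the transducer to the reflecting object, in metres.

    The pulse travels out to the object and back again, so the one-way
    distance is half of the total path length.
    """
    if round_trip_s < 0:
        raise ValueError("round-trip time must be non-negative")
    return speed * round_trip_s / 2.0


def touch_position(x_echo_s: float, y_echo_s: float,
                   speed: float = WAVE_SPEED_M_PER_S) -> tuple[float, float]:
    """(x, y) of a contact, measured from the edges holding the x and y transducers."""
    return distance_from_echo(x_echo_s, speed), distance_from_echo(y_echo_s, speed)


def contact_width(near_edge_echo_s: float, far_edge_echo_s: float,
                  speed: float = WAVE_SPEED_M_PER_S) -> float:
    """Rough object size along one axis, assuming separate echoes from its near and far edges."""
    return distance_from_echo(far_edge_echo_s, speed) - distance_from_echo(near_edge_echo_s, speed)


# Example: echoes after 40 and 20 microseconds place the touch about
# 60 mm and 30 mm from the two transducer edges.
x, y = touch_position(40e-6, 20e-6)
print(f"x = {x * 1000:.1f} mm, y = {y * 1000:.1f} mm")
```

Halving the round-trip distance is the key step: the measured delay covers the path out to the finger and back, so only half of it is the finger's distance from the transducer.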

With multiple layers, differences in the data between them could also reveal how much pressure is applied, making the technology usable for features like 3D Touch.

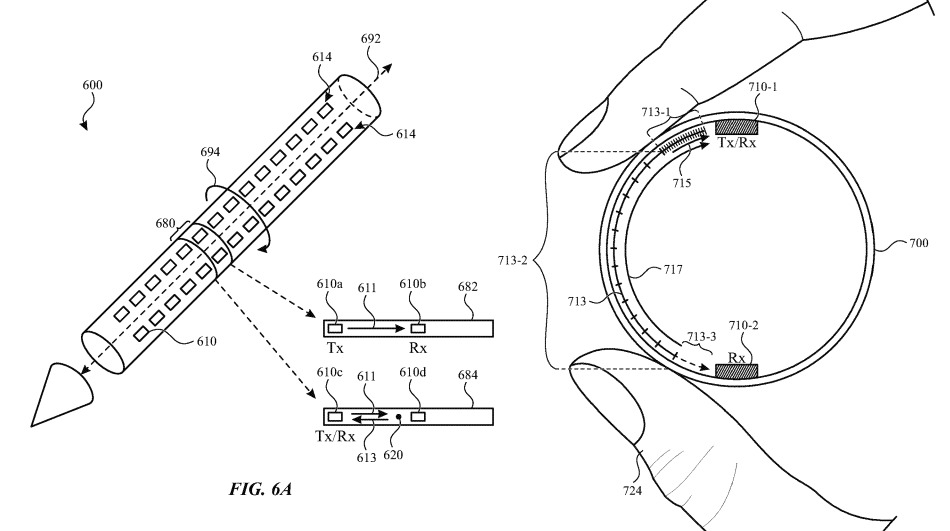

The second filing, "Ultrasonic touch detection on stylus," follows a similar wave-based concept but applies it to a stylus like the Apple Pencil. In this case, it is less about how the stylus interacts with a touch-sensitive surface and more about detecting how the user touches the stylus itself.

Rows of transducers along the shaft of the stylus would detect where other objects are touching its surface since, as in the display filing, those contacts affect the transmitted waves. Transducers at one end would help determine how far from the nib a contact is, while multiple rows would also reveal which parts of the stylus's surface, and how much of it, are in contact.

In practical terms, the stylus would know how the user is gripping it, and where along its length.

With this data, applications that work with the stylus could alter what happens when the user touches it to the screen or performs other actions. For example, twisting the stylus or shifting the grip could change the weight of a pen stroke in an art program, and tapping a section of the shaft could act as the equivalent of a software button press.

Determining the user's grip could also reveal whether they are left- or right-handed, and that information could improve palm detection when the user is writing or drawing on the screen.

Ultrasonic touch detection has potential, as it could be used alongside other technologies, including resistive and capacitive systems. However, given how well established those technologies already are on the market, it is doubtful Apple will adopt it without a compelling reason.

While the Touch Bar on the MacBook Pro works well, albeit in limited situations, Apple is unlikely to bring a full touchscreen to its MacBook or iMac lines anytime soon, with or without the technology described above. In April 2017, Apple's Phil Schiller said a touch-sensitive display "doesn't even register on the list of things pro users are interested in talking about."

Other patent filings from September suggest ways a touch-enabled MacBook could come about, describing an enclosure that extends an iPad's utility into a notebook form while retaining display interactivity. Apple has also considered an enhanced keyboard and lower section for MacBooks that would turn the entire area into a giant touchpad.

As for the stylus, Apple has explored other ways an Apple Pencil or similar device could work in the future. A patent application from February 2018 describes how a stylus, working with motion or orientation sensors attached to monitors or notebooks, could be used on any flat surface or in the air, with its movements translated into inputs for applications.