Speaking at the Sight Tech Global conference on Thursday, Apple executives Chris Fleizach and Sarah Herrlinger went deep on the company's efforts to make its products accessible to users with disabilities.

In a virtual interview conducted by TechCrunch's Matthew Panzarino, Fleizach and Herrlinger detailed the origins of accessibility at Apple, where it currently stands and what users might expect in the future.

Fleizach, who serves as Apple's accessibility engineering lead for iOS, offered background on how accessibility options landed on the company's mobile platform. Recalling the original iPhone, which lacked many of the accessibility features users have come to rely on, he said the Mac VoiceOver team was granted access to the product only after it shipped.

"And we saw the device come out and we started to think, we can probably make this accessible," Fleizach said. "We were lucky enough to get in very early — I mean the project was very secret until it shipped — very soon, right after that shipped we were able to get involved and start prototyping things."

It took about two years for the effort to reach iPhone, arriving with iPhone OS 3 in 2009.

Fleizach also recounted how the VoiceOver for iPhone project gained traction after a chance encounter with late co-founder Steve Jobs. As he tells it, Fleizach was having lunch (presumably on Apple's campus) with a friend who uses VoiceOver on the Mac when Jobs, who was seated nearby, came over to discuss the technology. Fleizach's friend asked whether it might be made available on iPhone. "Maybe we can do that," Jobs said, according to Fleizach.

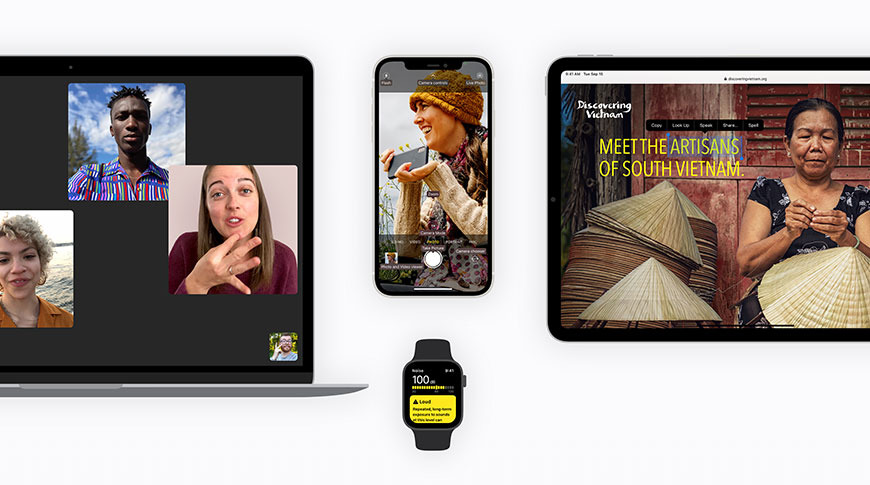

From its humble beginnings, accessibility on iOS has grown into a tentpole feature set that includes AssistiveTouch, hearing accommodations, Audio Selections, Dictation, Sound Recognition and more. The latest iPhone 12 Pro adds a LiDAR scanner to the mix, which powers People Detection.

Herrlinger, Apple's Senior Director of Global Accessibility Policy & Initiatives, says the accessibility team is now brought in early on a variety of projects.

"We actually get brought in really early on these projects," she said. "I think as other teams are thinking about their use cases, maybe for a broader public, they're talking to us about the types of things that they're doing so we can start imagining what we might be able to do with them from an accessibility perspective."

Recent features, such as VoiceOver Recognition and Screen Recognition, lean on cutting-edge advances in machine learning. The latest iOS devices incorporate A-series chips with Apple's Neural Engine, a dedicated hardware block designed specifically for ML computations.

VoiceOver Recognition is an example of this new ML-based functionality. With the tool, iOS can not only describe content displayed on screen, but do so with context. For example, instead of saying a scene includes a "dog, pool and ball," VoiceOver Recognition can parse those subjects into a "dog jumping over a pool to fetch a ball."

Apple appears to be just scratching the surface with ML. As Panzarino noted, a recently published video shows software that apparently applies VoiceOver Recognition to the iPhone's camera to describe the world in near real time. The experiment hints at what might be available to iOS users in the coming years.

"We want to keep building out more and more features, and making those features work together," Herrlinger said. "So whatever is the combination that you need to be more effective using your device, that's our goal."

The entire interview is available on YouTube.