Apple exploring improved, contextual voice commands for iPhone

The new voice control method is described in a patent application from Apple made public this week by the U.S. Patent and Trademark Office. Discovered by AppleInsider, the application, entitled "Contextual Voice Commands," aims to make voice control of a device more reliable and efficient.

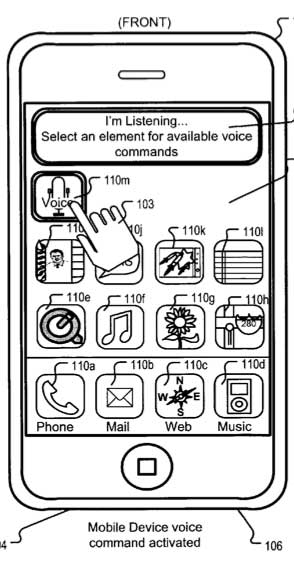

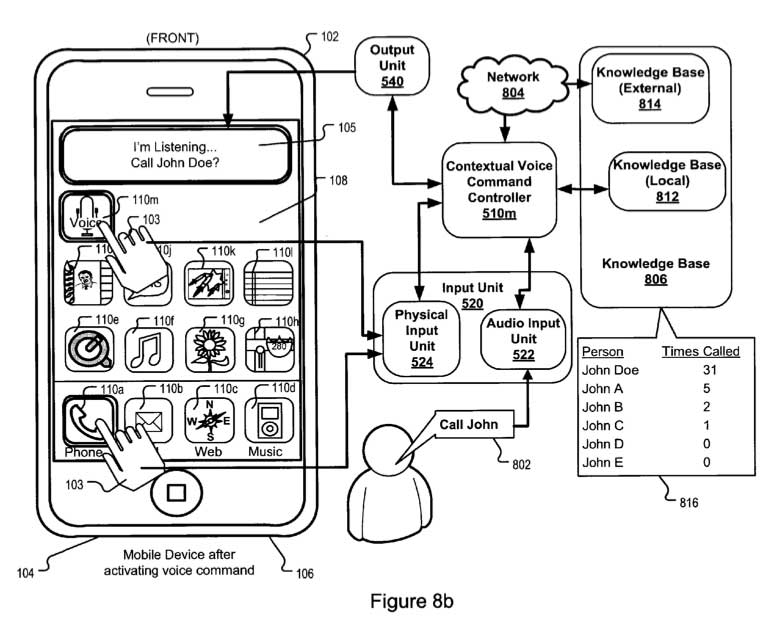

Relying on a combination of quick physical inputs and voice commands, Apple's proposed system would allow for contextual input on an application-by-application basis. This would narrow the set of possible voice commands in any given application, in contrast to a system-wide method that must handle a large number of options.

"By using contextual voice commands, a user can execute desired operations faster than by navigating through a set of nested menu items," the filing reads. "Also, contextual voice commands can be used to teach the device to accurately predict the intent of the user from a single voice command. Further, contextual voice commands can be used to vary the manner in which the user provides the voice input based on the context of the device being used."

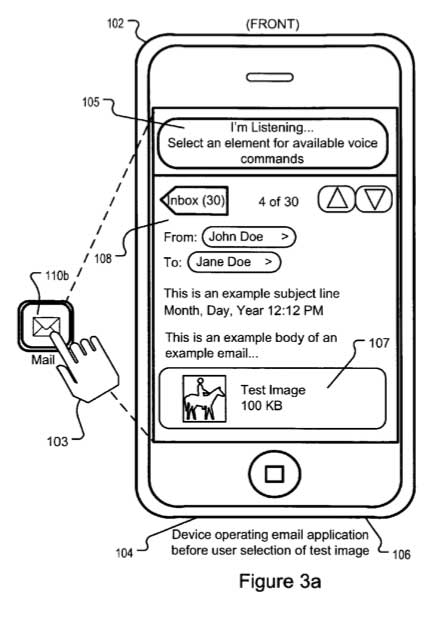

By having the user select an application and narrow the potential voice commands, a device like an iPhone would be less likely to be "confused." Also, for a user who may be driving a car, requiring them to simply select the e-mail application is much simpler and safer than actually typing out a note.

"Contextual voice commands can be more precise than conventional voice commands that merely control the device as a whole," the application states. "For example, different context specific voice commands can be implemented based on user activity or application in use."
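The narrowing effect can be illustrated with a minimal Python sketch. It is not taken from the filing: the `COMMANDS_BY_CONTEXT` table, the `match_command` function, and the "music" vocabulary are all hypothetical, and fuzzy string matching on already-transcribed text stands in for real speech recognition.

```python
import difflib

# Hypothetical per-application vocabularies. The e-mail words appear in the
# filing's own example list; the music words are invented for illustration.
COMMANDS_BY_CONTEXT = {
    "email": ["compose", "reply", "forward", "read", "save", "search"],
    "music": ["play", "pause", "skip", "shuffle"],
}


def match_command(heard, context, cutoff=0.6):
    """Return the closest command valid in the active context, or None.

    `heard` is the (possibly misrecognized) transcribed word and `context`
    is the application the user selected. Only that application's commands
    are considered as candidates.
    """
    candidates = COMMANDS_BY_CONTEXT.get(context, [])
    matches = difflib.get_close_matches(
        heard.lower(), candidates, n=1, cutoff=cutoff
    )
    return matches[0] if matches else None


if __name__ == "__main__":
    # The same misheard word resolves differently depending on context.
    print(match_command("replay", "email"))
    print(match_command("replay", "music"))
```

Because the candidate list is limited to the selected application, an ambiguous utterance such as "replay" is only ever compared against a handful of plausible commands, which is the reliability gain the application describes.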

Using this method, Apple could also open voice commands to third-party applications. The application notes that an application programming interface (API) related to a contextual voice command module could be provided to developers, allowing their applications to be controlled by voice.

In addition to an iPhone or another portable device with a touchscreen, the patent filing notes that contextual voice commands could be used with a Mac or another traditional computer. In this method, users would select a program on screen with a mouse cursor.

Apple's method could also provide visual or audible cues to the user, notifying them what application has been selected or providing a list of potential voice commands. For example, operations such as "compose, reply, forward, read, save, copy, paste, search" and more could be displayed on the screen in an e-mail application, or read aloud to the user.
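As a rough sketch of how a developer-facing module and the cues described above might fit together, consider the following Python example. The filing does not publish an API, so the `VoiceCommandRegistry` class and its `register`, `prompt`, and `dispatch` methods are purely hypothetical names chosen for illustration.

```python
class VoiceCommandRegistry:
    """Hypothetical registry of per-application voice commands.

    This is an illustrative assumption, not Apple's API.
    """

    def __init__(self):
        # Maps application name -> {command word -> handler callable}.
        self._handlers = {}

    def register(self, app, command, handler):
        """Let an application declare a command it can respond to."""
        self._handlers.setdefault(app, {})[command] = handler

    def available(self, app):
        """List the commands valid for the selected application."""
        return sorted(self._handlers.get(app, {}))

    def prompt(self, app):
        """Build the cue that could be displayed or read aloud."""
        names = self.available(app)
        if not names:
            return f"No voice commands available in {app}."
        return f"{app} selected. You can say: {', '.join(names)}."

    def dispatch(self, app, command, *args):
        """Run the handler for a recognized command in the given app."""
        handler = self._handlers.get(app, {}).get(command)
        if handler is None:
            raise KeyError(f"{command!r} is not a command in {app!r}")
        return handler(*args)


if __name__ == "__main__":
    registry = VoiceCommandRegistry()
    registry.register("Mail", "reply", lambda: "replying")
    registry.register("Mail", "compose", lambda: "composing")
    print(registry.prompt("Mail"))
    print(registry.dispatch("Mail", "reply"))
```

Keeping the commands in a per-application table means the same data can drive both the on-screen or spoken cue and the lookup performed once a command is recognized.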

The application, released this week by the U.S. Patent and Trademark Office, was first filed in June of 2009. The proposed invention is credited to Marcel Van Os, Gregory Novick, and Scott Herz.

The application is particularly noteworthy because earlier this year, Apple acquired Siri, the maker of a personal assistant application for the iPhone. The free Siri application, available on the App Store, relies on voice commands and draws on multiple sources of information to accomplish tasks such as making reservations at a restaurant or buying tickets to a movie.

With Siri, voice queries are provided in "natural" English, as a person would use in a conversation. Questions such as "What's happening this weekend around here?" would provide local events, and a follow-up query of "How about in San Francisco?" would change the location.

Neil Hughes
