Despite headlines fretting of a "new era in OS X and iOS malware," Apple's security systems for iOS and OS X are working as intended to protect users from exposure to the ubiquitous malware affecting open platforms including Android and Windows. Here are the realistic, non-sensationalized facts about how safe Apple's users actually are and how users can remain protected from threats that arise.

Mac and iOS users are protected from viruses and malware by default unless the user bypasses their security systems, by jailbreaking an iOS device; by disabling the protections of Mac OS X's GateKeeper; or by choosing to "Trust" app installs that iOS identifies as being from an "Untrusted App Developer." Here's how those systems work, and how users can avoid being tricked into turning off their own security.

Mac and iOS users who do not defeat Apple's security systems are not at risk

"WireLurker," a recent trojan horse attack detailed by Palo Alto Networks, was blocked in all forms— even on Macs with key security features disabled— by Apple within hours.

"We are aware of malicious software available from a download site aimed at users in China, and we've blocked the identified apps to prevent them from launching," Apple wrote in a statement last week.

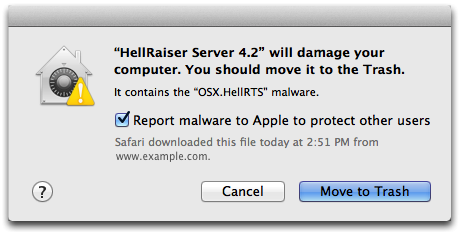

Apple has previously used XProtect to remotely disable user-installed Mac malware ("trojan horses," like the Russian Yontoo blocked last year) or software components with serious potential security vulnerabilities (such as Oracle Java), nipping problems in the bud before they could develop into an unmanageable security problem.

XProtect is so effective that the last significant malware issue for Macs (named Flashback) was a trojan horse masquerading as Adobe Flash Player that was specifically intended to disable XProtect (although the malware couldn't actually do this).

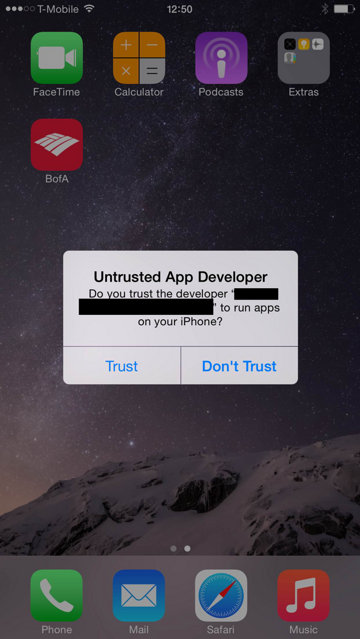

Masque Attack, a related exploit that shares one of the vulnerability vectors exploited by WireLurker, similarly requires users to "Trust" a request to install software from an unknown source, a step that Apple has now made effortlessly easy. Fortunately, users who inadvertently trust such apps from Untrusted Developers can review their iOS Provisioning Profiles to disable any self-signed certificates they have already approved.

WireLurker and Masque Attack are not viral and can't infect users unless they intentionally disable their security and manually install apps bypassing Apple's builtin trust verification systems for iOS and Macs.

No need to be terrified in ignorance

That hasn't stopped sensational blogs from confusing users about how safe they actually are. Chris Smith, writing for BGR, spent paragraphs trying to convince readers that a minor distribution of Chinese malware "should terrify you," even though the identified malware has been circulating for months in China without actually delivering a real payload of malware outside of the wide open world of jailbroken devices.

Mac and iOS users have no need to be "terrified," but should understand in general terms how Apple's security systems work so they can't be fooled into installing malware. This is becoming a more complex issue because Apple now makes it easier than ever to bypass security on iOS, although it still requires an explicit "Trust" approval from the user.

Ironically, just two years ago the Electronic Frontier Foundation was demonizing the on-by-default security of iOS and OS X as "Apple's Crystal Prison" and an "elaborate misdirection," and called upon the company to provide a "simple, documented, and reliable way to drill into a settings menu, unlatch the gate of the crystal prison, and leave."

WireLurker, Masque Attack require users to "unlatch the gate of the crystal prison and leave"

WireLurker is unique in that it first attempted to fool Mac users into installing the malware. Once a user disables the Mac's security and manually installs a WireLurker-bearing third party Mac app, the app could seek to install malicious code on a USB-attached iOS device that has been jailbroken, or it could attempt to copy malware to a non-jailbroken iOS device using enterprise app deployment tools, the same vector that Masque Attack uses.

WireLurker is not currently a real threat because Apple immediately blocked it from running on the Mac using XProtect, and revoked the enterprise credentials that allowed it to move from Macs to a connected iOS device. Apple's security measures are working as intended, but users can still be tricked into installing software using the mechanisms Apple created to allow corporate users to develop and install their own custom apps outside of the App Store.

Here's an overview of Apple's OS X and iOS security systems and how they work.

How Apple's Mac XProtect works

Apple's XProtect subsystem of OS X is configured to deploy a blacklist of known malware definitions to Mac users within hours of malicious software being discovered. Macs automatically check for new malware definitions every day, and immediately begin blocking any new threats Apple identifies.
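The blacklist behavior described above can be pictured with a minimal Python sketch. It is an illustration only: this article does not describe Apple's actual definition format or matching rules, so the hash-based matching and the names `KNOWN_MALWARE_DIGESTS`, `update_definitions` and `is_blocked` are all assumptions.

```python
import hashlib

# Hypothetical blacklist of SHA-256 digests for known-bad files.
# Assumption: real XProtect definitions are not necessarily plain hashes.
KNOWN_MALWARE_DIGESTS = set()


def digest(file_bytes: bytes) -> str:
    """Return the SHA-256 hex digest used as the lookup key."""
    return hashlib.sha256(file_bytes).hexdigest()


def update_definitions(new_digests) -> None:
    """Model the periodic definitions check by merging in new entries."""
    KNOWN_MALWARE_DIGESTS.update(new_digests)


def is_blocked(file_bytes: bytes) -> bool:
    """Block a launch only if the file matches a known-bad digest."""
    return digest(file_bytes) in KNOWN_MALWARE_DIGESTS


# A sample is allowed until a definition for it arrives.
sample = b"pretend this is a trojan installer"
before_update = is_blocked(sample)
update_definitions({digest(sample)})
after_update = is_blocked(sample)
```

The point of the sketch is that a lookup against a short list is cheap, which is consistent with the article's observation that this approach avoids constant scanning.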

XProtect works like a very simple antivirus scanner— which is all Macs need because Apple has secondary systems that work to prevent malicious code from being installed in the first place. These are measures that have been proven to work— as long as users don't turn them off.

First introduced back in 2009— five years ago— for OS X 10.6 Snow Leopard, Apple's XProtect system is always on. Unlike more complex antivirus scanning tools designed for Windows, Apple's XProtect system for Macs doesn't consume significant memory or CPU resources because it isn't constantly scanning through every file and email on the system looking for bad code.

XProtect doesn't need to; unlike Android or Windows, the Mac platform is not under relentless attack from viruses and spyware. When a rare new exploit appears for Macs (or iOS), the discovery is actually considered big news. These attacks are not just rare, but also quite easy to prevent and fix, due to a series of security-first decisions built into Macs and iOS devices.

Apple has incrementally improved its security on iOS and has brought security features pioneered on iOS to its OS X Mac platform, deploying barriers that limit what apps can run, what data they can access and what privileges they can assume. With these protections in place, Apple's users don't have to be (or hire) computer science experts to avoid exposure to malware, spyware and adware problems.

How iOS app signing and OS X GateKeeper work

While XProtect disables malware a Mac user has manually installed, GateKeeper prevents users from installing apps from unsafe sources to start with. GateKeeper, introduced in 2012 for OS X 10.8 Mountain Lion, effectively brought iOS-style secure app signing to the desktop PC.

Many in the tech community have expressed outrage over Apple's tightening security rules. After GateKeeper was unveiled, Ellis Hamburger wrote the article "Sandbox of frustration: Apple's walled garden closes in on Mac developers," for The Verge, stating that "developers lashed out at Apple for its new rules when Mountain Lion was announced back in February, in part because of how much effort might go into re-architecting their apps."

A few months later, David Streitfeld similarly wrote for the New York Times that Apple's ability to dictate rules governing the iOS App Store "might be detrimental for the Internet as a whole."

Apple's mobile iOS was designed from the start to prevent the installation of "unsigned" third party software. When Apple opened the iOS App Store in 2008, it required that all iOS apps be cryptographically signed with a security certificate. iOS is designed to only run apps that the system can verify as both being from a reputable source and being unmodified since being signed by the developer.

When a developer deploys apps through Apple's App Store, the app is cryptographically "signed" using the developer's private key. Under iOS, there's no way to turn this off unless users purposely "jailbreak" the security system, a process that exploits an existing vulnerability in order to turn off the system's verification of app signatures.

The dangers of sideloading apps

In early 2013, five years deep into the unparalleled success of Apple's iOS App Store, Dylan Love of Business Insider concluded that the tiny percentage of iOS users trying to jailbreak their phones was evidence that Apple should downgrade its security policies.

"This is clearly Apple's customer base sending a strong message that they want more out of the proverbial 'walled garden,'" he stated.

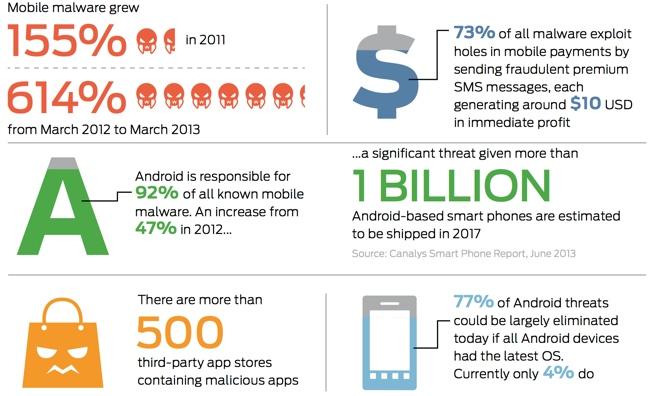

Android has a similar, but optional, code signing feature for apps, but Google made a series of mistakes that have weakened its ability to block or disable malware on the platform. This summer, Android's Fake ID vulnerability sprang from the fact that Android simply failed to properly verify certificates, allowing any app on the system to pretend to be software to which the system gives broad access.

This issue still affects most Android users who shop for apps outside of Google Play and who haven't applied a patch for the flaw, a problem complicated by the fact that most Android users are working with basic devices that cost less than $200 and are not regularly (or ever) updated by the hardware maker or the carrier.

Google actually encourages users to sideload apps by calling permissive software installation a "feature" while disparaging Apple's App Store as a "walled garden" of restrictions. That's resulted in a series of complex security and privacy issues for Android that effectively exploit users of often low-end devices— who are the least likely to understand how dangerous it is to allow any app that comes knocking to figuratively come through the front door and crawl into your bed.

In stark contrast, Apple has made the deployment of up to date iOS and OS X releases such a priority that the majority of Apple's users can and do install updates rapidly, while users of other platforms frequently can't obtain patches or updates at all, even if they do know about them and want to install them.

However, Apple has also made it increasingly easy for corporations and individuals to self-sign and distribute their own apps to users who trust them. This has opened the door to the same types of "sideloading" security issues that exist on Android for users who trust apps from unknown sources.

App signing blocks piracy, creating a legitimate market for apps

In each new iOS release, Apple regularly defeats the mechanisms jailbreakers use to bypass iOS security, because jailbreaking is a direct threat to the App Store's high volume, low price marketplace. Apple doesn't make it easy to jailbreak because jailbroken iOS devices can pirate apps. App signing is a protection for developers as well as end users, effectively policing iOS for bad behavior.

Without an effective security system, iOS would look like the Windows or Android platforms: widespread software piracy, ubiquitous malware and virulent attacks that make it easy to infect and spy on large populations of unaware users. If there's no police to stop looting, trespassing and robberies, those problems rapidly grow out of control.

iOS and OS X lock down the platform to protect the people that benefit from low priced, higher quality apps: end-users and developers. This comes at the expense of malware writers and people who would rather steal apps, but it also impacts people who want to distribute code Apple does not approve for distribution in the App Store.

Enterprise app provisioning opens up potential new threats

Enterprise users may want to distribute a custom app to only their own employees, rather than making it available in the App Store. For these users, Apple has made it possible to effectively sideload iOS apps along with a provisioning profile that vouches for the app.

Apple continues to closely regulate iOS apps, because it does not want to revert iOS back to an insecure platform where legitimate-looking apps from multiple sources can pretend to be things they are not, violating users' trust and exploiting them. However, enterprise app provisioning opens up new vectors for distributing bad apps if users choose to "Trust" apps from Untrusted Developers.

Before iOS, malicious desktop software writers could create and distribute bad code pretending to be a legitimate tool, tricking users into running it. Malware could also hide by copying itself into other apps on the system, modifying them the same way a real-world virus injects its DNA into healthy cells, forcing them to replicate more of its own DNA. A software virus is software that can replicate itself by fooling the system to run its replication code.

iOS app signing security (and the identical GateKeeper for OS X) creates walls that prevent all these scenarios from even being possible. First, by default Macs and iOS devices don't allow users to install software that isn't signed by a known developer. Both systems also check apps for modification before running them, blocking anything that has been infected or otherwise tampered with after its developer signed it.
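The tamper check described above can be sketched with a toy signature scheme in Python (3.8 or later). This is not Apple's code signing format: it uses textbook RSA with a small, unpadded key built from two known Mersenne primes, which is insecure and chosen only so the example runs with the standard library. The names `sign` and `verify` are hypothetical.

```python
import hashlib

# Toy textbook-RSA key built from two known Mersenne primes.
# Insecure by design (tiny key, no padding); for illustration only.
P = 2**61 - 1
Q = 2**89 - 1
N = P * Q                            # public modulus
E = 65537                            # public exponent
D = pow(E, -1, (P - 1) * (Q - 1))    # private exponent, kept by the developer


def digest_as_int(app_bytes: bytes) -> int:
    """Hash the app's contents and reduce the hash into the RSA modulus."""
    return int.from_bytes(hashlib.sha256(app_bytes).digest(), "big") % N


def sign(app_bytes: bytes) -> int:
    """Developer side: sign the digest with the private exponent."""
    return pow(digest_as_int(app_bytes), D, N)


def verify(app_bytes: bytes, signature: int) -> bool:
    """System side: recompute the digest and check it with the public key."""
    return pow(signature, E, N) == digest_as_int(app_bytes)


original = b"app binary as shipped by the developer"
signature = sign(original)
tampered = original + b" plus injected code"

untouched_ok = verify(original, signature)   # signature matches the contents
tampered_ok = verify(tampered, signature)    # contents changed after signing
```

Because the signature covers a digest of the contents, any code injected after signing changes the digest and the check fails, which is the property that keeps a virus from hiding inside an otherwise legitimate app.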

Apps are also barred from promiscuously reading data in other apps, and must ask for user permission from the system to access the user's contacts, photos, location or other kinds of data. iOS and OS X app signing have effectively blocked viruses from existing on Apple's platforms, and make it easier for users to protect themselves from other non-viral malware that depends upon tricking the user, rather than the computer.

Setting GateKeeper to "Anywhere" is the equivalent of jailbreaking iOS

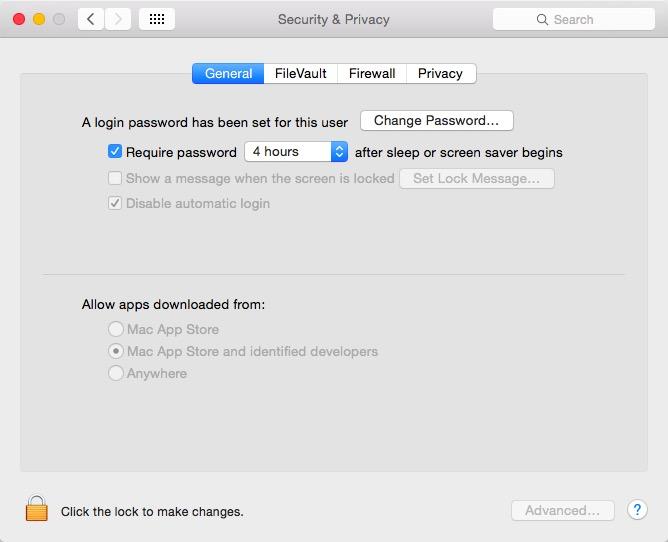

On Macs, Apple's app signing security is branded as "GateKeeper," although the feature is exposed to users only within the Security & Privacy panel of System Preferences under the General tab (without any "GateKeeper" signage). By default, this is set to "Mac App Store and identified developers" (as shown above).

This setting allows the system to only run signed apps from the Mac App Store or "sideloaded" apps that have been signed by a certificate authority the Mac recognizes, such as an enterprise developer distributing its own custom apps signed by a certificate the company registers with Apple.

This can be further restricted so that the Mac only runs titles from the Mac App Store, or it can be effectively disabled by selecting "Anywhere," which allows users to manually install malware, including apps infected with bad code like WireLurker's.

Opting out of Apple's security makes the end user responsible for managing their own security, similar to the wide open worlds of malware, spyware and adware popups that infest rival platforms.
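The three GateKeeper settings amount to a small policy table, sketched here in Python. The enum and function names are hypothetical, and the sketch models only the decision described in this article, not how OS X actually evaluates signatures.

```python
from enum import Enum


class Source(Enum):
    MAC_APP_STORE = "Mac App Store"
    IDENTIFIED_DEVELOPER = "signed with a certificate the Mac recognizes"
    UNSIGNED = "unsigned or unrecognized"


class Setting(Enum):
    MAC_APP_STORE = "Mac App Store"
    STORE_AND_IDENTIFIED = "Mac App Store and identified developers"  # default
    ANYWHERE = "Anywhere"


def may_launch(setting: Setting, source: Source) -> bool:
    """Return True if an app from `source` is allowed under `setting`."""
    if setting is Setting.ANYWHERE:
        return True  # signature checks no longer gate anything
    if setting is Setting.STORE_AND_IDENTIFIED:
        return source in (Source.MAC_APP_STORE, Source.IDENTIFIED_DEVELOPER)
    return source is Source.MAC_APP_STORE


default_blocks_unsigned = not may_launch(Setting.STORE_AND_IDENTIFIED, Source.UNSIGNED)
anywhere_allows_unsigned = may_launch(Setting.ANYWHERE, Source.UNSIGNED)
```

Only the "Anywhere" row lets an unsigned app such as a WireLurker-bearing download run without a further prompt.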

Unlike iOS, where Apple thwarts users from running non-signed apps at all, Mac users can turn off GateKeeper completely. This exposes them to risks the default Mac settings prevent, and is similar to deactivating antivirus software under Windows. Users should be extremely careful about allowing unsigned Mac apps to run.

In addition to turning off app-signing security with the "Anywhere" setting, users can temporarily bypass GateKeeper security by attempting to launch an unsigned app. After getting a warning that the app has been blocked from running, the user can manually go into System Preferences and click a one-time button that forces the system to run the previously blocked, unsigned app. This is as inherently dangerous as turning off GateKeeper entirely.

Trusting an Untrusted App Developer's iOS app is equivalent to jailbreaking

Once users disable the Mac's security defenses by installing unsigned apps, the malware they install can further infect iOS devices. In the case of WireLurker, an iOS payload app, signed by an enterprise developer certificate, could be installed. Once installed, the app could exploit vulnerabilities in iOS to do further damage, although the current version of WireLurker only exploited the enterprise app development path to deliver a comic book app as a demonstration of its abilities.

Masque Attack similarly relies on corporate provisioning to trick users into letting their guard down and approving untrusted apps. When distributed with a provisioning profile, a potentially malicious app simply requests through the system that the user "Trust" the installation. If the user clicks trust, the system installs a provisioning profile that enables that app to run on that iOS device, bypassing normal App Store rules.

However, Apple's corporate provisioning system opens up even more serious issues than simply allowing users to turn off basic security; as detailed by researchers at FireEye, a malicious app can report itself to the user as something basic and innocuous, then present itself to the system as being a replacement for an existing app, similar in some respects to the Fake ID issue involving Android this summer.

By giving itself the Bundle Identifier of an existing app, once the user "Trusts" the installation, the malware app can not only replace another app the user trusts, but can also inherit its data. This essentially lets malware trick a user into updating a known app into a bad app, without the user being aware that what they thought they were installing is actually overwriting another app on the system.

The researchers presented an example of an app pretending to be "New Flappy Bird" to entice the user to Trust its installation, but then identifying itself to the system as being com.google.Gmail, allowing it to take over the user's Gmail app. It could then ask for the user's credentials, posing as the Gmail app to steal their login. When it replaces the app, such malware could also glean sensitive data from the original app's caches.
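The replacement behavior in that example can be modeled with a short Python sketch. The `Device` class and its fields are hypothetical and greatly simplified; the sketch only shows why keying installed apps by bundle identifier alone lets a differently named app take over an existing app's data.

```python
class Device:
    """Toy model: installed apps and their data are keyed by bundle identifier."""

    def __init__(self):
        self.apps = {}   # bundle_id -> name shown to the user at install time
        self.data = {}   # bundle_id -> data stored by that app

    def install(self, bundle_id: str, shown_name: str, user_trusts: bool) -> bool:
        """Install or replace an app once the user taps "Trust"."""
        if not user_trusts:
            return False
        # The flaw being modeled: nothing checks that the new app comes
        # from the same developer as the app it replaces.
        self.apps[bundle_id] = shown_name
        self.data.setdefault(bundle_id, [])
        return True


device = Device()
device.install("com.google.Gmail", "Gmail", user_trusts=True)
device.data["com.google.Gmail"].append("cached mail")

# The lure is presented as a game but claims Gmail's bundle identifier.
device.install("com.google.Gmail", "New Flappy Bird", user_trusts=True)
inherited = device.data["com.google.Gmail"]
```

After the second install, the entry for `com.google.Gmail` belongs to the impostor while the original app's cached data is still in place.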

Even worse, Apple exempts custom corporate apps from its App Store rules including limitations on access to private APIs and sandboxing. This allows any malware that can trick the user into "Trust" installing it to do things that App Store titles are strictly prevented from doing, including background monitoring, spoofing system password requests to trick users into presenting their account logins, and even taking advantage of other vulnerabilities to gain even greater access to the system.

Don't Trust apps from Untrusted App Developers!

The researchers who identified Masque Attack therefore advise users "Don't install apps from third-party sources other than Apple's official App Store or the user's own organization."

The group also warns users, "Don't click 'Install' on a pop-up from a third-party web page, no matter what the pop-up says about the app. The pop-up can show attractive app titles crafted by the attacker."

Thirdly, it notes, "When opening an app, if iOS shows an alert with 'Untrusted App Developer,' as shown in Figure 3 (above), click on 'Don't Trust' and uninstall the app immediately."

One of the side benefits of iOS requiring apps to be signed by a developer certificate is that once a malware author steals, borrows or otherwise obtains a legitimate app-signing certificate and uses it to install malware on iOS devices, Apple can revoke the signature, which blocks the signed app from being run.

When users "Trust" a self-signed app from an "Untrusted App Developer," iOS 7 also installs an enterprise provisioning profile that can be seen in Settings / General / Profiles / Provisioning Profiles. Under iOS 8, the group notes that "devices don't show provisioning profiles already installed on the devices and we suggest taking extra caution when installing apps."

Security professionals asking Apple to make iOS even less like Google's Android

The combination of iOS app signing, GateKeeper and XProtect makes it easy for Apple to address and block problems before they occur, and even arrest issues related to users bypassing the provided security. Apple's users will always be under attack, but they are safer than users on other platforms that make security less of a focus.

Rather than asking Apple to relax its software "walled garden" policies as the tech media has been demanding for years, security professionals are recommending that Apple make its App Store walls even stronger.

FireEye specifically called on Apple "to provide more powerful interfaces to professional security vendors to protect enterprise users from these and other advanced attacks."

Security expert Jonathan Zdziarski has also called on Apple to make iOS "do a better job of prompting the user before installing applications," noting that a "more thorough explanation to the user about what they are about to click OK to will likely help prevent a number of users from allowing the software to install on iOS in the first place."

He further recommends that Apple disable enterprise app installations by default, stating that the "vast majority of non-enterprise users will never need a single enterprise app installed, and any attempt to do so should fail. So why doesn't Apple lock this capability out unless it's explicitly enabled?"

Zdziarski also recommends tighter app policies that would block malware from pretending to be another app on installation, including new rules to link a corporate provisioning signing certificate to the app bundle identifier, and even limit an app's bundle identifier to accessing web services using the matching hostname (for example, ensuring that an app identified as com.facebook.messenger could only send data to servers identified as messenger.facebook.com).
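One way to read those two proposed rules is sketched below in Python. This is an interpretation for illustration, not Zdziarski's specification or anything iOS implements: the registry `BUNDLE_OWNERS`, the certificate labels and the function names are all hypothetical.

```python
# Hypothetical registry binding each bundle identifier to the one
# signing certificate allowed to install or update it.
BUNDLE_OWNERS = {"com.facebook.messenger": "cert:facebook"}


def may_install(bundle_id: str, signer: str) -> bool:
    """Reject an app that claims a bundle identifier bound to another signer."""
    owner = BUNDLE_OWNERS.get(bundle_id)
    return owner is None or owner == signer


def matching_host(bundle_id: str) -> str:
    """Reverse the dotted bundle identifier to get its matching hostname."""
    return ".".join(reversed(bundle_id.split(".")))


def may_connect(bundle_id: str, host: str) -> bool:
    """Allow network traffic only to the hostname matching the identifier."""
    return host.lower() == matching_host(bundle_id).lower()


host = matching_host("com.facebook.messenger")
impostor_allowed = may_install("com.facebook.messenger", "cert:attacker")
```

Under the first rule, an app signed by an unrelated enterprise certificate could no longer claim an identifier that is already bound to another developer.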

Another recommendation from Zdziarski is to use the secure element of new iOS devices to validate apps, although only new iPhone 6 models and the latest batch of iPads with Touch ID incorporate a secure element.