Apple working on iPhone video feature that automatically switches cameras during FaceTime calls

A newly published patent application from Apple outlines teleconferencing and video capture technology that would process video from an iPhone's front and rear cameras simultaneously, and automatically determine which feed to stream based on voice or visual cues.

Apple's "Automatic video stream selection" patent application, published by the U.S. Patent and Trademark Office on Thursday, describes a system that can intelligently determine whether to use the video feed from a smartphone's front or rear facing camera. The tech can be used in video calls, such as those made via Apple's FaceTime, or for content stored locally on a device.

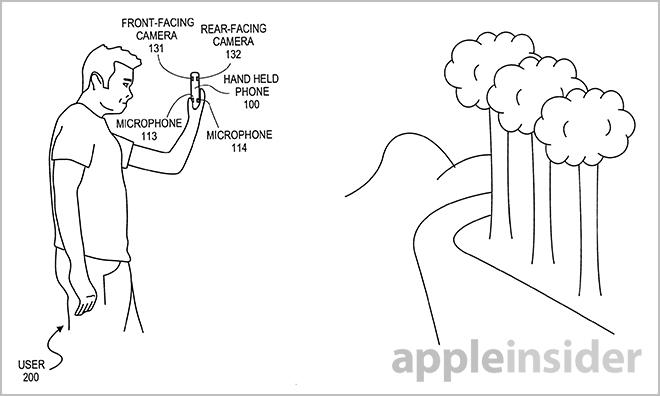

As noted in the document, many handhelds have two cameras for video and picture taking. The shooters are typically facing in opposite directions, with the rear-facing module used for higher-resolution shots, while the front-facing unit handles video call duties.

With advancements in mobile processing power, some smartphones are able to capture video streams from both cameras simultaneously, though the use cases for such a feature have been limited. The iPhone currently uses only one camera at a time, offering users the option to toggle between the two as desired.

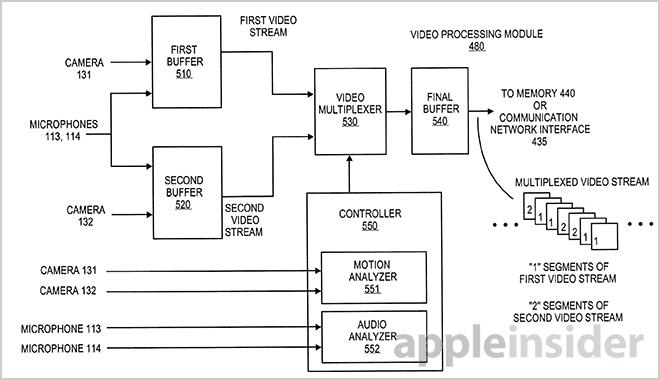

Apple notes that current bandwidth restrictions would make it difficult to stream both video feeds at the same time, so a method is needed to intuitively select one while keeping audio in sync.

What Apple proposes is a system that captures and monitors two video streams for voice or visual cues, then outputs one of the two depending on a set of predetermined criteria. One example would be a FaceTime call in which a rear-facing camera video stream is being used before the feed automatically switches to the front-facing camera when a person begins to talk.

In order to decide which camera to use, the technology detects speech by using data from microphones facing in the same direction as each camera, or facial expressions such as lip movements.
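The decision described above can be illustrated with a minimal sketch. This is not Apple's implementation: the `Cues` structure, the `select_camera` function, and the `SPEECH_THRESHOLD` value are all hypothetical names and numbers chosen for illustration, and the filing as summarized here does not specify how the cues are weighted or what happens when both sides appear to be talking.

```python
from dataclasses import dataclass


@dataclass
class Cues:
    """Cues for one camera at one moment (hypothetical structure)."""
    mic_level: float   # loudness from the microphone facing the same way as the camera, 0.0 to 1.0
    lips_moving: bool  # whether lip movement was detected in that camera's frame


SPEECH_THRESHOLD = 0.5  # assumed cutoff; no value is given in the source


def is_speaking(cues: Cues) -> bool:
    # Either cue counts as evidence of speech: a loud enough microphone or visible lip movement.
    return cues.mic_level >= SPEECH_THRESHOLD or cues.lips_moving


def select_camera(front: Cues, rear: Cues, current: str) -> str:
    """Return "front" or "rear" depending on which side appears to be talking."""
    front_talking = is_speaking(front)
    rear_talking = is_speaking(rear)
    if front_talking and not rear_talking:
        return "front"
    if rear_talking and not front_talking:
        return "rear"
    # Nobody talking, or both talking: keep whichever feed is already selected (an assumption).
    return current
```

For example, `select_camera(Cues(0.8, False), Cues(0.1, False), "rear")` switches to the front camera because only the front-facing microphone is above the assumed threshold.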

One embodiment describes an "Auto-Select" indicator that, when selected, will activate the system and begin outputting the multiplexed video.

Various implementations are described in the paper, including "report mode," which captures a scene from the rear-facing camera and switches to the user when they start talking. When no speech or lip movement is detected, the video switches back to the scene. Both video streams can be interleaved throughout the process and output to local storage.
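As a rough sketch of how report mode could interleave the two streams into one output, the example below picks a frame from either camera at each time step. The function name `report_mode` and the `hold_frames` parameter are hypothetical. The short hold before cutting back to the scene is an assumption added here to avoid flickering during brief pauses; the article does not say whether the filing includes such a delay.

```python
def report_mode(front_frames, rear_frames, user_speaking, hold_frames=2):
    """Interleave two equal-length frame sequences into a single output sequence.

    user_speaking holds one boolean per frame: True when speech or lip
    movement was detected for the user facing the front camera.
    """
    output = []
    quiet_run = hold_frames  # begin on the scene (rear camera)
    for front, rear, speaking in zip(front_frames, rear_frames, user_speaking):
        # Count consecutive frames without detected speech.
        quiet_run = 0 if speaking else quiet_run + 1
        # Show the user while they talk and for a short hold afterwards,
        # otherwise fall back to the scene.
        output.append(front if quiet_run < hold_frames else rear)
    return output


front = ["F0", "F1", "F2", "F3", "F4", "F5"]
rear = ["R0", "R1", "R2", "R3", "R4", "R5"]
speaking = [False, True, False, False, False, True]
result = report_mode(front, rear, speaking)
print(result)  # ['R0', 'F1', 'F2', 'R3', 'R4', 'F5']
```

The resulting list could then be written to local storage or streamed as a single feed, which matches the article's point that only one stream needs to be sent at a time.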

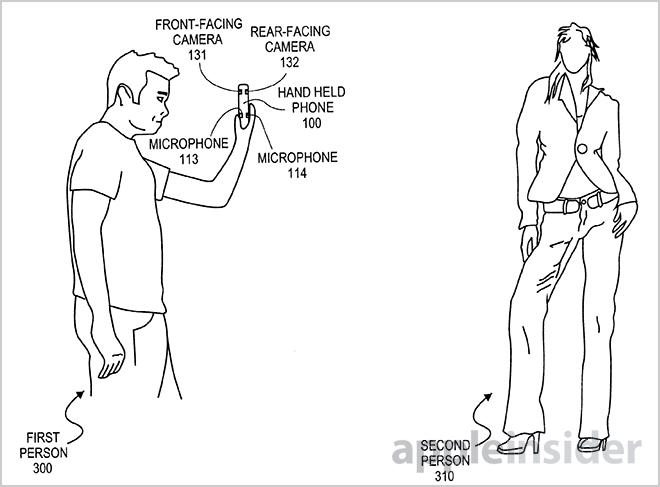

In "interview mode," the phone is located between a user, who is being captured by the front-facing camera, and another person facing the rear camera. The video switches between the two when one person is determined to be speaking.

Finally, along with streaming the multiplexed video in a FaceTime call, the invention allows devices without cellular access to store the content, to be uploaded whenever a Wi-Fi connection is available.

While the invention relies heavily on software, there are hardware limitations, such as audio and video processors, that may restrict Apple from releasing such a feature in the near future. However, as the final specifications of the upcoming "iPhone 5S" have yet to be revealed, it is possible that the smartphone could have the necessary components baked in. As of now, iOS 7 beta does not carry such functionality.

Apple's automatic stream selection patent application was first filed in May and credits Jae Han Lee and E-Cheng Chang as its inventors.

Mikey Campbell
