When iPhone launched ten years ago it took basic photos and no video. Apple has since rapidly advanced its capabilities in mobile photography to the point where iPhone is now globally recognized for its billboard-sized artwork and has been used to shoot feature length films for cinematic release. iOS 11 now achieves a new level of image capture with Depth. Here's a look at the future of photos, with a focus on new features debuted in iPhone 8 Plus.

Across the history of Apple — and in particular the last decade of iPhone — the company has introduced a series of emerging new technologies into mainstream use. Many of these ideas had been researched, presented and even productized elsewhere first, including multitouch displays, electronic gyroscopes, accelerometer motion sensors and depth-sensing cameras.

Rather than just being first to market, Apple has repeatedly been first to mass market success, in part because it refines its work before release but also because it often targets a useful and practical real world application for the technologies it launches.

Note that Jeff Han, one of the first researchers to develop and present multiple-touch sensing, didn't bring the technology to mobile devices used by hundreds of millions of users the way Apple's iPhone did. Instead, he developed the idea along lines that resulted in big conference room screens that sell in the thousands — even after being bought out by Microsoft and branded as the $9,000 and up Surface Hub.

As Han himself noted at its debut in 2007, "iPhone is absolutely gorgeous, and I've always said, if there ever were a company to bring this kind of technology to the consumer market, it's Apple."

Similarly, depth sensing cameras, the focus of Apple's newest work (first introduced with iPhone 7 Plus last year and expanding on iPhone 8 Plus and the upcoming iPhone X), had already been experimented with in products ranging from the living room scale of Xbox Kinect to specially outfitted tablets associated with the Occipital Structure Sensor or Google's Project Tango.

But rather than being a fad game controller or an experimental niche tool, Apple's multiple forays into depth capture have brought the concept of distance-aware imaging into daily use by tens of millions of users, and will soon radically change how we use our mobile devices, from how we authenticate to how we communicate, visualize data and capture images and video.

Here's a look at how Apple's depth imaging strategy has been playing out over the past year, and where it's headed in the future.

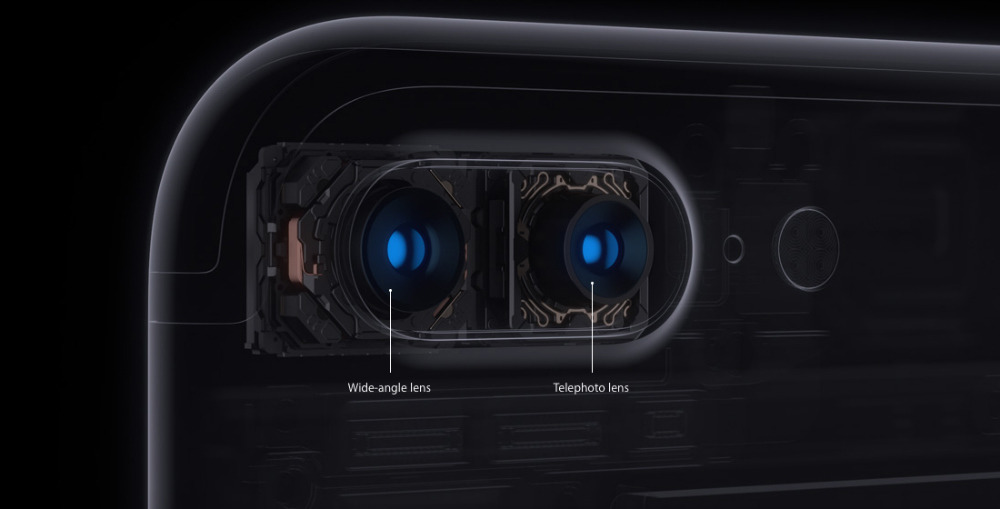

iPhone 7 Plus: Dual Lenses with differential depth capture

Last year's iPhone 7 Plus introduced dual cameras with independent lenses. In retrospect, this feature (exclusive to the Plus model) not only attracted more iPhone buyers to the larger, more expensive Plus (earning Apple more money) but also enabled Apple to introduce a new technology faster than it could have if it had only one new iPhone model each year and was forced to compromise on the features it could build into a single product.

Previous generation iPhone 6/6s Plus models had similarly retained an exclusive camera feature that set them apart from the standard-sized, regular-priced iPhone: Optical Image Stabilization. OIS uses a ring of incredibly precise micro-motors around the lens that rapidly shift its position in tandem with data from motion sensors in order to counteract hand shake.

In particular, OIS helps to capture better photos in low light situations, where the camera aperture needs to be open as wide and for as long as possible, making it especially sensitive to movement. However, OIS is not as visible a feature and is harder to demonstrate than the zoom of iPhone 7 Plus.

The dual camera of iPhone 7 Plus ventured further into the potential for meaningfully enhancing photographs. It presented two lenses, designating one as the standard wide angle lens and the other as a 2x zoom lens, allowing you to get closer to a subject without losing image quality, whether taking a photo, movie, slo-mo, time-lapse or even a panorama.

The two lenses have independent camera sensors, but they can also work together. This enables smooth, incremental zooming that passes from the standard to the 2x optical camera sensor as the user adjusts the zoom level.
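The handoff between the two cameras can be pictured with a minimal sketch. This is an assumption for illustration, not Apple's actual logic: the function name `pick_camera` and the rule of switching exactly at 2x are hypothetical, and a real system may also weigh light level and focus distance.

```python
TELE_FACTOR = 2.0  # optical magnification of the second lens, per the article's "2x"


def pick_camera(zoom):
    """Return (camera, digital_crop) for a requested zoom factor.

    Hypothetical rule: below 2x, crop the wide camera digitally;
    at 2x and above, switch to the tele camera and crop only the remainder.
    """
    if zoom < 1.0:
        raise ValueError("zoom must be at least 1.0")
    if zoom < TELE_FACTOR:
        return ("wide", zoom)
    return ("tele", zoom / TELE_FACTOR)


for requested in (1.0, 1.5, 2.0, 3.0):
    print(requested, pick_camera(requested))
```

Under this rule a 3x request uses the tele camera with only a 1.5x digital crop, which is why image quality holds up better than cropping the wide camera by 3x.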

At its debut, Apple also teased an upcoming feature designed to draw dramatic attention to the subject using a bokeh effect. It would blur the background in the style of SLR cameras outfitted with a lens capable of capturing a narrow depth of field, putting the Portrait subject in the foreground into dramatic focus while the background fades away.

It turned out to be a hit, as people loved the way it made their kids, pets and friends look as important in photos as the subjects felt to the photographer capturing them. Unlike the comical lens effects of Photo Booth or Snapchat, iOS 10 Portrait mode captured realistic, high quality images with a dramatic flair that not only flattered the subject but also made the photographer feel confident in their ability to capture great photos as well.

How Apple was capturing these Portrait images wasn't discussed in detail until WWDC17 this summer, when the company revealed that Portrait Mode wasn't the only trick the dual lens camera of iPhone 7 Plus could do. Apple explained that in Portrait mode, the two lenses are both configured to capture an equivalent 2x image, each grabbing a slightly different angle of the subject.

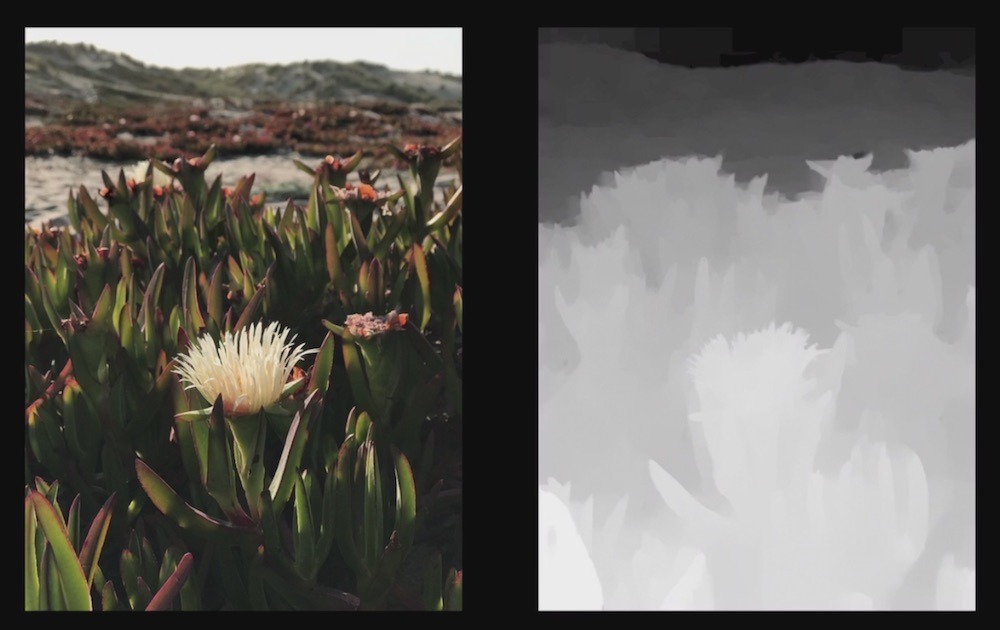

Differential depth processing compares the differences of points in the two images to determine whether objects are near to the camera or far away in the background. Using it, the two image sources can be combined to create a depth map, a sort of 3D topographical layer of metadata that is paired with the original photo. The Portrait feature uses this data to identify which parts of the photo are far in the background, and can apply a blur effect to those areas.
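The geometry behind this can be sketched with textbook stereo triangulation, where a point's shift between the two images (its disparity) is inversely proportional to its distance. This is a simplified illustration, not Apple's pipeline: the focal length, lens spacing and disparity values below are made up, and the function names are hypothetical.

```python
def depth_from_disparity(disparity_px, focal_px, baseline_m):
    """Pinhole stereo triangulation: depth = focal * baseline / disparity."""
    if disparity_px <= 0:
        return float("inf")  # no measurable shift: treat the point as infinitely far
    return focal_px * baseline_m / disparity_px


def depth_map(disparities, focal_px, baseline_m):
    """Convert a 2D grid of per-pixel disparities into a grid of depths in meters."""
    return [[depth_from_disparity(d, focal_px, baseline_m) for d in row]
            for row in disparities]


def background_mask(depths, threshold_m):
    """True where a pixel lies beyond the threshold, i.e. a candidate for blurring."""
    return [[z > threshold_m for z in row] for row in depths]


# Assumed values: a large shift means a near subject, a small shift a far background.
disparities = [[20.0, 20.0, 2.0],
               [20.0, 10.0, 0.0]]
depths = depth_map(disparities, focal_px=2000.0, baseline_m=0.01)
mask = background_mask(depths, threshold_m=1.5)
print(depths)
print(mask)
```

The mask plays the role the article describes for Portrait mode: it marks which regions are distant enough to receive the blur.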

New in iOS 11, third parties can now use Apple's public Depth API to take a photo shot in Portrait and perform their own processing on specific layers. A simple example: desaturating the background so only the subject is in color.

Effectively any existing photo filter could be selectively applied to various depth layers of the image, at any threshold of depth. Portrait mode bokeh was quite literally the tip of the iceberg in terms of what depth capture can do.
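As a rough sketch of that idea, the snippet below applies an arbitrary per-pixel filter only to pixels beyond a chosen depth, using the desaturation example. It is plain Python on a toy image and does not use Apple's Depth API; the names `apply_filter_by_depth` and `to_gray` and the sample values are hypothetical.

```python
def to_gray(rgb):
    """Desaturate one pixel using the common Rec. 601 luma weights."""
    r, g, b = rgb
    y = round(0.299 * r + 0.587 * g + 0.114 * b)
    return (y, y, y)


def apply_filter_by_depth(pixels, depths, threshold_m, filter_fn):
    """Apply filter_fn only to pixels whose depth exceeds threshold_m."""
    out = []
    for pixel_row, depth_row in zip(pixels, depths):
        out.append([filter_fn(rgb) if z > threshold_m else rgb
                    for rgb, z in zip(pixel_row, depth_row)])
    return out


# Toy 1x2 image: a reddish subject at 0.8 m and a green background at 4 m (assumed).
pixels = [[(200, 50, 50), (10, 200, 30)]]
depths = [[0.8, 4.0]]
result = apply_filter_by_depth(pixels, depths, threshold_m=2.0, filter_fn=to_gray)
print(result)
```

Because the filter is passed in as a function, swapping `to_gray` for a blur, tint or any other effect changes the look without touching the depth logic, and moving `threshold_m` changes which layer it lands on.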

In his WWDC17 presentation "Image Editing with Depth," Apple's Etienne Guerard showed how the depth map created by the rear dual camera could be visualized in 3D, and used to selectively apply filters or other effects to various parts of the photo.

By developing and highlighting one specific type of effect for Portrait Mode that worked really well, looked cool, was grounded in the real, creative world of SLR photography and was therefore of obvious value to iPhone users, Apple effectively marketed Portrait Mode as a compelling reason to buy iPhone 7 Plus.

If Apple had introduced the Plus dual camera as an experimental toolbox of various controls to create myriad options, most users would likely have ignored it all as gimmicky complexity that in many cases just resulted in a doctored-looking image.

iPhone 8 Plus: Portrait Lighting using A11 Bionic

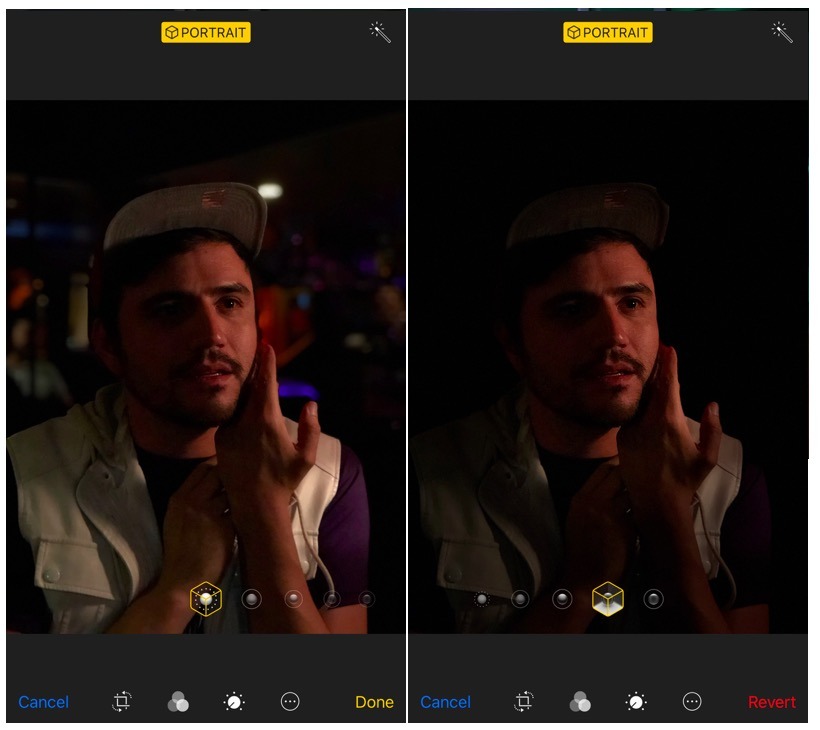

In iOS 11, on iPhone 8 Plus and iPhone X, depth maps from the dual rear cameras are more detailed. The advanced new processing capacity of the A11 Bionic incorporates Apple's custom Image Signal Processing core used to analyze sensor data from the cameras. Its new Neural Net processing is specifically used to recognize and understand objects, faces and bodies as they move in a scene.

With this new processing power, the latest phones' Portrait Lighting feature lets users capture an image and apply not only the background bokeh effect but also an intelligent lighting effect on the foreground. It can simulate a flattering light bounce card to highlight the subject's face, add more dramatic lighting to frame their jawline, or fully black out the background to isolate the subject from distractions entirely, giving them the appearance of being photographed on a studio stage in front of a black backdrop.

Again, rather than introducing a wide open toolbox of infinite depth options, Apple did the work to find a set of Portrait Lighting effects that would benefit a wide variety of users taking photos across a spectrum of circumstances, all inspired by professional photography and studio portrait artists.

Other vendors out of their depth

Other smartphones have been experimenting with dual rear cameras for years. Way back in 2011 (as Apple showed off Siri on iPhone 4s) HTC and LG were showing off stereoscopic cameraphones that could capture 3D images and display them in 3D on the screen without requiring glasses. In parallel, 3D marketing hype hit HDTVs for months before consumers decided that they weren't really interested in the dizzying effects of 3D. Nobody is making 3D smartphones anymore.

Years later in 2014, the HTC One M8 debuted a dual camera system that could take two images, mix them to calculate a depth map, and use this to blur backgrounds or digitally refocus the image. Pocket Lint observed, "the effects were rather gimmicky and the benefits of having a dual camera didn't really make an impact."

Early last year LG introduced dual lenses, with one serving as a wide angle lens and the other being super wide to fit even more into the photo. However, the lens focal depth is fixed, making it impossible to use both together as a hybrid camera. Also, while a wide angle lens can be useful, a zoom is generally more practical.

Wide angle shots can be digitally simulated by taking a panorama; a 2x zoom panorama lets you create less distorted panos. It's therefore easier to functionally simulate a wide angle lens than a zoom lens (digital zoom sacrifices photo quality while a panorama can capture even greater detail than a standard photo).

Huawei recently collaborated with Leica to deliver a phone with two cameras, one dedicated to color data and one to monochromatic detail, in an attempt to mimic human vision to deliver better photographs. It's not clear that this approach really delivers improved photos, however. A better approach seems to be enhancing the logic that handles focus, an area where Apple has (ahem) focused much of its efforts. Again, having two identical cameras with the same lens means you don't get zoom or wide angles.

Pixel 2: one camera better than two! At least for Google

Google's latest vanity Pixel 2 phone project mimics Apple's iPhone 7 Plus Portrait mode in creating a simulated bokeh effect using a single camera. CNET states that the phone uses a "dual-pixel design, which means that every pixel is split into two; there's a left and right sensor that capture a left and right photo."

Of course, physics and geometry mean that a single camera sensor would capture less accurate depth information than two cameras positioned further apart. It appears that Google is actually just recognizing a face and estimating what regions of hair and body are connected to it, defining these as the foreground and blurring the rest.
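The geometric point can be made concrete with the standard stereo error estimate, in which depth uncertainty grows with the square of distance and shrinks as the two viewpoints move apart. The baselines below (roughly 1 cm for two lenses, roughly 1 mm for the two halves of a split pixel) and the other numbers are assumptions chosen for illustration, not published specifications of any phone.

```python
def depth_error(depth_m, focal_px, baseline_m, disparity_error_px=0.25):
    """Approximate stereo depth uncertainty: dZ = Z^2 * dd / (f * B)."""
    return depth_m ** 2 * disparity_error_px / (focal_px * baseline_m)


# Same subject distance and focal length; only the viewpoint spacing differs.
dual_camera = depth_error(2.0, focal_px=2000.0, baseline_m=0.010)  # assumed ~1 cm
dual_pixel = depth_error(2.0, focal_px=2000.0, baseline_m=0.001)   # assumed ~1 mm

print(f"dual camera: about {dual_camera:.2f} m of uncertainty at 2 m")
print(f"dual pixel:  about {dual_pixel:.2f} m of uncertainty at 2 m")
```

With everything else held equal, a tenfold smaller baseline gives a tenfold larger depth error, which is the sense in which a single split sensor has less geometry to work with than two separated lenses.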

The report cited Mario Queiroz, Google's GM and VP of phones, as saying that there was "nothing we're missing for that feature set with that camera," but in using a single rear camera and lens, it does not offer an optical zoom lens like iPhone 7/8 Plus models or the wide angle lens like LG's phone. It also does not attempt to perform more complex effects like the subject Portrait Lighting of iPhone 8 Plus.

It remains to be seen how Pixel's Portrait mode compares to last year's iPhone 7 Plus. But it appears that the real point of the single camera design is simply to make the phone cheaper for Google to manufacture. That comes at the expense of optical zoom and of creative effects applied not just to static photographs but also to video, where iOS 11 can perform depth processing.

In addition to the rear dual cameras of iPhone 7 Plus and iPhone 8 Plus, Apple is also pursuing a series of efforts with machine learning and intelligent computer vision tools that work across all iOS 11 devices, along with even more advanced depth image capture specific to the upcoming iPhone X, which the next article segment details.