Apple's Animoji may gain more ways to interact with the face-tracking animated characters, with appropriate sound effects for each avatar played when users either make specific expressions or say certain words during a recording session.

Launched alongside the iPhone X as a demonstration of what people can do with the TrueDepth camera system, Animoji quickly became a popular addition to iOS that spawned commercials and "Animoji Karaoke" videos across the Internet. While Apple has added more interactivity to the face-tracked creations, including tongue detection and user-created Memoji, it seems that Apple has more ideas of where Animoji can go.

A patent application filed on February 28 and published by the U.S. Patent and Trademark Office on November 22, titled "Voice effects based on facial expressions," describes how a video clip of a virtual avatar is created and can then be altered with special effects that the user triggers. In short, Animoji with extra features.

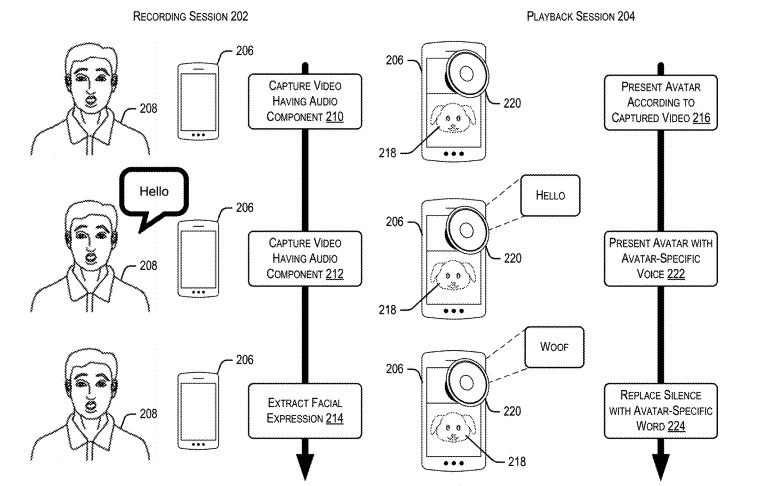

During the recording phase of the Animoji message, the software captures the facial movements and audio from the subject. Usually, the face tracking is mapped directly to the character, with the user's movements mirrored as closely as possible, while the audio track is exactly what was picked up by the iPhone during the recording.

According to the patent application, both the visual and audio elements are monitored for specific states, and the matching portions of the recording can then be changed to something else during the playback phase. These states can include detected facial expressions, such as frowning, or specific spoken words, either of which could trigger an effect.

Once triggered, the effect is applied to the character's visuals or to the audio track. The character could go through a predefined animation or be altered in certain ways while the expression is being made, and accompanying speech could be pitch shifted or replaced entirely.

For example, a person using a dog avatar could say the word "bark," resulting in the playing of an audio file of a dog barking and altered mouth shapes on the character to match. A user frowning and growling could transform a pleasant dog character into an angry version, with a pitch-shifted or replaced growling sound.
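
To make the trigger-and-effect idea concrete, here is a minimal Python sketch of how detected states from a recording might be matched to per-avatar effects at playback. It is an illustration only, not Apple's implementation: the `Event` and `Effect` types, the `RULES` table, the `plan_playback` function, and the file and animation names are all hypothetical, and the sketch assumes that expression and word detection has already produced a list of timestamped events.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """A state detected during recording (hypothetical structure)."""
    time: float   # seconds from the start of the recording
    kind: str     # "expression" or "word"
    value: str    # e.g. "frown" or "bark"


@dataclass(frozen=True)
class Effect:
    """What to change at playback (hypothetical structure)."""
    visual: str   # animation or avatar variant to show
    audio: str    # sound file to play in place of the recorded audio


# Hypothetical per-avatar rules, modeled on the dog example in the article.
RULES = {
    "dog": {
        ("word", "bark"): Effect(visual="barking_mouth", audio="dog_bark.wav"),
        ("expression", "frown"): Effect(visual="angry_dog", audio="growl.wav"),
    },
}


def plan_playback(avatar, events):
    """Return (time, Effect) pairs for each detected event that matches a rule."""
    rules = RULES.get(avatar, {})
    planned = []
    for event in sorted(events, key=lambda e: e.time):
        # Words are matched case-insensitively; unmatched events pass through untouched.
        effect = rules.get((event.kind, event.value.lower()))
        if effect is not None:
            planned.append((event.time, effect))
    return planned


# Example recording: one ordinary word, one trigger word, one trigger expression.
recording = [
    Event(0.5, "word", "hello"),
    Event(1.2, "word", "Bark"),
    Event(2.0, "expression", "frown"),
]

for time, effect in plan_playback("dog", recording):
    print(f"{time:.1f}s: show {effect.visual}, play {effect.audio}")
```

Keeping the rules in a table keyed by avatar reflects the article's point that effects are specific to each character: the same word or expression produces no effect for an avatar that has no matching rule.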

The voice recording in its entirety could be replaced by a synthesized voice in some cases, with voice recognition detecting individual words, pitch, and cadence that can then be reproduced in a character-specific voice.

The filing of a patent application is not a guarantee that the concept will appear in a future Apple product or service, though it does serve as an indicator of where Apple's interests lie. In this case, the concept has a good chance of being implemented in a future iOS update.

Animoji already takes advantage of the facial tracking elements of TrueDepth camera-equipped iPhones, making it feasible for Apple to add in emotion recognition. Apple's voice recognition capabilities, as already demonstrated by the existence of Siri, also lend themselves to the audio-altering elements of the patent application.