A future HomePod audio system may take a nearby user's position into account when playing sound, including creating separate stereo experiences for individuals in different places, and automatically adjusting the audio or even turning it off if the listener moves out of the room.

The HomePod is already a sophisticated smart speaker, taking advantage of its A8 processor to perform a considerable amount of audio processing to create the optimal listening experience for an environment. The speaker is capable of fine-tuning its output to best suit a room's architecture and furnishings, filling the area with even sound.

While this is impressive in its own right, Apple believes that the listening experience can be improved if an audio device can determine where listeners are in relation to it. Knowing the position of a listener, or the positions of multiple listeners, opens up the possibility of creating even better sound for each individual.

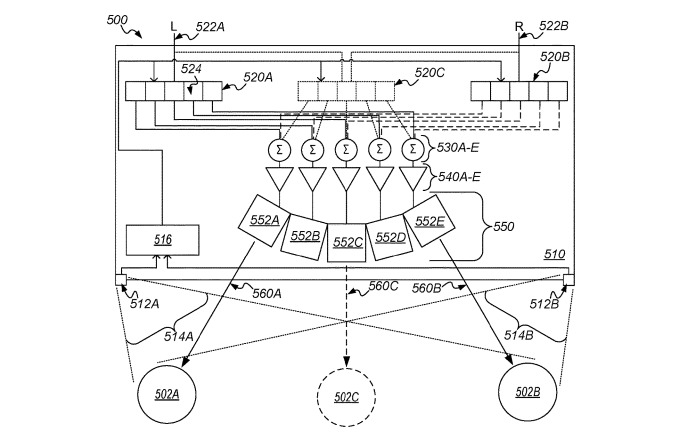

A patent granted by the U.S. Patent and Trademark Office to Apple on Tuesday for a "Multi-listener stereo image array" defines a system where multiple people can experience a stereo audio effect, regardless of where they are seated.

Current stereo setups consist of two speakers providing left and right channels, but outside of the optimal position equidistant between the two speakers, users will typically hear one speaker louder than the other, which can diminish the effect. While this can be mitigated by changing the volumes of each speaker, this doesn't help in situations where there are multiple listeners in varying positions.

Apple's solution is a speaker array consisting of a set of drivers configured to collectively generate a separate audio signal pattern for each listener, shaped for each individual based on their position, and transmitted specifically to that listener but not to others.

The highly directional audio transmission limits who can hear a specific output, so only those who are intended to hear that audio will do so, with two sets for the left and right channels providing a complete stereo experience. Other listeners would not be able to hear the audio intended for another user, but would instead receive their own transmissions.
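
The article's summary of the patent does not say how the drivers would be coordinated. One well-known way to aim sound from an array is delay-and-sum beam steering, sketched below purely as an illustration. The function name `steering_delays`, the evenly spaced straight-line layout, and the far-field listener are all assumptions for this example, not details from Apple's filing.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # approximate speed of sound in air at about 20 °C


def steering_delays(num_drivers, spacing_m, angle_deg):
    """Per-driver delays (seconds) that aim a straight, evenly spaced array.

    Hypothetical helper for illustration only. The angle is measured from
    straight ahead of the array; positive angles steer toward the
    higher-numbered drivers. Assumes the listener is far from the array.
    """
    if num_drivers < 1 or spacing_m <= 0:
        raise ValueError("need at least one driver and a positive spacing")
    sin_theta = math.sin(math.radians(angle_deg))
    # Drivers nearer the target direction fire later so that every
    # driver's wavefront arrives at the listener at the same moment.
    raw = [n * spacing_m * sin_theta / SPEED_OF_SOUND_M_S
           for n in range(num_drivers)]
    earliest = min(raw)
    # Shift so that no driver needs a negative delay.
    return [t - earliest for t in raw]
```

Under these assumptions, a system serving several listeners would compute one set of delays per listener and per channel, then mix the delayed signals at each driver.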

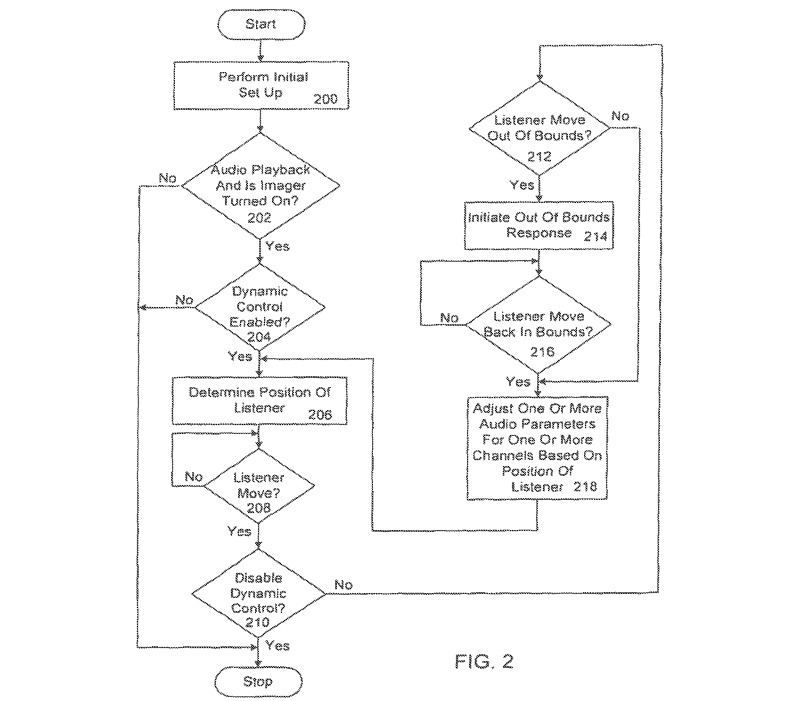

In a second patent, "System and method for dynamic control of audio playback based on the position of a listener," Apple envisions a system where a multi-channel audio system is able to determine where the user is, and to adjust its audio to provide an optimal experience.

Again relying on the idea of there being a perfect position among speakers for listening, Apple suggests the point of perfect playback can be adjusted by changing the volume of speakers and other elements, to compensate for shifts in position by the user.
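
As a rough illustration of that kind of compensation, the sketch below assumes a simplified model that the patent summary does not spell out: each speaker behaves as a point source whose level falls off in proportion to distance, and the nearer speaker is both turned down and slightly delayed so the two channels reach the listener matched. The function name `balance_for_listener` and the coordinate layout are hypothetical.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # approximate speed of sound in air at about 20 °C


def balance_for_listener(left_pos, right_pos, listener_pos):
    """Return ((left_gain, right_gain), (left_delay_s, right_delay_s)).

    Positions are (x, y) tuples in meters. Hypothetical helper that
    assumes free-field, point-source speakers (level proportional to
    1 / distance); a real room would need measured corrections.
    """
    d_left = math.dist(left_pos, listener_pos)
    d_right = math.dist(right_pos, listener_pos)
    if min(d_left, d_right) <= 0:
        raise ValueError("listener cannot be at a speaker position")
    d_far = max(d_left, d_right)
    # The farther speaker keeps full gain; the nearer one is attenuated
    # so both arrive at the same level.
    gains = (d_left / d_far, d_right / d_far)
    # The nearer speaker is delayed so both arrive at the same time.
    delays = ((d_far - d_left) / SPEED_OF_SOUND_M_S,
              (d_far - d_right) / SPEED_OF_SOUND_M_S)
    return gains, delays
```

With speakers at (0, 0) and (3, 0) and a listener at (3, 4), the left speaker is 5 meters away and the right is 4 meters away, so this model keeps the left channel at full gain and scales the right to 0.8.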

Using an imaging device like a camera, the system determines the user's position relative to the audio system and automatically adjusts a variety of audio parameters. This can be continually updated as the user moves around, giving as near to optimal audio as possible.

The system could also be set up to perform specific playback-related actions if the user is in specific places relative to the device. For example, if the user travels out of range for the system to function properly, or out of view entirely, playback of media could be paused or stopped completely, making it a useful mechanism for those who are watching a movie but are called away for a moment and don't want to miss what's happening onscreen.
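
The pause-when-away behavior could be sketched as a small state tracker. Everything here is an assumption made for illustration: the class name `PresenceGate`, the range limit, and especially the grace period, which is added so that a single dropped camera frame does not interrupt playback. The patent summary describes none of these specifics.

```python
class PresenceGate:
    """Decide between "play" and "pause" from per-frame listener distance.

    Hypothetical sketch. distance_m is None when the camera cannot see
    the listener at all.
    """

    def __init__(self, max_range_m=6.0, grace_frames=3):
        self.max_range_m = max_range_m
        self.grace_frames = grace_frames
        self._missed = 0  # consecutive frames with no listener in range

    def update(self, distance_m):
        in_range = distance_m is not None and distance_m <= self.max_range_m
        if in_range:
            self._missed = 0
            return "play"
        self._missed += 1
        # Only pause once the listener has been gone for long enough.
        if self._missed >= self.grace_frames:
            return "pause"
        return "play"
```

Feeding the gate one distance reading per camera frame would keep playback running through brief tracking glitches and pause it once the listener has clearly left.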

It could also be feasible for a system to turn down the volume if the user gets close to the speakers, as a measure to protect the user's hearing. The ability to determine the user's head position and angle could also be used to fine-tune the audio further, such as making one channel louder than the other if a user's ear is partially obscured.
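
A hearing-protection limit of this kind might look like the following sketch, which caps the requested volume as the listener approaches. The function name `capped_volume`, the distance thresholds, and the linear ramp between them are hypothetical choices for this example; the patent summary gives no figures.

```python
def capped_volume(requested, distance_m, near_m=0.5, far_m=1.5, near_cap=0.3):
    """Limit a 0.0-1.0 volume setting based on listener distance.

    Hypothetical sketch: at or beyond far_m there is no limit, at or
    inside near_m the volume is capped at near_cap, and the cap ramps
    linearly in between.
    """
    if not 0.0 <= requested <= 1.0:
        raise ValueError("requested volume must be between 0.0 and 1.0")
    if distance_m >= far_m:
        cap = 1.0
    elif distance_m <= near_m:
        cap = near_cap
    else:
        t = (distance_m - near_m) / (far_m - near_m)
        cap = near_cap + t * (1.0 - near_cap)
    # Never raise the volume above what the user asked for.
    return min(requested, cap)
```

Because the function only ever lowers the requested level, a listener who already has the volume set low is unaffected by moving closer.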

Apple files numerous patent applications with the USPTO on a weekly basis. While the filings are no guarantee that Apple will use the concepts described in a future product or service, they do indicate areas of interest for the company.

The idea of knowing more about the listening environment to adjust playback has been explored in an earlier patent, one that suggested the use of three-dimensional sensor data to create a depth map of a room. Scanning the environment visually could provide clues as to what the optimal acoustic settings could be, as well as triggering a readjustment of those same settings if new items are added or elements removed from the room.

The same system would feasibly be able to perform some gesture recognition, allowing the user to control music playback with their hands and fingers while sitting down, rather than using a separate device, pressing buttons, or disturbing others by commanding Siri.