Apple's Siri is always said to lag behind its competitors, but Apple has made giant strides with it. Even so, there are a few key things we still long to see added.

The received wisdom is that Siri lags behind Amazon Alexa and Google Home, but received wisdom has a problem. Whatever it's about, the opinion tends to be quickly formed and it takes a very long time to change it. So right now Siri has zoomed ahead with the advances in iOS 13 and iPadOS, but it's going to take time for that to really register.

That's partly because it's going to be months before we all have the final versions on our iPhones and iPads. It's also because it's then going to take us time to really experience the differences.

It's especially so because some of those differences are a direct result of improvements to Siri Shortcuts and that's nowhere near as mainstream as Alexa is.

And it's also the case that Siri can go further, that there are things we'd like it to change, areas we'd love it to improve in. Yet while this may be us reading too much into it, some of this year's improvements even lay the groundwork for these areas.

The wish list

If there is one thing that would make Siri better, it's fixing how much of an interruption it is. The most visible part of that is on an iPhone or iPad when you invoke Siri and immediately lose the whole screen to that black background and, admittedly, gorgeous waveform.

Oddly, this is one area where Siri is best on the Mac.

As much as we all wanted to get Siri on the Mac, once we had it, we realised we were usually typing on these machines and it was quicker to do that than to ask Siri for things.

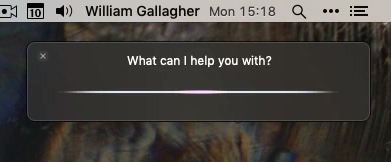

Only, when you do ask Siri on a Mac, the good part is how it slides in like a notification. Ask what you want to ask, and Siri displays the results in a list. The results are the same as if you asked on an iPad and the list looks just as it would on an iPhone, but it doesn't take up the whole screen.

That means both that you can be reading something else on the display as you talk to Siri, and that you can drag a result out. If your Siri search turns up a document, just drag it out to Word or the desktop. You're only dragging an alias, but you're able to drag it where you want.

Once Siri on the Mac has found what you're looking for, you can drag an alias of it out to anywhere you like.

It would be good to have that facility in iPadOS too, where you have the room to see a similar Siri notification and drag items off into Files.

Get on with it

Even though this is already less intrusive on a Mac than on iPad or iPhone, it's still an interruption.

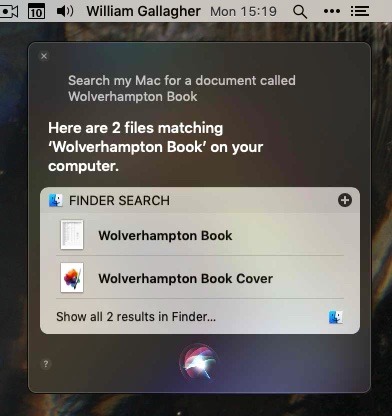

Whatever you do with Siri, whichever device you're using, the procedure is the same. You stop what you're doing, invoke Siri, ask what you want, and wait for the reply.

Sure, you often have a supplementary step when you have to ask it again because something wasn't understood.

Even when it works, though, it is still a discrete, specific step. It is possible on the Mac to keep typing as you talk to Siri, but it's a contortion. You have to click back into your word processor, whether you've clicked on the Siri icon or you have a Mac that responds to "Hey, Siri."

What we'd like is to see us all being able to just talk to Siri as we go. To carry on typing notes during a phone call as we ask it to add a meeting to our calendar, for instance.

Every. Single. Time. These are the four steps of using Siri and how much it interrupts your work as you use it.

It would be good, too, if Siri were just slightly cleverer about figuring out which device you're talking to. The HomePod seems to have bionic ears, for instance, and will pick us up calling for Siri when we're in the next zip code.

When we're at a desk with a Mac, an iPhone, a HomePod, an iPad and our Apple Watches, that HomePod will usually think we're talking to it. However, the iPhone will react too. It's only for a moment, but the iPhone screen lights and then goes dark.

That's distracting when you did mean to use your HomePod, and it's infuriating when you meant to use the iPhone.

Let Siri be more subtle, with no screens reacting until the right device has been recognized. We already have the issue that we've spoken into the air asking for Siri and then realised we're in the bathroom without any devices. Let's have it so that we never mentally link Siri to any device; we just know it's with us.

Getting there

We are on the way to that. Apple's AirPods 2 added the ability to listen out for the phrase "Hey, Siri," but they took something away, too. They took away the bleep. With the original AirPods, not only did you have to tap to get Siri to work, but you waited for the bleep that told you to start speaking.

Now you simply speak and it isn't just quicker and more convenient, it actually changes how you talk to Siri. We had trained ourselves to wait for the bleep, to hold that thought for half a moment, and then to race to say what we wanted in case the ending bleep came.

That became more natural, more second nature, than we'd have thought, but one thing that does not get any less cumbersome is having to say "Hey, Siri," so many times.

We would love Apple to ditch that, to let devices listen to us — while retaining our privacy — and understand when we meant Siri to do something.

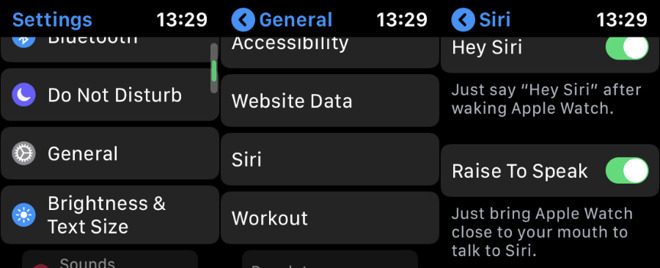

How to set up Raise to Speak on Apple Watch. The dramatic arm-flinging pose you have to use is not pictured.

And of course Apple has already done this. If you have a Series 3 or 4, and watchOS 5 or later, you have Raise to Speak. In theory, you just have to lift your wrist and begin speaking. The very action of moving your wrist is interpreted as your having said "Hey, Siri."

If only it worked. Right now you have to strike a pose like you've just completed an Argentine tango, or you're about to go into battle behind your Captain America shield.

Conversational Siri for Beginners

For now, we have to keep saying "Hey, Siri" and we still have to be too conscious of our speaking rate. It is fantastic that we can say things like: "Hey, Siri, add Pepsi Max to our shopping list in OmniFocus," and it will do it. Except when it doesn't. Saying precisely those words in that sequence either gets you what you want, or it has Siri trying to add to your Apple Reminders app instead.

However, just as AirPods have become more natural because they lack the old bleep, so apps are about to become more natural because of the specific advances in Siri for iPadOS and iOS 13.

Developers, such as the Omni Group which makes OmniFocus, have now been given new tools to work with Siri and their apps. An app developer can do what's called donating a shortcut to Siri.

If you use an app to add an item to your shopping list, that app can then create a Shortcut for that action and donate it to Siri. It depends on the app developer, but via shortcuts, they can make Siri work with their apps better than ever before.

And Apple has also created what it's calling Conversational Shortcuts.

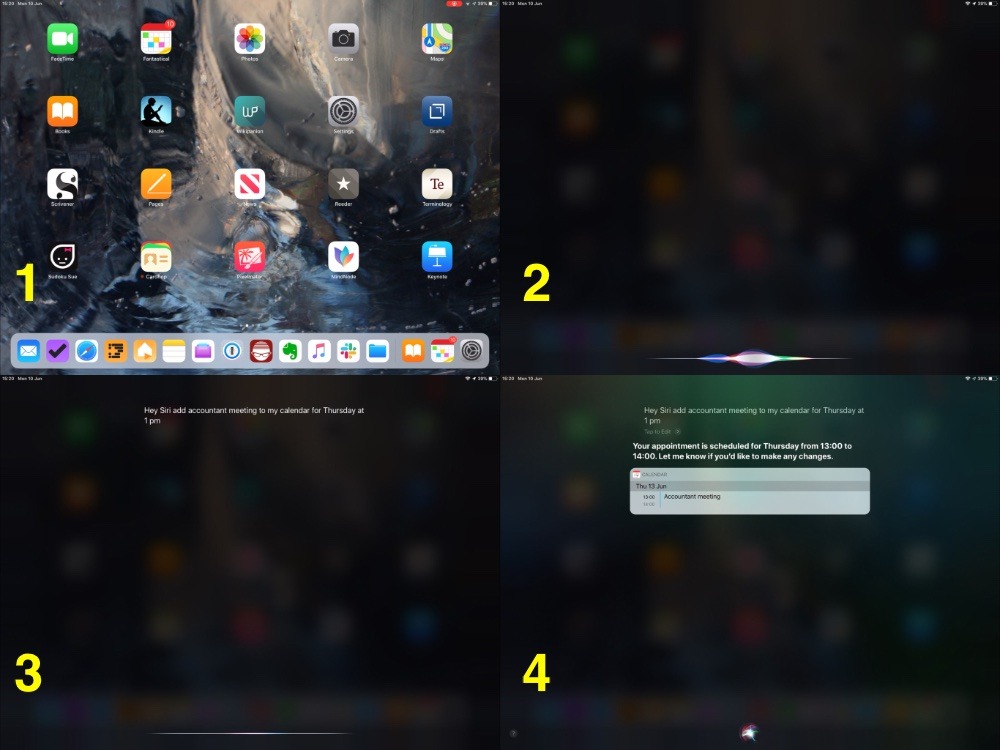

This means that you will be able to start a Siri Shortcut by speaking, and the Shortcut will then be able to have Siri ask you follow-up questions.

Right now that is being positioned as something to improve Siri Shortcuts and to give developers more they can do with this system. However, most users will not bother with the distinction between Siri and Siri Shortcuts. They'll just know that now you can converse more with Siri to get things done.

We'll only really appreciate that when we and developers have had these improvements for a while. Yet added to Apple's promise of an even more natural-sounding voice for this assistant, Siri is moving ahead tremendously.

And of course we now have all the more reason to think that it's going to get better and better. The fact that iPadOS has been formed out of the ribs of iOS 13 is great, but the fact that its first iteration brings more productivity features is superb.

Craig Federighi even replied directly to an email from an iPad user about improving Siri on it.

Craig replied me back!

— Juliano Rossi (@_JulianoRossi) 9 June 2019

The mail was about my request for Siri on iPad to behave like on the Mac, without filling the entire screen.

Well, maybe next year. pic.twitter.com/7xIgxDB7hf

The tweet about that email was first reported by MacRumors, though you can take a line like "we'll consider it" too seriously. Whether Federighi was just being polite or not, though, Apple is getting the message that Siri and the iPad are important to us all.
