Alongside its work on tools for building Augmented Reality and Virtual Reality experiences, Apple is also looking at how those experiences can be recorded, replayed, or transmitted to others.

Apple has been working on how to create both Augmented Reality and Virtual Reality for many years, but a new patent application shows it's also looking at recording. A fully recorded AR/VR experience could be documented and replayed later, according to Apple.

It's already possible to record live streams of, for instance, gameplay, but Apple's aim is to capture both the computer-generated elements of an experience and the real-world ones.

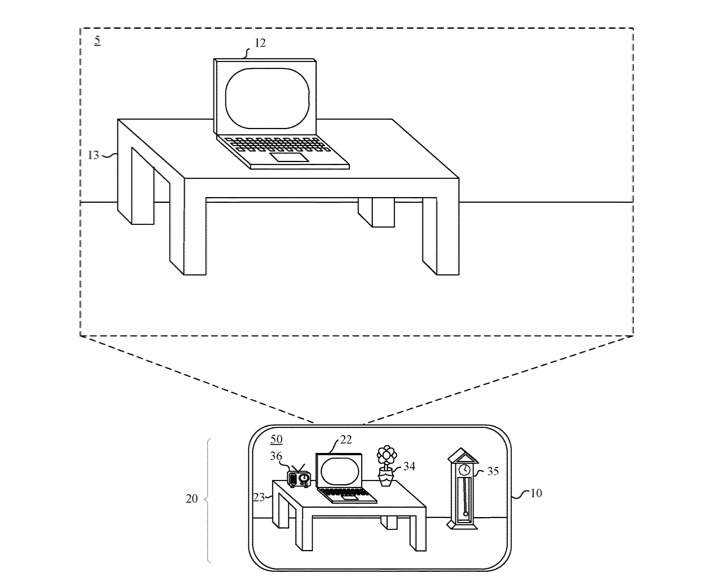

Augmented Reality, in particular, combines real-world environments with digitally added elements. Apple refers to the combination of this, virtual reality, and any real-world interaction such as audio as "computer generated reality content" (CGR) or "mixed reality" (MR).

"Existing computing systems and applications do not adequately facilitate the recording or streaming of CGR content," says Apple in "Media Compositor For Computer-Generated Reality."

"In contrast to a VR environment," it continues, "which is designed to be based entirely on computer-generated sensory inputs, a mixed reality (MR) environment refers to a simulated environment that is designed to incorporate sensory inputs from the physical environment... [A] mixed reality environment is anywhere between, but not including, a wholly physical environment at one end and virtual reality environment at the other end."

Rather than being a passive Twitch-like stream of whatever the original user was seeing or doing, this could provide a more immersive recording.

"Some implementations provide composited streams that include richer information about the CGR experience than the screen and audio capture information of traditional video recording techniques," continues the application. "The composited streams can include information about the 3D geometry of the real or virtual objects in the CGR experience."

"Including 3D models of real or virtual objects in a composited stream enables an enhanced experience for the viewers of the recording or live streaming, e.g., allowing viewers to experience the scene from different viewpoints than the creator's viewpoint, allowing viewers to move or rotate objects," it says.

Apple's system would allow the viewer of such a recording to move their head to see these different viewpoints, but also to "experience sounds based on their own head orientation, relative positioning to the audio sources, etc."

At the core of the patent application is a series of descriptions of how a device, basically any computer with enough processing power and storage, could capture elements separately but simultaneously. Those elements can then be recombined "to form a composited stream" which includes the whole experience.

"The device obtains a first data stream comprising rendered frames and one or more additional data streams comprising additional data," it says. "The rendered frame content (e.g., 2D images or 3D models) represents real content or virtual content rendered in a CGR experience..."

Apple refers to the computer recording these streams as a "media compositor," which can record multiple data streams and keep them synchronized.
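
As a rough illustration of what keeping streams synchronized could involve, here is a minimal Python sketch that pairs each rendered frame with the most recent sample from a second stream, using timestamps. It is not taken from the patent application: the names `Sample`, `CompositedFrame`, and `composite`, the use of seconds as the time unit, and the "latest sample at or before the frame" rule are all assumptions made for the example.

```python
from bisect import bisect_right
from dataclasses import dataclass
from typing import Any, List, Optional


@dataclass
class Sample:
    """One timestamped item from any stream (hypothetical type)."""
    timestamp: float  # seconds; the unit is an assumption
    payload: Any


@dataclass
class CompositedFrame:
    """A rendered frame bundled with its aligned additional data (hypothetical type)."""
    timestamp: float
    frame: Any
    extra: Optional[Any]


def composite(frames: List[Sample], extras: List[Sample]) -> List[CompositedFrame]:
    """Align two streams that are each already sorted by timestamp.

    Each frame is paired with the latest extra sample whose timestamp is
    at or before the frame's timestamp, or None if there is no such sample.
    """
    extra_times = [e.timestamp for e in extras]
    composited = []
    for f in frames:
        # Index of the last extra sample with timestamp <= the frame's timestamp.
        i = bisect_right(extra_times, f.timestamp) - 1
        extra = extras[i].payload if i >= 0 else None
        composited.append(CompositedFrame(f.timestamp, f.payload, extra))
    return composited


# Illustrative data only: three frames and two head-pose samples.
frames = [Sample(0.0, "f0"), Sample(0.033, "f1"), Sample(0.066, "f2")]
extras = [Sample(0.01, "pose_a"), Sample(0.05, "pose_b")]
result = composite(frames, extras)
```

In this toy run the first frame has no earlier pose sample, so its `extra` field is `None`, while the later frames pick up `pose_a` and `pose_b` respectively. A real compositor would presumably handle more streams, such as audio and 3D geometry, and might interpolate between samples rather than pick the nearest earlier one.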

"A media compositor obtains a first data stream of rendered frames and a second data stream of additional data," it says. "The media compositor forms a composited stream that aligns the rendered frame content with [additional data such as timestamps]."

This patent application is credited to five inventors: Ranjit Desai, Venu M. Duggineni, Perry A. Caro, Aleksandr M. Movshovich, and Gurjeet S. Saund.

Movshovich's previous related patents include one for an "A/V decoder having a clocking scheme that is independent of input data streams."