Apple is looking to add Apple AR technology to the Measure app on iPhone, making it more accurate and automatically annotating objects with their measurements.

The Measure app on iPhone became useful, and more accurate, once LiDAR was introduced. Now Apple has been researching how to bring its Apple AR technology to the app to make it faster and more accurate still.

In the future, pointing your iPhone camera at an object could automatically bring up an on-screen label showing its measurements. It would do that through AR, and in part through Machine Learning models trained on different types of objects.

"Automatic measurements based on object classification," is a newly-revealed patent application. It's concerned with how to determine which object you're interested in, then how measure it accurately.

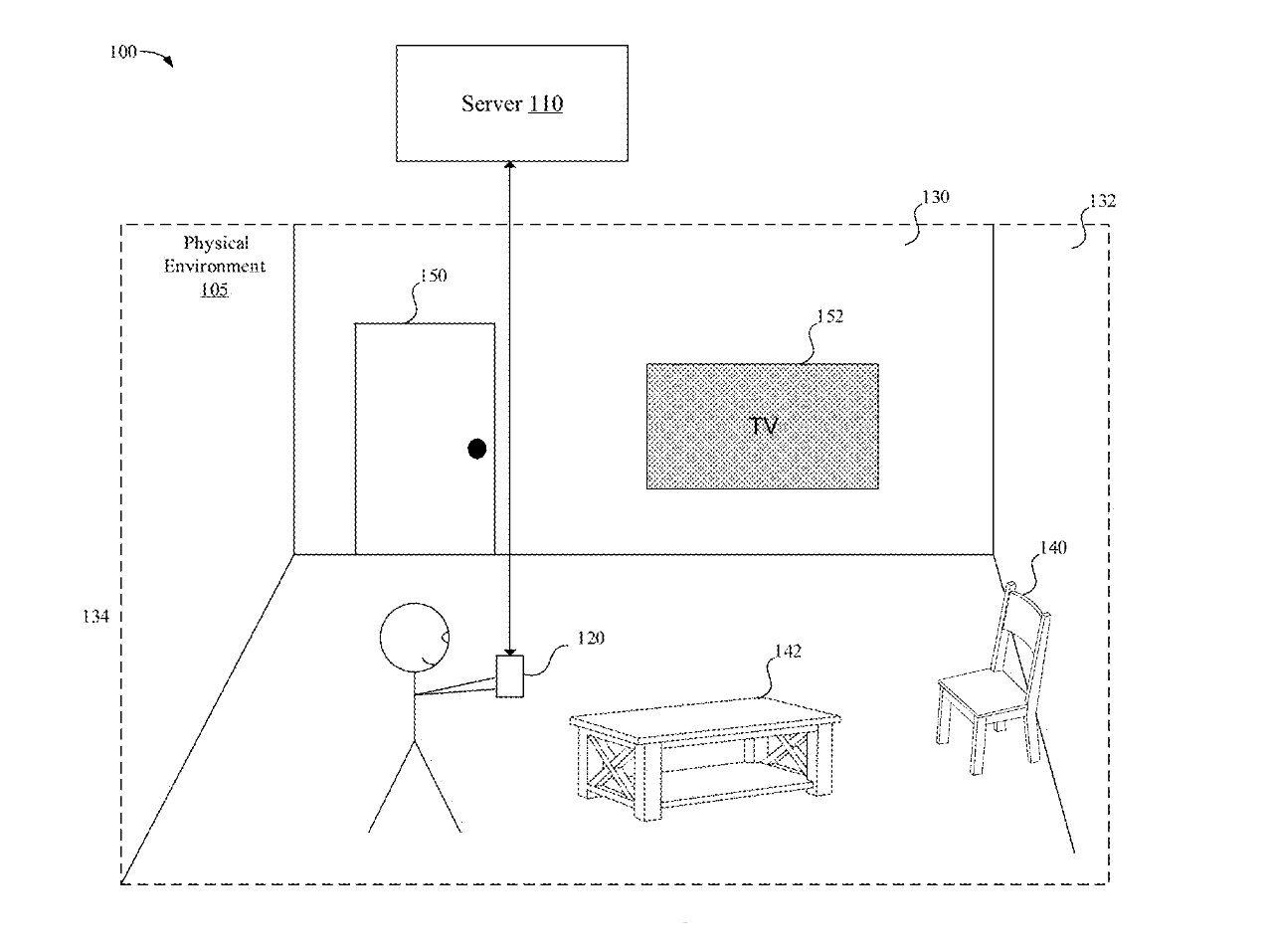

The patent application is particularly broad, including "devices, systems, and methods that obtain a three-dimensional (3D) representation of a physical environment." The detail generated is based on a whole series of different sensors and measurements to do with "depth data and light intensity image data."

"[These] generate a 3D bounding box corresponding to an object in the physical environment based on the 3D representation," says Apple, "classify the object based on the 3D bounding box and the 3D semantic data, and display a measurement of the object, where the measurement of the object is determined using one of a plurality of class-specific neural networks selected based on the classifying of the object."

First, AR will determine what object you're interested in. The patent application doesn't dwell on precisely how that determination is made. Still, you can infer that it's similar to how Portrait mode recognizes you're focusing on the person in the foreground.

"The generation of object detection and object measurements is facilitated in some implementations using semantically-labelled 3D representations of a physical environment," says the patent application. "Some implementations perform semantic segmentation and labeling of 3D point clouds of a physical environment."

However it determines what you are and are not interested in measuring, this future Measure app may use "multiple, different techniques."
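To make the idea of a semantically-labelled point cloud concrete, here is a minimal Python sketch. It is not Apple's method. It assumes every 3D point already carries a class label, which in the patent application would come from semantic segmentation, and the function name `group_points_by_label` and the sample labels are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

Point = Tuple[float, float, float]  # (x, y, z) in metres, an assumed convention


def group_points_by_label(
    labelled_points: Iterable[Tuple[Point, str]],
) -> Dict[str, List[Point]]:
    """Collect the points of a labelled cloud under their semantic label."""
    groups: Dict[str, List[Point]] = defaultdict(list)
    for point, label in labelled_points:
        groups[label].append(point)
    return dict(groups)


# Hypothetical sample: two points labelled "chair", one labelled "floor".
cloud = [
    ((0.0, 0.0, 1.0), "chair"),
    ((0.5, 0.9, 1.4), "chair"),
    ((2.0, 0.0, 3.0), "floor"),
]
chair_points = group_points_by_label(cloud)["chair"]
```

Once the points belonging to one object are isolated like this, they can be handed on to whatever measuring step comes next.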

"In some implementations, an object is measured by first generating a 3D bounding box for the object based on the depth data," says Apple, "refining the bounding box using various neural networks and refining algorithms described herein."

A bounding box could start by determining the basic width, height, and depth of an object. Then the Measure app goes further by attempting to classify the object, for instance, whether it's a chair or a TV set.
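As a rough illustration of that first step, the Python sketch below computes an axis-aligned bounding box from a handful of 3D points. It is a simplification under stated assumptions: the patent application describes refining the box with neural networks, which is not attempted here, and the names `BoundingBox` and `bounding_box` plus the sample points are hypothetical.

```python
from typing import Iterable, NamedTuple, Tuple

Point = Tuple[float, float, float]  # (x, y, z) in metres, an assumed convention


class BoundingBox(NamedTuple):
    width: float   # extent along x
    height: float  # extent along y
    depth: float   # extent along z


def bounding_box(points: Iterable[Point]) -> BoundingBox:
    """Return the axis-aligned box that encloses every point."""
    pts = list(points)
    if not pts:
        raise ValueError("at least one point is required")
    xs, ys, zs = zip(*pts)
    return BoundingBox(
        width=max(xs) - min(xs),
        height=max(ys) - min(ys),
        depth=max(zs) - min(zs),
    )


# Hypothetical points sampled from the surface of one object.
box = bounding_box([(0.0, 0.0, 0.0), (0.5, 0.9, 0.45), (0.25, 0.4, 0.1)])
```

An axis-aligned box is the simplest choice. An object sitting at an angle to the camera would need an oriented box to get tight measurements.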

"[For example, an] object is measured using different types of measurements for different object types using machine learning techniques (e.g., neural networks)," says Apple. "[Different] types of measurements may include a seat height for chairs, a display diameter for TVs, a table diameter for round tables, a table length for rectangular tables, and the like."

Machine Learning is already able to recognize and classify objects, even from flat images. Mac image editors such as Pixelmator Pro use it to automatically label layers, for instance.

Apple wants to "train" Machine Learning models to be good at measuring particular types of objects.

"For example, one model may be trained and used to determine measurements for chair type objects (e.g., determining a seat height, arm length, etc.)," says Apple, "and another model may be trained and used to determine measurements for TV type objects (e.g., determining a diagonal screen size, greatest TV depth, etc.)."

The idea is that if you measure a table, you will want to know the details of its surface. If you measure a TV set, though, you might want both the physical width and the diagonal screen size, not counting bezels.
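The selection of a class-specific measurer can be sketched in a few lines of Python. This is an illustration only: the patent application selects between trained neural networks, whereas the stand-ins here are plain geometry functions working on a bounding box, and every name (`MEASURERS`, `measure`, the class labels) is hypothetical. Note that the TV diagonal is taken from the outer box, so it includes bezels.

```python
import math
from typing import Callable, Dict, NamedTuple


class BoundingBox(NamedTuple):
    width: float   # metres
    height: float  # metres
    depth: float   # metres


def measure_tv(box: BoundingBox) -> Dict[str, float]:
    # Diagonal of the front face of the box, bezels included.
    return {"width": box.width,
            "outer_diagonal": math.hypot(box.width, box.height)}


def measure_round_table(box: BoundingBox) -> Dict[str, float]:
    # The larger horizontal extent approximates the tabletop diameter.
    return {"diameter": max(box.width, box.depth), "height": box.height}


def measure_rectangular_table(box: BoundingBox) -> Dict[str, float]:
    return {"length": max(box.width, box.depth),
            "width": min(box.width, box.depth),
            "height": box.height}


# One measurer per object class, standing in for class-specific models.
MEASURERS: Dict[str, Callable[[BoundingBox], Dict[str, float]]] = {
    "tv": measure_tv,
    "round_table": measure_round_table,
    "rectangular_table": measure_rectangular_table,
}


def measure(label: str, box: BoundingBox) -> Dict[str, float]:
    """Pick the measurer for the class, or fall back to raw box extents."""
    measurer = MEASURERS.get(label)
    if measurer is None:
        return {"width": box.width, "height": box.height, "depth": box.depth}
    return measurer(box)


tv = measure("tv", BoundingBox(1.2, 0.5, 0.05))
```

Keeping the measurers in a lookup table means a new object type only needs a new entry, which mirrors the one-model-per-class arrangement the application describes.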

Really, the point of this potential new Measure app is that you don't have to care or know any of the details of how an object is being measured. Instead, you just point the iPhone, and all of this proposed technology results in a label on-screen, showing you how wide, deep, or tall an object is.

This patent application is credited to five inventors. That includes Aditya Sankar, whose previous work includes a patent regarding indoor scene capture.