Future Apple devices may be able to use motion detection to read lips, and so trigger Siri without needing a microphone to constantly listen out for commands.

If you're old enough, the notion of Siri being able to read lips in any way has immediately and worryingly brought Arthur C. Clarke and Stanley Kubrick's "2001: A Space Odyssey" to mind. Hopefully, if Apple is channeling that 1968 film, it is because the computer HAL 9000 had superb voice recognition skills.

In comparison, Siri has much more difficulty reliably and consistently understanding spoken commands, but to be fair it also hasn't yet tried to kill the crew of a spaceship. It's swings and roundabouts.

Conceivably, though, giving Siri an extra aspect such as detecting mouth and head movements could improve its accuracy. A newly revealed patent application called "Keyword Detection Using Motion Sensing" aims to do that, and then something more.

"[Data] is received from a motion sensor, for instance, recording the motion of a user as the user utters a spoken input," says the patent application. "A determination is made whether a portion of the motion data matches reference data for a set of one or more words (e.g., a word or phrase)."

"Additionally, voice [only] control systems can result in false positive responses," adds Apple, "if the audio sensor picks up ambient noise or speech from an unintended user."

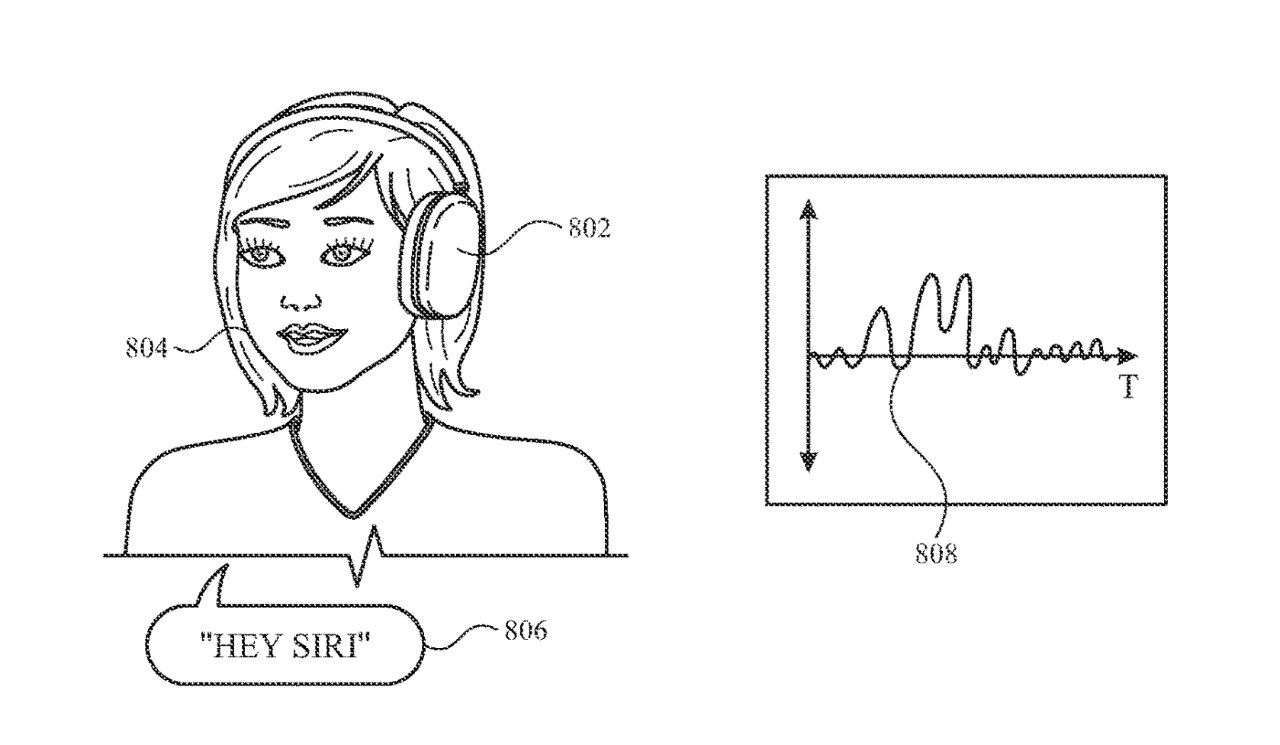

The patent application details how mouth movements can be compared against previous data as Siri or a device attempts to find a match.

Detail from the patent showing how motion detection can be compared against previous data to determine what someone is saying
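
To make that comparison concrete, here is a minimal Python sketch of one way a stream of motion samples could be checked against stored reference data for a keyword. This is not Apple's method: the passages quoted here don't name an algorithm, so the sliding normalized correlation, the function names `normalize`, `best_match`, and `matches_keyword`, and the 0.9 threshold are all assumptions chosen purely for illustration.

```python
import math


def normalize(window):
    """Return a zero-mean, unit-length copy of a window of samples.

    Removing the mean and scaling means a match depends on the shape of
    the motion, not on sensor offset or how strongly the user moved.
    """
    mean = sum(window) / len(window)
    centered = [x - mean for x in window]
    norm = math.sqrt(sum(x * x for x in centered))
    if norm == 0:
        # A perfectly flat window has no shape to compare.
        return [0.0] * len(window)
    return [x / norm for x in centered]


def best_match(motion, reference):
    """Slide the reference over the motion data.

    Returns (best_score, start_index), where the score is a normalized
    correlation between -1 and 1. If the motion data is shorter than
    the reference, returns (-1.0, -1).
    """
    ref = normalize(reference)
    n = len(ref)
    best_score, best_start = -1.0, -1
    for start in range(len(motion) - n + 1):
        window = normalize(motion[start:start + n])
        score = sum(a * b for a, b in zip(window, ref))
        if score > best_score:
            best_score, best_start = score, start
    return best_score, best_start


def matches_keyword(motion, reference, threshold=0.9):
    """Hypothetical decision rule: accept if the best score clears a threshold."""
    score, _ = best_match(motion, reference)
    return score >= threshold


# Made-up accelerometer-style readings, for illustration only.
reference = [0.0, 1.0, 0.0, -1.0, 0.0]
motion = [0.1, 0.1, 0.1] + [0.5 + 2 * x for x in reference] + [0.1, 0.1]
print(matches_keyword(motion, reference))
```

A real system would presumably work with multi-axis accelerometer and gyroscope data and a more robust matcher, but the shape of the problem is the same: a short reference pattern, a longer live stream, and a threshold for deciding whether they agree.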

But this is not really for improving Siri, and it's not a sign that Apple is planning some devices without microphones. Instead, Apple proposes that such motion detection could mean being able to switch off the microphones that a device uses to constantly listen for "Siri," or "Hey, Siri."

"[Continuously] detecting and processing audio data expends power and processing capacity even when the user is not actively using voice control," says Apple.

"When a user speaks, the user's mouth, face, head, and neck move and vibrate," it continues. "Motion sensors such as accelerometers and gyroscopes can detect these motions, while expending relatively little power compared to audio sensors such as microphones."

Detecting motion and comparing it to previous records seems clearly able to work when what's being said is "Hey, Siri," or some other regular command like "Next track." When the spoken command is less common, such as "Hey, Siri, open the pod bay doors," then surely motion detection won't work.

But as long as motion detection is fast enough, spotting that a user has said "Siri" should let the device turn on its microphones in time to catch the rest of the command by voice.
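
That hand-off can be pictured as a small gate that keeps the microphone off until the motion sensor reports a match. The Python sketch below is only an illustration under stated assumptions: the `WakeGate` class, its `listen_samples` window, and the idea of feeding it one motion result and one audio sample per step are all hypothetical, and none of them comes from the patent application.

```python
class WakeGate:
    """Keeps a hypothetical microphone off until a motion match arrives."""

    def __init__(self, listen_samples=3):
        # How many audio samples to capture after each motion match
        # (an assumed, illustrative setting).
        self.listen_samples = listen_samples
        self.remaining = 0
        self.captured = []

    @property
    def mic_on(self):
        return self.remaining > 0

    def step(self, motion_matched, audio_sample):
        """Process one time step and return whether the mic is on afterwards."""
        if self.remaining > 0:
            # The mic is already on, so this sample is recorded.
            self.captured.append(audio_sample)
            self.remaining -= 1
        if motion_matched:
            # A keyword was seen in the motion data: (re)open the window.
            self.remaining = self.listen_samples
        return self.mic_on


gate = WakeGate(listen_samples=2)
events = [(False, "a"), (True, "b"), (False, "c"), (False, "d"), (False, "e")]
states = [gate.step(matched, audio) for matched, audio in events]
print(states, gate.captured)
```

In this toy version, audio that arrives before or during the motion match is never recorded, which is exactly why the speed of the motion detection matters: the slower the match, the more of the spoken command is lost before the microphone wakes up.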

Other than referring to accelerometers and gyroscopes, Apple's patent application doesn't spend much time discussing the devices that could be used to implement this proposal.

However, it is lip reading by motion detection, rather than through cameras and line of sight. So, especially in conjunction with an iPhone, this motion detection could theoretically work with AirPods as well as, for instance, Apple Vision Pro.

This patent application is credited to two inventors, including Madhu Chinthakunta. Chinthakunta's previous work for Apple includes a patent for having Siri automatically make arrangements and calls on your behalf.