Protesters at Apple Park are demanding that the company reinstate its abandoned child safety measures.

Nearly three dozen protesters gathered around Apple Park on Monday morning, carrying signs that read "Why Won't You Delete Child Abuse?" and "Build a future without abuse."

The group, Heat Initiative, is demanding that the iPhone maker bring back its child sexual abuse material (CSAM) detection tool.

"We don't want to be here, but we feel like we have to," Heat Initiative CEO Sarah Gardner told Silicon Valley. "This is what it's going to take to get people's attention and get Apple to focus more on protecting children on their platform."

Today, we showed up at #WWDC, with dozens of survivors, advocates, parents, community + youth leaders and allies, calling on Apple to stop the spread of child sexual abuse on their platforms now. We must build a future without abuse. pic.twitter.com/hky9eh3dFD

— heatinitiative (@heatinitiative) June 10, 2024

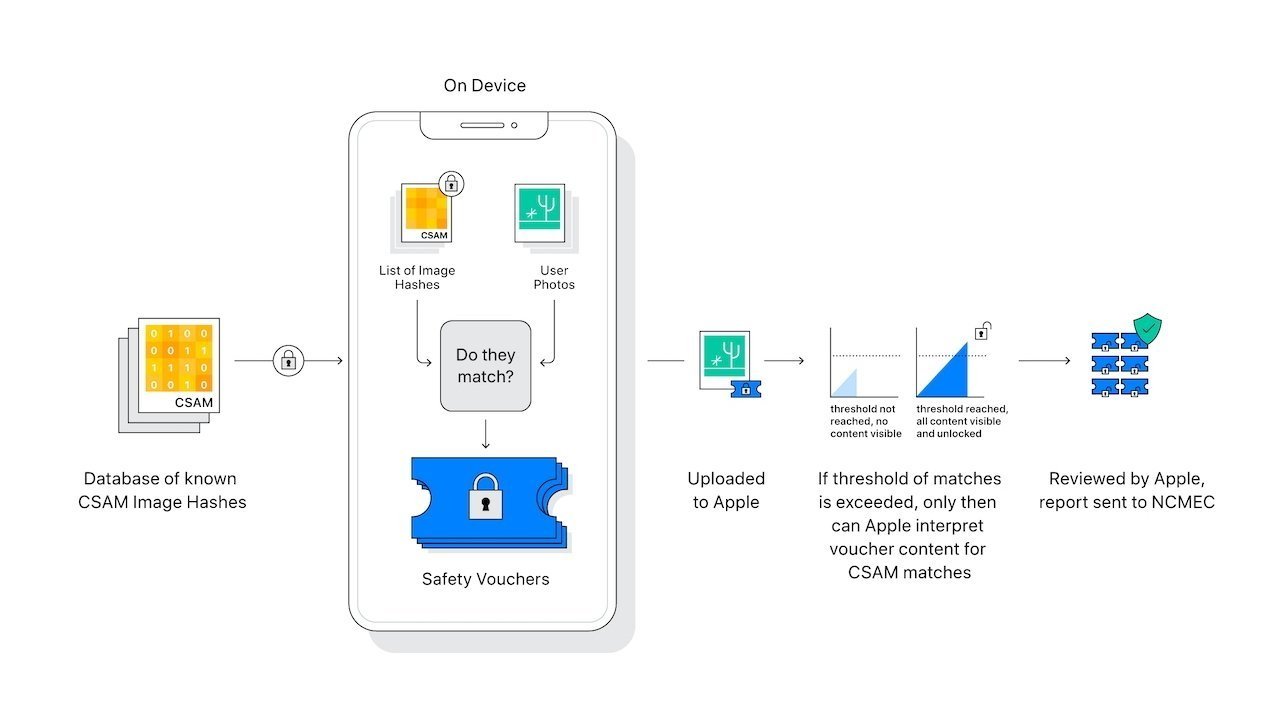

In 2021, Apple planned to roll out a feature that would compare iCloud photos against a database of known CSAM images. If matches were found, Apple would review the images and report any relevant findings to the National Center for Missing & Exploited Children (NCMEC), a nonprofit that serves as a reporting center for child abuse material and works with law enforcement agencies across the U.S.

After significant backlash from multiple groups, the company paused the program in September of that year and ultimately abandoned the plan entirely in December 2022.

Asked why, Apple said the program would "create new threat vectors for data thieves to find and exploit," and that these vectors could compromise user security, an area Apple prides itself on taking seriously.

While Apple never officially rolled out its CSAM detection system, it did launch Communication Safety, a feature that alerts children when they receive or attempt to send content containing nudity in Messages, AirDrop, FaceTime video messages and other apps.

However, protesters don't believe this feature does enough to hold predators accountable for possessing CSAM, and would rather Apple reinstate the system it abandoned.

"We're trying to engage in a dialog with Apple to implement these changes," protester Christine Almadjian said on Monday. "They don't feel like these are necessary actions."

This isn't the first time Apple has butted heads with Heat Initiative, either. In 2023, the group launched a multimillion-dollar campaign against Apple.