While Apple's controversial plan to hunt down child sexual abuse material with on-iPhone scanning has been abandoned, the company has other plans in mind to stop it at the source.

Apple announced two initiatives in late 2021 that aimed to protect children from abuse. One, which is already in effect today, warns minors before they send or receive photos with nude content. It works using algorithmic detection of nudity and only warns the kids; the parents aren't notified.

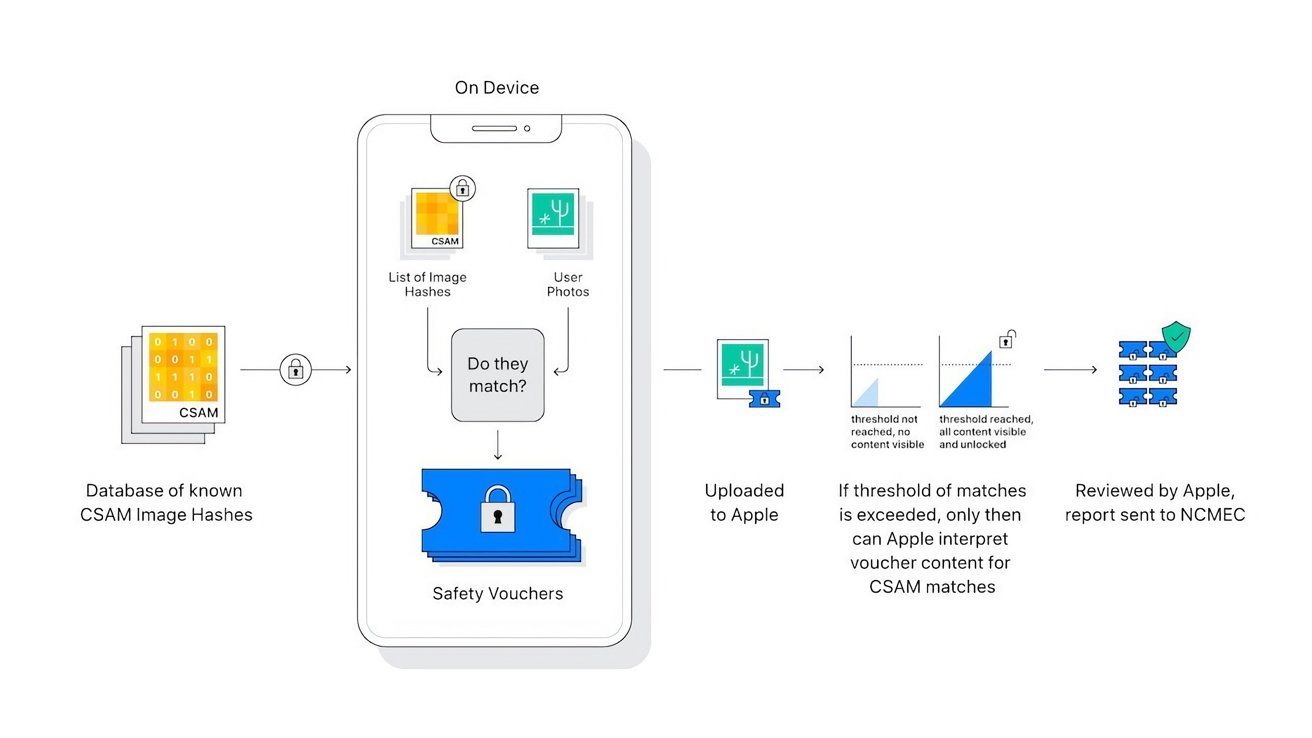

The second, and much more controversial, feature would have analyzed a user's photos on the user's iPhone, as they were being uploaded to iCloud, for known CSAM content. The analysis would have been performed locally, on device, using a hashing system.

After backlash from privacy experts, child safety groups, and governments, Apple paused the feature indefinitely for review. On Wednesday, Apple released a statement to AppleInsider and other venues explaining that it has abandoned the feature altogether.

"After extensive consultation with experts to gather feedback on child protection initiatives we proposed last year, we are deepening our investment in the Communication Safety feature that we first made available in December 2021."

"We have further decided to not move forward with our previously proposed CSAM detection tool for iCloud Photos. Children can be protected without companies combing through personal data, and we will continue working with governments, child advocates, and other companies to help protect young people, preserve their right to privacy, and make the internet a safer place for children and for us all."

The statement comes moments after Apple announced new features that would end-to-end encrypt even more iCloud data, including iMessage content and photos. These strengthened protections would have made impossible the server-side flagging system that was a primary part of Apple's CSAM detection feature.

Taking a different approach

Amazon, Google, Microsoft, and others perform server-side scanning as required by law, but end-to-end encryption will prevent Apple from doing so.

Instead, Apple hopes to address the issue at its source — creation and distribution. Rather than target those who hoard content in cloud servers, Apple hopes to educate users and prevent the content from being created and sent in the first place.

Apple provided extra details about this initiative to Wired. While there isn't a timeline for the features, the effort would start with expanding algorithmic nudity detection to video for the Communication Safety feature. Apple then plans to expand these protections to its other communication tools and, after that, give developers access as well.

"Potential child exploitation can be interrupted before it happens by providing opt-in tools for parents to help protect their children from unsafe communications," Apple also said in a statement. "Apple is dedicated to developing innovative privacy-preserving solutions to combat Child Sexual Abuse Material and protect children, while addressing the unique privacy needs of personal communications and data storage."

Other on-device protections exist in Siri, Safari, and Spotlight to detect when users search for CSAM. When that happens, the search is redirected to resources that provide help to the individual.

Features that educate users while preserving privacy have been Apple's goal for decades. All the existing implementations for child safety seek to inform, and Apple never learns when the safety feature is triggered.