In the days before WWDC, it was widely believed that Apple would be playing catch-up to everyone else in the consumer tech industry by rushing to bolt a copy of ChatGPT onto its software and checking off the feature box of "Artificial Intelligence." That's clearly not what happened.

Rather than following the herd, Apple laid out its strategy for expanding its machine learning capabilities into the world of generative AI in both writing and creating graphic illustrations as well as iconic emoji-like representations of ideas, dreamed up by the user. Most of this could occur on device, or in some cases, delegated to a secure cloud instance private to the individual user.

In both cases, Apple Intelligence, as the company coined its product, would be deeply integrated into the user's own context and data. This is instead of it just being a vast Large Language Model trained on all the publicly scrapeable data some big company could appropriate in order to generate human-resembling text and images.

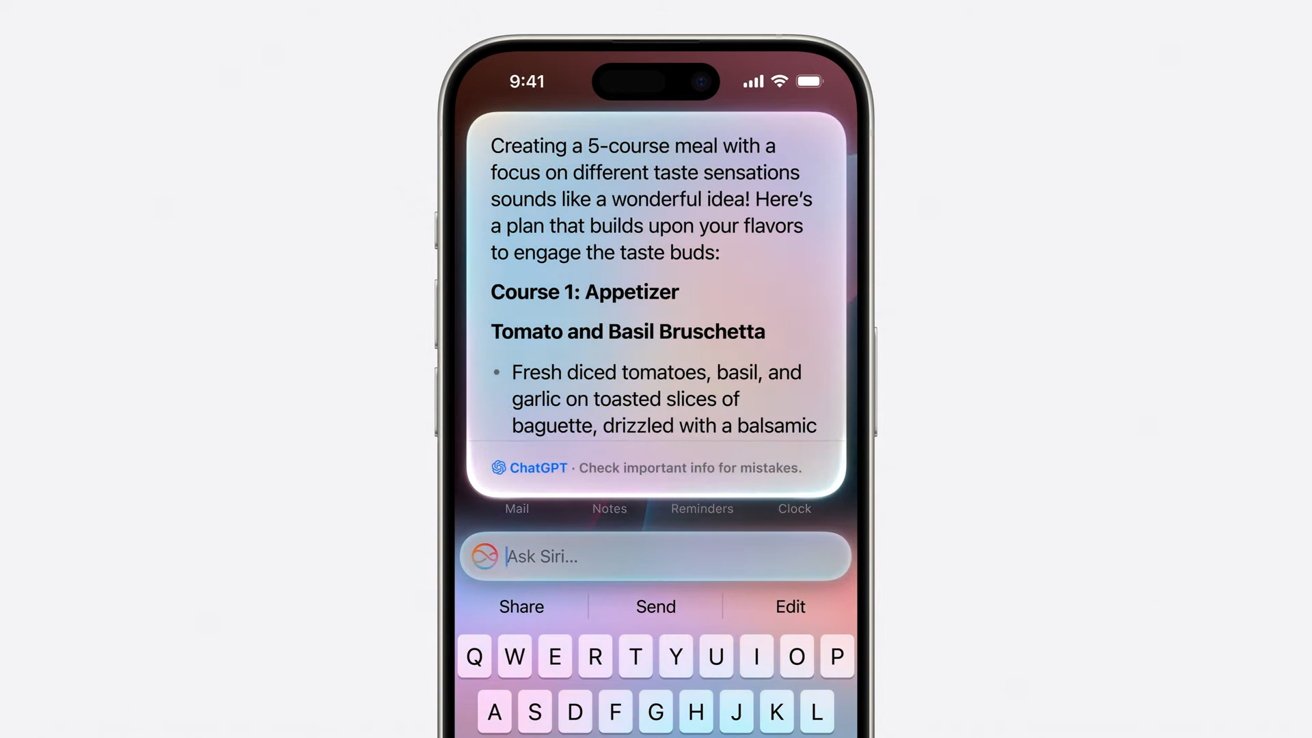

At the same time, Apple also made it optional for iOS 18 and macOS Sequoia users to access such a vast LLM from OpenAI. It did this by enabling the user to grant permission to send requests to ChatGPT running GPT-4o, and even take advantage of any subscription plan they'd already bought to cover such power-hungry tasks without limits.

Given that Apple delivered something vastly different than the AI tech pundits predicted, it raises the question: is it possible that Apple Intelligence isn't really delivering true AI, or is it more likely that AI pundits were really failing to deliver Apple intelligence?

The Artificial Intelligence in the tech world

Pundits and critics of Apple long mused that the company was desperately behind in AI. Purportedly, nobody at Apple had ever thought about AI until the last minute, and the cash-strapped, beleaguered company couldn't possibly afford to acquire or create its own technology in-house.

Obviously, they told us for months, the only thing Apple could hope to do is crawl on its knees to Google to offer its last few coins in exchange for the privilege of bolting Google's widely respected Gemini LLM onto the side of the Mac to pretend it was still in the PC game.

It could also perhaps license Copilot from Microsoft, which had just shown off how far ahead the company was in rolling out a GPT-like chat-bot of its own. Microsoft had also paid AI leader OpenAI extraordinary billions to monopolize any commercial productization of its work, with the actual ChatGPT leading the industry in chat-bottery.

A few months ago, when OpenAI's unique non-profit status appeared on the verge of institutional collapse, Microsoft's CEO was hailed as a genius for offering to gut the startup for brains and leadership by hiring its people away from under its stifling non-profit chains.

That ultimately didn't materialize, but it did convince everyone in the tech world that building an AI chat-bot would cost incredible billions to develop, and further that the window of opportunity to get started on one had already closed. One way or another, Microsoft had engineered a virtual lock on all manner of chat-bot advancements promised to drive the next wave of PC shipments.

Somehow, at the same time, everyone else outside of Apple had already gained access to an AI chat-bot, meaning that just Apple and only Apple would be behind in a way that it could never hope to ever gain any ground in, just like with Apple Maps.

Or like the Voice First revolution led by Amazon's Alexa that would make mobile apps dry up and blow away while we delightfully navigate our phones with the wondrous technology underlying a telephone voicemail tree.

Or the exciting world of folding cell phones that turned into either an Android tablet or just a smaller but much thicker thing to stuff in your pocket because iPhones don't fit in anyone's pockets.

Or smart watches, music streaming, or the world of AR/VR being exclusively led by Microsoft's HoloLens in the enterprise and Meta and video game consoles among consumers. It keeps going but I think I've exhausted the limits of sarcasm and should stop.

Anyway, Apple was never going to catch up this time.

Apple catches up and was ahead all along

Just as with smart watches and Maps and Alexa and HoloLens, it turns out that being first to market with something doesn't mean you're really ahead of the curve. It often means you're just at the beginning of the upward curve.

If you haven't thought out your strategy beyond rushing to launch desperately in order to be first, you might end up with a Samsung Gear or a vastly expensive Voice First shopping platform nobody uses for anything other than changing the currently playing song.

It might be time to lay off your world-leading VR staff and tacitly admit that nobody in management had really contemplated how HoloLens or the Meta Quest Pro could possibly hope to breathe after Apple walked right in and inhaled the world's high end oxygen.

There's reason to believe that everyone should have seen this coming. And it's not because Apple is just better than everyone else. It's more likely because Apple looks down the road further and thinks about the next step.

That's obvious when looking at how Apple lays its technical foundations.

Pundits and an awful lot of awful journalists seem entirely uninterested in how anything is built, and care only about the talking points used to market some new thing. Is Samsung promising that everyone will want a $2000 cell phone that magically transforms into an Android tablet with a crease? They were excited about that for years.

When Apple does all the work behind the scenes to roll out its unique strategic vision for how something new should work, they roll their eyes and say it should have been finished years ago. It's because people who have only delivered a product that is a string of words representing their opinions imagine that everything else in the world is also just an effortless salad tossing of their stream of consciousness.

They don't appreciate that real things have to be planned and designed and built.

And while people who just scribble down their thoughts actually believed that Apple hadn't imagined any use for AI before last Monday, and so would just deliver a button crafted by marketing to invoke the work delivered by the real engineers at Google, Microsoft or OpenAI, the reality was that Apple had been delivering the foundations of a system-wide intelligence via AI for years, right out in public.

Apple Intelligence started in the 1970s

Efforts to deliver a system wide model of intelligent agents that could spring into action in a meaningful way relevant to regular people using computers began shortly after Apple began making its first income hawking the Apple I nearly 50 years ago.

One of the first things Apple set upon doing in the emerging world of personal computing was fundamental research into how people used computers, in order to make computing more broadly accessible.

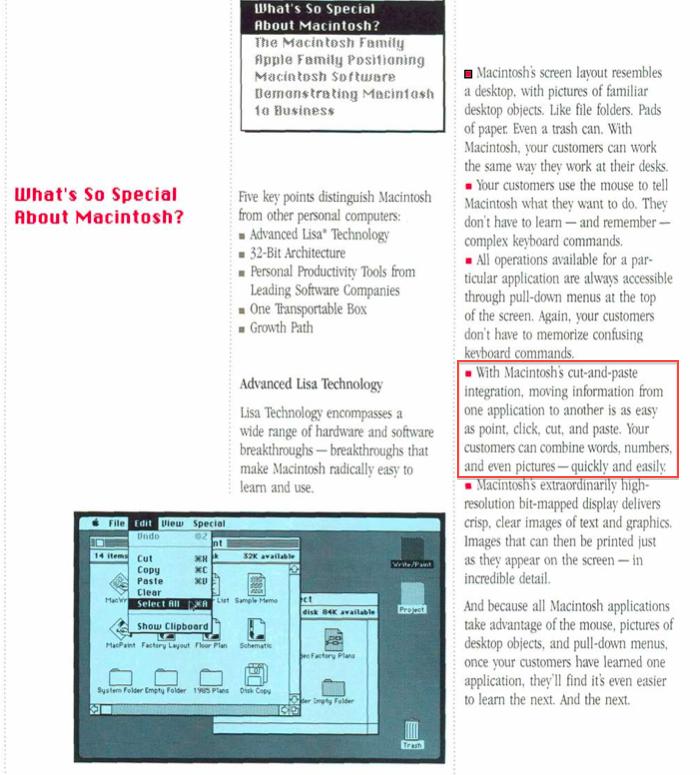

That resulted in the Human User Interface Guidelines, which explained in intricate detail how things should work and why. They did this first in order for regular people to be able to make sense of computing at a time when you had to be a full time nerd hacker in order to load a program off tape and enable it to begin to PEEK and POKE at memory cells just to generate specific characters on your CRT display.

In Apple's HUIG, it was mandated that the user needed consistency of operation, the ability to undo mistakes, and a direct relationship between actions and results. This was groundbreaking in a world where most enthusiasts only cared about megahertz and BASIC. A lot of people, including developers, were upset that Apple was asserting such control over the interface and dictating how things should work.

Those that ignored Apple's guidelines have perished.

Not overnight, but with certainty, personal computing began to fall in line with the fundamental models of how things should work, largely created by Apple. Microsoft based Windows on it and eventually even the laggards of DOS came kicking and screaming into the much better world of graphical computing first defined by the Macintosh, where every application printed the same way, and copy/pasted content and open/saved documents using the same shortcuts, and where Undo was always right there when you needed it.

An era later, when everyone in the tech world was convinced that Microsoft had fully plundered Apple's work and that the Macintosh would never again be relevant and that Windows would now take over and become the interface of everything, Apple completely refreshed the Mac and made it more like the PC (via Intel) and less like the PC (with OS X). Apple incrementally clawed its way back into relevance, to the point where it could launch the next major revolution in personal computing: the truly usable mobile smartphone.

Apple crushed Microsoft's efforts at delivering a Windows Phone by stopping once again to determine — after lengthy research — not just how to make the PC "pocket sized," but how to transform it so that regular people could figure it out and use it productively as a mobile tool.

iPhone leaped over the entire global industry of mobile makers, not because it was just faster and more sophisticated, but largely because it was easy to use. And that was a result of a lot of invisible work behind the scenes.

Pundits liked to chalk this up to Apple's marketing, as if the company had pulled some simple con over the industry and tricked us all into paying too much for a cell phone. The reality was that Apple put tremendous work into designing a new, mobile Human User Interface that your mom, and her dad, could use.

Across the same decades of way-back-when, Apple and the rest of the industry were also employing elements of AI to deliver specific features. At the height of Apple's actual beleaguerment in the early '90s, the company strayed from its focus on building products for real people and instead began building things that were just new.

Apple's secret labs churned out ways to relate information together in networks of links, how to summarize long passages of text into the most meaningful synopsis, how to turn handwriting into text on the MessagePad, and how to generate beautiful ligatures in fonts — tasks that few saw any real use for, especially as the world tilted towards Windows.

What saved Apple was a strict return to building usable tools for real people, which started with the return of Steve Jobs. The iMac wasn't wild new technology; it was approachable and easy to use. That was also the basis of iPod, iPhone, iPad and Apple's subsequent successes.

At the same time, Apple was also incorporating the really new technology it had already developed internally. VTwin search was delivered as Spotlight, and other language tools and even those fancy fonts and handwriting recognition were morphed into technologies regular people could use.

Rather than trying to copy the industry in making a cell phone with a Start button, or a phone-tablet, or a touch-PC or some other kind of MadLib engineered refrigerator-toaster, Apple has focused on simplicity, and making powerful things easy to use.

In the same way, rather than just bolting ChatGPT onto its products and enabling its users to experience the joys of seven-finger images and made-up Wikipedia articles where the facts are hallucinated, Apple again distilled the most useful applications of generative AI and set to work making this functionality easy to use, as well as private and secure.

This didn't start happening the day Craig Federighi first saw Microsoft Copilot. Apple's been making use of AI and machine learning for years, right out in the open.

It's used in finding people in images, in recognizing text throughout the interface for search indexing, and even in authenticating via Face ID. Apple made so much use of machine learning that it started building Neural Engine cores into Apple Silicon with the release of iPhone X in 2017.

Artificial Intelligence gets a euphemism in AI

Apple didn't really call any of its work with the Neural Engine "AI." Back then, the connotation of "Artificial Intelligence" was largely negative.

It still is.

To most people, especially those of us raised in the '80s, the notion of "artificial" usually pertained to fake flowers, unnatural flavorings, or synthetic food colors that might cause cancer and definitely seemed to set off hyperactivity in children.

Artificial Intelligence was the Terminator's Skynet, and the dreary, bleak movie by Steven Spielberg.

Some time ago when I was visiting a friend in Switzerland, where the three official languages render all consumer packaging a Rosetta Stone of sorts between French, Italian and German, I noticed that the phrase "Artificially Flavored" on my box of chocolatey cereal was represented with a version of English's "artificial" in the Latin languages, but in German used the word künstlich, literally "Artistic."

I wondered to myself why German uses "like an artist" rather than the word for "fake" in my native language before suddenly coming to the realization that artificial doesn't literally mean "fake," but rather an "artistic representation."

The box didn't say I was eating fake chocolate puffs; it was instead just using somebody's artistic impression of the flavor of chocolate. It was a revelation to my brain. The negative connotation of artificial was so strongly entrenched that I had never before realized that it was based on the same root as artistic or artifice.

It wasn't fraud, it was a sly replacement with something to trick the eye, or in this case, the tastebuds.

Artificial Intelligence is similarly not just trying to "fake" human intelligence, but is rather an artistic endeavor to replicate facets of how our brains work. In the last few years, the negative "fake" association of artificial intelligence has softened as we have begun to not just rebrand it as the initials AI, but also to see more positive aspects of using AI to do some task, like to generate text that would take some effort otherwise. Or to take an idea and generate an image a more accomplished artist might craft.

The shift in perception on AI has turned a dirty word into a hype cycle. AI is now expected to lift PC sales out of the doldrums, or speed up the prototyping of all manner of things, and glean meaningful details out of absurd mountains of text or other Big Data. Some people are still warning that AI will kill us all, but largely the masses have accepted that AI might save them from some busy-work.

But Apple isn't much of a follower in marketing. When Apple began really talking about computer "Intelligence" on its devices at WWDC, it didn't blare out the term "Artificial Intelligence." It had its own phrase.

I'm not talking about last week. I'm still talking about 2017, the year Apple introduced iPhone X and when Kevin Lynch introduced the new watchOS 4 watch face "powered by Siri intelligence."

Phil Schiller also talked about HomePod delivering "powerful speaker technology, Siri intelligence, and wireless access to the entire Apple Music library." Remember Siri Intelligence?

It seems like nobody does, even as pundits were lining up over the last year to sell the talking point that Apple had never had any previous brush with anything related to AI before last Monday. Or, supposedly, before Craig experienced his transformation on Mount Microsoft upon being anointed by the holy Copilot and ripped off all of its rival's work — apart from the embarrassment of Recall.

Apple isn't quite ashamed of Siri as a brand, but it has dropped the association — or maybe personification — of its company wide "intelligent" features with Siri as a product. It has recently decided to tie its intelligence work to the Apple brand instead, conveniently coming up with branding for AI that is both literally "A.I." and also proprietary to the company.

Apple Intelligence also suavely sidesteps the immediate and visceral connotations still associated with "artificial" and "artificial intelligence." It's perhaps one of the most gutsy appropriations of a commonplace idea ever pulled off by a marketing team. What if we take a problematic buzzword, defuse the ugly part, and turn it into a trademark? Genius.

The years of prep-work behind Apple Intelligence

It wasn't just fresh branding that launched Apple Intelligence. Apple's Intelligence (née Siri) also had its foundations laid in Apple Silicon, long before it was commonplace to be trying to sell the idea that your hardware could do AI without offloading every task to the cloud. Beyond that, Apple also built the tools to train models and integrate machine learning into its own apps, and those of third-party developers, with Core ML.

The other connection between Apple's Intelligence and Siri: Apple also spent years deploying the idea among its developers of Siri Intents, which are effectively footholds developers could build into their code to enable Siri to pull results from their apps to return in response to the questions users asked Siri.

All this systemwide intelligence Apple was integrating into Siri answers also now enables Apple Intelligence to connect and pool users' own data in ways that are secure and private, without sucking up all their data and pushing it through a central LLM cloud model where it could be factored into AI results.

With Apple Intelligence, your Mac and iPhone can take the infrastructure and silicon Apple has already built to deliver answers and context that other platforms haven't thought to provide. Microsoft is just now trying to begin pooping out some kind of AI engines in ARM chips that can run Windows, even though there are still issues with Windows on ARM, and most Windows PCs are not running ARM, and little effort in AI has been made outside of just rigging up another chat-bot.

The entire industry has been chasing cloud-based AI in the form of mostly generative AI based on massive models trained on suspect material. Google dutifully scraped up so much sarcasm and subterfuge on Reddit that its confident AI answers are often quite absurd.

Further, the company tried so hard to force its models to generate inoffensive yet stridently progressive AI that it effectively forgot that the world has been horrific and racist, and instead just dreams up history where the founding fathers were from all backgrounds and there were no controversies or oppression.

Google's flavor of AI was literally artificial in the worst senses.

And because Apple focused on the most valuable, mainstream uses of AI to demonstrate its Apple Intelligence, it doesn't have to expose itself to the risk of generating whatever the most unpleasant corners of humanity would like it to cough up.

What Apple is selling with today's AI is the same thing its HUIG sold with the release of Macintosh: premium hardware that can deliver the value of its work in imagining useful, approachable, clearly functional features that are easy to demonstrate, grasp and use. It's not just throwing up a new AI service in hopes that there will someday be a business model to support it.

For Apple Intelligence, the focus isn't just catching up to the industry's "artificial" generation of placeholder content, a sort of party trick lacking a clear, unique value.

It's the state-of-the-art in intelligence and machine learning.