Apple exploring Kinect-like 3D input for controlling Macs

The concept was disclosed this week in a U.S. patent application, discovered by AppleInsider, entitled "Three-Dimensional Imaging and Display System." It describes a system akin to Microsoft's Xbox Kinect platform, which allows for controller-free interaction with devices.

Apple's solution would optically detect a user's hands and fingertips and measure their movements. In this manner, users could use their hands to control a Mac without the need for a mouse or keyboard.

In its application, Apple notes that current computer input devices like the mouse allow users to control a system in two dimensions, along the 'X' axis and 'Y' axis. But when manipulating objects in three dimensions, input methods like a joystick or mouse can be cumbersome.

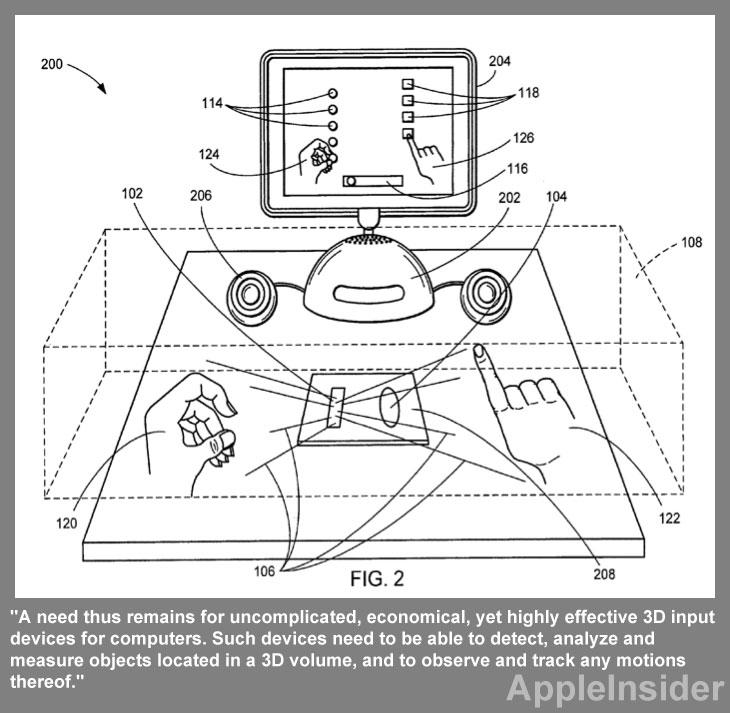

"A need thus remains for uncomplicated, economical, yet highly effective 3D input devices for computers," the filing reads. "Such devices need to be able to detect, analyze and measure objects located in a 3D volume, and to observe and track any motions thereof."

Apple's filing notes that for any system to be successful, it needs to be "economical, small, portable and versatile." It must also provide a user with "integral, immediate feedback," and it needs to be adapted well for use with a number of devices, ranging from full-fledged Macs to portable electronics like the iPhone.

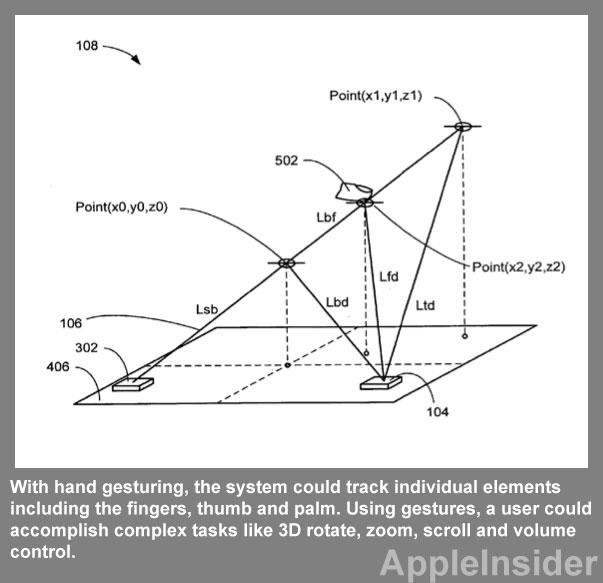

The hardware described by Apple in the application would be capable of tracking a user's head as well as their hands. Potential components include an infrared sensor or visible laser, high-speed photo detector, digital signal processor, dual-axis scanning device, and subsystems for analog and video.

Apple envisions a user interface that would allow a person to more naturally use their hands and fingertips to control a device. An accompanying display could show knobs, sliders and buttons that a user could virtually manipulate in a three-dimensional space.

In one image accompanying the application, a user is shown using both hands to select inputs on an iMac. With their left hand, the user twists a virtual knob, while their right index finger presses a button.

The system would aid the user with on-screen visual cues, allowing them to more easily manipulate objects on the screen. For example, Apple's system includes a virtual representation of the user's hand displayed on the screen of the iMac.

In the application, Apple provides a number of potential uses for its described system. For example, head tracking could be used to tilt an image or zoom in on the screen based on the location of a user's head. Additionally, a moving person could be followed and tracked with a motorized camera system.

With hand gesturing, the system could track individual elements including the fingers, thumb and palm. Using gestures, a user could accomplish complex tasks like 3D rotation, zooming, scrolling and volume control.

Another feature of the system described by Apple is user presence detection. With this, the system could detect whether a user is sitting in front of a display, and could even identify unique users. The system could also automatically shut down and save power when the user walks away.

Other potential uses listed by Apple include surveillance, bar code reading, object measuring, image substitution or replacement, and a virtual keyboard. In the keyboard example, Apple notes that individual fingers could be tracked for typing, and the image of a keyboard could be projected onto a desktop to give users a visual aid while typing.

The proposed invention, made public this week by the U.S. Patent and Trademark Office, was first filed by Apple in August of this year. It is credited to Christoph H. Krah.

Neil Hughes

40 Comments

Nice and all, but how's this 'entirely new' if MS' Kinect already allows "users to perform gestures with their hands in a three-dimensional space" as a substitute for device input/controllers?

Ever since Minority Report I have wanted to have something like this.

Notice how old this is? That iMac is from the early 2000's.

Is this the predecessor comment to saying Microsoft must have copied Apple again?

It is obvious that Apple thought of it first. Don't you know that Apple has a patent on thinking of ideas before patenting them?