Apple's display tech lets users interact with 3D objects in mid-air

Giant Mirage 3D hologram device uses parabolic mirrors to create illusion of floating three-dimensional objects. | Source: Optigone

A document discovered on Thursday describes an interactive three-dimensional display system that allows users to "touch" objects in mid-air, presenting the illusion of an advanced hologram.

The U.S. Patent and Trademark Office published an Apple patent application for an "Interactive three-dimensional display system," which details a method of presenting users with what appears to be a 3D image that can be manipulated with touches, swipes and other gestures.

In practice, Apple's invention uses a variety of known display techniques, along with a bevy of sensors, to present the pseudo-hologram. The basis of the technology can be found in the popular UFO-shaped mirror boxes that "project" a 360-degree 3D image of an object placed inside. To the user, a strawberry, stone or other solid entity seemingly floats just above an opening in the middle of the box.

While the generic mirror box relies solely on optical illusions to function, Apple's solution is a bit more complex.

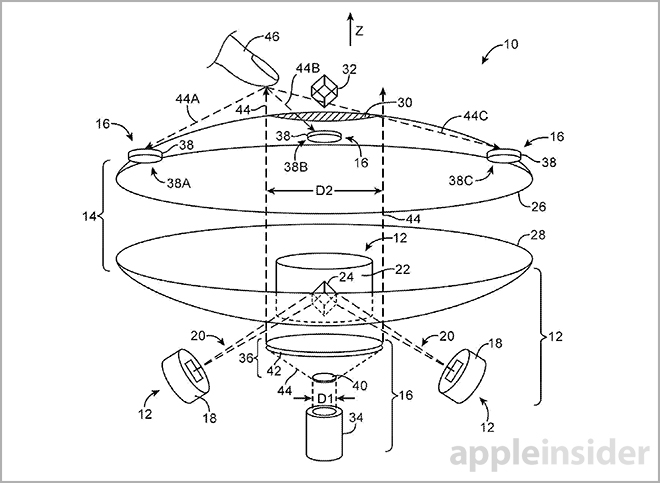

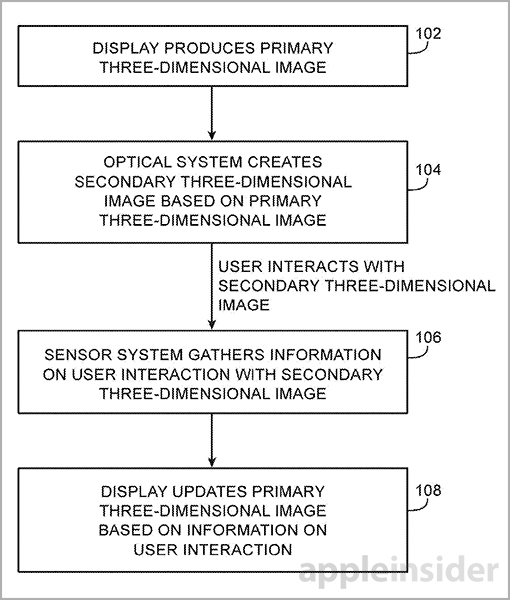

The system consists of three parts: a display system that creates a primary 3D image, an optical system that translates that primary image into a secondary 3D image in mid-air, and a sensor assembly that logs user input. Supporting components include a processing unit and control circuitry to facilitate feedback.

First, the image being projected is digital and not a reflection of a physical object. Apple describes a system in which infrared lasers, or other light emitting devices, project an image into a medium such as a non-linear crystal.

The medium itself may serve as an optical frequency up-converter for light passing through. When configured correctly, the medium can mix and up-convert infrared laser light to the visible spectrum, thus creating a primary 3D image.
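As a rough illustration of the up-conversion step, the sketch below assumes the mixing is ordinary sum-frequency generation, in which two input beams combine so that 1/λ_out = 1/λ_1 + 1/λ_2. The article does not say which process or which wavelengths Apple's filing uses, so the 1064 nm and 1550 nm inputs, the function names and the approximate 380 to 750 nm visible band are all illustrative assumptions.

```python
# Illustrative sketch only: the wavelengths and the visible-band limits
# below are assumptions, not values taken from Apple's filing.

VISIBLE_MIN_NM = 380.0  # approximate lower edge of the visible spectrum
VISIBLE_MAX_NM = 750.0  # approximate upper edge of the visible spectrum


def sum_frequency_wavelength_nm(lambda1_nm: float, lambda2_nm: float) -> float:
    """Output wavelength when two beams mix by sum-frequency generation.

    Photon energies add, so the inverse wavelengths add:
    1/lambda_out = 1/lambda1 + 1/lambda2.
    """
    if lambda1_nm <= 0 or lambda2_nm <= 0:
        raise ValueError("wavelengths must be positive")
    return 1.0 / (1.0 / lambda1_nm + 1.0 / lambda2_nm)


def is_visible(wavelength_nm: float) -> bool:
    """True if the wavelength falls inside the assumed visible band."""
    return VISIBLE_MIN_NM <= wavelength_nm <= VISIBLE_MAX_NM


if __name__ == "__main__":
    # Two hypothetical infrared inputs, both outside the visible band.
    out_nm = sum_frequency_wavelength_nm(1064.0, 1550.0)
    print(f"{out_nm:.1f} nm, visible: {is_visible(out_nm)}")
```

Under these assumptions, two infrared beams that are each invisible on their own combine into light at roughly 631 nm, which sits in the red part of the spectrum.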

According to the document, the non-linear medium is located between two parabolic mirrors, like the aforementioned mirror box. The primary image is reflected off the upper mirror to the lower mirror and ultimately out of a hole in the top mirror. As a consequence of this internal reflection, a secondary hologram-like image appears just above the upper mirror.

Next, a 3D input detection system collects and translates user motion data. To detect movement in 3D space, an infrared, ultraviolet, x-ray or other laser is coupled to a beam expander positioned in the bottom mirror assembly. The laser emits a beam that exits the top mirror and strikes a user's hand, finger or other control object. Reflected light is collected by image sensors and translated into positioning data by the onboard processor.

Beyond pinpointing the control object's location, additional sensors can be positioned to track gestures such as "pinch-to-zoom," swipes and presses. This data is fed into the feedback mechanism, which adjusts the primary 3D image accordingly.
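The feedback loop for a gesture like pinch-to-zoom can be sketched in a few lines. This is a hypothetical example, not the method described in the filing: it assumes the sensors already report two fingertip positions as (x, y, z) coordinates, and the function name `pinch_scale` is made up for illustration.

```python
import math

# Hypothetical sketch: assumes the tracking system reports two fingertip
# positions as (x, y, z) tuples for the previous and current frames.

Point3D = tuple[float, float, float]


def pinch_scale(prev_a: Point3D, prev_b: Point3D,
                curr_a: Point3D, curr_b: Point3D) -> float:
    """Zoom factor implied by how far apart two fingertips have moved.

    A result above 1.0 means the fingers spread (zoom in); below 1.0
    means they pinched together (zoom out).
    """
    prev_distance = math.dist(prev_a, prev_b)
    curr_distance = math.dist(curr_a, curr_b)
    if prev_distance == 0:
        raise ValueError("previous fingertip positions coincide")
    return curr_distance / prev_distance


if __name__ == "__main__":
    # Fingers start 1 unit apart and end 2 units apart: scale the image by 2.
    factor = pinch_scale((0, 0, 0), (1, 0, 0), (0, 0, 0), (2, 0, 0))
    print(factor)
```

In a system like the one described, a factor of this kind would be passed back to the display stage so the primary 3D image is redrawn at the new size.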

Apple's 3D image presentation and tracking system is similar to a 360-degree viewable display debuted by Microsoft Research in 2011. As seen above, the system, dubbed "Vermeer," used many of the same concepts as Apple's invention to achieve a fairly high-resolution interactive image.

Apple's interactive 3D image display patent application was first filed in 2012 and credits Christoph H. Krah and Marduke Yousefpor as its inventors.

Mikey Campbell


18 Comments

Prepare for the future release of the Apple app 'Find My Droids': [IMG]http://forums.appleinsider.com/content/type/61/id/42243/width/300/height/600[/IMG]

This patent appears to be related to the following fantastic Apple patent application published last year... http://appleinsider.com/articles/13/08/20/apple-patents-3d-gesture-ui-for-ios-based-on-proximity-sensor-input Both patent applications were filed with the USPTO in 2012.

I doubt we'll see this in any products soon, but it's a fun one.

[quote name="GTR" url="/t/178800/apples-display-tech-lets-users-interact-with-3d-objects-in-mid-air#post_2522025"]Prepare for the future release of the Apple app 'Find My Droids': [IMG]http://forums.appleinsider.com/content/type/61/id/42243/width/300/height/600[/IMG][/quote] Samsung will try to enter this as evidence of prior art at their patent trial, someday.

I'm having trouble thinking of a single consumer use case for this type of technology.