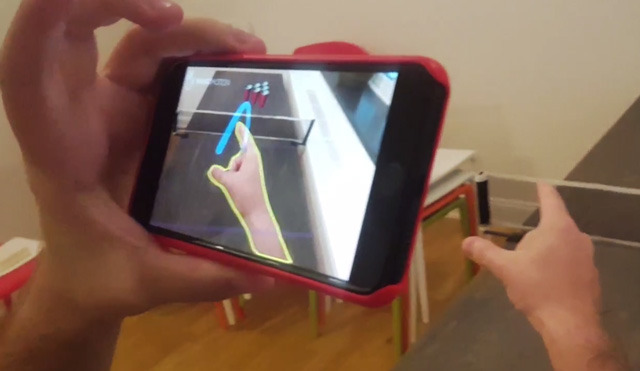

New demonstrations built with Apple's ARKit hint at some of the augmented reality platform's possibilities, including the integration of hand tracking. [Updated to correct ModiFace info]

In "ManoPong," a demonstration created by computer vision firm ManoMotion, people can play beer pong by positioning one hand in front of the camera and flicking at virtual cups on a flat surface. ManoMotion says it has paired ARKit with its 3D gesture recognition technology, which can follow motions such as swiping, tapping, and grabbing without any extra hardware.

An updated ManoMotion SDK should be available in the next few weeks. The technology will initially be limited to apps based on Unity, but broader support should come later.

Another company, ModiFace, is showcasing technology that can simulate a range of hair colors, projecting them onto a person's real-time image. The project is said to be the result of six years of work and to be compatible with a variety of hairstyles.

One of the more unusual approaches to ARKit comes from artist Zach Lieberman, who has coded a way of visualizing audio recordings in space. People can play material forwards or backwards simply by moving through the trails audio snippets leave behind.

Quick test of recording sound in space and playing back by moving through it (video has audio !) #openframeworks pic.twitter.com/GdZcK3rj1L

— zach lieberman (@zachlieberman) September 6, 2017

Commercial use of ARKit will have to wait for the arrival of iOS 11, which could happen as soon as next week following Apple's Sept. 12 press event introducing new iPhones. An AR-oriented camera should be one of the enhancements on the "iPhone 8."

Update: ModiFace says that its hair color technology does not in fact use ARKit.