Future iPads and iPhones could adapt to actually being used underwater, and a new way to sense orientation using the Face ID camera could see display rotation guided by the angle of your face in relation to the device.

It may seem as if the iPhone, iPad, and even the Mac have not changed their user interfaces in years, but in truth Apple is continually revising its software. Apple is also increasingly good at making hardware that survives underwater, and it continues to look into keeping devices usable when submerged.

These issues are revisited in two new patents. One will interest anyone who's truly wanted to operate an iOS device underwater, while the other uses face detection to solve a small but recurring annoyance.

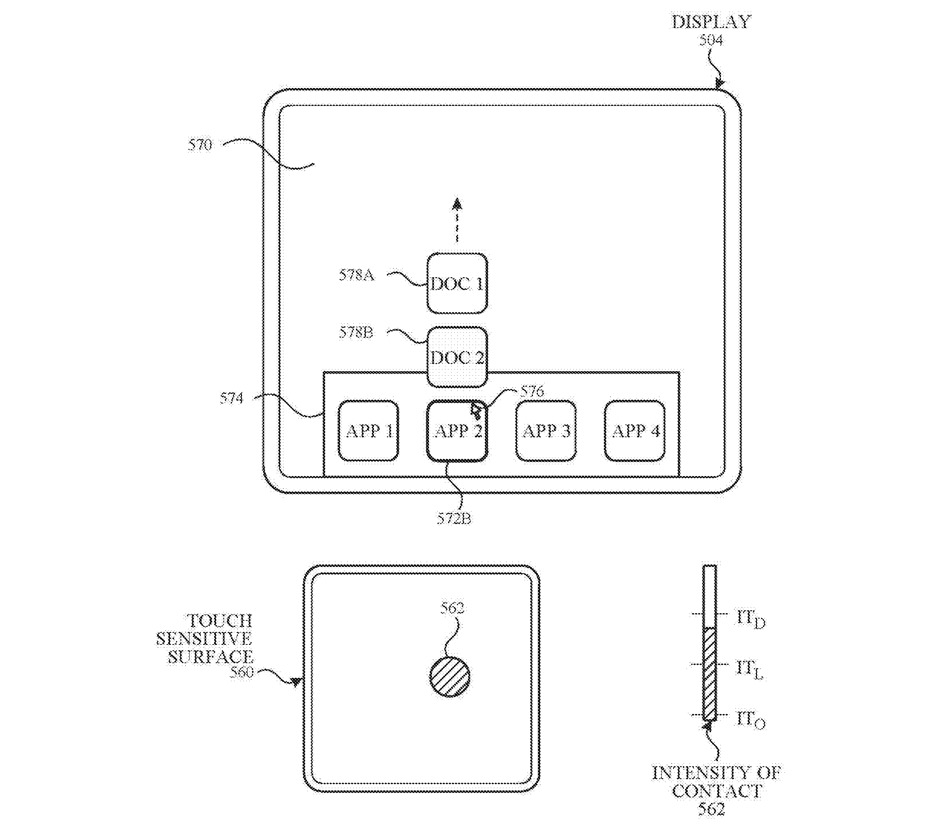

"Underwater User Interface" argues that if you're going to use your device underwater, then the controls need to be simplified while you're submerged.

That's definitely the thing to be focusing on: how simple it is to operate the iPhone or iPad when you would otherwise be concentrating on swimming to the surface.

"Current methods for displaying user interfaces while an electronic device is under water are outdated, time consuming, and inefficient," complains the patent. "For example, some existing methods use complex and time-consuming user interfaces, which may include multiple key presses or keystrokes, and may include extraneous user interfaces."

Apple reckons that when something is cumbersome, it's also time-consuming. "In addition, these methods take longer than necessary, thereby wasting energy," it says. "This latter consideration is particularly important in battery-operated devices."

A future device might alter and simplify its display controls when underwater, and also take into account the difference water makes to touch detection.

"There is a need for electronic devices that provide efficient methods and interfaces for accessing underwater user interfaces displayed on the electronic devices," continues the patent. "Such techniques can reduce the cognitive burden on a user who accesses user interfaces while the electronic device is under water, thereby enhancing productivity. Further, such techniques can reduce processor and battery power otherwise wasted on redundant user inputs."

In other words, if you're swimming for your life, you don't want to have to dismiss an "Are you sure?" dialog. However, Apple's patent is not just about how many buttons you have to press. It's also about how the device tells you that you've pressed on it.

"[The device] optionally also includes one or more tactile output generators," it says. "In some embodiments, at least one tactile output generator is collocated with, or proximate to, a touch-sensitive surface (e.g., touch-sensitive display system) and, optionally, generates a tactile output by moving the touch-sensitive surface vertically (e.g., in/out of a surface of device) or laterally (e.g., back and forth in the same plane as a surface of device)."

So the aim is that when you happen to be underwater with your device, the whole user interface will change. It can show you simpler controls, or remove steps, and it can then vibrate the device to show when you've used it.
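As a rough illustration of that idea, here is a minimal Python sketch. It is not taken from the patent: the `Control` class, its `essential` and `needs_confirmation` flags, and the `underwater_layout` function are all hypothetical names invented for this example. It assumes the device already knows whether it is submerged, and simply drops non-essential controls, skips confirmation steps, and marks each remaining control for tactile feedback.

```python
from dataclasses import dataclass


@dataclass
class Control:
    name: str
    essential: bool            # hypothetical flag: keep this control when submerged
    needs_confirmation: bool   # would normally show an "Are you sure?" step


def underwater_layout(controls, submerged):
    """Return (name, needs_confirmation, haptic) tuples for the controls to show."""
    if not submerged:
        # Normal mode: show everything, keep confirmations, no extra haptics.
        return [(c.name, c.needs_confirmation, False) for c in controls]
    # Submerged: keep only essential controls, remove confirmation steps,
    # and acknowledge each press with a tactile output instead.
    return [(c.name, False, True) for c in controls if c.essential]


controls = [
    Control("record", essential=True, needs_confirmation=False),
    Control("stop", essential=True, needs_confirmation=True),
    Control("filters", essential=False, needs_confirmation=False),
]
print(underwater_layout(controls, submerged=True))
```

In this sketch the submerged layout keeps only the "record" and "stop" controls, neither of which asks for confirmation. How Apple would actually choose which controls survive is not something this example tries to answer.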

There is much more detail, some 40,000 words of it, and the invention is credited to no fewer than 16 people. These include Richard J. Blanco, who is also listed on a related patent for "Sealed electronic connectors for electronic devices," and Xuefeng Wang, previously credited on a patent for a way that devices can detect when they're under enough strain to be damaged and then alert the user about it.

Using your face to orient the device

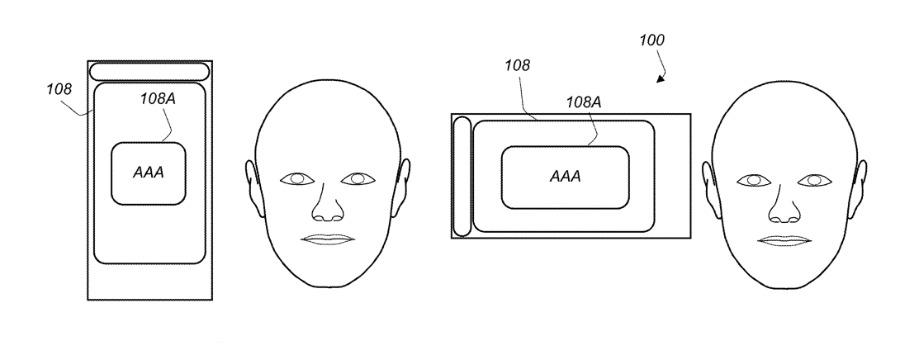

Separately, an application for "Using Face Detection to Update User Interface Orientation" is about rotating a display to the right position.

iPads have always automatically rotated their screens so that you can hold them in landscape or portrait, any way up. However, every iPad user has also had the experience of having to physically rotate the device to get it to check again after it has turned the wrong way.

"The orientation that the content is presented is often determined using accelerometers and/or other inertial sensors that determine the orientation of the device relative to gravity," says Apple's patent.

"There are, however, often situations in which these sensors cannot accurately or confidently determine the orientation of the device relative to gravity and the device will not change or update the orientation of the content to the proper orientation for the user or will update the orientation incorrectly," it continues.

The device gets confused, really, and the most common cause of the confusion is the iPad lying on a table rather than being held up by the user.

"[The] device may not be able to provide the content in a proper orientation to the user when the device is lying flat (e.g., face up on a flat surface)," says the patent. "When lying flat, even though the sensors may be accurately aware of the position of the device, the orientation relative to the user may be unknown."

It's hardly a gigantic problem, but it is annoying and Apple has clearly heard this a lot from people. "In either of these situations, the user may become frustrated with the need to reorient the device and/or move the device to get content to display in the proper orientation," it says.

The solution is to no longer rely solely on these sensors, and instead supplement them with more information. Specifically, us. The iPad Pro now has facial recognition in the form of Face ID, which we use to unlock the device.

The same feature, or rather a small part of it, could be used to determine which way around our face is in relation to the device. So it wouldn't have to actually recognize us for authentication, it would simply see which way we must be looking at the device and alter what it shows to match.

"[Orientation] data obtained from a face detection process is used to determine or update the orientation of an application user interface (e.g., text and/or content) being displayed on a display of a device," says the patent.

Future devices could detect the orientation of a user's face and use that to determine the right rotation for their displays.

This could happen whenever the user picks up or wakes the device, and it could happen during the regular Face ID process. However, it could also be automatically triggered if the iPad has sufficient doubt about whether its other sensors are correct.
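The fallback described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Apple's method: the function names, the `FLAT_THRESHOLD` value, the axis convention (negative y gravity means the top of the device points up), and the mapping of angles to quarter turns are all invented for the example. The sketch trusts the gravity reading when the device is held up, and only consults the face's roll angle when the device is lying flat.

```python
import math

FLAT_THRESHOLD = 0.9  # assumed: |z| above this share of gravity means "lying flat"


def rotation_from_gravity(gx, gy, gz):
    """Return a rotation in degrees (0, 90, 180, 270), or None if ambiguous."""
    norm = math.sqrt(gx * gx + gy * gy + gz * gz)
    if norm == 0 or abs(gz) / norm > FLAT_THRESHOLD:
        return None  # lying flat (or no reading): gravity cannot decide
    if abs(gy) >= abs(gx):
        return 0 if gy < 0 else 180   # assumed convention for portrait
    return 90 if gx < 0 else 270      # assumed convention for landscape


def rotation_from_face(face_roll_degrees):
    """Snap the detected face's roll angle to the nearest quarter turn."""
    return int(round(face_roll_degrees / 90.0)) % 4 * 90


def choose_rotation(gravity, face_roll_degrees, current):
    """Prefer gravity; fall back to the face angle; otherwise keep current."""
    from_gravity = rotation_from_gravity(*gravity)
    if from_gravity is not None:
        return from_gravity
    if face_roll_degrees is not None:
        return rotation_from_face(face_roll_degrees)
    return current


# Device flat on a table, face seen roughly a quarter turn round:
print(choose_rotation((0.0, 0.0, -1.0), 92.0, current=0))
```

Note that only the angle of the face is needed here, not the user's identity, which matches the article's point that a full Face ID scan would not be required.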

Again, this would not require a full Face ID recognition scan, though it would make more use of the existing technology. Apple's patent suggests that all of this could go further, with the device using Face ID not only to orient itself, but to change the information it displays.

Alongside all of the technical detail about implementing such a system, Apple also stringently examines the privacy issues. An iPad might be unlocked and then re-orient itself according to the face it sees, but beyond that, the user must be able to decide what else happens.

"Use of such personal information data enables calculated control of access to devices," says the patent. "[Entities providing or analyzing information] would be expected to implement and consistently apply privacy practices that are generally recognized as meeting or exceeding industry or governmental requirements for maintaining the privacy of users."

This patent is credited to Kelsey Y. Ho, Eric J. Blumberg, Benjamin Biron, and Colin C. Terndrup. Ho has previously been listed as inventor on multiple related patents, including "Facial recognition operations based on pose," and "Automatic retries for facial recognition."