A detailed investigation of the camera array built into Apple's 2020 iPad Pro shows the shooters are, like those on every iPad that came before, not up to the same standard as the current top-tier iPhone model.

With the 2020 iPad Pro, Apple for the first time built a dual-camera array into its popular tablet, mirroring capabilities first seen on iPhone 7 Plus. It also added a brand-new LiDAR Scanner to the rear "bump" for good measure.

A closer inspection of the camera setup by Halide Camera app developer Sebastiaan de With, however, reveals the iPad Pro's shooters once again appear to be inferior to the array integrated into Apple's current premium handset series, iPhone 11.

Side-by-side comparisons show photos from the 2020 iPad Pro's wide-angle lens are nearly identical to those coming out of the 2018 iPad Pro. Image processing is "a little different," but the changes are chalked up to minor software tweaks. More significant improvements, like iPhone 11's Deep Fusion computational photography, are not available because iPad Pro is powered by what is essentially the same A-series processor found in the 2018 model.

A technical readout of included hardware confirms de With's suspicions.

"If you need something to compare it to, it's the iPhone 8 camera," de With writes. "Don't expect parity with Apple's latest iPhone 11 Pro shooters, but it's still a great set of cameras."

Interestingly, iPad Pro's ultra-wide angle camera is not the same module that debuted with iPhone 11 and 11 Pro last September. Instead of an effective equivalent focal length of 13mm, the tablet's shooter provides a slightly narrower 14mm equivalent.

The sensor, too, is different. Unlike the 12-megapixel module deployed in iPhone 11, the new iPad Pro's ultra-wide uses a 10MP sensor with image output capped at 3,680 by 2,760 pixels. As de With notes, the sensor represents the least appreciable resolution bump in a "new" camera since the 8MP iPhone 6.
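For readers who want to check the arithmetic, the quoted output dimensions can be turned into a megapixel count and an aspect ratio. The following is a minimal sketch in Python; the constant and function names are made up for illustration, and only the two pixel dimensions come from the article.

```python
from fractions import Fraction

# Output dimensions quoted above for the 2020 iPad Pro's ultra-wide camera.
WIDTH_PX = 3680
HEIGHT_PX = 2760


def megapixels(width: int, height: int) -> float:
    """Return the total pixel count in millions of pixels."""
    return width * height / 1_000_000


# Total resolution implied by the maximum image size.
ipad_ultrawide_mp = megapixels(WIDTH_PX, HEIGHT_PX)

# Aspect ratio reduced to lowest terms (hypothetical helper usage).
aspect_ratio = Fraction(WIDTH_PX, HEIGHT_PX)

print(f"{ipad_ultrawide_mp:.2f} MP")
print(f"{aspect_ratio.numerator}:{aspect_ratio.denominator}")
```

The product works out to a little over 10 million pixels in a 4:3 frame, consistent with the "10MP" label.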

That said, the smaller sensor allows for a slightly shorter minimum exposure time of 1/62,000 second, compared to 1/45,000 second on iPhone 11. Minimum and maximum ISO values also differ, at ISO 19 and ISO 1824, respectively, compared to ISO 21 and ISO 2016.
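To put those figures in photographic terms, the differences can be expressed in stops, where one stop is a doubling. This is a rough sketch that assumes the first value in each pair belongs to the iPad Pro and the second to iPhone 11, as the sentence order suggests; the variable and function names are illustrative.

```python
import math


def stops_between(larger: float, smaller: float) -> float:
    """Return the difference between two values in stops (base-2 log of the ratio)."""
    return math.log2(larger / smaller)


# Fastest shutter speeds, written as the denominator of 1/x second.
IPAD_FASTEST_SHUTTER = 62_000    # assumed: 2020 iPad Pro ultra-wide
IPHONE_FASTEST_SHUTTER = 45_000  # assumed: iPhone 11 ultra-wide

# ISO limits as (minimum, maximum).
IPAD_ISO = (19, 1824)
IPHONE_ISO = (21, 2016)

# How much shorter the iPad's minimum exposure is, in stops.
shutter_gap_stops = stops_between(IPAD_FASTEST_SHUTTER, IPHONE_FASTEST_SHUTTER)

# Width of each device's ISO range, in stops.
ipad_iso_range_stops = stops_between(IPAD_ISO[1], IPAD_ISO[0])
iphone_iso_range_stops = stops_between(IPHONE_ISO[1], IPHONE_ISO[0])

print(f"Shutter gap: {shutter_gap_stops:.2f} stops")
print(f"iPad ISO range: {ipad_iso_range_stops:.2f} stops")
print(f"iPhone ISO range: {iphone_iso_range_stops:.2f} stops")
```

Under these assumptions the shutter difference is a little under half a stop, and both ISO ranges span the same ratio (96 to 1), so the iPad's range is simply shifted slightly lower.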

Photo from iPhone 11 Pro (left) compared to the same scene captured by 2020 iPad Pro (right). | Source: Sebastiaan de With

As for the new LiDAR Scanner, de With, like others, notes the sensor's resolution is likely inadequate for photo enhancement applications, at least at this time. Data gleaned from the system is good for resolving larger objects at room scale, but likely not detailed enough to augment existing technologies like Portrait Mode, for example.

"The only reason we won't say it could never support portrait mode is that machine learning is amazing," de With writes. "The depth data on the iPhone XR is very rough, but combined with a neural network, it's good enough to power portrait mode. But if portrait mode were a priority, we'd put our money on Apple using the dual-cameras."

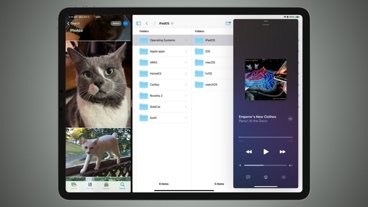

Halide built a proof-of-concept called Esper to illustrate the LiDAR Scanner's potential as a tool for creating augmented reality environments, which according to Apple is the module's main purpose. The results are worth checking out.