Apple's introduction of CSAM tools may be due to its high level of privacy, with messages submitted during the Epic Games App Store trial revealing that Apple's anti-fraud chief thought its services were the "greatest platform for distributing" the material.

Apple has a major focus on ensuring consumer privacy, which it builds as far as possible into its products and services. However, it seems that focus may have had some unintended consequences by enabling some illegal user behavior.

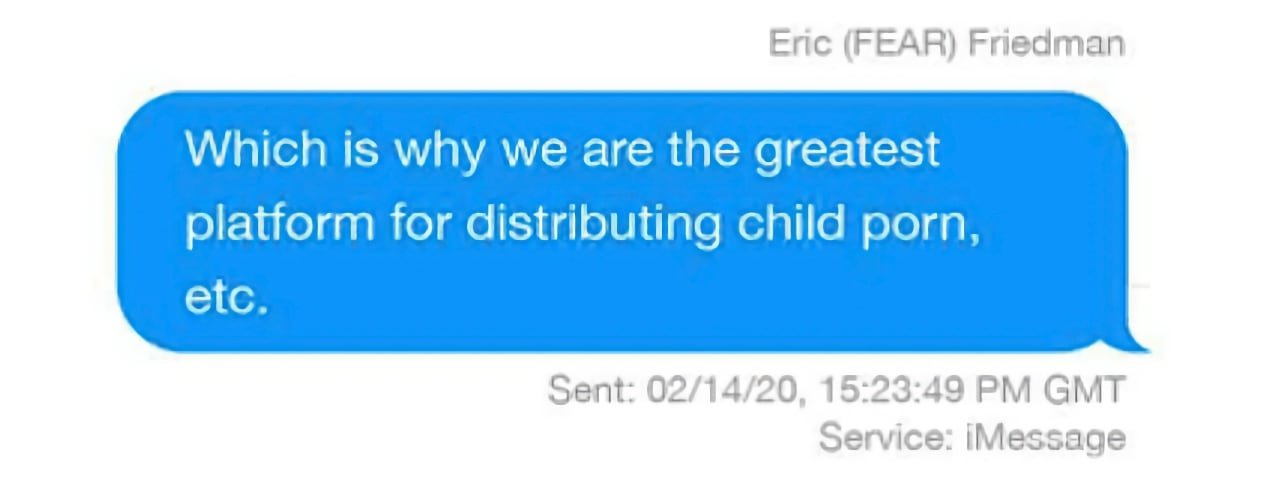

Found in documents submitted during the Apple-Epic Games trial and spotted by The Verge, an iMessage thread from 2020 involving Apple Fraud Engineering Algorithms and Risk head Eric Friedman seems to hint at the reasons behind Apple's introduction of CSAM tools.

In the thread about privacy, Friedman points out that Facebook's priorities differ greatly from Apple's, which has some unintended effects. While Facebook works on "trust and safety" in dealing with fake accounts and other elements, Friedman offers the assessment, "In privacy, they suck."

"Our properties are the inverse," states the chief, before claiming "Which is why we are the greatest platform for distributing child porn, etc."

When it is suggested that there are more opportunities for bad actors on other file-sharing systems, Friedman responds, "we have chosen to not know enough in places where we really cannot say." The fraud head then references a graph from the New York Times depicting how firms are combatting the issue, but suggests "I think it's an underreport."

Friedman also shares a slide from a presentation he was going to make on trust and safety, with "child predator grooming reports" listed as an issue for the App Store. It is also deemed an "active threat," as regulators are "on our case" on the matter.

While the introduction of CSAM tools can't be directly attributed to these messages, they do indicate that Apple has considered the problem for some time and is keenly aware of the shortcomings that led to the situation.

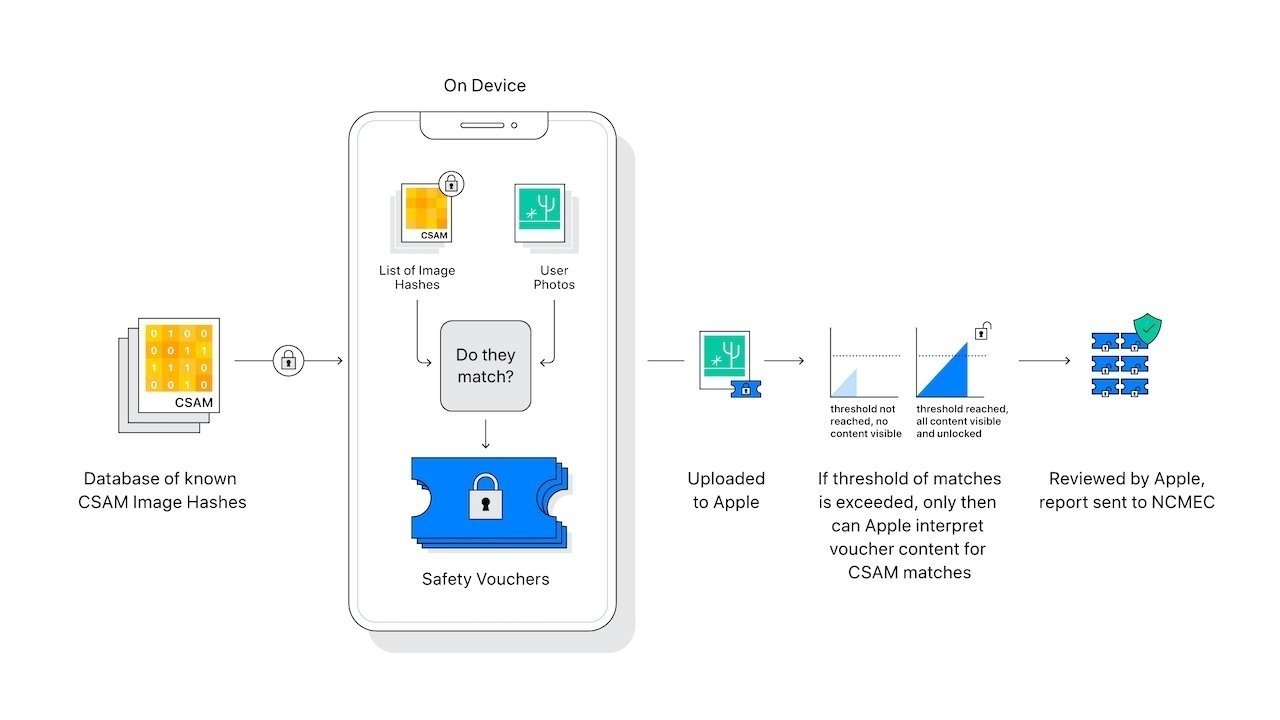

Despite its efforts on the matter, Apple has received considerable pushback over privacy. Critics and government bodies have asked the company to reconsider, citing concerns that the systems could lead to widespread surveillance.

Apple, meanwhile, has taken steps to de-escalate arguments, including detailing how the systems work and emphasizing its attempts to maintain privacy.