TV versus Monitor: The pros and cons of using each with your Mac

Televisions and computer monitors are relatively similar in how they function and what they do, but they're not really interchangeable products. This is why monitors and TVs should be used for different purposes.

At face value, there are a lot of similarities between your typical television and a computer monitor. At their core, both take a video signal and display it to one or more viewers.

There's a lot of crossover between the two devices as well, with the almost ubiquitous use of HDMI meaning you could plug most things with a video output into either a TV or a monitor.

Then there are other similarities, such as the plethora of high-resolution screens and the ability to play sound and attach multiple inputs to one screen.

All that is before you consider that a monitor is generally much more expensive than a television, even a larger one.

The thing is, while the two may use somewhat similar technologies at a basic level, they are made for entirely different purposes.

A butter knife and a chainsaw can both cut things. However, you're not going to chop down a tree with cutlery nor use a lumbering tool to cut some cheese.

As tempting as it is, you may not necessarily get that much joy from buying an 80-inch 4K TV to use with a Mac mini. There's not much technically stopping you from doing so, but it will not be quite right.

They're similar but simultaneously different. This is what you need to bear in mind about using one over the other.

Size and neck strain

The most obvious difference between the two products is the physical size of the screen. Ignoring any stands or mounts, both a monitor and a TV are large display panels, but televisions are typically bigger.

Ignoring things like resolution for the moment, consider the practicalities of using a monitor versus a television.

When watching TV, you're looking at a large screen a few feet away from your eyes. You can easily see the edges in your peripheral vision and the entire picture at once.

On the other hand, a monitor typically sits on a desk directly in front of the user, between one and two feet away from their eyes. Again, every part of the display is visible at once, with users able to flit from one corner to the other with minimal effort.

To use a large-screen television on a desk, the user must turn their head to see all screen areas. Or, they will have to use a much deeper desk or mount the TV away from their desk to get the same all-screen coverage without moving their head.
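To put rough numbers on that head-turning, here is a minimal sketch of how wide a screen appears from a given distance. It assumes a flat 16:9 panel viewed from directly in front of its center. The function name and the example distances (24 inches for a desk, 96 inches for a living room) are illustrative assumptions, not figures from any standard.

```python
import math

def horizontal_viewing_angle(diagonal_in, distance_in, aspect=(16, 9)):
    """Angle, in degrees, that the screen's width spans at the viewer's eye.

    Assumes a flat panel viewed head-on from its center.
    """
    aspect_w, aspect_h = aspect
    # Convert the diagonal size into a physical width using the aspect ratio.
    width_in = diagonal_in * aspect_w / math.hypot(aspect_w, aspect_h)
    # Half the width and the viewing distance form a right triangle.
    return math.degrees(2 * math.atan((width_in / 2) / distance_in))

# Hypothetical setups: desk distance vs. living-room distance.
setups = [
    ("27-inch monitor on a desk", 27, 24),
    ("80-inch TV on a desk", 80, 24),
    ("80-inch TV across the room", 80, 96),
]
for label, diagonal, distance in setups:
    angle = horizontal_viewing_angle(diagonal, distance)
    print(f"{label}: about {angle:.0f} degrees wide")
```

Under these assumptions, the 80-inch screen at desk distance spans roughly twice the angle of the 27-inch monitor, while the same TV seen from across the room takes up less of your view than the monitor does on the desk.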

That's not to say that an ambitious computer user can't take advantage of ample screen space beyond the typical 27- to 32-inch monitor size. You could buy monitors up to and beyond 50 inches in size if your wallet could take it, putting them in the same physical realm as many off-the-shelf televisions you can acquire at supermarkets.

Users may get the same effect by using multiple screens rather than just one, but you get the idea.

Yes, you could get an 80-inch television and put it on your desk, but your neck won't be happy.

Resolution variety

Another difference is in how the two deal with resolution. Both have various options, and higher-resolution screens can show images meant for lower-resolution models, but there are variations in how they are handled.

Discounting standard definition televisions and older monitors, you're generally going to see the same set of resolutions being bandied about for televisions. A 1080p (1,920x1,080) television is the new "standard" resolution, while 4K or UHD (3,840x2,160) is the popular version everyone is slowly migrating towards owning.

There are 8K-resolution televisions, but unless you're willing to pay a premium for the set and for content you can actually view at that resolution, it's not worth it at this time.

Again, computer monitors tend to follow their television counterparts in that they have 1080p and 4K screens, but there are many more variations out there.

For example, QHD, often marketed as 2K, is 2,560x1,440 pixels, which slots in as a middle ground between 1080p and 4K if the computer user thinks the latter is too high a resolution for their uses.

But then, there are more exotic resolutions at play. For example, Samsung's Odyssey Gaming Monitor lineup includes one model with a "Dual-QHD" resolution of 5,120x1,440, an expansive display equating to two QHD displays side-by-side on the desk.

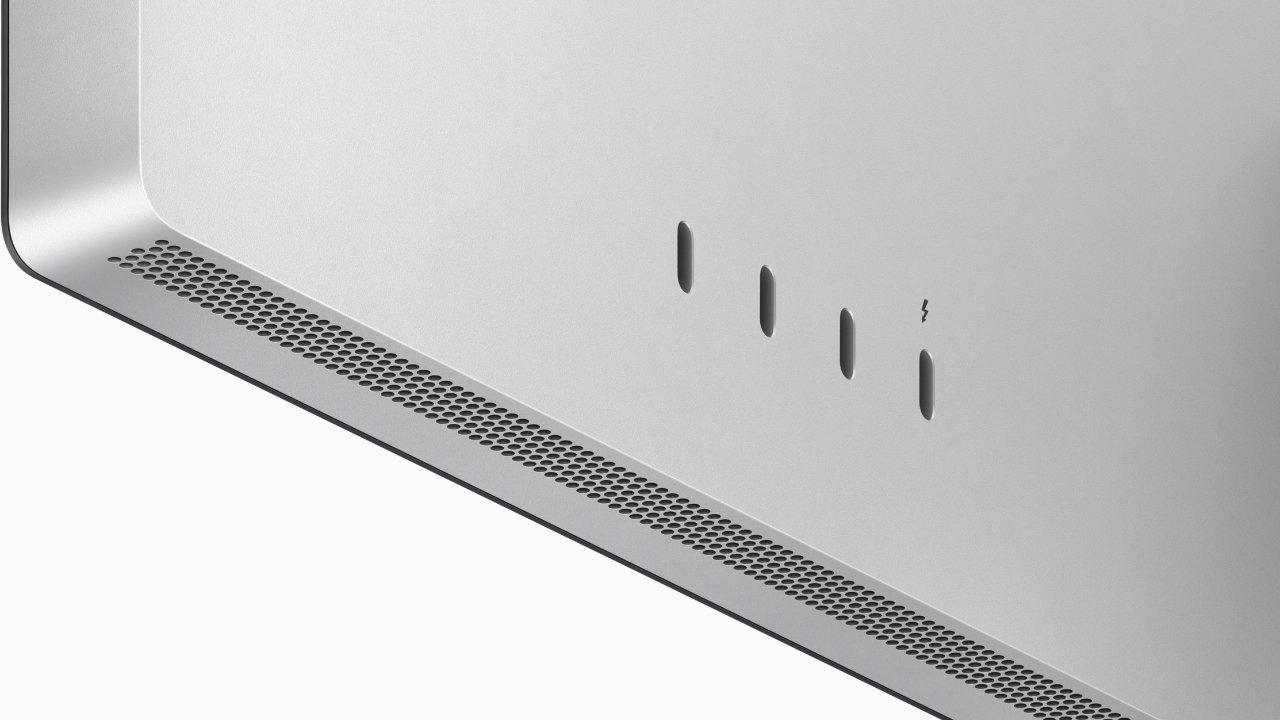
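To see how these resolutions relate to each other, a quick sketch can total up the pixels in each. The dictionary and function names are illustrative, and the 4K entry uses the standard UHD figure of 3,840x2,160.

```python
# Width and height in pixels for the resolutions discussed above.
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "QHD": (2560, 1440),
    "Dual QHD": (5120, 1440),
    "4K UHD": (3840, 2160),
}

def pixel_count(width, height):
    """Total number of pixels on a panel of the given resolution."""
    return width * height

baseline = pixel_count(*RESOLUTIONS["1080p"])
for name, (width, height) in RESOLUTIONS.items():
    total = pixel_count(width, height)
    print(f"{name}: {width}x{height} = {total:,} pixels "
          f"({total / baseline:.2f}x 1080p)")
```

The arithmetic confirms that 4K has exactly four times the pixels of 1080p, that QHD sits between the two, and that Dual QHD is exactly two QHD panels' worth of pixels.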

You've also got Apple's offering of things like the 5K Studio Display and the 6K Pro Display XDR.

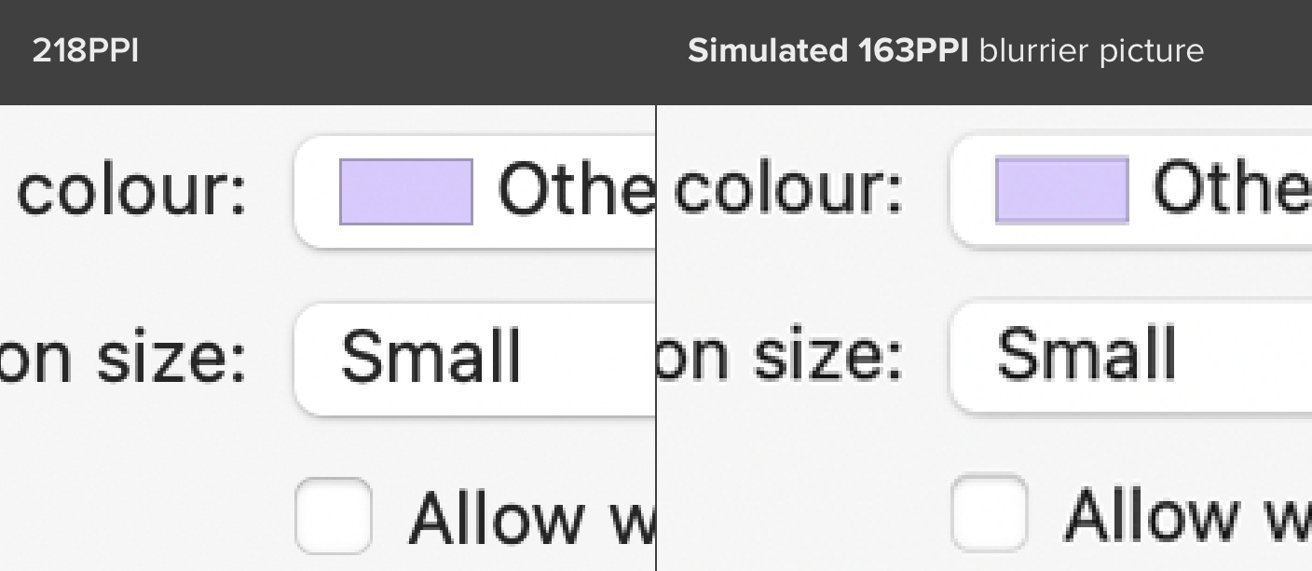

Pixel Density

If you combine size and resolution, you then enter the realm of pixel density. This topic is all about how many pixels you can fit into a given space, usually measured along one inch of the screen.

You calculate the pixel density by using the Pythagorean Theorem to get the number of pixels the display has on the diagonal. Then you divide that diagonal pixel count by the diagonal size in inches. This yields the pixels per inch, or PPI.

Typically speaking, the more pixels per inch, or the higher the pixel density, the better. More pixels means the image displayed will be crisper and more detailed than a lower-resolution display of a similar physical size.

A 32-inch display with a 1080p resolution will have a far lower pixel density than a 32-inch display with a 4K resolution. Likewise, a 32-inch 1080p display will have a lower density than a 27-inch display of the same resolution.
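The calculation is short enough to sketch in a few lines. This is a minimal illustration that treats the advertised diagonal as exact, which real panels only approximate. The function name is made up for the example, and the Studio Display's 5,120x2,880 resolution is Apple's published figure rather than something stated in this article.

```python
import math

def pixels_per_inch(width_px, height_px, diagonal_in):
    """Pixel density: diagonal length in pixels divided by diagonal in inches."""
    # Pythagorean Theorem gives the pixel count along the diagonal.
    diagonal_px = math.hypot(width_px, height_px)
    return diagonal_px / diagonal_in

examples = [
    ("32-inch 1080p", 1920, 1080, 32),
    ("32-inch 4K", 3840, 2160, 32),
    ("27-inch 1080p", 1920, 1080, 27),
    ("80-inch 4K TV", 3840, 2160, 80),
    ("27-inch 5K Studio Display", 5120, 2880, 27),
]
for label, width, height, diagonal in examples:
    ppi = pixels_per_inch(width, height, diagonal)
    print(f"{label}: {ppi:.0f} PPI")
```

Run as written, the 32-inch 1080p panel lands at about 69 PPI against about 138 PPI for the 32-inch 4K one, and the 27-inch 1080p panel at about 82 PPI. The 80-inch 4K TV comes out near 55 PPI, well below any of the monitors.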

With that in mind, televisions are usually bigger than monitors and can be bought with similar resolutions, so a monitor will tend to have a higher pixel density than a TV.

At its most extreme, the previously mentioned 80-inch television on a desk would have a very low pixel density. Since you're also very close to the screen, you will see those pixels close up.

Apple's displays usually have quite high pixel densities. Both the Studio Display and the Pro Display XDR have pixel densities of 218 PPI.

Display Technologies

The technology a screen uses to show the image is an important factor, and one that certainly impacts the price.

There are quite a few marketing terms out there, but generally, the technologies in use boil down to whether it is an OLED screen or LCD, and for the latter, the kind of backlighting it uses.

OLED is a reasonably expensive display technology, with self-illuminating pixels allowing for a bright image to be produced.

LCD is a very mature technology and cheaper to produce, and it relies on a backlight from LEDs to shine through pixels to create an image. Standard LCD screens aren't as bright as OLED but can be much cheaper to buy in comparison.

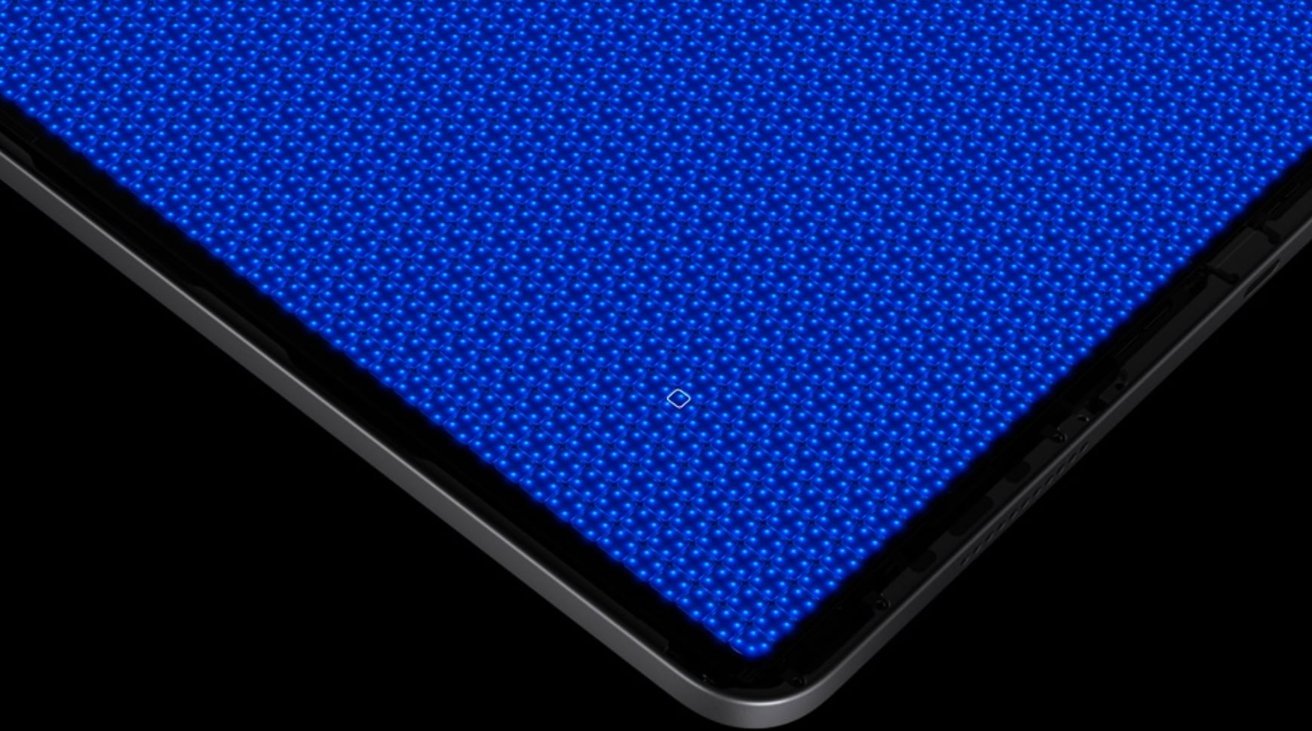

However, mini LED backlighting is starting to become an important new technology, as it uses thousands of miniature LEDs across the display for illumination. This can enable higher contrast levels for an image and a more vibrant picture, much like OLED.

Of the technologies, OLED has become the premium option for televisions. It has rarely been used in monitors, simply because it adds an extra cost to a product that tends to be more expensive than a television.

Mini LED is only just becoming an important technology, with it being used by Apple in its iPad Pro lineup as well as its 14-inch MacBook Pro and 16-inch MacBook Pro. While it is more expensive than LCD, it does offer a much better picture without costing as much as OLED.

As OLED becomes more prevalent in the TV market, so will mini LED in monitors. For now, conventional LED-backlit LCD continues to be the main technology in use across the board.

This also plays into HDR, as both monitors and TVs are marketed as being able to display the content. OLED and mini LED screens will be able to show it all better, mainly because they can produce a brighter and higher contrast image.

Refreshing and Responsiveness

One area in which monitors have a distinct advantage over televisions is in dealing with changing pixels. There are two elements to this: the response time and refresh rate.

The response time relates to how long it takes for a pixel to change color, such as from black to white. Refresh rate deals with how many times a display will update per second.

On response times, monitor manufacturers tend to offer the statistic in specifications for their products, whereas television producers don't usually bother for most models.

The type of panel used can dictate the response times, with TN panels traditionally being the fastest, followed by IPS, then lastly VA.

As a general rule, monitors will be better at pixel response times than televisions of comparable size.

Refresh rate is another element that monitors dominate. Televisions generally have a refresh rate of 60Hz, rising to 120Hz in some cases.

Meanwhile, gaming monitors can reach far higher values, such as one from Alienware that can hit 360Hz.
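One way to make those refresh rates more tangible is to convert them into the time each frame stays on screen. The sketch below does only that arithmetic, with an illustrative function name. It says nothing about pixel response time, which is a separate specification.

```python
def frame_interval_ms(refresh_hz):
    """Time between screen updates, in milliseconds, at a given refresh rate."""
    return 1000.0 / refresh_hz

# Refresh rates mentioned above: typical TV, faster TV, high-end gaming monitor.
for refresh_hz in (60, 120, 360):
    print(f"{refresh_hz}Hz: a new frame every {frame_interval_ms(refresh_hz):.2f} ms")
```

At 60Hz, the display updates roughly every 16.7 milliseconds, while a 360Hz panel updates in under 3 milliseconds.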

Refresh rate and responsiveness aren't that useful for TV watchers as they don't impact a good long Netflix session. Avid gamers may be more concerned about these elements.

Connectivity and Features

At the most basic, a TV and a monitor work the same way: plug something into the back, and you'll see the image. One thing to consider is how that takes place.

Both monitors and TVs offer a selection of ports and connections for video sources. TVs usually provide more consumer device-friendly options, such as HDMI, while monitors generally have fewer and more computer-centric options.

For example, you're probably not going to see a Thunderbolt port on a TV. Likewise, you'll never see a European SCART socket on a monitor.

In some cases, monitors can offer a minimal set of connections. For example, Apple's Studio Display has just one Thunderbolt 3 port and three USB-C ports, with the Thunderbolt used to handle video and data from a Mac.

In the past, televisions could accept multiple inputs and switch between them more easily than monitors could. Modern monitors can do the same trick and, in some cases, offer advanced TV-like features such as picture-in-picture.

Modern televisions, especially smart TVs, also provide something that monitors generally do not: the ability to show content without using an external source. This includes taking a signal from an aerial and using built-in tuners.

A smart TV also includes the brains of a set-top streaming box, accessing the Internet directly. For a living room, eliminating extra set-top boxes can be advantageous in reducing cables and clutter.

A monitor generally has to rely on an external source for video, like a connected Mac.

Then there's control of the display itself. While TVs and monitors usually have onboard buttons, televisions also have a remote control.

Lastly, there's audio. TVs have larger speakers designed to be heard from across a room, so they can generally produce better sound.

By contrast, the speakers of a typical monitor are generally quiet and unimpressive. Using other speakers or headphones connected to the computer is usually recommended.

Compromises can be made

While it is generally a bad idea to save money on a monitor by buying a TV instead, there's nothing stopping anyone from doing so. This is especially true if the circumstances dictate what you need to buy.

If you don't care about refresh rate, pixel density, or any of the computer-specific features of a monitor, you may be able to save a good sum by opting for a TV. And there's nothing wrong with that.

For those that want or need a very large display, well beyond 32 inches, a TV may be the only affordable option. Conference rooms, offices, and other places may benefit from this larger display, especially if used as a secondary monitor.

There are also new options that fit a middle ground, working as both a TV and as a monitor equally. Samsung's Smart Monitor M8 combines the utility of a 32-inch 4K computer monitor with the benefits of a smart TV.

With careful planning, you could have the best of both worlds, with a TV that's good enough to be a monitor or vice versa. You just may have to compromise.

Malcolm Owen

14 Comments

Good article.

You’re forgetting that in many countries, you have to pay an annual license fee to the government to own and use a TV…

I dumped using a TV years ago. I have a Philips 40” 4K monitor which is excellent for working from home and is pretty good for watching movies etc. on. And in the 7 years I’ve been using it, the saving in TV license fees more than covered the original purchase.

A monitor will obviously get you a better picture but I’m perfectly happy with my 40” TV that I’m using with my Mac mini. I’ve got it set up in the living room and I have a TV tray and folding chair sitting right in front of the TV. I very easily switch back and forth from TV to computer. Maybe if I had a family I would have TV and computer be two separate things but it’s working out for me right now. I also have my printer sitting on an end table right in the living room. It might seem odd to most people but it works well for me.

I’ve been using a 32” 4K UHD QLED Samsung TV as a PC monitor for the past 3 years and everything is fabulous! I do not see that much difference in picture quality.

I’m using my 65” wall mounted 4K ATYME TV ( $470. @ FRY’S) with my M1 Mac mini, wireless keyboard and trackpad & Asus Vivo PC wired keyboard & mouse in living room. In bedroom, 32” 1080p Vizio TV with old iMac white wired keyboard as mouse and have no gripes with resolution!