The U.S. Patent and Trademark Office on Thursday published an Apple patent application that takes the company's existing mapping apps and extends them to new levels of interactivity with data-rich layered viewing modes.

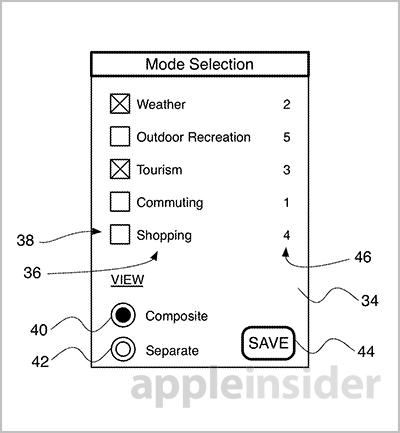

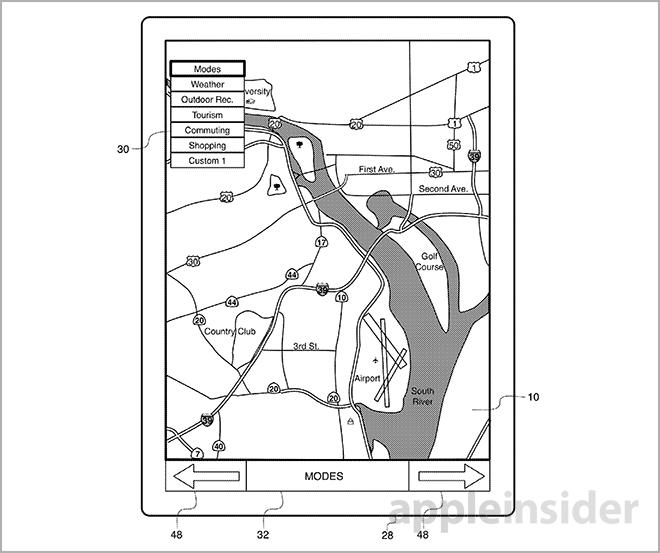

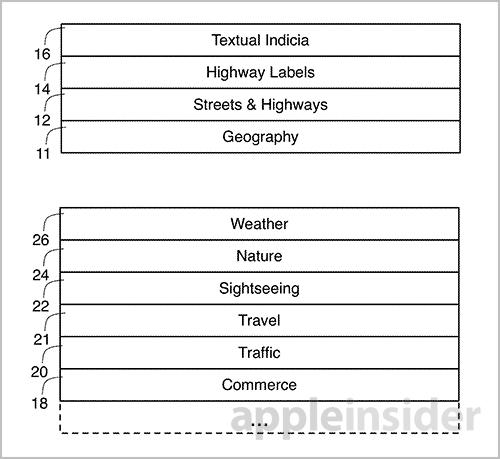

Apple's "Interactive Map" patent filing details a mapping program that enables users to dynamically adjust and view different "layers" of content pulled from the Internet. Examples include commuting, tourism and weather map layers, among others.

According to the document, the map would be able to emphasize any surrounding features of a selected point of interest, which in many cases will be a user's current position. The filing gives the example of a user who is viewing a weather-centric layer and sees a storm approaching. With Apple's invention, the person can quickly switch to a different viewing mode to locate a shopping mall or other structure relatively close to their position.

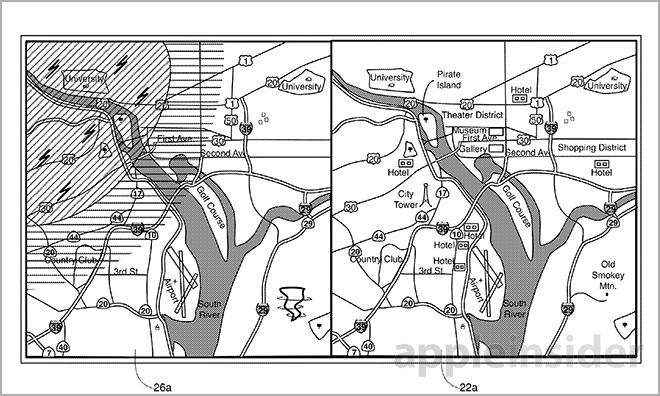

This secondary view can include a combination of layers that overlap, much like the current Hybrid view in Apple Maps. In one example, a basic graphical user interface may be provided to search for map features like highways and buildings. A user-selectable filter list may also be supplied that can drill down into the received data for a more refined experience.

Importantly, feature searches are connected to the layer or layers a user is currently viewing. Users can type in keywords like "food" to bring up nearby restaurants. While the current Maps app includes this functionality to a certain degree, the patent application goes further by determining which mapping mode a user is viewing and presenting results based on that information. For example, if a user were to be in hiking mode, the results for "food" would show camping supply stores, while the same search in tourist mode would display cafes or fine dining.
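The filing does not spell out how such mode-dependent results would be computed, but the idea can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the `MODE_CATEGORIES` table, the `search` function and the sample places are hypothetical and are not drawn from Apple's application or any Apple API.

```python
# Hypothetical lookup: (viewing mode, keyword) -> place categories to show.
# None of these names come from the patent filing; they only illustrate the idea.
MODE_CATEGORIES = {
    ("hiking", "food"): {"camping supply store"},
    ("tourist", "food"): {"cafe", "fine dining"},
}

# Fallback used when the active mode has no special meaning for a keyword.
DEFAULT_CATEGORIES = {"food": {"restaurant"}}


def search(keyword, mode, places):
    """Return names of places whose category matches the keyword in this mode."""
    categories = MODE_CATEGORIES.get(
        (mode, keyword), DEFAULT_CATEGORIES.get(keyword, set())
    )
    return [p["name"] for p in places if p["category"] in categories]


# Made-up sample data for the usage example.
PLACES = [
    {"name": "Trailhead Outfitters", "category": "camping supply store"},
    {"name": "Cafe Luna", "category": "cafe"},
    {"name": "Chez Nous", "category": "fine dining"},
    {"name": "Burger Stop", "category": "restaurant"},
]

hiking_results = search("food", "hiking", PLACES)
tourist_results = search("food", "tourist", PLACES)
```

In this sketch the same keyword yields different results purely because the active mode selects a different category set, which is the behavior the filing describes.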

Perhaps the most intriguing idea described in the document is the ability to pull data about specific features or landmarks in real time. In one scenario, a user can click or touch a city name to display current census data. Another embodiment will give detailed information about a certain highway.

Further, a user can touch two points on the map to create a route. Currently, the iOS and OS X Maps apps support route creation through the use of addressed or dropped pins. The proposed touch UI automatically generates route data like distance, then recommends which route to select based on a form of artificial situational awareness. If a person is traveling in one direction and searches for a hotel, the app would return only those establishments located in the general direction they are moving.
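One way to picture the direction-aware hotel search is to compare the compass bearing from the user to each result against the user's current heading. The sketch below is only an illustration under that assumption: the filing does not describe this method, and the function names, the 45-degree tolerance and the sample coordinates are all hypothetical.

```python
import math


def bearing_deg(lat1, lon1, lat2, lon2):
    """Initial great-circle bearing from point 1 to point 2, in degrees [0, 360)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dlon = math.radians(lon2 - lon1)
    y = math.sin(dlon) * math.cos(phi2)
    x = math.cos(phi1) * math.sin(phi2) - math.sin(phi1) * math.cos(phi2) * math.cos(dlon)
    return math.degrees(math.atan2(y, x)) % 360


def ahead_of_user(user, heading_deg, places, tolerance_deg=45):
    """Keep places whose bearing is within tolerance_deg of the user's heading.

    The tolerance is an assumed value, not something taken from the filing.
    """
    results = []
    for place in places:
        b = bearing_deg(user[0], user[1], place["lat"], place["lon"])
        # Smallest absolute angle between the bearing and the heading.
        diff = abs((b - heading_deg + 180) % 360 - 180)
        if diff <= tolerance_deg:
            results.append(place["name"])
    return results


# Made-up sample data: the user is at (0, 0) and heading due north.
HOTELS = [
    {"name": "North Inn", "lat": 1.0, "lon": 0.0},
    {"name": "South Lodge", "lat": -1.0, "lon": 0.0},
    {"name": "East Hotel", "lat": 0.0, "lon": 1.0},
]

hotels_ahead = ahead_of_user((0.0, 0.0), 0.0, HOTELS)
```

With a northbound heading, only the hotel to the north survives the filter; the ones behind or off to the side are dropped.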

Another interesting aspect is the implementation of geospatial applications that can be used to provide relevant data for a given route. In practice, the system would work similarly to iOS geofencing capabilities. For example, if a user is in tourist mode, the map app can provide contextual information about the history of a location. Users would receive the data via on-screen text or audible cues.

Alternatively, when in shopping mode, the app may be able to use a device's location to provide ads or specials from a nearby store. This particular implementation fits well with Apple's recent rollout of iBeacon, a Bluetooth-based location system that can transmit pertinent product data to the customer while providing an operator with statistical feedback on consumer habits.

Pins are also mentioned in Thursday's application, though their utility is much more robust than in existing applications. For example, before a pin is placed, a magnifying loupe will appear, showing surrounding street names, places of interest and buildings for precise dropping.

In yet another view, temporal statistics may be viewed, such as historical housing sales data. Other, more detailed implementations are described in the lengthy filing, as well as a technical overview of how the invention may be incorporated into Apple's present data infrastructure.

It is unclear if Apple is merely investigating the above mapping features, or is actively building software toward a fully functional layered system, but much of the filing's groundwork has already been laid with the existing Maps app. The power of the layered view, however, depends on external assets that are not currently in place. While Apple provides a basic structure that third-party developers and content creators can use to add content, deals have to be made and contracts need to be signed.

If Apple can bring a rich data set together, its mapping invention could potentially outperform any consumer-facing solution currently in service.

Apple's interactive map patent application was first filed in 2012 and credits Christopher Blumenberg, Jaron I. Waldman, Marcel van Os and Richard J. Williamson as its inventors.

Mikey Campbell

13 Comments

This requires a patent? Sounds like extensions of what is already there. I just wish I could modify the suggested routes Maps gives by dragging part of the route.

Introducing "iMaps"!

> I just wish I could modify the suggested routes Maps gives by dragging part of the route.

that's patented

This sounds interesting, but for Apple's Maps to be a serious contender, it first needs to be able to plan a route to more than one location at a time. Without this, it will be bypassed by many.

> Ploth: This sounds interesting, but for Apple's Maps to be a serious contender, it first needs to be able to plan a route to more than one location at a time. Without this, it will be bypassed by many.

OT, but I'd also like it to be able to detect the direction I'm traveling so it doesn't offer stops for fuel and food after I've already passed them. Basically I want everything that came in my TomTom app for the iPhone years ago. I'd also like a web interface while we're at it.