Apple's subtly flattering new FaceTime feature in iOS 13 beta 3 corrects the appearance of your attention so that you appear focused on your caller — as if perfectly staring at the camera — even when you're looking at the screen. The magic behind it has incrementally developed across years of evolving software and hardware advancements, offering some interesting insight into how Apple uniquely charts out the future with its products.

Attention Correction in FaceTime

Discussing the "Attention Correction" feature, developer Mike Rundle tweeted, "This is insane. This is some next-century sh*t." Users on Reddit howled "Absolutely incredible" and "the future is now!"

But rather than being showcased as a "revolutionary" advance in video chat that will require everyone to buy a new device, Apple's new FaceTime feature builds on a series of technologies the company has incrementally rolled out over the past several years.

The feature currently works in iOS 13 betas on A12 Bionic iPhone XS models and the latest iPad Pro with A12X Bionic. Because the image adjustment occurs locally on your device, your gaze will appear more naturally focused to whoever is on the other end, regardless of what iOS version, A-series chip, or FaceTime-capable Mac the recipient is using.

It's the perfect example of a practical application that leverages many of the various technologies Apple has introduced over the past few years. And it highlights how all of the OS, app, silicon and advanced sensor work that Apple is performing — and uniquely deploying globally at incredible scale — is so different from commodity phones that knock off the basic outlines of Apple's designs without duplicating the core of its innovations.

Evolutionary, not revolutionary

It's common for tech writers to make a big deal about specific new features that can be easily copied by rivals. But much of the advanced work Apple is doing in imaging and elsewhere is not just a series of one-off features. Instead, it is built on top of a set of expanding frameworks and foundational technologies, which leads to an increasingly rapid pace of development.

It also allows Apple to share its technologies with third-party developers in the form of public APIs (Application Programming Interfaces). This leverages the coding talent outside of Apple to find even more uses for its foundational technologies, including Depth imaging, Machine Learning and Augmented Reality. A big part of Apple's annual Worldwide Developers Conference involves introducing developers to new and advanced tools Apple has created for building powerful third-party software for its platforms.

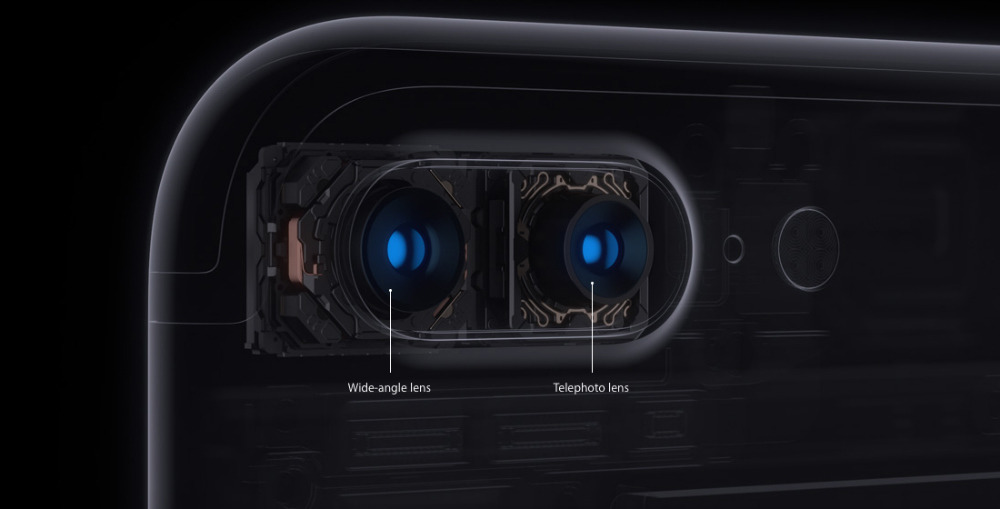

At WWDC17, Apple's Etienne Guerard presented "Image Editing with Depth," which detailed how iPhone 7 Plus, introduced the previous year with a new Portrait mode, captured differential depth data using its dual rear cameras.

He explained how Apple's Camera app used this data to create flattering Portrait shots that focused on the subject and blurred out the background. Starting in iOS 11, Apple enabled third-party developers to access this same depth data to build their own imaging tools using Apple's Depth APIs. A notable example is Focos, a clever app that provides precise control over depth of field and other effects.

In parallel, Apple also introduced a variety of other technologies, including its new Vision framework, Core ML, and ARKit for handling all the heavy lifting in building Augmented Reality graphics that appear anchored in the real world. It wasn't entirely clear how all those pieces would fit together, but later that fall Apple introduced iPhone 8 Plus with enhanced depth capture featuring Portrait Lighting.

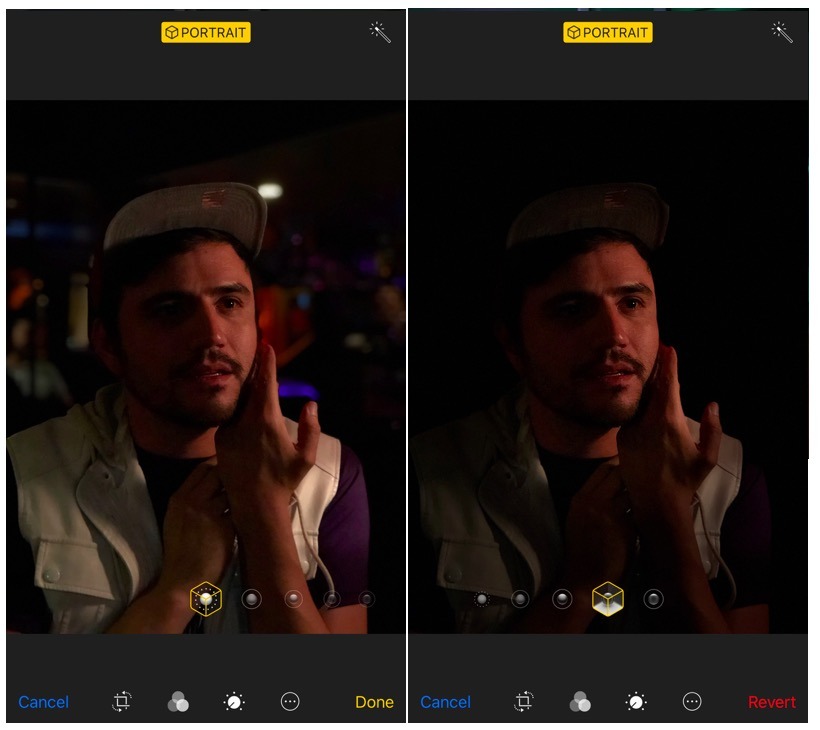

Portrait Lighting advanced the concept of capturing depth data by entering the world of face-based AR: it created a realistic graphical overlay that simulated studio lighting, dramatic contour lighting, or stage lighting that appeared to isolate the subject as if seated on a darkened stage. ARKit then effectively augmented users' reality by anchoring subtle graphics to a subject's face.

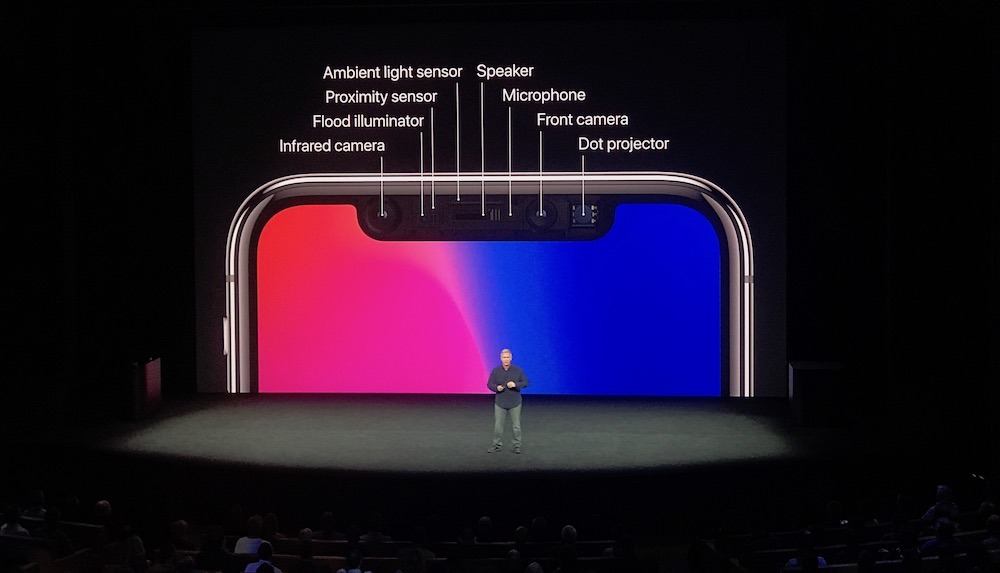

That feature was also expanded upon for the new iPhone X, which in addition to dual rear cameras also supplied a new type of depth imaging using its front-facing TrueDepth hardware. Rather than taking two images, it used a structured-light sensor to capture a depth map and color images separately.

In addition to taking Portrait Lighting shots with its rear cameras, it could also take Portrait Lighting selfies. And of course Apple's TrueDepth also introduced Face ID for fast, effortless authentication and Animoji, using ARKit's face tracking to completely overlay your face with an animated emoji avatar that picked up your subtle head and face movements to create another you.

Apple also demonstrated the power of ARKit in the hands of third parties, showing off advanced selfie filters by Snapchat that could realistically map all kinds of extravagant imagery on your face using TrueDepth hardware.

Apple has continued to advance its processing capacity and introduce new features, including an adjustable aperture setting for iPhone XR and iPhone XS models. At this year's WWDC, Apple also revealed software advances under the hood of Vision and Core ML, including advanced recognition features and intelligent ML tools for analyzing the saliency or sentiment of images, text and other data. That intelligence is shared across iOS 13, iPadOS, tvOS and macOS Catalina.

It also outlined a new kind of Machine Learning, where your phone itself can securely and privately learn and adapt to your behaviors. Apple had been internally using this to adapt to your evolving appearance in Face ID, but now third parties can build their own smart ML models that get even smarter in a way that's personalized specifically to you. And again, it's private to you on a premium level because Apple is doing the processing locally using the advanced computing power of your iOS device, rather than shipping your data into the cloud, saving it and sharing it indiscriminately with "partners" the way Amazon, Facebook and Google have been.

Apple also makes use of TrueDepth attention sensing to know when you're looking at your phone and when you're not. It uses this to dim and lock your phone faster when you're not using it, or to remain lit up and active while you read a long article or watch a video, even when you're not touching the screen. These frameworks are also shared with developers so that they can build similar awareness into their apps.

A hard act to follow

Other mobile platforms are increasingly having trouble keeping up with Apple because they haven't invested similar effort into OS, frameworks, and hardware integration. Microsoft famously dumped billions into generations of Windows Phone and Windows Mobile but couldn't build a user base large enough to attract a critical mass of developers.

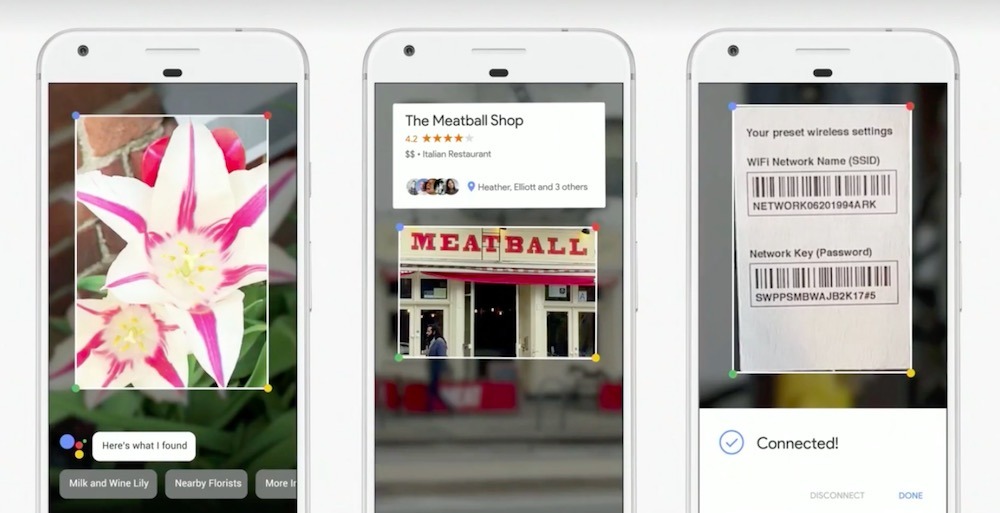

Google has furiously copied Apple's high-level features in Android and even added some of its own, including low-light image capture on its own Pixel phones. But because it has been unable to sell very many Pixel phones, this is a money-losing effort that isn't sustainable. Further, developers can't justify targeting features unique to Pixel. Google also isn't doing much of the work to open up its technologies so that third parties can use them the way Apple has been.

Both the Pixel's camera features and the advanced ML image and barcode recognition features of the Google Lens app are proprietary. Google hasn't opened up a platform for either. And while it tried to equate its own version of Portrait capture, which used ML rather than dual-camera or structured-light depth capture, with iPhone X, the reality is that it's limited to the features Google created and lacks both optical zoom and a front-facing 3D imaging system capable of supporting anything like Portrait Lighting, Face ID or Animoji.

Android licensees have also been hesitant to build advanced sensors like TrueDepth or powerful processors capable of advanced imaging and neural net calculations into very many of their phones, given that most Androids sell at an average of $250 or less. Despite shipping hundreds of millions of total handsets, there are far fewer high-end Androids in total than there are modern iPhones. As a result, just as with Windows Phone, developers are doing their best and most interesting work on iOS, not Android.

Both Microsoft and Google began working in depth imaging well ahead of Apple, but Microsoft didn't move past its Xbox Kinect body controller, and Google's Tango similarly expected to use depth imaging on the back of devices, like a rear camera. Apple determined that it would be a lot smarter and more powerful to point its depth sensor at the user, enabling all of the advanced features it has shown off to date, including the latest FaceTime Attention Correction.