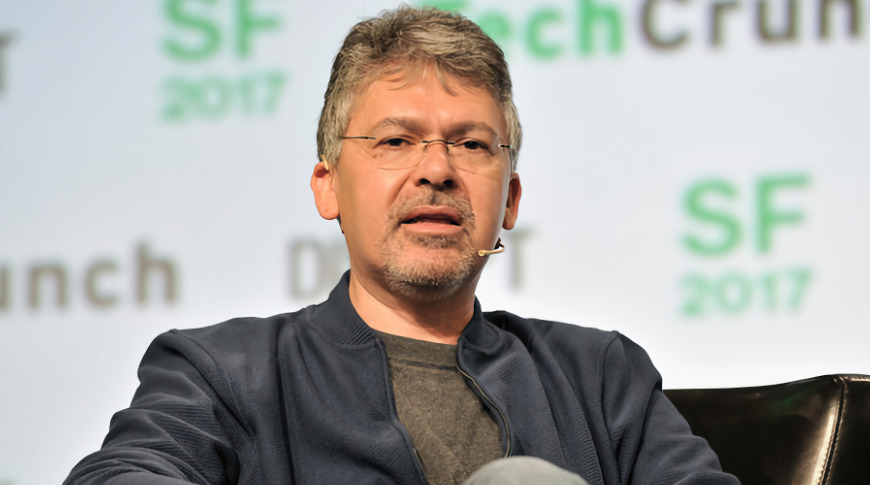

In a wide-ranging interview, Apple's head of Artificial Intelligence John Giannandrea states that it's "technically wrong" for AI to be processed at data centers instead of on a user's own device.

John Giannandrea, Apple's Senior Vice President for Machine Learning and AI Strategy, has answered critics who say that the company can never succeed in Artificial Intelligence because of its insistence on privacy and on-device processing. He rejects the claim that Apple's Machine Learning must be constrained unless it leverages mass-collected information in data centers.

"I understand this perception of bigger models in data centers somehow are more accurate," he told Ars Technica in an interview, "but it's actually wrong. It's actually technically wrong."

"It's better to run the model close to the data, rather than moving the data around," he continued. "And whether that's location data — like what are you [the user is] doing [such as] exercise data, what's the accelerometer doing in your phone — it's just better to be close to the source of the data, and so it's also privacy preserving."

As an example of privacy, speed and even practicality, he talked about how cameras can now help you choose when to take a photo. "If you wanted to make that decision on the server, you'd have to send every single frame to the server to make a decision about how to take a photograph," he said.

"That doesn't make any sense, right? So, there are just lots of experiences that you would want to build that are better done at the edge device," he continued.

Moving from Google to Apple

Giannandrea was Google's head of AI and search until 2018, when he moved to Apple and was put in charge of Siri and Machine Learning.

"When I joined Apple, I was already an iPad user, and I loved the Pencil," he said. "So, I would track down the software teams and I would say, 'Okay, where's the machine learning team that's working on handwriting?'"

There wasn't one, so he created it. He says that there is now practically no part of Apple that isn't engaging with AI and ML. "I find it very easy to attract world-class people to Apple," he said, "because it's becoming increasingly obvious in our products that machine learning is critical to the experiences that we want to build for users."

"I guess the biggest problem I have is that many of our most ambitious products are the ones we can't talk about and so it's a bit of a sales challenge to tell somebody, 'Come and work on the most ambitious thing ever but I can't tell you what it is,'" he said.

Giannandrea says that he was attracted to Apple, and believes it is the right place to work on these topics, because of this same issue of being focused on experiences. "I think that Apple has always stood for that intersection of creativity and technology," he said.

"And I think that when you're thinking about building smart experiences, having vertical integration, all the way down from the applications, to the frameworks, to the silicon, is really essential," he continued. "I think it's a journey, and I think that this is the future of the computing devices that we have, is that they be smart, and that, that smart[ness] sort of disappear[s]."

Apple Silicon

The next known step for Apple is the announced transition to Apple Silicon. While Giannandrea unsurprisingly won't say directly what this means for AI, he does say it will have an impact outside Apple.

"We will for the first time have a common platform, a silicon platform that can support what we want to do and what our developers want to do," he said. "That capability will unlock some interesting things that we can think of, but probably more importantly will unlock lots of things for other developers as they go along."

Giannandrea explains that this is specifically because of how Apple is going to be able to leverage the Apple Neural Engine (ANE). "It's a multi-year journey because the hardware had not been available to do this at the edge five years ago," he said.

"The ANE design is entirely scalable," he continued. "There's a bigger ANE in an iPad than there is in a phone, than there is in an Apple Watch, but the CoreML API layer for our apps and also for developer apps is basically the same across the entire line of products."

As well as his technology work within Apple, Giannandrea has recently been lobbying European Union officials over their plans to regulate the use and implementation of AI. Separately, Apple is continuing to acquire companies specifically to aid in the development of AI features such as Siri.