HomePod owners may not even have to say the word "Siri" in the future, with Apple researching ways to use gaze detection so a device can tell when it is being addressed.

If you have multiple Apple devices, then you know that it's difficult to get Siri to respond on the one you want. When you are in a room that contains an iPhone, an iPad, and a HomePod mini, Apple has all sorts of systems to assess which device you want, but they routinely fail.

Furthermore, not everyone feels comfortable with the "Siri" wake word, even if it is better than the original "Hey Siri." You can still say either version, and so can your TV set: it's common for something said on a show to sound close enough to "Siri" that it triggers a query you didn't ask for.

There is also the possibility that users will need to interact with devices without using their voice at all. There are, of course, situations where a command needs to be issued from a distance, or where it would be socially awkward to talk to the device.

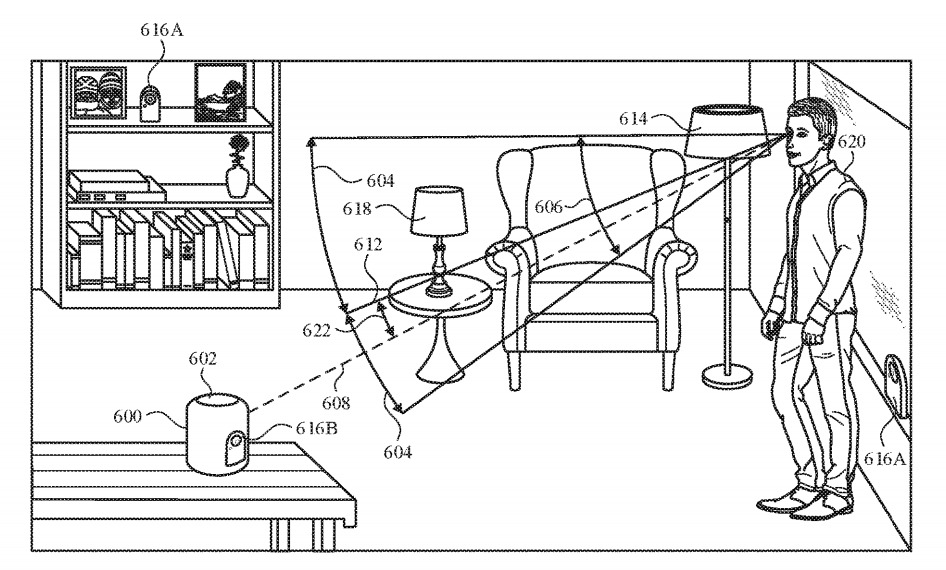

In a newly granted patent called "Device control using gaze information," Apple suggests it may be possible to command Siri visually. Specifically, it proposes that devices could detect a user's gaze to determine if that user wants that device to respond.

It would require HomePods, or other devices, equipped with cameras and other sensors capable of determining a user's location and the direction of their gaze, in order to work out what they are looking at. The device being looked at could then automatically enter an instruction-accepting mode in which it actively listens, in the expectation that it is about to be given instructions.

That would be similar to the way an iPhone with Face ID checks whether you are looking at it before dimming its screen. So Apple already ships devices that can detect when they are being looked at.

Apple could extend that to interpret a gaze as being the equivalent of a verbal trigger. Users could still call out "Siri" if they weren't looking at the device, but it would give them an extra option.

Using gaze to gauge whether the user wants to give the digital assistant a command is also useful in other ways. For example, detecting that the user is looking at the device could confirm that they actually intend it to follow their commands.

The patent suggests that a HomePod's digital assistant could interpret a command only if the user is looking at the device.

In practical terms, this could mean the difference between the device interpreting a sentence fragment such as "play Elvis" as a command or as part of a conversation that it should otherwise ignore.

The patent filing mentions that simply looking at the device won't necessarily register as an intention for it to listen for instructions, because a set of "activation criteria" must be met. These could simply consist of a continuous gaze lasting a set period, such as one second, to filter out brief glances or false positives from a person turning their head.

The angle of the user's head is also important. For example, if the device sits on a bedside cabinet and the user is lying asleep in bed, they could end up facing the device in a way that might count as a gaze, depending on how they lie. The device could discount it, however, after recognizing that the user's head is on its side rather than upright.

The device would make a judgment based on whether the user's eyes are open as well as the angle of their head. That would stop unintended glances from triggering Siri, but as useful as that would be, it creates a concomitant problem.
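The activation criteria described above can be sketched as a simple filter over a stream of gaze samples. This is a minimal illustration, not Apple's implementation: the `GazeSample` fields, the one-second dwell threshold and the 30-degree head-roll limit are hypothetical values chosen for the example, and it assumes some upstream vision system already reports whether the gaze lands on the device, whether the eyes are open, and how far the head is tilted.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical thresholds for illustration only. The patent, as described,
# mentions a continuous gaze "like a second"; the roll limit is an assumption.
MIN_DWELL_SECONDS = 1.0
MAX_HEAD_ROLL_DEGREES = 30.0


@dataclass
class GazeSample:
    timestamp: float            # seconds since tracking started
    looking_at_device: bool     # gaze direction intersects the device
    eyes_open: bool
    head_roll_degrees: float    # 0 = upright, about 90 = lying on one side


def sample_qualifies(s: GazeSample) -> bool:
    """A single sample counts only if the user looks at the device,
    has their eyes open, and holds their head roughly upright."""
    return (
        s.looking_at_device
        and s.eyes_open
        and abs(s.head_roll_degrees) <= MAX_HEAD_ROLL_DEGREES
    )


def activation_criteria_met(samples: List[GazeSample]) -> bool:
    """Return True if the most recent unbroken run of qualifying samples
    lasts at least MIN_DWELL_SECONDS; any non-qualifying sample resets it."""
    run_start = None
    for s in samples:
        if sample_qualifies(s):
            if run_start is None:
                run_start = s.timestamp
        else:
            run_start = None
    if run_start is None:
        return False
    return samples[-1].timestamp - run_start >= MIN_DWELL_SECONDS
```

With samples every tenth of a second, a steady 1.1-second look at the device activates it, while a half-second glance, closed eyes, or a head lying on its side (a roll of around 85 degrees) does not. Requiring an unbroken run, rather than a total, is one simple way to reflect the "continuous gaze" the patent describes.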

If the device won't always respond to a gaze, the user needs to know whether it has. A user shouldn't have to speak a long command only for Siri to eventually reply, "You talkin' to me?"

So in response to an intentional gaze, a device could provide a number of indicators to the user that the assistant has been activated by a glance, such as a noise or a light pattern from built-in LEDs or a display.

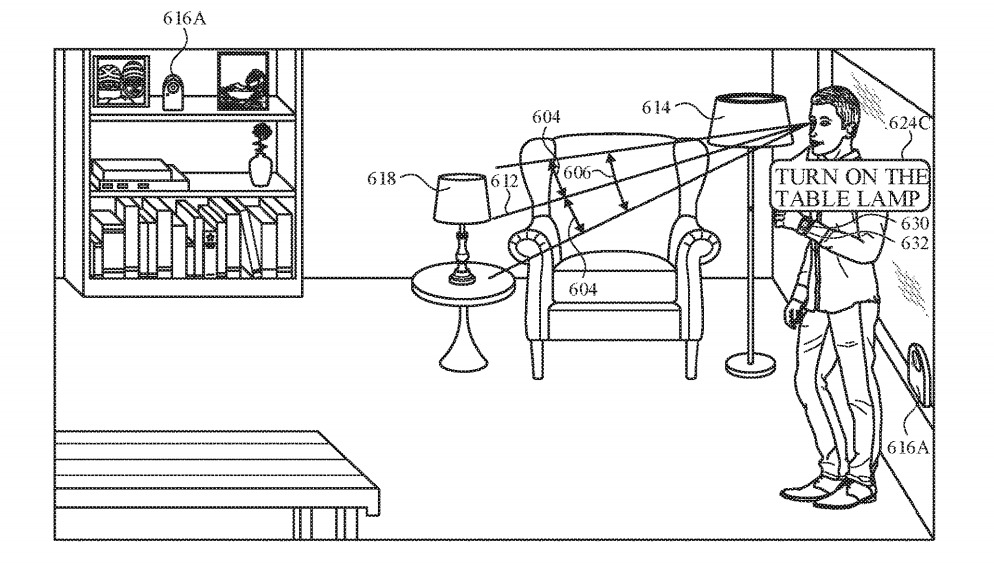

Given the ability to register a user's gaze, it would also be feasible for the system to detect whether the user is looking at an object they want to interact with, rather than at the device hosting the virtual assistant.

For example, a user could be looking at one of several lamps in the room, and the device could use the direction of the user's gaze to work out which lamp they want turned on when they issue a command.
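Working out which lamp a user means can be illustrated by comparing the gaze direction with the direction from the user's eyes to each known object, then picking the closest match within a tolerance. This is a hypothetical sketch, not the method claimed in the patent: it assumes the device already knows the 3D positions of the lamps and can estimate the user's eye position and gaze direction, and the object names, coordinates and 10-degree tolerance are made up for illustration.

```python
import math
from typing import Dict, Optional, Tuple

Vec = Tuple[float, float, float]

# Hypothetical tolerance: objects further than this many degrees off the
# gaze direction are not treated as the target.
MAX_ANGLE_DEGREES = 10.0


def angle_between(a: Vec, b: Vec) -> float:
    """Angle in degrees between two 3D vectors."""
    norm = math.hypot(*a) * math.hypot(*b)
    if norm == 0:
        return 180.0  # degenerate vector: treat as not aligned at all
    dot = sum(x * y for x, y in zip(a, b))
    # Clamp to [-1, 1] to guard against floating-point drift before acos.
    cos = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cos))


def resolve_gaze_target(eye: Vec, gaze_dir: Vec,
                        objects: Dict[str, Vec]) -> Optional[str]:
    """Return the name of the object closest to the gaze ray, or None
    if nothing lies within MAX_ANGLE_DEGREES of it."""
    best_name, best_angle = None, MAX_ANGLE_DEGREES
    for name, pos in objects.items():
        to_obj = (pos[0] - eye[0], pos[1] - eye[1], pos[2] - eye[2])
        ang = angle_between(gaze_dir, to_obj)
        if ang <= best_angle:
            best_name, best_angle = name, ang
    return best_name
```

For a user whose eyes are at (0, 0, 1.6) with a desk lamp at (2, 0, 1.6) and a floor lamp at (0, 2, 1.6), looking along (1, 0.05, 0) resolves to the desk lamp, while looking the opposite way resolves to nothing, so a command like "turn on the light" would need another cue.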

The patent also describes situations where the user might begin a command before turning to the device to complete it. Alternatively, they might look at the device and then turn away before finishing the command.

In either case, the device would need to have determined that it is the one being addressed. But if the gaze isn't detected right away, Apple suggests that the device could ask the user what they want, and thereby get their attention.

This is not the first time Apple has applied for patents concerning ways to operate a device remotely, as the idea has cropped up in earlier filings a few times. For example, a 2015 patent for "Learning-based estimation of hand and finger pose" suggested the use of an optical 3D mapping system for hand gestures, which may well have led to the Apple Vision Pro.

The new patent is also not the first time Apple has been granted a patent for precisely this invention. A version of it was originally filed in 2019 and then granted in 2020.

Apple applies for many hundreds of patents every year, and it's not uncommon for it to re-apply even after one is granted. For instance, the new version may contain a minor but significant update.

In this case, the fact that Apple originally applied for the patent in 2019 means there has been time to see at least parts of it turn into shipping products. Face ID had already launched with the iPhone X in 2017, and precise gaze detection has since become a key element of the Apple Vision Pro.

Even so, repeated patents on the same technology are not proof that this specific idea will ever launch. But the patent's drawings consistently show a HomePod, and we are now closer to seeing a similar HomeHub device that might well have cameras.

Originally filed on August 28, 2019, the patent lists its inventors as Sean B. Kelly, Felipe Bacim De Araujo E Silva, and Karlin Y. Bark.