Apple is researching new user interaction methods, including a system that could trigger certain features, such as marking a notification as read, when it detects that a user is present.

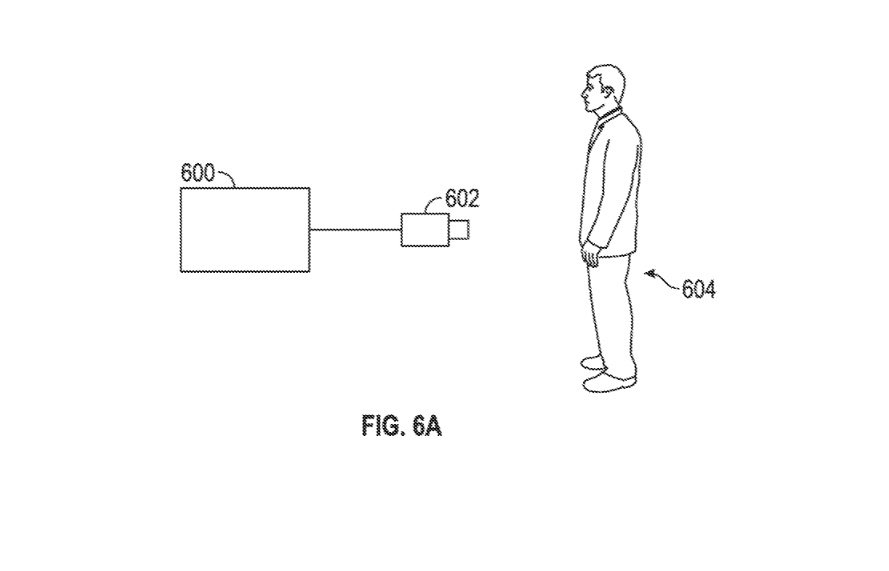

In a patent application published Thursday by the U.S. Patent and Trademark Office, Apple outlines a system that could detect whether a user is in its immediate vicinity. It also includes detection of a user's gaze for more granular UX capabilities, opening up a range of control possibilities for devices like the iPhone, Apple TV, Mac, or HomePod.

"Interaction with such devices can be performed using various input devices, such as touch screen displays, touch-sensitive surfaces, remote controls, mice and other input devices. However, there are instances where user interaction with the computing devices would be enhanced if the user were not required to physically provide input to the computing devices, but rather if the computing devices were to take certain actions autonomously based on user detection," the patent application reads.

For example, the system could detect a specific person's face and perform an action based on the identification. That's basically the functionality of Apple's Face ID, but the patent hints at uses beyond authentication.

Apple gives other examples, such as a device determining where a user is looking or playing specific audio when a certain person is present. Additionally, one embodiment focuses on triggering an action based on how close or far a user is from the device.

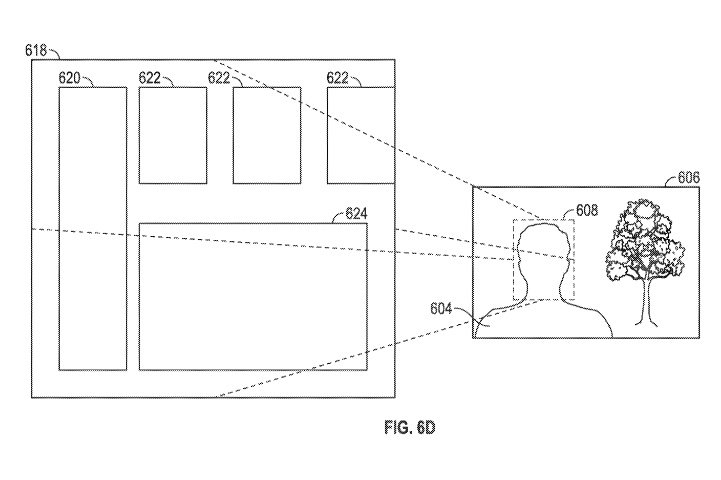

Some of the practical applications of the user detection system differ based on the capabilities of the device. One example is a reader device that could track which portions of a piece of text a user has actually read based on their gaze.

Other features could include distance determination for display devices. In this case, a device could autonomously change the size of the content or images it displays depending on how near or far a user is.

The patent application published Thursday is an omnibus continuation of previous work on user detection, and it seems to focus specifically on the tracking of text reading. Apple contends that the gaze detection system could be used to track how much of a message a user has read.

However, there are specific use applications outlined in some of the patent continuation text. One embodiment suggests that a system could automatically mark a notification as read if a user is in front of a display.

On devices meant to be used by multiple people, the system could associate certain audio content with user profiles based on detection. In other words, the system could learn a user's taste and provide tailored recommendations based solely on whether they are present when content is being played.

The patent application lists Avi E. Cieplinski; Jeffrey Traer Bernstein; Julian Missig; May-Li Hoe; Bianca Cheng Costanzo; Myra Mary Haggerty; Duncan Robert Kerr; Bas Ording; and Elbert D. Chen as its inventors. Many of the inventors have been listed on previous Apple patents, ranging from Siri on a Mac to iPad control mechanisms that involve multi-function keys and "touch-strips."

Apple files numerous patent applications on a weekly basis. Many technologies described in patent applications never end up being used in a user device, and the applications themselves give no timeline for a potential release.