Siri on HomePod improved its standing in an annual smart speaker comparison carried out by analyst Gene Munster's Loup Ventures, with Apple's virtual assistant weathering a barrage of accuracy tests to place second behind Google Assistant.

For the test, Munster and fellow analyst Will Thompson asked four smart speakers (Amazon Echo with Alexa, Google Home with Google Assistant, HomePod with Siri and Harman Kardon Invoke with Microsoft's Cortana) a series of 800 questions and recorded the accuracy of each response, as well as whether the query was understood.

Munster and Thompson designed the test based on what most users would expect from a smart speaker. Questions fell into five categories: local points of interest, commerce, information, navigation and hardware commands.

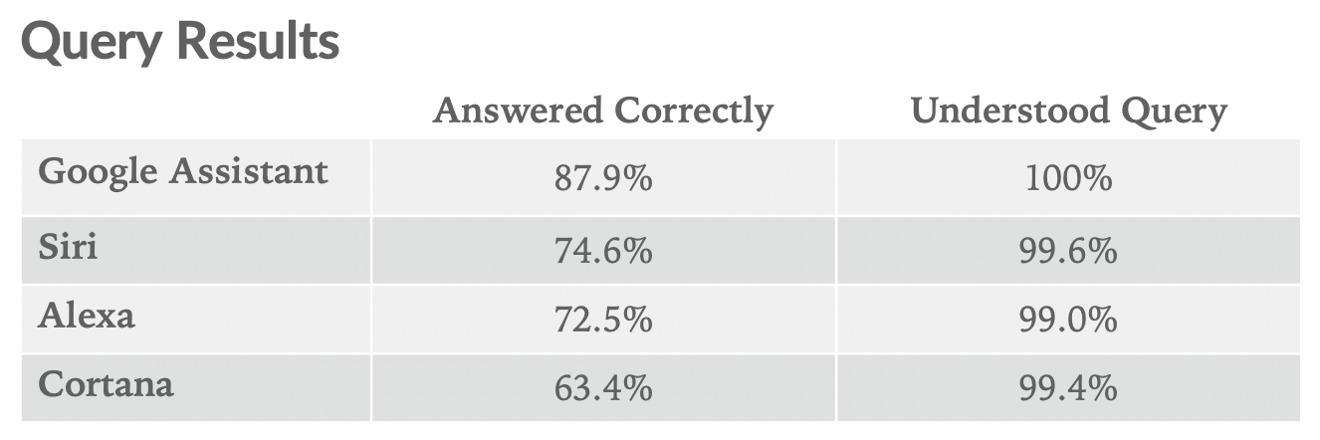

Google Assistant understood all of the queries and answered about 88 percent of them correctly. Siri came in second, understanding 99.6 percent of the queries and answering 74.6 percent correctly. That performance just edges out Alexa, which understood 99.0 percent and correctly answered 72.5 percent. Cortana brought up the rear, answering only 63 percent of the questions correctly.

Siri excelled in the command category, which included many music-related queries, an area in which HomePod specializes thanks to native integration with Apple Music. This was the only one of the five categories where Siri came out on top.

Commerce was the area in which Siri was least knowledgeable, which is understandable as HomePod doesn't really allow you to buy anything the way Alexa does. Alexa's eagerness to sell consumers products from Amazon hampered its own score, however, as can be readily seen by asking "how much does a manicure cost."

Alexa recommends a manicure set for $60 on Amazon, whereas Google Assistant gives an impressively detailed response, saying, "on average, a basic manicure will cost you about $20. However, special types of manicures like acrylic, gel, shellac, and no-chip range from about $20 to $50 in price, depending on the salon." Google's massive search engine capabilities clearly come into play here.

In terms of accuracy, Siri has improved 22 percentage points over the last nine months, whereas Google Assistant, Amazon Alexa, and Microsoft Cortana improved by only 7 to 9 points over the past year. As much flak as Apple's assistant has taken from critics of the service, these figures are quite telling.

The results shouldn't be entirely surprising given the relative investment each tech firm puts into its AI system, and it is encouraging to see Siri improve so drastically since the last time the test was run. When Loup Ventures last conducted the evaluation in February, Siri came in dead last.