The Apple Vision Pro will bring an entirely new class of spatial apps to users. Here's how to get started by checking out Apple's sample code.

When Apple introduced Apple Vision Pro and visionOS at WWDC 2023 two months ago, it shook the world. Apple Vision Pro promises to bring immersive apps to users in a simple yet elegant way.

Along with a demonstration of the system and what it will look like, Apple added a visionOS resources page to its developer website. One section of that page offers sample code you can download to learn how to build your own visionOS apps.

There are currently four sample apps from Apple:

- Hello World

- Destination Video

- Diorama

- Happy Beam

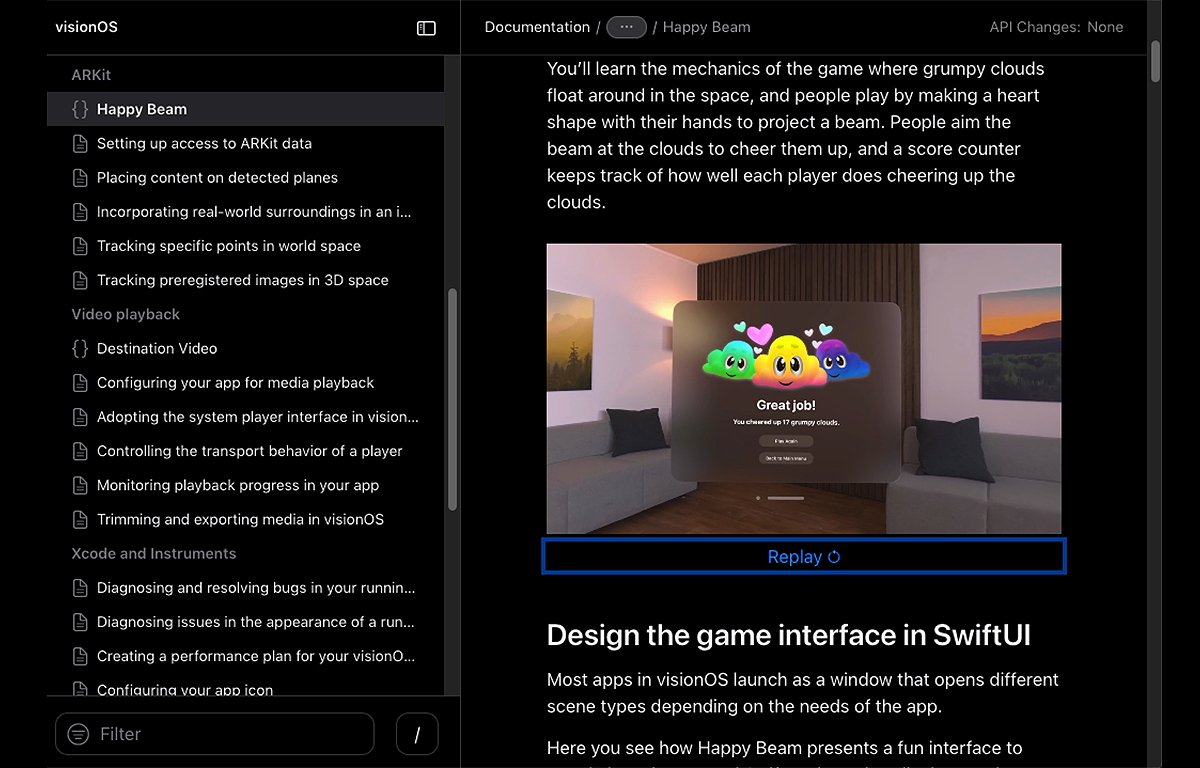

All of the sample app pages have short playable videos that let you see what they look like without having to build them in Xcode.

Getting started

First, you'll need to download and install the macOS Sonoma beta onto a spare drive, boot into it, and run any updates. Then install Xcode 15 beta 4, its command line tools, and the visionOS simulator.

All three components are separate downloads on Apple's developer downloads page. You'll need to sign in with your Apple ID to download them.

Once your software environment is set up, head to the visionOS documentation page. Scroll to the bottom of the page and you'll see all four of the sample apps listed.

Open each sample app's page and click its Download button to download the projects one by one.

To build and run each app, you'll need to be familiar with Xcode, Swift, SwiftUI, and in some cases ARKit and 3D tools.

Hello World

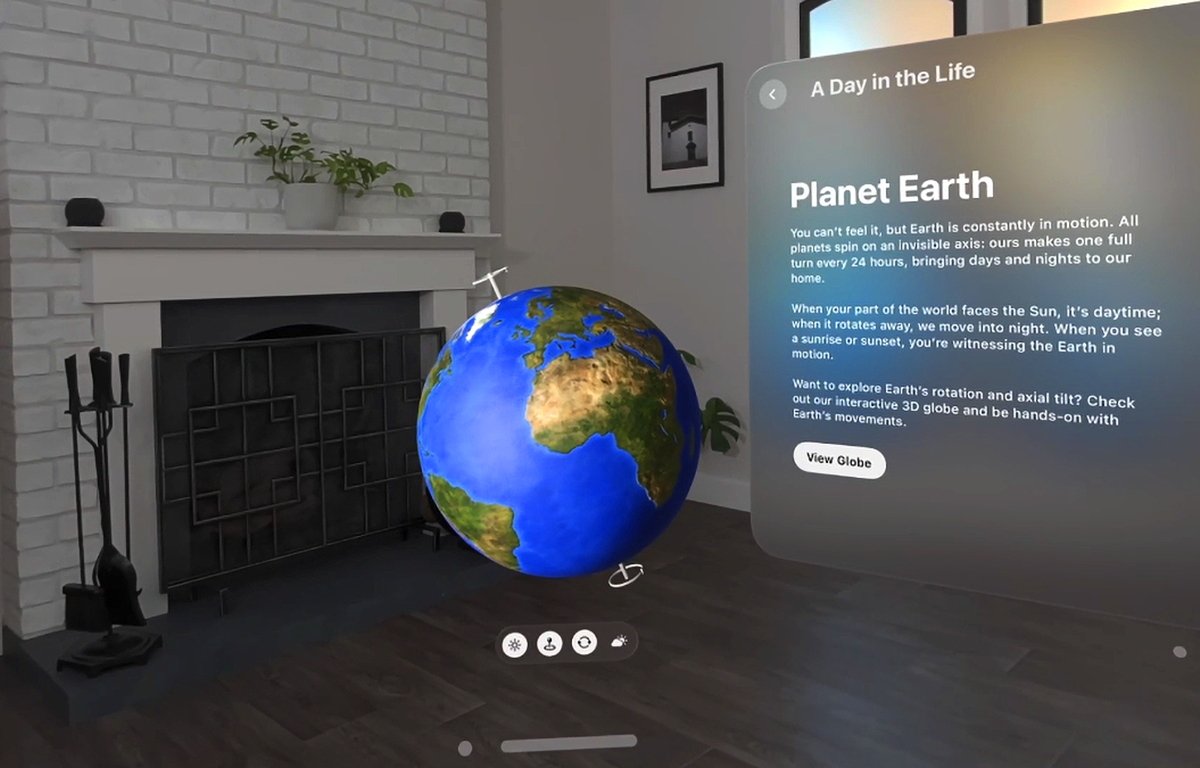

Far more than a traditional Hello World app, the visionOS Hello World is a SwiftUI app that combines 2D and 3D content to display the Earth, objects in orbit, and the solar system.

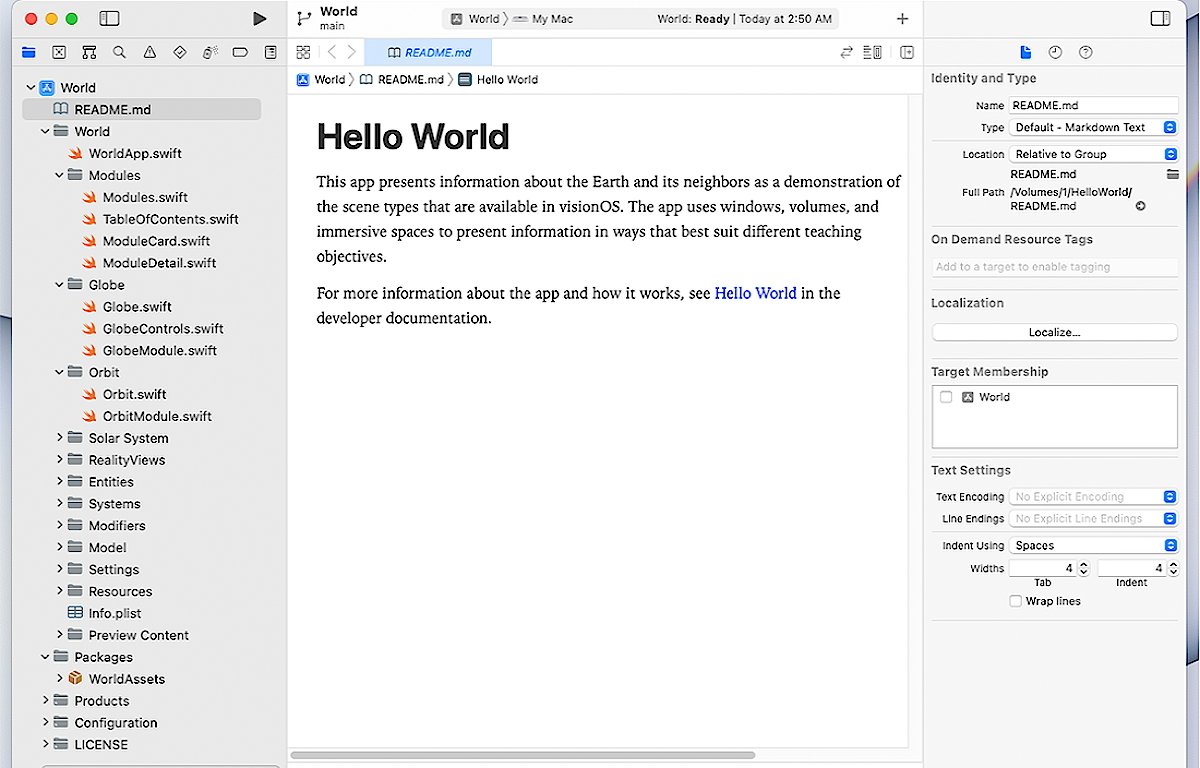

Unlike most Hello World apps, the visionOS version comprises more than forty files, covering the app itself as well as models, settings, globe objects, orbit files, solar system files, and RealityKit views.

Hello World uses immersive spaces and 3D volumes to display the Earth and the solar system in 3D in the room around you. You can grab objects in space, move them around, scale them, and see related objects, such as those in orbit.
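Hello World's mix of a 2D window, a 3D volume, and an immersive space maps onto three SwiftUI scene types. The sketch below shows one way to declare them and to let the user drag an entity around. It is not code from the sample: the scene IDs, the Globe model name (assumed to be a USDZ file in the app bundle), and all view names are hypothetical.

```swift
import SwiftUI
import RealityKit

// Hypothetical identifiers used to open the volume and the immersive space.
enum SceneID {
    static let globe = "Globe"
    static let solarSystem = "SolarSystem"
}

// In a real project this struct would be marked @main.
struct SpatialDemoApp: App {
    var body: some Scene {
        // A conventional 2D window.
        WindowGroup {
            LauncherView()
        }

        // A bounded 3D volume; "Globe" is assumed to be a USDZ file in the app bundle.
        WindowGroup(id: SceneID.globe) {
            Model3D(named: "Globe")
        }
        .windowStyle(.volumetric)
        .defaultSize(width: 0.6, height: 0.6, depth: 0.6, in: .meters)

        // An unbounded immersive space that surrounds the user.
        ImmersiveSpace(id: SceneID.solarSystem) {
            SolarSystemView()
        }
    }
}

struct LauncherView: View {
    @Environment(\.openWindow) private var openWindow
    @Environment(\.openImmersiveSpace) private var openImmersiveSpace

    var body: some View {
        VStack(spacing: 20) {
            Button("Show Globe") { openWindow(id: SceneID.globe) }
            Button("Enter Solar System") {
                Task { _ = await openImmersiveSpace(id: SceneID.solarSystem) }
            }
        }
        .padding()
    }
}

struct SolarSystemView: View {
    var body: some View {
        RealityView { content in
            // A placeholder "planet": a blue sphere roughly at eye height, 1.5 m ahead.
            let planet = ModelEntity(
                mesh: .generateSphere(radius: 0.2),
                materials: [SimpleMaterial(color: .blue, isMetallic: false)]
            )
            planet.position = [0, 1.5, -1.5]
            // An input target plus a collision shape make the entity respond to gestures.
            planet.components.set(InputTargetComponent())
            planet.generateCollisionShapes(recursive: false)
            content.add(planet)
        }
        .gesture(
            DragGesture()
                .targetedToAnyEntity()
                .onChanged { value in
                    // Move the grabbed entity so it follows the hand in 3D.
                    guard let parent = value.entity.parent else { return }
                    value.entity.position = value.convert(value.location3D, from: .local, to: parent)
                }
        )
    }
}
```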

One thing is clear: visionOS apps are going to be more complex than most iOS or macOS apps. Be prepared to spend significant time learning the new technologies needed to make visionOS apps.

Destination Video

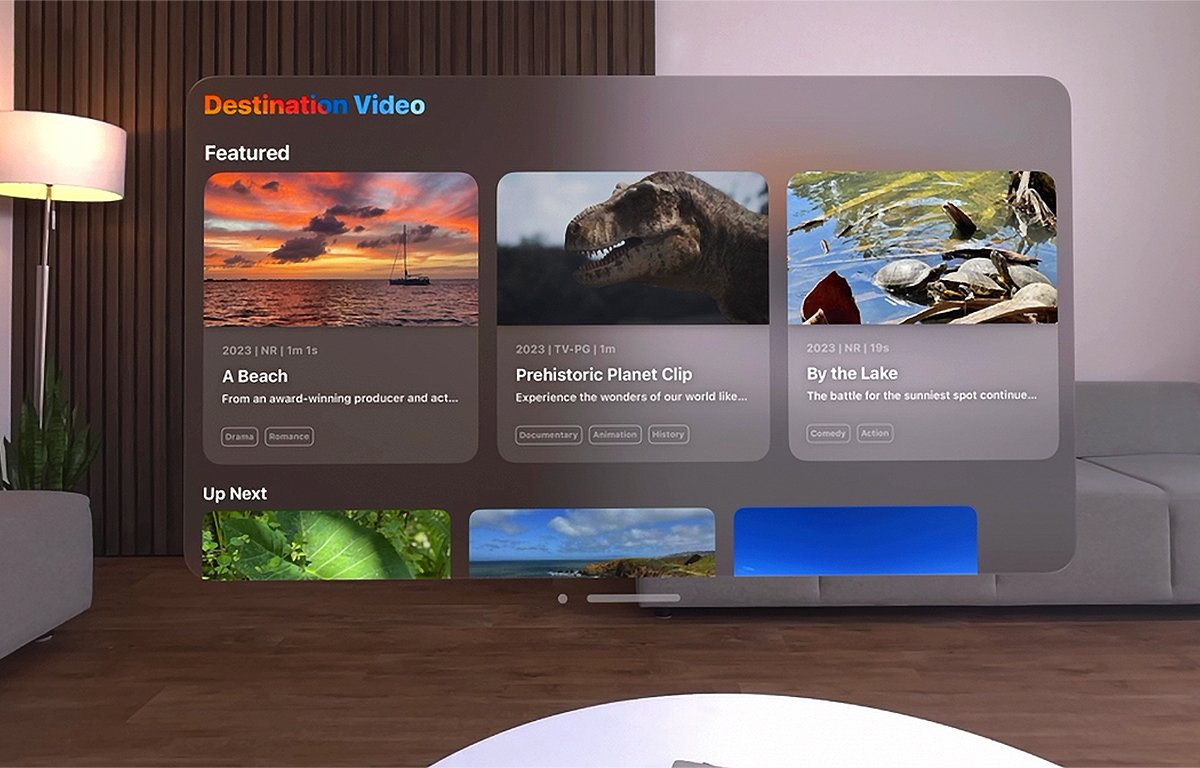

Destination Video is a multiplatform video streaming app that runs on visionOS, iOS, and tvOS. It plays video through a common interface across platforms while still offering an immersive experience on visionOS.

Destination Video uses Apple's tried-and-true AVFoundation framework, which provides high-level APIs for audio and video playback and media handling.
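A minimal sketch of AVFoundation playback in SwiftUI: AVPlayer handles the media, and AVKit's VideoPlayer view supplies the system playback controls on iOS, tvOS, and visionOS alike. The stream URL is a placeholder, and the code is not taken from Destination Video.

```swift
import SwiftUI
import AVKit

// Hypothetical stream URL; substitute your own HLS playlist or file URL.
let sampleStreamURL = URL(string: "https://example.com/stream/index.m3u8")!

struct PlayerScreen: View {
    // AVPlayer (AVFoundation) drives playback; @State keeps the same player
    // instance alive across view updates.
    @State private var player = AVPlayer(url: sampleStreamURL)

    var body: some View {
        // AVKit's SwiftUI player view provides the platform's standard controls.
        VideoPlayer(player: player)
            .onAppear { player.play() }
            .onDisappear { player.pause() }
    }
}
```

Because VideoPlayer adapts to each platform, the same view can be shared across the iOS, tvOS, and visionOS targets.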

For a great introduction to AVFoundation, see Bob McCune's excellent book Learning AV Foundation: A Hands-on Guide to Mastering the AV Foundation Framework, published by Addison-Wesley.

Diorama

Diorama is an app that demonstrates how to use Apple's RealityKit and Reality Composer Pro (RCP) to create an interactive 3D map that users can rotate and navigate in 3D space.

Diorama lets you explore two real-world California hiking destinations on a 3D map: Yosemite National Park and Catalina Island.

To create an interactive 3D app like Diorama, you first use or create 3D assets such as objects, images, and RCP scenes. You can also add audio.

RCP provides a library of objects you can use, or you can make your own. Diorama uses custom assets instead of assets from RCP's library.

To add assets to your visionOS app, you can import them into RCP or add them directly to the .rkassets bundle in your project's Swift package.
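Assets in that package can then be loaded by name at runtime. A minimal sketch, assuming the RealityKitContent Swift package that Xcode's visionOS app template generates (it exposes realityKitContentBundle) and a hypothetical scene named "Scene"; Diorama's own loading code may differ.

```swift
import SwiftUI
import RealityKit
import RealityKitContent  // RCP package created by Xcode's visionOS app template (assumed)

// Hypothetical name of a scene inside the package's .rkassets bundle.
let dioramaSceneName = "Scene"

struct DioramaView: View {
    var body: some View {
        // RealityView's make closure is async, so the scene can load without blocking.
        RealityView { content in
            do {
                let scene = try await Entity(named: dioramaSceneName, in: realityKitContentBundle)
                content.add(scene)
            } catch {
                print("Failed to load \(dioramaSceneName): \(error)")
            }
        }
    }
}
```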

You can also use USDZ files, the packaged form of the industry-standard Universal Scene Description (USD) 3D format. However, they don't perform as well as RCP's own natively compiled assets.

RCP projects can have multiple scenes, each containing a hierarchy of objects called entities. Entity hierarchies are displayed in a new SwiftUI view, still in beta, called RealityView.
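Entities form a tree in which each child's transform is relative to its parent. The sketch below builds a tiny hypothetical map hierarchy in code (the entity names and positions are made up) and looks a child up by name, the same kind of lookup you might use to find an entity inside a scene loaded from RCP.

```swift
import RealityKit

// Builds a hypothetical hierarchy: a root "Map" entity with two named marker children.
@MainActor
func makeMapHierarchy() -> Entity {
    let map = Entity()
    map.name = "Map"

    let markers: [(name: String, x: Float)] = [("Yosemite", -0.1), ("Catalina", 0.1)]
    for marker in markers {
        let entity = ModelEntity(
            mesh: .generateSphere(radius: 0.01),
            materials: [SimpleMaterial()]
        )
        entity.name = marker.name
        // Position is relative to the parent, so moving or rotating "map" moves every marker.
        entity.position = [marker.x, 0.02, 0]
        map.addChild(entity)
    }
    return map
}
```

To use it, pass the returned root to content.add(_:) inside a RealityView, then call findEntity(named:) on the root to reach a specific marker, for example to attach a label to it.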

The sample code also shows how to attach points of interest and then transition to them when the user navigates to them, and how to use shader graphs to add custom textures to objects.
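One way to pin a SwiftUI label to a point in a 3D scene is a RealityView attachment. A minimal sketch with a hypothetical marker position, label text, and attachment ID; it follows the attachment syntax of the shipping visionOS 1 SDK, which differs from some of the early betas.

```swift
import SwiftUI
import RealityKit

// Hypothetical attachment identifier.
enum AttachmentID {
    static let yosemite = "yosemiteLabel"
}

struct PointOfInterestView: View {
    var body: some View {
        RealityView { content, attachments in
            // A stand-in entity marking a location on the map.
            let marker = ModelEntity(
                mesh: .generateSphere(radius: 0.01),
                materials: [SimpleMaterial(color: .red, isMetallic: false)]
            )
            marker.position = [0, 1.2, -0.8]
            content.add(marker)

            // Parent the SwiftUI label to the marker, floating 5 cm above it.
            if let label = attachments.entity(for: AttachmentID.yosemite) {
                label.position = [0, 0.05, 0]
                marker.addChild(label)
            }
        } attachments: {
            // SwiftUI content that RealityKit renders as an entity.
            Attachment(id: AttachmentID.yosemite) {
                Text("Yosemite National Park")
                    .padding()
                    .glassBackgroundEffect()
            }
        }
    }
}
```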

Happy Beam

Happy Beam is a little game app that demonstrates how to build a simple interactive 3D game in visionOS. The game allows you to cheer up sad floating clouds in a 3D immersive space by shooting them with rainbows.

You can play using either hand gestures or a game controller. The app uses ARKit's 3D hand tracking to recognize and track a heart-shaped hand gesture as part of the user interface. Users are prompted to authorize hand tracking in visionOS before the game can run.
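On visionOS, hand data comes from ARKit's HandTrackingProvider running in an ARKitSession. The sketch below requests authorization, starts the provider, and logs each tracked index fingertip's world position. HandTracker is a hypothetical wrapper, not the sample's code, and ARKit only delivers this data while the app has an immersive space open.

```swift
import ARKit

// Hypothetical wrapper around ARKit hand tracking on visionOS.
final class HandTracker {
    // The authorization types this wrapper requests.
    static let requiredAuthorization: [ARKitSession.AuthorizationType] = [.handTracking]

    private let session = ARKitSession()
    private let handTracking = HandTrackingProvider()

    func start() async {
        guard HandTrackingProvider.isSupported else { return }

        // Shows the system prompt, using the app's NSHandsTrackingUsageDescription text.
        let statuses = await session.requestAuthorization(for: Self.requiredAuthorization)
        guard statuses[.handTracking] == .allowed else { return }

        do {
            try await session.run([handTracking])
        } catch {
            print("ARKit session failed: \(error)")
            return
        }

        // Stream of added/updated/removed hand anchors.
        for await update in handTracking.anchorUpdates {
            let hand = update.anchor
            guard hand.isTracked,
                  let tip = hand.handSkeleton?.joint(.indexFingerTip),
                  tip.isTracked else { continue }
            // World pose = hand pose in world space * joint pose relative to the hand.
            let worldFromTip = hand.originFromAnchorTransform * tip.anchorFromJointTransform
            let p = worldFromTip.columns.3
            print("\(hand.chirality) index tip at (\(p.x), \(p.y), \(p.z))")
        }
    }
}
```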

The NSHandsTrackingUsageDescription key in the app's Info.plist supplies the message that explains to the user why hand tracking is being requested. Happy Beam combines ARKit's hand-tracking data with Apple's simd library for floating-point vector and matrix math to determine where the user's hands are in 3D space.
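The core of that math is composing transforms. ARKit reports a hand anchor's pose relative to the world origin and each joint's pose relative to the hand, so multiplying the two 4x4 matrices yields the joint's world pose, whose last column holds its position. A small worked sketch with simd, using made-up translations in place of live ARKit data; worldPosition and translation are hypothetical helpers.

```swift
import simd

// Composes origin-from-anchor and anchor-from-joint transforms into a world position.
func worldPosition(originFromAnchor: simd_float4x4,
                   anchorFromJoint: simd_float4x4) -> SIMD3<Float> {
    let originFromJoint = originFromAnchor * anchorFromJoint
    let t = originFromJoint.columns.3  // translation lives in the last column
    return SIMD3<Float>(t.x, t.y, t.z)
}

// Builds a pure translation matrix (no rotation or scale).
func translation(_ v: SIMD3<Float>) -> simd_float4x4 {
    var m = matrix_identity_float4x4
    m.columns.3 = SIMD4<Float>(v.x, v.y, v.z, 1)
    return m
}

// Hand 1.4 m up and 1 m in front of the origin; fingertip 10 cm to the hand's side.
let hand = translation([0, 1.4, -1])
let tip = translation([0.1, 0, 0])
let p = worldPosition(originFromAnchor: hand, anchorFromJoint: tip)
// p is (0.1, 1.4, -1)
```

With pure translations the offsets simply add; once the hand anchor carries a rotation, the same multiplication also rotates the joint offset into world space, which is why the matrix product is used rather than vector addition.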

The sample code's computeTransformOfUserPerformedHeartGesture() method is especially interesting because it analyzes the joints of the user's fingers to determine whether the user is making a heart shape.
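The sample's actual heuristic isn't reproduced here. As a deliberately simplified, hypothetical stand-in, the sketch below treats two hands as making a heart when the two index fingertips touch, the two thumb tips touch, and the index and thumb tips are far enough apart to enclose a shape. The HandTips type and both thresholds (in meters) are assumptions, and a real check would also consider joint orientations.

```swift
// Hypothetical per-hand fingertip positions in world space, in meters.
struct HandTips {
    var indexTip: SIMD3<Float>
    var thumbTip: SIMD3<Float>
}

// Euclidean distance using only standard-library SIMD operations.
func tipDistance(_ a: SIMD3<Float>, _ b: SIMD3<Float>) -> Float {
    let d = a - b
    return (d * d).sum().squareRoot()
}

func isHeartGesture(left: HandTips, right: HandTips,
                    touchThreshold: Float = 0.04,
                    minHeartHeight: Float = 0.05) -> Bool {
    let indexTipsTouch = tipDistance(left.indexTip, right.indexTip) < touchThreshold
    let thumbTipsTouch = tipDistance(left.thumbTip, right.thumbTip) < touchThreshold
    // Index and thumb tips must be separated so the hands enclose a shape.
    let heartHeight = tipDistance(left.indexTip, left.thumbTip)
    return indexTipsTouch && thumbTipsTouch && heartHeight > minHeartHeight
}

// Tips 2 cm apart across hands; index and thumb tips 10 cm apart vertically.
let left = HandTips(indexTip: [-0.01, 1.50, -0.5], thumbTip: [-0.01, 1.40, -0.5])
let right = HandTips(indexTip: [0.01, 1.50, -0.5], thumbTip: [0.01, 1.40, -0.5])
let heart = isHeartGesture(left: left, right: right)  // true
```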

Multiplayer is also supported via Apple's SharePlay.
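SharePlay support is built on Apple's GroupActivities framework. A minimal sketch of defining an activity, joining incoming sessions, and sending a Codable message to other participants; HappyBeamActivity, ScoreMessage, and startSharePlay are hypothetical names, and the sample's real multiplayer code is likely more involved.

```swift
import Foundation
import GroupActivities

// Hypothetical activity describing a shared game.
struct HappyBeamActivity: GroupActivity {
    var metadata: GroupActivityMetadata {
        var metadata = GroupActivityMetadata()
        metadata.title = "Happy Beam"
        metadata.type = .generic
        return metadata
    }
}

// Hypothetical game-state message exchanged between participants.
struct ScoreMessage: Codable {
    var playerName: String
    var score: Int
}

@MainActor
func startSharePlay() async {
    // Offers the activity to the people in the current FaceTime call.
    _ = try? await HappyBeamActivity().activate()

    // Join each session as it arrives, then broadcast game state to everyone.
    for await session in HappyBeamActivity.sessions() {
        let messenger = GroupSessionMessenger(session: session)
        session.join()
        try? await messenger.send(ScoreMessage(playerName: "Player", score: 1))
    }
}
```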

Happy Beam provides an exciting look at what 3D immersive games using interactive hand gestures will look like on visionOS.

A good start for a new platform

Apple Vision Pro and visionOS are exciting new advances in computing, and Apple has provided a lot of new material to get developers started. Even this handful of sample apps shows how much the platform can already do.

If these kinds of apps are already possible on visionOS today, it's not hard to imagine what apps will be like in a few years' time as the platform matures.

It's never been a better time to be an Apple developer, and Apple Vision Pro promises to keep the excitement going for developers for years to come.

Chip Loder

Chip Loder

Charles Martin

Charles Martin

Wesley Hilliard

Wesley Hilliard

Stephen Silver

Stephen Silver

William Gallagher

William Gallagher

Marko Zivkovic

Marko Zivkovic

Andrew Orr

Andrew Orr

Amber Neely

Amber Neely