Amazon Echo vulnerability allows hackers to eavesdrop with always-on microphone

A security researcher has demonstrated how internet-connected speakers could be used to listen in on private conversations, publishing details of how to hack earlier models of the Amazon Echo via a hardware-based vulnerability that cannot be fixed with a software patch.

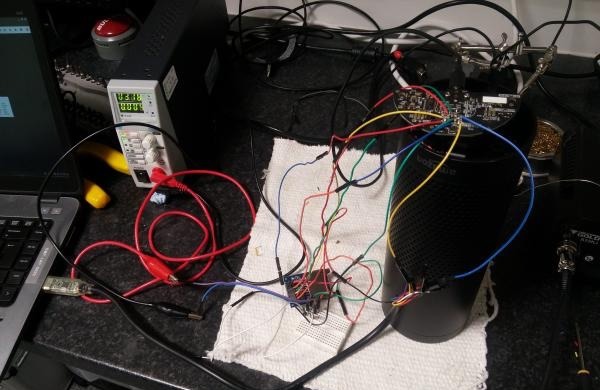

The 2015 and 2016 models of the Amazon Echo can be exploited by using 18 debug connection pads, accessible by removing the rubber base from the device, according to MWR InfoSecurity researcher Mark Barnes. An external SD card breakout board was attached to the debug pads, allowing Barnes to boot from an SD card and rewrite the onboard firmware, making it remotely accessible.

The firmware changes take advantage of the way the Echo handles verbal commands: to constantly listen out for a verbal command prefix such as "Alexa," the device monitors a file that it creates itself. Motherboard reports that a script is then used to continuously write the raw microphone data to a file, which is subsequently streamed to an external device, where it can be listened to or recorded remotely.

Barnes suggests that, with different instructions, the persistent remote access to the Echo could be used to obtain other data, such as customer authentication tokens.

Notably, the attack requires physical access to the Echo, making it a tougher hack to accomplish and severely limiting its usability. Even so, the method leaves behind no obvious sign of an attack once the extra hardware is removed and the base replaced, with normal functionality of the smart speaker said to be completely unaffected by the code changes.

Despite gaining access to the "always-on" microphone, the hack cannot get around the physical mute button on the device, which disables the microphone completely. This switch is a hardware mechanism that cannot be altered with software, though it is feasible that with extra work this button could be physically disabled by a determined attacker.

"Rooting an Amazon Echo was trivial, however it does require physical access which is a major limitation," writes Barnes. "However, product developers should not take it for granted that their customers won't expose their devices to uncontrolled environments such as hotel rooms."

The attack has been confirmed to work on the 2015 and 2016 editions of the Amazon Echo, but a change to the debug pad prevents external booting using the technique in the 2017 model. Considering it is estimated that more than 7 million Echo units were sold in 2015 and 2016, it is unlikely that Amazon will make any changes to already-sold Echo devices to fix the vulnerability.

It appears the compact Amazon Echo Dot is not vulnerable to the same attack, and it is unclear if the Echo Show and the Echo Look will be susceptible to a similar technique. Both of these recently launched devices add cameras, which, if successfully attacked, could provide hackers with a live video feed.

"Customer trust is very important to us," a statement from Amazon begins. "To help ensure the latest safeguards are in place, as a general rule, we recommend customers purchase Amazon devices from Amazon or a trusted retailer and that they keep their software up-to-date."

The hack is a reminder of the potential security risk in-home devices may pose to their owners, and of the possibility of smart home gadgets being used for surveillance purposes. Previous WikiLeaks publications, such as the "Vault 7" leaks, show the CIA is working on ways to break the security of devices in order to monitor the agency's targets without being discovered.

Apple has already taken steps to secure the HomePod, its own smart speaker due for release in December, revealing some of its security in response to a report about iRobot potentially collecting maps of customer homes generated by its cleaners.

"No information is sent to Apple servers until HomePod recognizes the key utterance 'Hey Siri,' and any information after that point is encrypted and sent via an anonymous Siri ID," Apple advised in response to a customer query. "For room sensing, all analysis is done locally on the device and is not shared with Apple."

Malcolm Owen

31 Comments

Well Surprise Surprise...

I always knew that security is a major problem for the Amazon Echo or Google Pod or any cloud-based processing with "always listening" devices, but not until this news did it really sink in how dangerous it could be without an anonymous ID token or end-to-end encryption like Apple's. It's not so much about a thief taking advantage of it, it's more about losing your privacy in your own home, even if you live by yourself.

This crap never ends, does it?

"Notably, the attack requires physical access to the Echo in order to take place." Same with keyboard keyloggers, which are far more intrusive than the Echo.

Can anyone comment on owncloud.org efficacy? I keep hoping Apple might offer something in the MacOS server app...