There is nobody keener than an Apple fan to take a leaked version of iOS, pore over it in depth, and find out all its hidden secrets, except one. Even more motivated and determined than the greatest Apple spelunker is the criminal, the terrorist, the bad actor, and they absolutely shouldn't be given that chance with an encryption backdoor of any sort.

It can be fun for us to know what's coming next, and we might even see it as useful. Based on the apparent leak of iOS 14, for instance, you might well put off buying an iPhone 11 because it looks like the "iPhone SE 2" will be out soon.

You might be right, you might be wrong. The worst that can happen is that you go for a few weeks without an iPhone. But there is a larger point to be made.

We must not have encryption backdoors

These very leaks, and how they are being torn apart for any scintilla of clues in the code, are themselves proof that we must not ever have an encryption backdoor. Like all these features in iOS 14, holes will be found, and exploited.

Although, if you seriously ever doubted this, you're probably in an agency or a company that would benefit from a way to unlock iOS. That's not to be flippant about such agencies. It is to say that they know backdoors are a fallacy, but they continue to pursue them anyway.

Any backdoor will be found, any security lapse will be exploited, and it will be used — those companies that specialize in breaking into iPhones are proof positive. If you ever doubted that, the spotting of everything from icon images to unannounced hardware in an operating system that won't publicly see the light of day until June, surely shows you the truth.

The argument they state publicly is that law enforcement requires this access in order to protect us from people or organizations that would do us harm. Embedded in this is the unspoken idea that the world is divided neatly into goodies and baddies, that only people wearing white hats will use this backdoor.

It boils down to the old stance that if you've got nothing to hide, you shouldn't object to law enforcement being able to see what you do. That argument has always tried to use rhetoric to ride roughshod over civil liberties, but now it's being used as loudly as possible, and solely for political gain.

The safety argument simply does not work. There cannot be anyone in the FBI, or any law enforcement agency in the world, who actually believes in it. If you pay the idea more than even the slightest passing glance, you recognize that what's a backdoor for law enforcement is exactly as much a backdoor for anyone else, and the miscreants outnumber the good guys at least 100 to 1.

Apple under pressure

Apple pulled off a difficult job when it argued against opening a backdoor into iOS in the wake of the San Bernardino shooting. It's precisely when we are most shaken by events like this that cynical agencies can ramp up the pressure and bet on public support.

Apple's steady, calm explanations of its counter-arguments won the day then, but the agencies have tried again after every shooting.

While this has applied to any administration, most recently US Attorney General William Barr has tried to raise the stakes. Even if Apple can successfully make its case for keeping encryption when it could help thieves, shooters, and terrorists, no one can stand against the truth that children need protection from sexual abuse.

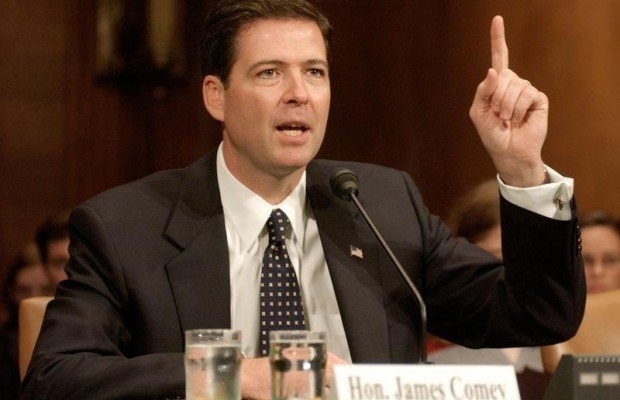

U.S. Attorney General William Barr speaking at the Center for Strategic & International Studies in February

Barr has urged Apple to do more to combat such abuse, and while he avoided using the words backdoor or encryption, every measure he proposed would require precisely those.

This time around, Barr put the spotlight on online child abuse, and at the same time he kept it away from terms like backdoor. He continues to make it sound as if Apple and other big technology firms could open a backdoor with the flick of a switch with no impact to day-to-day security.

You can as easily make an argument that politicians are just uninformed as you can accuse them of playing short-term politics.

Nobody expects politicians to know how to keep sensitive data secure. They haven't demonstrated knowledge yet, and we're not expecting it to suddenly appear. However, everyone expects the Government's own National Security Agency to know — and it does not.

This is the NSA, and it has admitted to multiple breaches of its security leading to the exfiltration of its highly secure hacking tools that it hoards.

Despite this, the NSA's general counsel, Glenn S. Gerstell, is in favor of adding backdoors to iOS. He didn't have a shooting to use as topical leverage, so he wrote his 2019 New York Times opinion piece about it for the day after September 11.

The NSA can't keep its own house in order, and as with so many politicians, it attempts to work not by making a case, but by herding public opinion. It wants to make us champion encryption backdoors when it not only knows they can't work, it knows its own systems are vulnerable even without a backdoor.

It's easy to see the leaders of agencies, and to think of politicians, as somehow separate from government bodies, but together they are supposed to have our safety at heart, and they are failing. Instead, it's a commercial company that is doing the better job.

Apple, whether you like its products or not, exists to make money, not to be a political body. Its hugely successful tools are hugely successful in part because Apple seems to take security more seriously than the government.

Apple's security and anti-leak measures appear to have failed with the leak of iOS 14, but that has endangered corporate trade secrets, not lives. Apple's job is to keep its users' privacy safe, and if anything the government should be working with Apple to keep the public safe, instead of trying to use it as a scapegoat because it wants things to be easy.

This is not about technology

The leak of iOS 14 will not dissuade any government or law enforcement agency from making the specious arguments against encryption, or for backdoors. The gain for these agencies is not greater security for us all, it is the creation of a boogeyman.

Law enforcement, so the idea goes, would be able to prevent every crime and tragedy if only Apple would let them into all iPhones, at the drop of a hat. And if it couldn't have unfettered access, a convenient key under the doormat would be good. The only problem with that argument is that everybody would know the key is under the doormat, as plainly as if it were marked with an arrow and a giant sign saying that it's there.

Tim Cook runs a company whose purpose is to make money, yet he wants to face the DOJ in court because security is so crucial

It is a lot easier to blame a trillion-dollar company for your problems than to admit them or do anything to fix them. It's even easier when big technology companies are cause for genuine concern over privacy and security. We are in a world where Facebook has a billion users, and somewhere in its offices is probably a sign saying "2 Days Without Security Breach."

We are primed to be wary of big tech, and so we should be. There is reason to doubt, and to question, and to be careful about our use of technology. Our entire society needs law enforcement agencies. It just needs them to be doing their job instead of hoping we are giving only the odd passing glance to the problems that face us.

Google doesn't appear to be under a fraction of the pressure Apple is. Android phones hugely outsell iPhones, and given recent user base numbers, Android devices are used in the perpetration of more crimes than iPhones are. But it's Apple that gets called out even by the government.

Even with its security focus, and the commercial benefits that stance gets it, Apple is no more guaranteed to keep its software safe than the NSA is. The last few days clearly demonstrate that willingly opening a door that cannot, by its very nature, be kept secure is a very bad idea.