The U.S. Supreme Court is preparing to hear arguments that could result in major changes to Section 230 — and any ruling will change how the Internet fundamentally works in the US. Here's what you need to know.

The United States Supreme Court will, in late February, be hearing oral arguments in a pair of cases that involve the freedom of speech on the Internet. The case of Gonzalez v. Google will be heard on February 21, while Twitter v. Taamneh will occur on February 22.

The two cases are closely linked, as both deal with the legal responsibilities that platform holders bear for user-generated content.

Depending on what the Supreme Court says, the cases could have a major bearing on how lawmakers handle so-called Section 230 protections, and potentially force companies with online platforms to change how they operate.

What is Section 230?

Section 230 refers to a part of the Communications Decency Act, which was passed in 1996. With the Internet starting to emerge, the Communications Decency Act aimed to try and regulate adult material on the Internet, much like other communication mediums.

However, the creators of the law determined that the potentially freeing discourse of the Internet could be stifled very easily by heavy-handed lawsuits, including those attempting to limit speech under the threat of expensive litigation.

That fear led to the inclusion of Section 230, which provides a form of immunity for websites, apps, and other platforms to exist, without worrying about content posted by third parties, namely other Internet users.

Specifically, Section 230 states that "no provider or user of an interactive computer service shall be treated as the publisher or speaker of any information provided by another information content provider."

In short, the provider of the site that content is hosted on cannot be considered the publisher of that content if it is posted there by someone else.

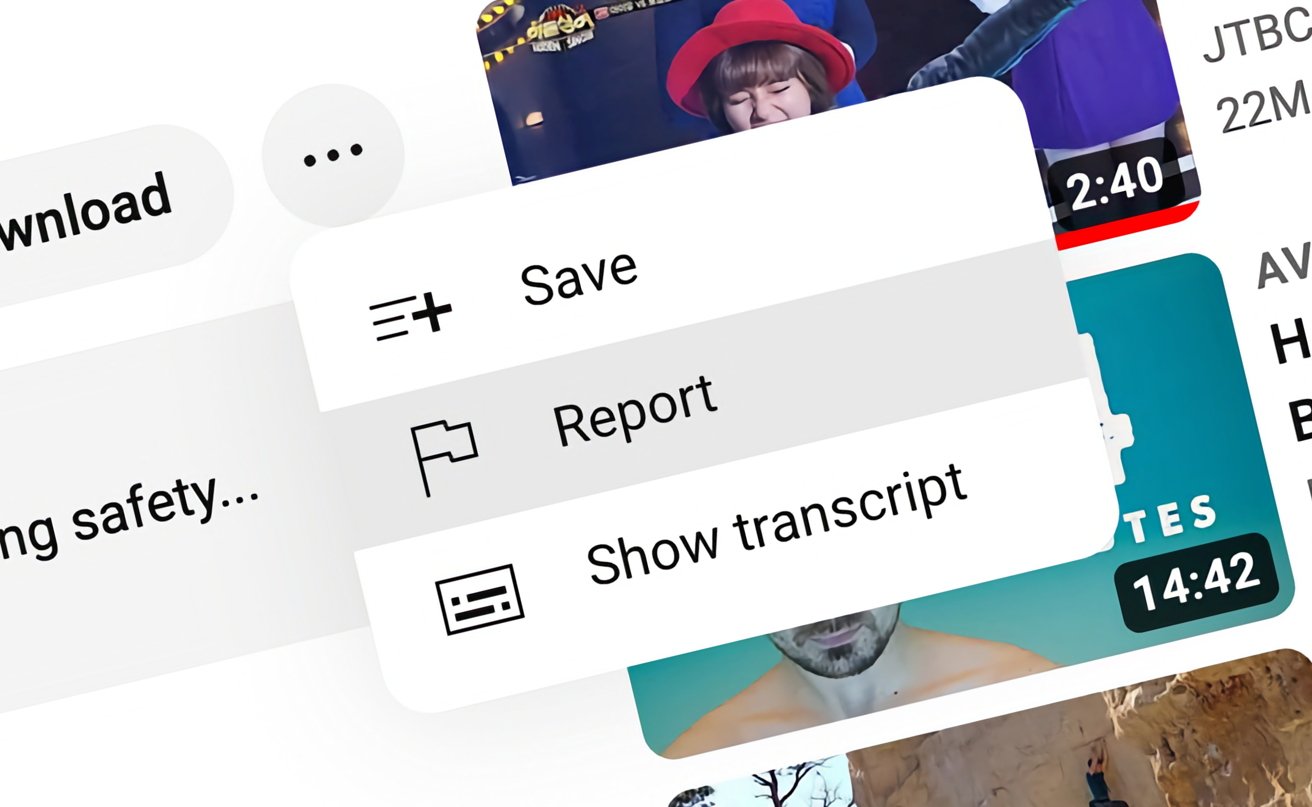

So long as YouTube tries to take down objectionable content where possible, Section 230 protects the company.

A second "Good Samaritan" section provides protection from civil liability for those same interactive computer service operators, so long as they make a good-faith attempt to moderate or take down content from third parties considered "obscene, lewd, lascivious, filthy, excessively violent, harassing, or otherwise objectionable, whether or not such material is constitutionally protected."

As a practical example, consider what happens if someone posts a copyrighted movie, or one depicting illegal content, on YouTube.

Alphabet, YouTube's parent company, would be protected from being sued by the original copyright holder by taking down the video since that's a civil liability for the company. The tech company would also escape punishment for openly hosting objectionable material, since it would be making an effort to take it out of view when alerted, either by users or via its algorithms.

Specifically, the legal responsibility for the unauthorized posting of that content to YouTube would fall on the user who uploaded it in the first place. They would become the target of lawsuits and relevant criminal proceedings, if applicable, not YouTube or Alphabet.

Section 230 has enabled the Internet to grow and function without any real legal problems for content hosts. The problem is that, while the law is helpful in general, it may be considered too helpful to service providers in some cases, offering more shielding than the law's original intended scope.

Shielding a political barrier

A problem with the existence of Section 230 is that some parties believe it is too protective of service providers. The definition of the law is broad on purpose, but that leads to accusations that it is being overused.

Part of that is the definition of the objectionable content that could be considered removable. When it comes to content that could be considered political in nature, the removal of that content may also be thought of as a political commentary, or worse, censorship.

For example, if a big public figure or a follower talks about a political event, amplifying their remarks with death threats or excessively salty language, their content can and should be removed from view, just as it would be for anyone else on the Internet. The problem is that the same commentator could view the taking down of political content as being a censure of political discourse, regardless of the actual words used.

On top of that, a lot of moderation on larger sites like Twitter and Facebook is now automated, alongside teams of humans, simply because there's a lot to get through. Add in the potential for a wide variety of biases to be written into the algorithm, either by active development or as a product of machine learning, and the moderation system itself can come under attack.

This was evident in the various attempts by U.S. lawmakers to take tech companies to task over alleged political censorship. In 2020, tech CEOs were ordered to testify before Congress about Section 230 and allegations that some political viewpoints were suppressed in favor of others.

Apple CEO Tim Cook also met with Republican House leaders in September 2022 to discuss a perceived Big Tech bias against conservative views, though that didn't stop House Judiciary Chairman Jim Jordan (R-OH) from subpoenaing Cook along with other tech CEOs over the matter in February.

Issues about Section 230 are felt on both sides of the fence, but for different reasons.

On the Democratic side, there's the general opinion that tech companies such as Facebook, Google, and others should be held liable for the spread of misinformation. For example, on sensitive topics such as the 2020 election and access to health-related information, Internet users can easily find themselves swamped by conspiracy theories and other misleading chicanery.

Critics of tech companies feel that the platforms hosting content bear some responsibility for hosted content, as well as failures in existing efforts to moderate or take down offending items.

There have been many suggestions from lawmakers on how to fix the assumed issues, including bills to strip protections from ad-monetized platforms if harmful content is discovered.

Two cases

Two cases concerning Section 230 will be argued in front of the Supreme Court in the near future. They concern the protections afforded to tech companies by the law, in two fairly similar situations.

For Gonzalez v. Google, the justices will need to determine whether Section 230 protects platforms when algorithms recommend someone else's content to users.

In this case, the question stems from a lawsuit from the family of Nohemi Gonzalez, a woman who died in a 2015 ISIS attack on a Parisian bistro. The lawsuit, brought under the Anti-Terrorism Act (ATA), claims Google's YouTube aided ISIS recruitment by allowing videos that incited violence to be published, and by recommending those videos to users algorithmically.

Google and YouTube insist they have taken "increasingly effective actions to remove terrorist and other potentially harmful conduct." They also maintain that Section 230 still provides immunity from being treated as a publisher.

In the other case, Twitter v. Taamneh, the justices are to determine whether Twitter and other social platforms could be held liable for aiding international terrorism, again via ISIS's use of the services.

The original lawsuit was brought by the family of Nawras Alassaf, who was among 39 people killed in a January 2017 ISIS attack in Istanbul. It was filed in California under the Anti-Terrorism Act, which allows U.S. nationals to sue anyone who "aids and abets, by knowingly providing substantial assistance" to terrorism.

The logic is that, although Twitter and others knew their services were used by ISIS, the tech companies didn't work hard enough to remove the content from view. Twitter believes that knowing there's terrorism isn't the same as knowing about "specific accounts that substantially assisted" in the attack, which it could directly act upon.

In both cases, the Biden administration is leaning on the side of the families rather than the tech companies. In the Gonzalez case, it argues that Google and YouTube should not be entitled to immunity under Section 230.

However, in the Taamneh case, although the administration agrees a defendant could be held liable under the ATA even if it didn't specifically know about a terrorist attack or provide support for the act, it doesn't think there's enough to demonstrate that Twitter provided more than "generalized support."

The future of the Internet

The conservative-leaning Supreme Court, bipartisan support for changes (albeit with different motives), and the Biden administration all point to there being change on the way. Something will eventually happen about Section 230 protections.

A full repeal of Section 230 is unlikely, as it would quickly become open season for lawsuits affecting almost all possible platforms. And given the low bar for launching a lawsuit, these could be for practically any reason at all.

A tweaking of Section 230 is more plausible, but determining how to do so is a problem in itself. Both sides of the aisle want change, but they want different and incompatible changes.

With most online services being US-centric, any changes to the law will be felt far beyond North America. Services changing their policies regarding user-generated content will affect practically all users, not just those in the U.S.

As evidence of this, consider the implementation of GDPR in Europe. Years after its inception, there are still some U.S. outlets that block European Internet users from viewing news stories, out of an unwillingness to adhere to that continent's data protection standards, even though they're based on a completely different land mass.

There is another route, in that no changes happen to Section 230 at all. This result may seem like the best for tech companies, but it does mean they may be emboldened and feel more protected by Section 230 than ever before.

After all, promises of self-regulation will only go so far before things return to the angst-ridden normal.

That means there will be even more political yelling and more demands from lawmakers to make changes in the future.

The Supreme Court's musings and resulting legal decisions will have a global impact on how the Internet itself functions, for better or for worse. It's like the tagline for Alien Vs. Predator: Whoever wins, we lose.

![Social media companies rely on Section 230 for the business model to function. [Unsplash]](https://photos5.appleinsider.com/gallery/53088-106363-41200-79829-Social-Media-Header-l-xl.jpg)