How to use Live Text for Video in iOS 16

Apple has expanded its Live Text feature from images to video in iOS 16. Here's how to capture text from a video frame, all on your iPhone.

Live Text is a feature that uses intelligent optical character recognition on an image to capture visible text for further use. In short, you can highlight text in an image and do things with it.

Since its introduction in iOS 15 and macOS Monterey, users have been able to use snippets of text from a photograph or image for more than just reading. You can copy the text, translate it, search with it, and use it in other apps without needing to transcribe it manually.

Live Text for video is the natural progression of the feature in that it works with moving images.

Strictly speaking, it doesn't work with actively playing video, only with paused video. Halt a video at a point where you see some text, and you can use Live Text in the same way as you would with a still image, because that's what a paused video effectively is.

Here's how to get started with the new video feature.

How to use Live Text for video in iOS 16

- Use the Camera app to record a video.

- Open Photos and start playing the video.

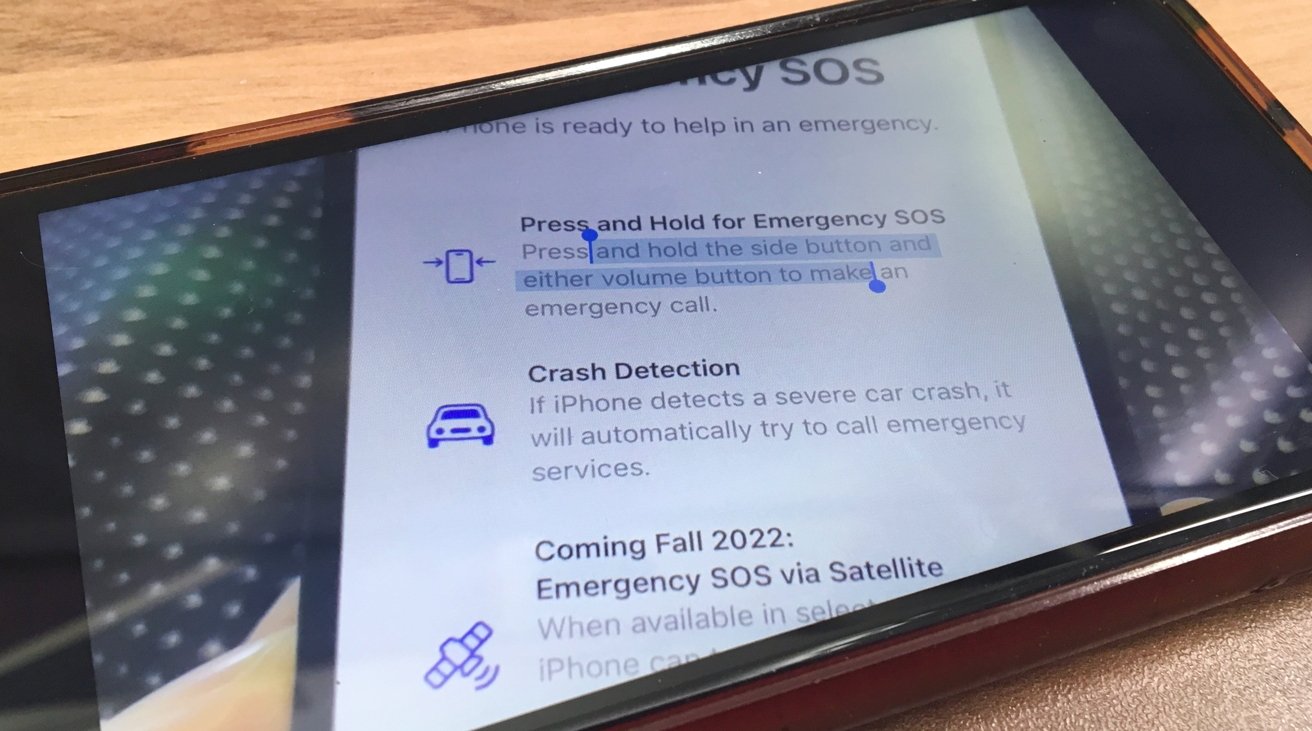

- Scrub through and pause the video at a point where the text you want to use is visible. If the text is blurred or not easily readable, scrub forward to a frame with a clearer shot.

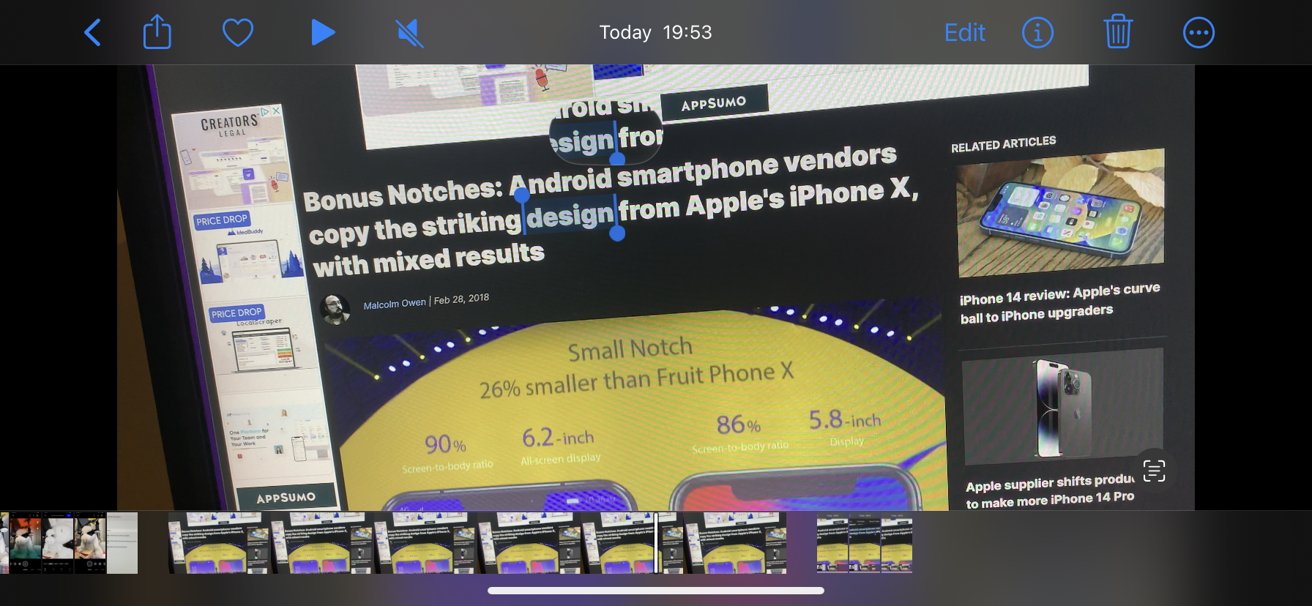

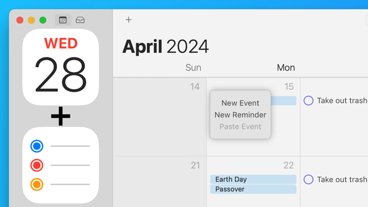

- Long-press on the text until part of it is selected.

- Use the blue indicators to adjust the length of selected text from the visible passage.

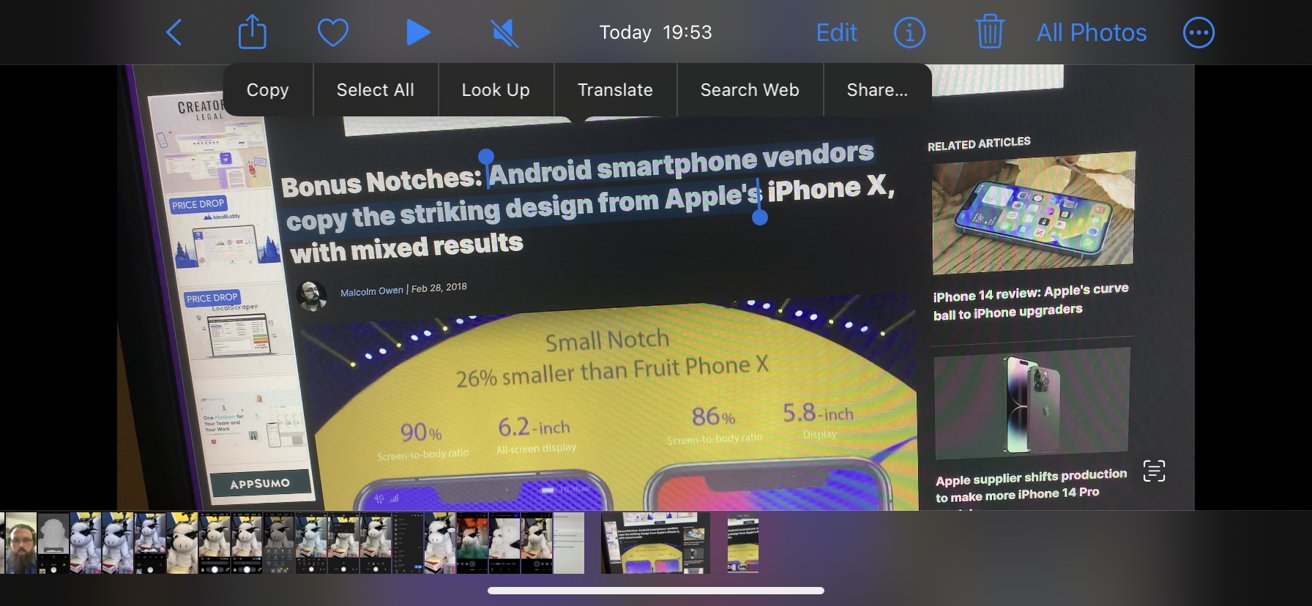

- In the pop-up, select the function you want to use.

These functions cover several areas:

- Copy copies text to the clipboard for you to paste elsewhere, such as another app.

- Select All will select all accessible text within the image.

- Look Up will use Spotlight search to bring up selected results.

- Search Web will open up a tab in Safari to search for the text string.

- Translate will bring up the Translate app and attempt to translate the text for you.

- Share brings up the Share box and allows you to share it as text with other people.

Spotty app support

While support for the feature theoretically runs throughout iOS 16, it doesn't necessarily work in every app.

Apple's own apps are the main ones to offer support. This includes previously recorded videos viewed within the Camera app, videos in a library in Photos, and paused videos in Safari.

In initial testing, it seems that third-party apps that use video don't support the feature at launch. It's possible that support will be added down the line, but it's not usable in those apps right now.

However, many of these apps also have a browser version, which lets you use the feature in a roundabout way.

For example, you cannot use Live Text for video in the YouTube app. But if you open the same video in Safari, you can use Live Text when you pause the clip.

Text Tips

Many of the things you have to consider when using Live Text for video echo what you have to look for in Live Text for still images. There are a few more things to think about, but the general idea of what to do is the same.

When recording, try to position the text so it takes up as much of the frame as possible without clipping off the ends of sentences or the edges of a paragraph. Also, try to keep the text in focus and on screen for as long as possible, to make it easier to capture the perfect frame later on when pausing and using Live Text.

Try not to move the camera too much when recording video. Holding it still for a few seconds is ideal.

Also, record the video at as high a resolution as possible. This will be especially useful for stretches of text in a small font.

After capturing the text using Live Text, make sure to check the text being copied out to other apps. Because stylized fonts are used in various places, there's a chance that some optical recognition mistakes could occur and slip through.

Malcolm Owen
