If Apple is working on a VR or AR headset, it is certainly aware of the different technologies and concepts already used in the market, including the benefits and pitfalls that can be encountered. AppleInsider provides an overview of the current state of the two technologies, and what Apple needs to consider for creating its own headset design.

Editor's note: Given the recent news about the expected 2020 shipment of Apple's Smart Glasses, a second look at what a company needs to make a good AR or VR headset or smart glasses is more relevant than ever.

Despite being a relatively small and young industry, the market for virtual reality and augmented reality-based devices already has a wide variety of different designs to choose from. As the price changes, so does the hardware, and the functionality, with more expensive headsets generally offering a higher-quality experience.

Rumors and speculation from analysts point to Apple entering the VR or AR marketplace in the next few years, but aside from some patent filings, it is unknown what exactly to expect from the iPhone producer's fabled hardware. As a premium device producer in multiple markets, looking at the better and more popular headsets on the market could provide some indication of how Apple's version could turn out.

In creating the headset, Apple faces the task of assembling a wide array of components and usage concepts, each with its own positive and negative points.

Display

Arguably one of the most important elements of AR and VR headsets, the screen or screens are stared at by users for minutes or hours at a time. A good-quality display is essential, typically one with a resolution high enough that the user is unlikely to notice individual pixels, which would break the VR effect.

The refresh rate also needs to be high enough that the screen can update without the user noticing any jumping between frames. Minimal lag between the user moving and the image changing on the screen is also essential, in part to keep the illusion intact, and in part to minimize the chance of motion sickness for some individuals.

Some early headsets relied on two separate screens to show each eye an individual image, but this was an expensive concept to use. Later headsets, including the Oculus Rift development kits, instead opted for a single larger screen viewed through a lens for each eye, effectively dividing the screen in half.
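To put rough numbers on the split-panel idea, the sketch below estimates how many pixels cover each degree of the user's view when one panel is shared between two eyes. The panel width and field of view are hypothetical figures chosen for illustration, and the calculation assumes pixels are spread evenly across the view, which real lenses do not do.

```python
def per_eye_pixels_per_degree(panel_width_px, horizontal_fov_deg):
    """Approximate angular pixel density when one panel is split between two eyes."""
    per_eye_width_px = panel_width_px / 2  # each eye sees half of the shared panel
    return per_eye_width_px / horizontal_fov_deg


# Hypothetical panel: 2160 pixels wide, with lenses giving each eye a 100-degree view.
ppd = per_eye_pixels_per_degree(2160, 100)
print(f"{ppd:.1f} pixels per degree")  # prints "10.8 pixels per degree"
```

The lower this figure, the easier it is for the wearer to pick out individual pixels, which is why a panel that looks sharp in a phone can look coarse in a headset.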

The single display route saves money when compared to creating two smaller high-resolution screens, but technology has advanced enough that it makes the dual-screen headset viable again, such as with the Magic Leap One Lightwear AR headset. A dual-screen approach could also result in faster refresh rates, due to updating two smaller screens simultaneously instead of the longer process for one larger display panel.

The single panel approach also led to the creation of cheap headsets that use a high-resolution display many people already have: their smartphone. Google Cardboard, Samsung's Gear VR, and numerous others use the concept, and even Apple has seemingly caught on in some patent filings, showing glasses with space to insert an iPhone in front of the user's eyes.

It is worth touching on two other display pitfalls that have become less of a problem now, but were noticeable issues in earlier modern headsets.

The "Screen Door" effect was a problem caused by manufacturing the display with pixels that were slightly too far apart, causing a dark grid created by the unlit space between lit pixels to form. These screens looked acceptable for use in other devices, but the close-up nature of VR headsets meant the gaps were more noticeable, giving the effect of looking through the mesh of a screen door.

Mura is a problem with color and brightness uniformity, in that neighboring pixels displaying the same color could show different shades, or even the same shade at slightly different brightnesses. This can sometimes give a screen door-like appearance, especially when large blocks of the same color are displayed.

An example of "Mura," where all pixels in a section of a display are set to show the same color, but vary due to the way it was manufactured.

Generally, these two issues have been solved by VR headset producers in their latest releases, but older and cheaper designs will still suffer from these effects.

AR Display Challenges

The main difference between VR and AR hardware is how it provides users with the visual image. While sensors and other components could largely be the same as in VR, the different ways an AR picture can be displayed mean that an entirely different headset could be used compared to a VR version.

One way to show an AR scene is to effectively use a VR headset with a live video feed from onboard cameras. The video is sent to the host computer, which then overlays the AR element and sends the composite image back to the headset for viewing.

This system is straightforward and can give what could be the optimal digital-item-in-real-world effect, but it has two drawbacks. First, there are the problems associated with VR headsets in general, including lag that can cause nausea, limitations to movement and range, and so on.

Second, there's the user's expectation of seeing digital extras added to their view of the real world, a live view unimpeded by screens. People imagine smart glasses as they appear in TV shows and movies, namely Google Glass but better.

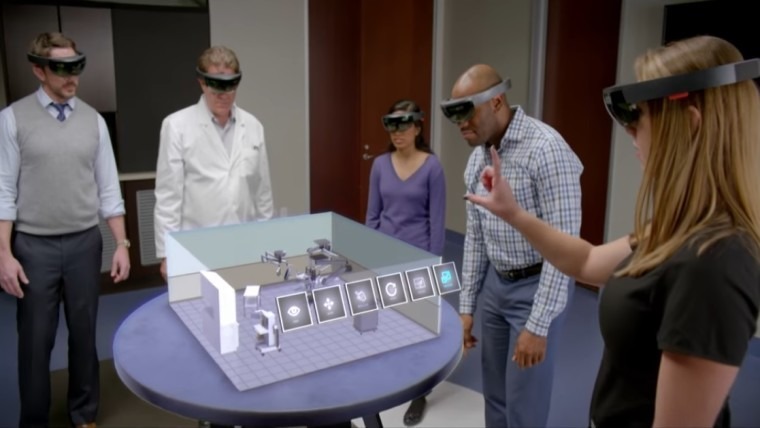

While that's desired, no system currently exists that can provide an augmented reality image in that form. AR headsets do exist and can be bought, such as the Microsoft HoloLens, but they are a lot bulkier than movie magic pretends is possible.

The mixed-reality HoloLens uses a transparent panel that users look through to the outside world, underneath a large headband containing all the sensors and computing power the HoloLens needs to map the environment and the user's movements. A projection system then overlays the image onto these panels, superimposing the view over the world.

As the position of the eyes isn't uniform across all users, AR headsets will also need to figure out where the eyes are located, usually with an eye-tracking system. In some implementations, this can mean beaming light into the eyes and monitoring any reflections of light bouncing off the retina.

Knowing where the eyes are, and where the user is looking, helps line up the virtual object with the live real-world view more accurately.

Sensors and Motion

For a headset to be effective, it needs to have information about the world around it. To correctly produce the scene viewed by the user, the host computing device requires data on movements, both for the current image and in anticipation of the next.

At a base level, this consists of using motion sensors, including gyroscopes and accelerometers, to analyze the head's movement with six degrees of freedom. Accelerometers can help detect how far the head is moving in various directions, while gyroscopes are used to measure any tilting movements of the headset.
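One common way to combine the two sensor types is a complementary filter, which leans on the gyroscope for quick changes and on the accelerometer's sense of gravity to correct long-term drift. The sketch below is a single-axis illustration of that general technique, not a description of what any particular headset does; the function name, the `alpha` weighting, and the sample readings are all assumptions.

```python
import math


def complementary_filter(pitch_deg, gyro_rate_dps, accel_y, accel_z, dt, alpha=0.98):
    """Blend the integrated gyroscope rate with the tilt implied by gravity."""
    # Integrating the gyroscope is responsive, but the estimate drifts over time.
    gyro_pitch = pitch_deg + gyro_rate_dps * dt
    # Gravity gives a drift-free tilt reading, but it is noisy during movement.
    accel_pitch = math.degrees(math.atan2(accel_y, accel_z))
    return alpha * gyro_pitch + (1 - alpha) * accel_pitch


# Hypothetical samples at 100 Hz: (gyro rate in deg/s, accel y in g, accel z in g).
samples = [(10.0, 0.00, 1.00), (10.0, 0.02, 1.00), (0.0, 0.03, 1.00)]
pitch = 0.0
for rate, ay, az in samples:
    pitch = complementary_filter(pitch, rate, ay, az, dt=0.01)
print(f"estimated pitch: {pitch:.2f} degrees")
```

A real headset tracks all three rotation axes and typically uses more sophisticated sensor fusion, but the trade-off between fast and stable measurements is the same.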

Depending on the exact setup required to operate the headset, there may also be the need for cameras or other imaging sensors placed externally. This provides a few things for the headset, such as giving users the ability to see the real world around them without taking the headset off in some apps, as demonstrated with the HTC Vive's Chaperone system.

For augmented reality, these cameras would provide the real-world views that either become the backdrop for the virtual objects to appear on, or can be used to compute where objects can be placed. If multiple cameras are used on the front, this can provide a stereoscopic view of the world and an improved AR effect.
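The reason two front cameras help is that a nearby object appears in a noticeably different position in each camera's image, while a distant one barely shifts. Under the standard pinhole stereo model, depth is the focal length multiplied by the camera separation, divided by that shift (the disparity). The following is a minimal sketch of that formula, with a hypothetical camera rig:

```python
def depth_from_disparity(focal_length_px, baseline_m, disparity_px):
    """Pinhole stereo model: depth = focal length * baseline / disparity."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of both cameras")
    return focal_length_px * baseline_m / disparity_px


# Hypothetical rig: 700-pixel focal length, cameras 6.5 cm apart,
# and a feature that shifts 35 pixels between the two images.
depth_m = depth_from_disparity(700, 0.065, 35)
print(f"{depth_m:.2f} m")  # roughly 1.3 metres away
```

With a depth estimate for surfaces in view, the software has a better idea of where a virtual object can sit and what should appear in front of it.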

Some VR headsets, especially room-scale versions, can use emitters at strategic locations to provide the headset with even more data about its relative position within the space. For example, the "lighthouse" method used by SteamVR requires at least two base stations in opposite corners of the space, typically high up so they are visible from everywhere in the room without being obscured, whose signals are picked up by light sensors located on the headset.
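The article does not go into how the headset turns those signals into a position. One scheme for this kind of tracking, assumed here purely for illustration, has each station emit a sync flash followed by a beam that sweeps across the room at a fixed rotation rate, so the delay before the beam hits a sensor reveals the sensor's angle from the station. The function name and the 60 rotations per second figure below are assumptions.

```python
def sweep_angle_deg(t_sync_s, t_hit_s, rotations_per_second=60.0):
    """Angle of a sensor from the station, from the sync-to-hit delay of a sweeping beam."""
    elapsed_s = t_hit_s - t_sync_s
    # The beam covers 360 degrees per rotation, so elapsed time maps directly to angle.
    return (elapsed_s * rotations_per_second * 360.0) % 360.0


# Hypothetical timing: the beam reaches the sensor 1/240 of a second after the sync flash.
angle = sweep_angle_deg(0.0, 1 / 240)
print(f"{angle:.1f} degrees")  # a quarter of a rotation
```

With angles from horizontal and vertical sweeps, measured at several sensors and from more than one station, the headset's position and orientation can be worked out geometrically.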

As with the display, the sensors also need to operate accurately and at speed, both in acquiring data and in sending it to the host for processing. The lag in producing and transmitting the data adds to the overall delay in the VR scene responding to the user's movements, and as already explained, too much of a delay could break the VR illusion or cause motion sickness. Minimal lag is mandated here.
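A simple way to think about this is as a budget: every stage between the head moving and the new image appearing adds its own delay. The sketch below sums hypothetical stage timings, treating a full refresh interval as the worst-case wait at the display. The stage names and figures are illustrative assumptions, not measurements from any headset.

```python
def motion_to_photon_ms(sensor_ms, transfer_ms, render_ms, refresh_hz):
    """Sum the stages between a head movement and the updated image appearing."""
    display_ms = 1000.0 / refresh_hz  # worst case: wait one full refresh interval
    return sensor_ms + transfer_ms + render_ms + display_ms


# Hypothetical stage timings in milliseconds, with a 90 Hz display.
total = motion_to_photon_ms(sensor_ms=1.0, transfer_ms=2.0, render_ms=7.0, refresh_hz=90)
print(f"{total:.1f} ms")  # prints "21.1 ms"
```

Shaving time from any one stage helps, but a slow display or a slow link to the host can eat the whole budget on its own.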

In the cases of Gear VR, Cardboard, and others that are based around smartphones, the phone's existing onboard sensors are the ones used for VR and AR. There is minimal lag from transmitting data between components, leaving processing power as the only likely cause of slow response times.

For AR that uses translucent screens, the external cameras will be vital for computational purposes more than for giving the user a decent view. The computer would need to know about the environment, including flat surfaces and empty space in the air where it could feasibly place objects, and to discover points of reference so it can keep said digital items anchored in place.

Sensors for eye tracking would also be extremely useful for AR, not only for determining placement for transparent or translucent displays, but also for applying depth of field and other effects on the digital object, depending on what the user is focusing on.

Scale

Generally speaking, there are two levels of scale that need to be considered when producing a VR-capable headset.

The first is room-scale, which relies upon beacons in an environment to help the headset understand its location. For home use, this uses the aforementioned "lighthouse" system in most cases, with two beacons in opposite corners of the space. This can give users enough space to walk around, albeit only within the confines of the room.

For home users, the range of movement is also restricted by the length of cables running from the VR headset to a host computer, providing video, audio, and data transfers. This may be less of a problem in the future, as manufacturers are working on ways to wirelessly transmit video and data between the headset and host.

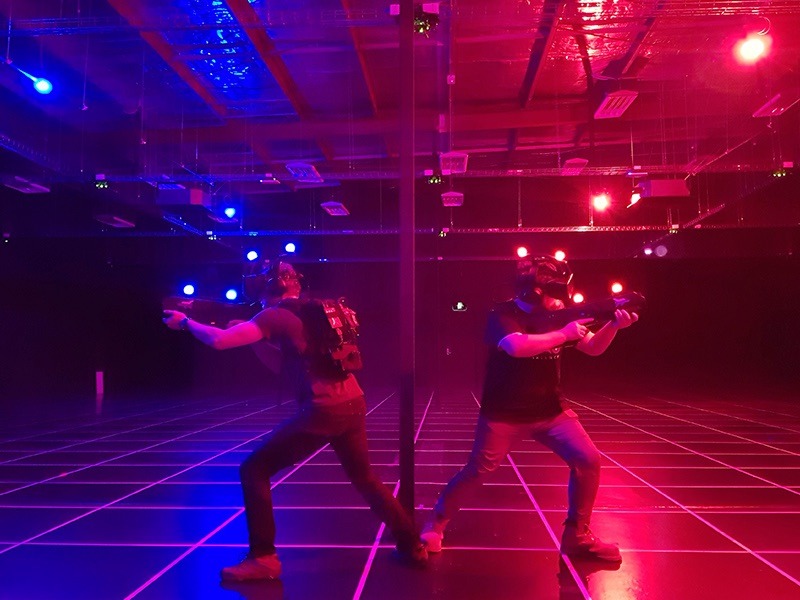

This concept can also be expanded to larger spaces in entertainment venues, as with Zero Latency's system, where the walls and ceilings of a large space are covered with beacons. For this, groups of users can wear computers in a backpack along with the headset, allowing for tether-free roaming of an environment.

VR also works on a much smaller scale, as with Google Cardboard, Samsung's Gear VR, and the Oculus Go, which are designed to be used with as little available space as possible. As they do not need to analyze the rest of the world, instead relying on measuring movement, these can easily be used from a chair, but do not offer the ability to walk around a room.

As AR headsets rely so much on monitoring the outside world without needing to use externally-mounted sensors, they could feasibly be used anywhere. In the case of HoloLens-style hardware, there's the possibility of walking around a large environment, as the user's sight isn't impeded by a display.

The potential for collaborative work also means that scale plays a major part in how group sessions can be conducted, both in VR and AR. Aside from requiring more physical space due to more bodies being involved, it also raises the issue of the headsets needing to take into account each other's positions.

For tethered lighthouse-based systems, each headset would need to determine its location in space and provide that to its host, which then shares the data with the host computers of other users while acquiring up-to-date information on the others. This is essential data, as otherwise users would be blind to each other's positions and could collide.
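Once positions are being exchanged, the safety check itself can be simple. As a minimal sketch, assuming each host reports its user's floor position in metres in a shared coordinate system, the following flags any pair of users closer than a chosen distance. The function name, the user names, and the one-metre threshold are all hypothetical.

```python
import math
from itertools import combinations


def too_close(positions, min_distance_m=1.0):
    """Return pairs of users whose shared positions are closer than min_distance_m."""
    pairs = []
    for (name_a, pos_a), (name_b, pos_b) in combinations(positions.items(), 2):
        if math.dist(pos_a, pos_b) < min_distance_m:
            pairs.append((name_a, name_b))
    return pairs


# Hypothetical floor positions in metres, as each host might report them.
shared = {"alice": (0.0, 0.0), "bob": (0.6, 0.3), "carol": (3.0, 2.0)}
print(too_close(shared))  # prints [('alice', 'bob')]
```

The harder part in practice is keeping that shared data fresh, since a warning based on stale positions arrives too late to be useful.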

In HoloLens-style AR, where the real-world view is constantly available, this data is not as urgent as there are no vision-based safety issues, but data does still need to be shared for interactions with virtual objects within apps, for example. Data acquired from sensors regarding the local environment could feasibly be shared with others, depending on the application, but ultimately little data would need to be shared with other participants.

Audio

In many VR and AR applications, it's desirable for the user to hear things. Using built-in headphones, a speaker, or even including some way to connect third-party headphones to the headset are all acceptable ways of providing this, but there are still some things that need to be considered.

Enclosing the user's ears will help immerse them in a VR world, such as a game, by minimizing distractions. There's also the option of using surround sound headphones with multiple speakers for each ear, though this could easily be simulated in software using standard stereo headphones.

At the same time, opting for a system that still allows the user to hear the nearby environment would be preferable, both from a collaborative viewpoint for work, and for safety. For AR headsets that may be used in a wide variety of locations, hearing the surrounding area would most likely be essential, and if the headset is only to be used for brief periods, audio could arguably be left off the device entirely.

Given the variety of situations, it is probable that headset producers will lean towards a basic on-device audio solution, while still allowing users to plug in their own headphones if needed.

Comfort

While not a technological issue, the ability to wear a headset for a long period of time can be a major factor in a purchasing decision.

The first question is whether the headset is designed to be worn or held. A headset like the Oculus Rift is meant to be worn on the head, freeing up the hands for other peripherals and accessories, but if it is too heavy, it could press on parts of the user's face or add strain to their neck.

The handheld version would be more appropriate for group or brief usage periods, making it ideal for AR applications around a city when on vacation. At the same time, these systems would tie up one or both of the user's hands while in use, limiting what the user could accomplish in the virtual environment.

Another weight-related concern is where the host computer resides. For handheld VR and AR, this could be a smartphone slotted into the headset itself, as with Google Cardboard and Samsung's Gear VR. Another option is to connect the headset to a waist-worn unit, as with the Magic Leap One Creator Edition.

There is also the option of connecting the headset to a host by long cables, a system currently used by the HTC Vive and Oculus Rift, which offloads processing to a nearby computer. This does prevent any extra weight from being added directly to the headset, but at the same time it introduces cables that could pull on the headset while it's being worn, or could get in the way underfoot while in use.

Usability

In an ideal world, a VR or AR headset would require as little as possible in the way of tuition or setup to get going. In our current reality, we're not quite at that point, but we're getting there.

Starting off with room-scale VR setups, there's the need to connect the headset to a host by cables, the placement of the "lighthouses," and the setup of the equipment, which could put off less determined users.

Handheld smartphone-based headsets or viewers are easier, as setup could be as simple as sliding the smartphone into place and running an app. An even easier prospect is the Oculus Go, as it is self-contained and does not require a host to operate, doesn't use lighthouses or similar external elements that need to be configured, and is supplied with a simple controller.

Of course, setting up the hardware is only part of the equation. The setup experience on the software side is another matter entirely, and then there's the applications themselves.

Depending on the complexity of each type of VR or AR headset, there will be different requirements that need to be met by app developers to use the hardware. At the same time, they will also need to tailor the user experience of the app depending on the available resources, including how the user interacts with the virtual space or digital AR items, and their range of motion.

Given the variety of both hardware and software in use today, there is no single way to get started in VR or AR, from either a hardware or software side.

But, where does Apple come in?

All this being said, not every user wants to throw tons of cash at a device when they can get a good experience at a cheaper price. VR enthusiasts will want room-scale setups and the equipment to do practically anything on a digital landscape, but someone's aunt or uncle who wants to see what this VR thing is all about could probably be satisfied with an iPhone shoved into Google Cardboard for ten minutes.

There are other considerations to take into account as well, like a lack of available space at home for room-scale VR. In cases like these, items like the Oculus Go may be a better option.

Headset-based AR is available, but is nowhere near as mature a technology as VR. Apple and third parties have taken large strides towards AR becoming a popular feature of apps ever since the introduction of ARKit, but outside of Microsoft's HoloLens and its related initiatives, AR hardware is nowhere near VR in terms of wide commercial viability.

Is Apple working on a VR or AR headset? It's likely, as patent applications and Apple safety reports do indicate that related technologies are being investigated, but this is by no means a sign that a hardware launch is on the horizon.

What route is Apple likely to follow? Given Apple's nature of creating mass-consumer products, the current room-scale system with a separate host is probably out of the question. An easier-to-use headset closer to the Oculus Go is more likely, reducing the barrier to entry to a financial transaction rather than technical knowledge.

Apple also has the benefit of extensive integration knowledge and its control over the device's software, its firmware, and the entire manufacturing pipeline. The company's ability to make high-performance iPhones despite having nominally lower baseline specifications than its rivals in some areas, in part due to this control, suggests any standalone VR or AR headset would be engineered to a similarly high standard.

Apple AR glasses patent image

In October 2017, Apple CEO Tim Cook noted that AR was an important, but still gestating, technology that could have "exponential" growth, but declined to provide details about anything Apple may be working on. In the interview, he said "I can tell you that the technology itself doesn't exist to do that in a quality way. The display technology required, as well as putting enough stuff around your face - there's huge challenges with that."

Cook also noted Apple wants to be the best, rather than first to market, and give a great experience to its customers. "But now anything you would see on the market anytime soon would not be something any of us would be satisfied with," suggested Cook. "Nor do I think the vast majority of people would be satisfied."

Given Apple's history of focusing on the user experience, it could be a long wait before consumers get to try out an Apple-produced AR headset. Current estimates from Loup Ventures analyst and Apple observer Gene Munster suggest such hardware could arrive by 2021.

In the meantime, anyone wanting to have an Apple-driven AR experience will have to settle for apps running ARKit on their iPhone or iPad.