In the constant battle to give consumers the best smartphone camera, one area where Apple has yet to innovate is optical zoom, which could benefit from a so-called "periscope lens." We explain what the technology does, and how it could increase the zoom range on the iPhone and other products with imaging capabilities.

Over the years, smartphone producers have worked hard to give consumers the best possible user experience, and photography is a big part of this. Camera hardware improvements have led to higher-resolution photographs, better low-light performance, and optical image stabilization.

The smartphone camera war has also focused heavily on computational photography, where onboard algorithms, sometimes combining data from multiple camera sensors, can produce a more detailed image than a single camera could on its own.

However, one element of photography remains a problem that smartphone producers are working hard to conquer: how far a user can optically zoom.

Space, or the lack of it

The key problem smartphone producers face is that optical zoom requires both a combination of lens elements and the space to house them. For a camera lens to offer a range of zoom levels, expressed as focal lengths, those lens elements also have to move, which can take up even more space.

The issue here is that smartphones don't typically have that much internal volume to begin with.

The average smartphone is only around a third of an inch thick; the iPhone 12 Pro range, for instance, measures 7.4mm (0.29 inches). That isn't much room to begin with, and that space has to be shared with other components.

Even taking into account the extra camera bump, there's simply not enough space for elaborate telephoto lens arrangements in the thin casing of an iPhone.

Glass, casing, the display assembly, and many other components take up some of that thickness, leaving just enough for a thin camera module. Apple gains some extra depth with its camera bump, but that doesn't buy much room for the camera.

The lens assembly on top of the sensor has no room for significant moving parts, or for an advanced arrangement of lens elements that could reach distant zoom levels, so a fixed lens assembly is used. Some movement is possible, such as for focusing or optical image stabilization, but there isn't enough space to extend the optical zoom.
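To put rough numbers on the space problem, the Python sketch below converts a 35mm-equivalent focal length into the physical focal length a small sensor needs, using the standard crop-factor relationship. The 26mm main-camera equivalent and the 6mm sensor diagonal are illustrative assumptions, not published iPhone specifications. Real telephoto lens designs can also be somewhat shorter than their focal length, so treat the output as an indication of scale only.

```python
import math

# Diagonal of a 36 x 24 mm "full-frame" sensor, the reference for 35mm-equivalent focal lengths.
FULL_FRAME_DIAGONAL_MM = math.hypot(36.0, 24.0)  # about 43.27 mm


def crop_factor(sensor_diagonal_mm: float) -> float:
    """How many times smaller a sensor's diagonal is than full frame."""
    return FULL_FRAME_DIAGONAL_MM / sensor_diagonal_mm


def physical_focal_length_mm(equivalent_focal_mm: float, sensor_diagonal_mm: float) -> float:
    """Actual focal length giving the same field of view as a 35mm-equivalent lens."""
    return equivalent_focal_mm / crop_factor(sensor_diagonal_mm)


# Illustrative assumptions only, not published specifications:
MAIN_EQUIVALENT_MM = 26.0   # assumed 1x main-camera equivalent focal length
SENSOR_DIAGONAL_MM = 6.0    # assumed small smartphone sensor diagonal
PHONE_THICKNESS_MM = 7.4    # iPhone 12 Pro thickness quoted above

if __name__ == "__main__":
    for zoom in (1.0, 2.5, 5.0, 10.0):
        equivalent = MAIN_EQUIVALENT_MM * zoom
        focal = physical_focal_length_mm(equivalent, SENSOR_DIAGONAL_MM)
        ratio = focal / PHONE_THICKNESS_MM
        print(f"{zoom:4.1f}x: {equivalent:5.1f} mm equiv -> {focal:5.2f} mm "
              f"({ratio:.1f}x the phone's thickness)")
```

Even under these loose assumptions, the physical focal length grows in direct proportion to the zoom factor. That is why long telephoto lenses quickly outgrow a phone's thickness.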

To work around the lack of a true zoom lens, smartphone producers fit multiple cameras on the back, each with a different fixed lens assembly covering telephoto, wide, or ultra-wide shots. By switching between cameras, and therefore between lenses, the smartphone can change its zoom level in discrete steps.

To give users a continuously adjustable zoom, smartphone producers augment these optical steps with digital zoom. This usually involves cropping the image that comes off the sensor, then upscaling the crop to the resolution users expect.
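The crop-and-resize process can be sketched in a few lines of Python. This minimal illustration works on a small grayscale array and uses nearest-neighbor scaling, the simplest possible interpolation. It is not how any particular phone implements digital zoom; real pipelines use far more sophisticated resampling and processing.

```python
from typing import List

# A grayscale image as a list of rows of pixel values.
Image = List[List[int]]


def center_crop(image: Image, zoom: float) -> Image:
    """Keep the central 1/zoom of the frame in each dimension."""
    height, width = len(image), len(image[0])
    crop_h = max(1, round(height / zoom))
    crop_w = max(1, round(width / zoom))
    top = (height - crop_h) // 2
    left = (width - crop_w) // 2
    return [row[left:left + crop_w] for row in image[top:top + crop_h]]


def upscale_nearest(image: Image, out_h: int, out_w: int) -> Image:
    """Resize by repeating the nearest source pixel; no new detail is created."""
    in_h, in_w = len(image), len(image[0])
    return [
        [image[y * in_h // out_h][x * in_w // out_w] for x in range(out_w)]
        for y in range(out_h)
    ]


def digital_zoom(image: Image, zoom: float) -> Image:
    """Crop the center, then scale it back up to the original resolution."""
    cropped = center_crop(image, zoom)
    return upscale_nearest(cropped, len(image), len(image[0]))


# A 4x4 test frame with pixel values 0..15.
frame = [[4 * row + col for col in range(4)] for row in range(4)]
print(digital_zoom(frame, 2))
# [[5, 5, 6, 6], [5, 5, 6, 6], [9, 9, 10, 10], [9, 9, 10, 10]]
```

Every output pixel is copied from the cropped region. The "zoomed" image is therefore the original resolution in name only: it contains no detail that wasn't already in the four cropped pixels.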

While this is useful and lets a phone offer a range of focal lengths, it isn't a great solution. Digital zoom typically produces a worse image than optical zoom at the same level, because it interpolates existing data to create extra pixels rather than capturing more detail.

Even so, digital zoom lets smartphone producers market their devices as zooming to far higher levels, which is great for advertising purposes.

For example, the iPhone 12 Pro Max has an optical zoom range running from 2x out (the 0.5x ultra-wide camera) to 2.5x in (the telephoto camera). Adding digital zoom extends this to 12x.
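The arithmetic behind those figures is simple. The sketch below makes two assumptions for illustration: that zoom beyond 2.5x comes from cropping the telephoto camera's output, and that the telephoto sensor is 12 megapixels. Neither detail is stated in the figures above.

```python
# Zoom factors for the iPhone 12 Pro Max as quoted above, relative to the 1x wide camera.
ULTRA_WIDE_ZOOM = 0.5      # "2x out"
TELEPHOTO_ZOOM = 2.5       # "2.5x in"
MAX_ZOOM = 12.0            # with digital zoom
# Assumption for illustration: a 12-megapixel telephoto sensor.
TELEPHOTO_MEGAPIXELS = 12.0


def optical_range(widest: float, longest: float) -> float:
    """Ratio between the longest and widest optical zoom levels."""
    return longest / widest


def digital_factor_beyond(optical_max: float, target: float) -> float:
    """Extra crop-and-upscale needed on top of the longest optical lens."""
    return max(1.0, target / optical_max)


def fraction_of_sensor_used(digital_factor: float) -> float:
    """Cropping by k in each dimension keeps 1/k**2 of the pixels."""
    return 1.0 / digital_factor ** 2


extra = digital_factor_beyond(TELEPHOTO_ZOOM, MAX_ZOOM)
print(optical_range(ULTRA_WIDE_ZOOM, TELEPHOTO_ZOOM))  # 5.0
print(extra)  # 4.8
print(round(TELEPHOTO_MEGAPIXELS * fraction_of_sensor_used(extra), 2))  # 0.52
```

Under those assumptions, a 12x shot would be built from roughly half a megapixel of real telephoto data, stretched to fill a full-size image.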

For smartphone producers, the question remains: how to add more of that better-quality optical zoom.

Early zoom enhancement attempts

Some early smartphones tackled the problem in different ways, with varying levels of success.

The Nokia Lumia 1020 took the route of starting with a huge imaging sensor. Its 41.3-megapixel sensor was a major advantage at its launch in 2013, giving it much more leeway when zooming into a scene.

Rather than using a true optical zoom, the camera took advantage of its sheer resolution to crop the image from the sensor to match the zoom level the user wanted, instead of scaling or interpolating the image to resize it.

The resulting images still had highly usable resolutions, even after considerable cropping.
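A Lumia-style crop zoom trades resolution for reach: cropping by a factor of z in each dimension keeps 1/z² of the sensor's pixels. The sketch below uses the 41.3-megapixel figure from above. The 5-megapixel output target is a hypothetical choice for illustration.

```python
import math

LUMIA_1020_MEGAPIXELS = 41.3  # sensor resolution quoted above


def megapixels_after_crop(sensor_mp: float, zoom: float) -> float:
    """Crop-only zoom keeps 1/zoom**2 of the sensor's pixels, with no interpolation."""
    return sensor_mp / zoom ** 2


def max_crop_zoom(sensor_mp: float, output_mp: float) -> float:
    """Largest crop-only zoom that still yields output_mp megapixels of real data."""
    return math.sqrt(sensor_mp / output_mp)


for zoom in (1, 2, 3):
    print(f"{zoom}x crop: {megapixels_after_crop(LUMIA_1020_MEGAPIXELS, zoom):.1f} MP")

# Hypothetical 5-megapixel output target.
print(round(max_crop_zoom(LUMIA_1020_MEGAPIXELS, 5.0), 2))  # 2.87
```

Because the loss is quadratic, even a very high-resolution sensor only supports a few times of crop-only zoom before the output drops below a typical photo resolution.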

Another early approach was to borrow the classic zoom mechanism from compact cameras. Devices like the Samsung Galaxy S4 Zoom, also from 2013, included a camera system whose lens could telescope out from the body, repositioning the lens elements to provide an extended optical zoom.

The system worked, providing a 10x optical zoom on a fairly conventional 16-megapixel sensor, but it solved the space problem at the cost of aesthetics. Consumers wanted thinner smartphones, not what amounted to compact cameras with smartphone capabilities.

Creating space in this way worked, but not in a consumer-friendly way. What vendors needed was a way to manage space without sacrificing the device's appearance.

Enter periscope lenses

The idea behind "periscope lenses," also known as "folding lenses," is to increase the space available to a lens within a confined area by taking advantage of how light reflects. The principle is similar to a submarine's periscope, except that here it is used purely to redirect light inside a smartphone.

Rather than pointing a camera sensor directly out the back of a smartphone, the sensor is placed on its side within the phone's limited thickness. While vendors can't do much about the overall thickness of a smartphone, they can arrange its internal components to give the camera more lateral space to use.

Lenses are placed in front of the camera sensor as normal, but a mirror or prism redirects light entering through an aperture on the back of the smartphone into that lens stack. In effect, the camera shoots an image around a corner, using the power of reflection.
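A deliberately simplified geometric model shows why turning the sensor on its side helps. In an upright module, the whole optical track runs through the phone's thickness. In a folded module, the depth is set only by the tallest component, and the track runs along the body instead. Every dimension in the sketch is a hypothetical value chosen for illustration, and the model ignores real-world factors such as actuators, mounting hardware, and cover glass.

```python
from dataclasses import dataclass


@dataclass
class CameraModule:
    """Simplified camera module; every dimension is a hypothetical input in mm."""
    track_length: float    # lens stack plus sensor distance along the optical axis
    lens_diameter: float   # widest lens element
    sensor_height: float   # sensor edge that stands upright when the module is folded
    prism_size: float      # edge length of the 45-degree fold prism or mirror


def upright_depth(m: CameraModule) -> float:
    """Sensor faces out the back: the whole optical track runs through the phone's thickness."""
    return m.track_length


def folded_depth(m: CameraModule) -> float:
    """Sensor on its side: depth is set by the tallest part, not by the track length."""
    return max(m.prism_size, m.lens_diameter, m.sensor_height)


def folded_length(m: CameraModule) -> float:
    """Folded, the optical track runs along the phone's body after the prism."""
    return m.prism_size + m.track_length


# Made-up telephoto module and a made-up depth budget left over inside the phone.
tele = CameraModule(track_length=18.0, lens_diameter=5.0, sensor_height=4.0, prism_size=5.5)
DEPTH_BUDGET = 6.0

print(upright_depth(tele) <= DEPTH_BUDGET)  # False
print(folded_depth(tele) <= DEPTH_BUDGET)   # True
print(folded_length(tele))                  # 23.5
```

With these made-up figures, an 18mm optical track that could never stand upright in the phone fits once folded, at the cost of about 23.5mm of lateral space.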

![A folded or periscope camera setup mounts the sensor and main lenses on their side, and reflects light using a prism or mirror. [Image via Huawei]](https://photos5.appleinsider.com/gallery/41183-79802-Huawei-folded-lens-system-xl.jpg)

A folded or periscope camera setup mounts the sensor and main lenses on their side, and reflects light using a prism or mirror. [Image via Huawei]

Because the system frees up room between the sensor and the reflective element, designers have more freedom to use lens elements that wouldn't normally fit in a smartphone camera. Combined with the room to vary the distances between elements, this lets designers create better lens assemblies and arrangements.

With space to spare between lens elements, it may even be feasible for component producers to make those elements movable. This could give more control over where the camera focuses and enable even greater magnification.

Think of this as similar to the zoom lens on a DSLR or mirrorless camera. In many cases, changing the focal length alters the length of the lens itself, extending the barrel to change the spacing between elements.

A periscope lens would be capable of similar feats within the limitations of available space.
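The link between element spacing and focal length can be illustrated with the textbook formula for two thin lenses in air separated by a distance d: 1/f = 1/f1 + 1/f2 - d/(f1*f2). The sketch below applies it to a hypothetical pair made up of one positive and one negative lens. Real zoom lenses use several lens groups and are designed with full ray tracing, so this only demonstrates the principle.

```python
def combined_focal_length(f1: float, f2: float, d: float) -> float:
    """Effective focal length of two thin lenses in air, d apart.

    Uses 1/f = 1/f1 + 1/f2 - d/(f1*f2); all values in millimeters.
    """
    power = 1.0 / f1 + 1.0 / f2 - d / (f1 * f2)
    if power == 0:
        raise ValueError("the lens pair has no net focusing power at this spacing")
    return 1.0 / power


# Hypothetical pair: a +10mm lens followed by a -10mm lens.
for spacing in (2.0, 3.0, 4.0):
    print(f"{spacing:.0f} mm apart -> f = {combined_focal_length(10.0, -10.0, spacing):.1f} mm")
```

Moving the two elements from 2mm to 4mm apart halves the combined focal length from 50mm to 25mm. Changing focal length by shifting spacing is the basic mechanism a zoom lens exploits, and it needs room along the optical axis to work.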

Initial commercialization

Apple certainly wouldn't be the first to offer a smartphone with a periscope camera, as a few of its competitors have already shipped models using the technology.

Huawei was among the first to market a flagship smartphone with a periscope camera system: the P30 Pro, equipped with what Huawei marketed as a SuperZoom Lens. Huawei billed the arrangement as providing a 10x hybrid zoom, with some images possible at up to 50x.

An exploded view of the Huawei P30 Pro, showing how the periscope lens is oriented differently from conventional cameras.

However, Huawei's implementation wasn't perfect. For a start, while the phone included a 40-megapixel wide-angle camera and a 20-megapixel ultra-wide-angle camera, the sensor in the periscope camera had a resolution of just 8 megapixels.

The smaller sensor's optical zoom topped out at 5x magnification. To reach the 10x hybrid zoom, Huawei combined the 8MP periscope camera's image with a cropped photo from the 40MP sensor, merging the two to create a highly detailed 10x image.

The 50x zoom relied more heavily on digital zoom techniques, including cropping and image enlargement, supported by algorithmic improvements.

Apple's main rival, Samsung, introduced its own version in the Galaxy S20 Ultra in February 2020. Dubbed "Space Zoom," its periscope lens system was paired with a higher-resolution 48-megapixel sensor.

This time, the system enabled a 4x optical zoom, a 10x "lossless hybrid optic" zoom that used techniques such as sensor cropping and pixel binning, and more typical digital zoom techniques for its 100x zoom function.
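Pixel binning, one of the techniques mentioned above, combines neighboring pixels into one larger effective pixel, trading resolution for cleaner data. For example, 2x2 binning turns a 48-megapixel readout into 12 megapixels. The sketch below averages 2x2 blocks of a plain grayscale array. It is a simplification: real sensors bin at the hardware or readout level and must respect their color filter pattern, and Samsung's exact pipeline isn't described here.

```python
from typing import List


def bin_pixels(image: List[List[float]], factor: int = 2) -> List[List[float]]:
    """Average each factor x factor block of pixels into a single output pixel."""
    height, width = len(image), len(image[0])
    if height % factor or width % factor:
        raise ValueError("image dimensions must be divisible by the binning factor")
    binned = []
    for y in range(0, height, factor):
        row = []
        for x in range(0, width, factor):
            block = [image[y + dy][x + dx] for dy in range(factor) for dx in range(factor)]
            row.append(sum(block) / len(block))
        binned.append(row)
    return binned


frame = [[4 * r + c for c in range(4)] for r in range(4)]
print(bin_pixels(frame))  # [[2.5, 4.5], [10.5, 12.5]]
```

Averaging four readings per output pixel reduces random noise, at the cost of three quarters of the pixel count.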

The two releases showed the potential of a periscope-style lens system, offering far better optical zoom than is normally possible in a smartphone without sacrificing the device's physical styling.

Improved iPhone zoom?

For the moment, it seems that Apple won't be including any sort of periscope zoom in the next round of iPhone launches, though there may not be that long to wait.

A report from analyst Ming-Chi Kuo in November 2020 claimed Apple would introduce such a system in the 2022 iPhone. However, in March 2021, Kuo revised the forecast, putting its debut in 2023.

Away from analyst speculation, other reports have claimed Apple is putting in the groundwork to get a periscope lens camera in a future iPhone.

In November 2020, Apple was reported to be seeking a supplier of "folded cameras" for future iPhone models. This was said to be part of a triple-camera arrangement, in which one of the cameras would use the technology.

By December 2020, reports pointed to existing Apple camera partner LG InnoTek potentially tapping Samsung for actuators and lenses for the creation of folding camera modules. Those modules would then be destined for use in future iPhone models.

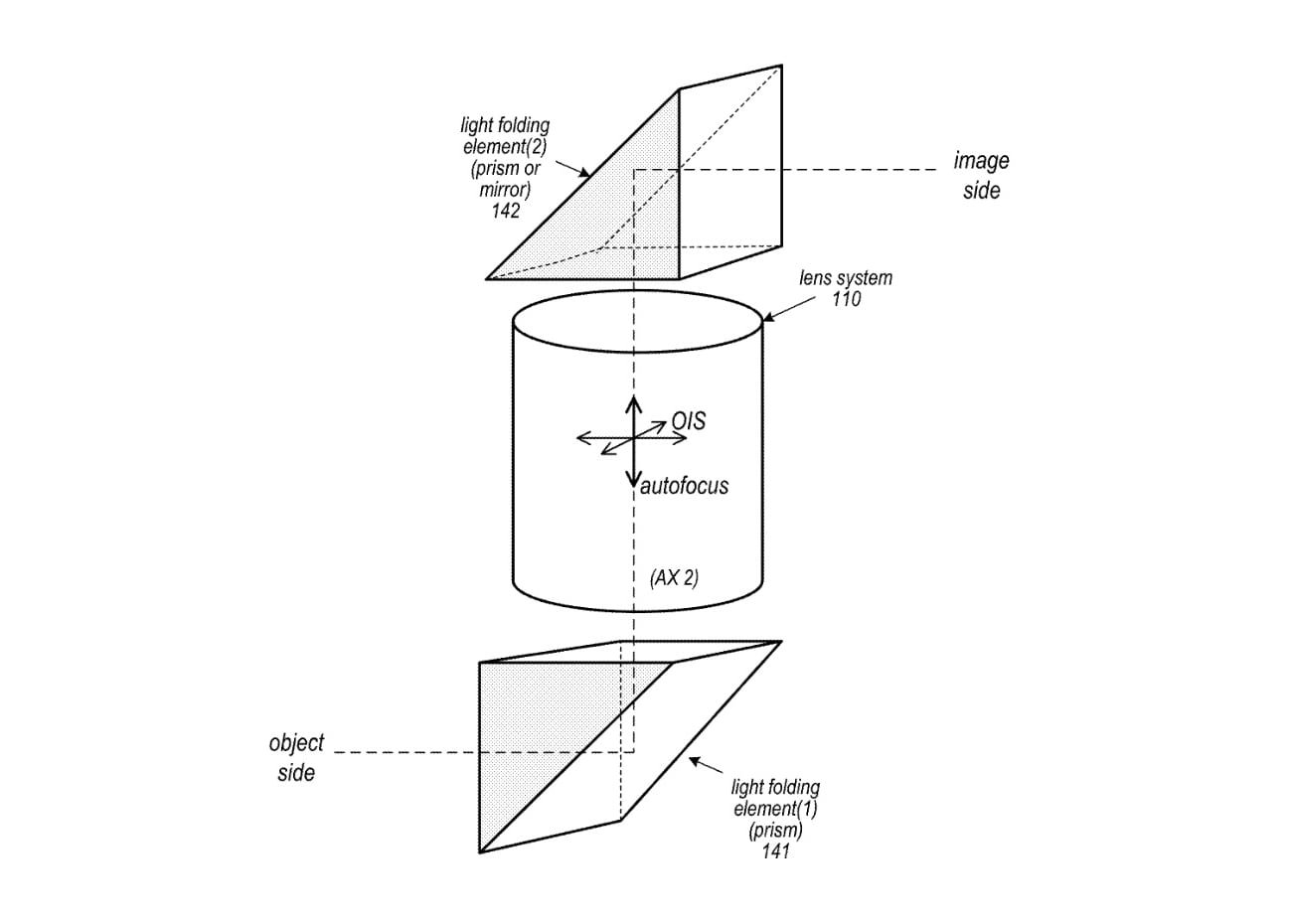

An Apple patent application image for a periscope lens that has moving elements for OIS and autofocus.

Naturally, Apple has filed multiple patent applications in the field. One that surfaced in January 2021 suggested the use of an actuator to move lens elements within a folded camera system, one that could potentially provide optical image stabilization and autofocus capabilities.

Of course, the research and development may not end up in an iPhone, though that seems the most obvious application. The technology could plausibly be used in other ways, too.

The "Apple Car," the often-rumored project for Apple to design and build its own self-driving vehicle, could use periscope lenses in its automated driving imaging systems. Control over how camera and lens systems are built could let Apple create imaging sensor arrays that are more easily hidden within the vehicle's bodywork.

All signs point to Apple employing periscope lenses in some fashion in the future. It remains to be seen when Apple will pull the trigger on the component, and how far it can push the technology.

Combined with Apple's advances in computational photography, the increased zoom from periscope lenses could help push iPhone photography to new heights.