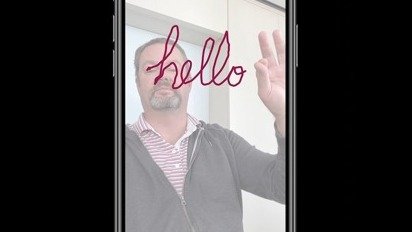

Cough analysis app could detect COVID-19 by sound alone

Researchers at MIT have used machine learning to create software capable of detecting whether a person has caught COVID-19 by analyzing their cough, a development that could eventually result in an iPhone app for daily checks.

Malcolm Owen

Mike Wuerthele

Mike Peterson

William Gallagher

Mikey Campbell

Daniel Eran Dilger