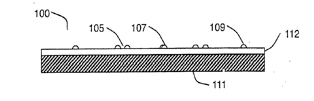

Using an "articulating frame," such a device could raise physical bumps or dots on its surface for the user to feel when it is in keyboard mode. Those surface features would retract and disappear when the device is not being used for typing. The concept is detailed in an application entitled "Keystroke Tactility Arrangement on a Smooth Touch Surface," which is similar to an application first filed back in 2007.

"The articulating frame may provide key edge ridges that define the boundaries of the key regions or may provide tactile feedback mechanisms within the key regions," the application reads. "The articulating frame may also be configured to cause concave depressions similar to mechanical key caps in the surface."

The tactile-feedback keyboard concept surfaced just as an anonymous source told The New York Times that users would be "surprised" by how they interact with the tablet.

Another example in the application describes a rigid, non-articulating frame beneath the surface. It would provide higher resistance when pressing away from the key centers, but softer resistance at the center of a virtual key, guiding hands to the proper location.

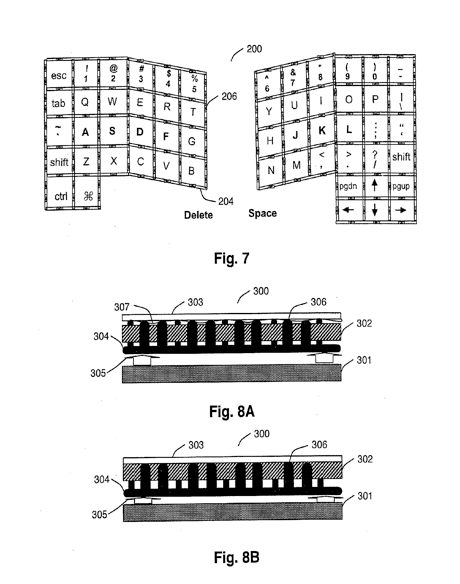

The application notes that pointing and typing have very different requirements: pointing works best on a smooth, low-friction surface, while typing is easier on keys with edges that fingertips can feel. Simply putting Braille-like dots on the 'F' and 'J' keys, as on most physical keyboards, is not enough, because it does not help users align their fingers with the outer keys.

Conversely, while placing dots on every single key would help users find their place, it would sacrifice the smooth surface that touch controls require and that users are accustomed to on a glass screen like the iPhone's.

The patent aims to offer the best of both worlds with a new device that could dynamically change its surface.

"Preferably, each key edge comprises one to four distinct bars or Braille-like dots," the application reads. "When constructed in conjunction with a capacitive multi-touch surface, the key edge ridges should be separated to accommodate the routing of the drive electrodes, which may take the form of rows, columns, or other configurations."

The system would also intelligently determine when the user wishes to type, and when they intend to use the screen as a pointing device.

"Specifically, the recognition software commands lowering of the frame when lateral sliding gestures or mouse clicking activity chords are detected on the surface," the application states. "Alternatively, when homing chords (i.e., placing the fingers on the home row) or asynchronous touches (typing activity) is detected on the surface, the recognition software commands raising of the frame."
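The raise/lower rule quoted above amounts to a small state machine. The Python sketch below is a hypothetical illustration, not Apple's implementation: it assumes the recognition software has already classified the touch activity (the `Activity` labels, `FrameState`, and `next_frame_state` are names invented here), and it assumes the frame simply keeps its current position when no recognized activity is detected, a detail the quoted excerpt does not specify.

```python
from enum import Enum, auto


class Activity(Enum):
    """Hypothetical labels for what the recognition software has detected."""
    LATERAL_SLIDE = auto()      # sliding gesture across the surface
    MOUSE_CLICK_CHORD = auto()  # finger chord interpreted as a mouse click
    HOMING_CHORD = auto()       # fingers placed on the home row
    ASYNC_TOUCHES = auto()      # staggered taps, i.e. typing activity
    OTHER = auto()              # anything else (assumed: leave frame alone)


class FrameState(Enum):
    RAISED = auto()   # ridges/dots present: keyboard mode
    LOWERED = auto()  # smooth surface: pointing mode


# Activities the application associates with each frame command.
POINTING = {Activity.LATERAL_SLIDE, Activity.MOUSE_CLICK_CHORD}
TYPING = {Activity.HOMING_CHORD, Activity.ASYNC_TOUCHES}


def next_frame_state(current: FrameState, activity: Activity) -> FrameState:
    """Return the frame state after a detected activity."""
    if activity in POINTING:
        return FrameState.LOWERED  # pointing wants a smooth surface
    if activity in TYPING:
        return FrameState.RAISED   # typing wants tactile key edges
    return current  # assumption: unrecognized activity changes nothing


if __name__ == "__main__":
    state = FrameState.LOWERED
    for act in (Activity.HOMING_CHORD, Activity.ASYNC_TOUCHES,
                Activity.LATERAL_SLIDE, Activity.OTHER):
        state = next_frame_state(state, act)
        print(act.name, "->", state.name)
```

Run as a script, this sequence raises the frame once the fingers settle on the home row, keeps it raised while typing, and lowers it again on a sliding gesture. The hard part in a real device would be the activity classification itself, which this sketch deliberately leaves out.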

Apple filed the application on Aug. 28, 2009. The invention is credited to Wayne Carl Westerman of San Francisco, Calif.

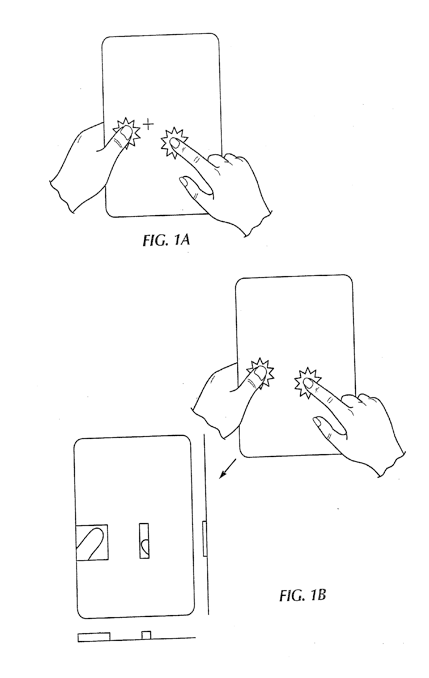

Another Apple patent application revealed this week deals with a multi-touch controller that uses transparent touch sensors and does not require an opaque surface. The description is included in a patent application entitled "Multipoint Touch Surface Controller."

"While virtually all commercially available touch screen based systems available today provide single point detection only and have limited resolution and speed, other products available today are able to detect multiple touch points," the application reads. "Unfortunately, these products only work on opaque surfaces because of the circuitry that must be placed behind the electrode structure."

The described invention would include drive electronics that stimulate the multi-touch sensor and sensing circuits for reading the sensor in a single integrated package. This is said to be different from some previous multi-touch technology, which has been limited in terms of detectable points due to the size of the detection circuitry.

Apple filed the application on Aug. 27, 2009. The invention is credited to Steven P. Hotelling, Christoph H. Krah and Brian Quentin Huppi of California.

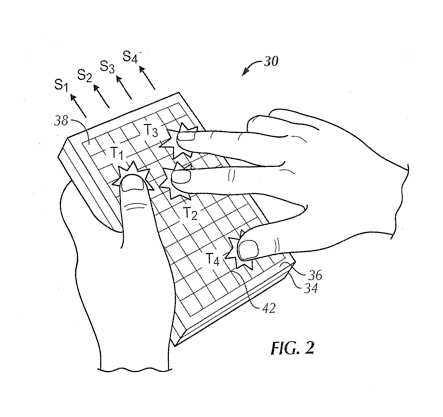

In October, another patent application showed off how a multi-touch tablet interface might work, with a surface that could detect ten individual fingers, along with resting palms, and identify each of them separately. The hand-based system was said to allow "unprecedented integration of typing, resting, pointing, scrolling, 3D manipulation, and handwriting into a versatile, ergonomic computer input device."

Apple is said to have been at work on its rumored tablet device for many years, and the project has reportedly been the number one focus of CEO Steve Jobs since he returned to the company this summer.