Apple's decision to design its own chips has helped it exert more control over the capabilities of the iPhone 17 lineup. It will also help further its AI ambitions.

Apple may be one of the leading companies in the smartphone market, but its AI strategy in the form of Apple Intelligence hasn't quite lived up to its promises. However, with Apple's latest iPhone launches, it's placing itself in a better position for AI growth, thanks to its increased control over the device.

In an interview with CNBC published on Sunday, Apple VP of platform architecture Tim Millet and Arun Mathias, VP of wireless software technologies and ecosystems, talked about Apple's new chips in the iPhone 17 generation.

The debut of the new N1 wireless chip and the second-generation C1X modem is a big wireless play for Apple, one that gives the company even more control over how the hardware acts and performs.

"That's where the magic is," said Millet. "When we have control, we are able to do things beyond what we can do by buying a merchant silicon part."

This is in reference to Apple's strategy of gradually bringing more of the design of iPhone components in-house, instead of relying on third-party solutions. Most famously, this has manifested in the billion-dollar purchase of Intel's modem business in 2019.

In one example of the benefits, Mathias explains that Wi-Fi access points help inform a device of its location. Doing so means that GPS isn't needed, which saves power. By doing this in the background and not waking the application processor as often, Mathias says, location information can be determined "significantly more efficiently" than before.

The iPhone Air, with its C1X modem, is the exception in the range, with incumbent iPhone modem supplier Qualcomm still used in the iPhone 17 and iPhone 17 Pro models. While it is only an initial play by Apple in the one new model, it again gives Apple more control.

Mathias explains that the C1X is "up to twice as fast" as the C1 in the iPhone 16e. Importantly, it also uses 30% less energy than the Qualcomm modem used in the iPhone 16 Pro.

An AI-accelerated future

While the modem benefits are quite clear-cut, the AI side is somewhat muddier, in part because Apple hasn't yet delivered a model to consumers in the vein of those from Google or OpenAI's ChatGPT.

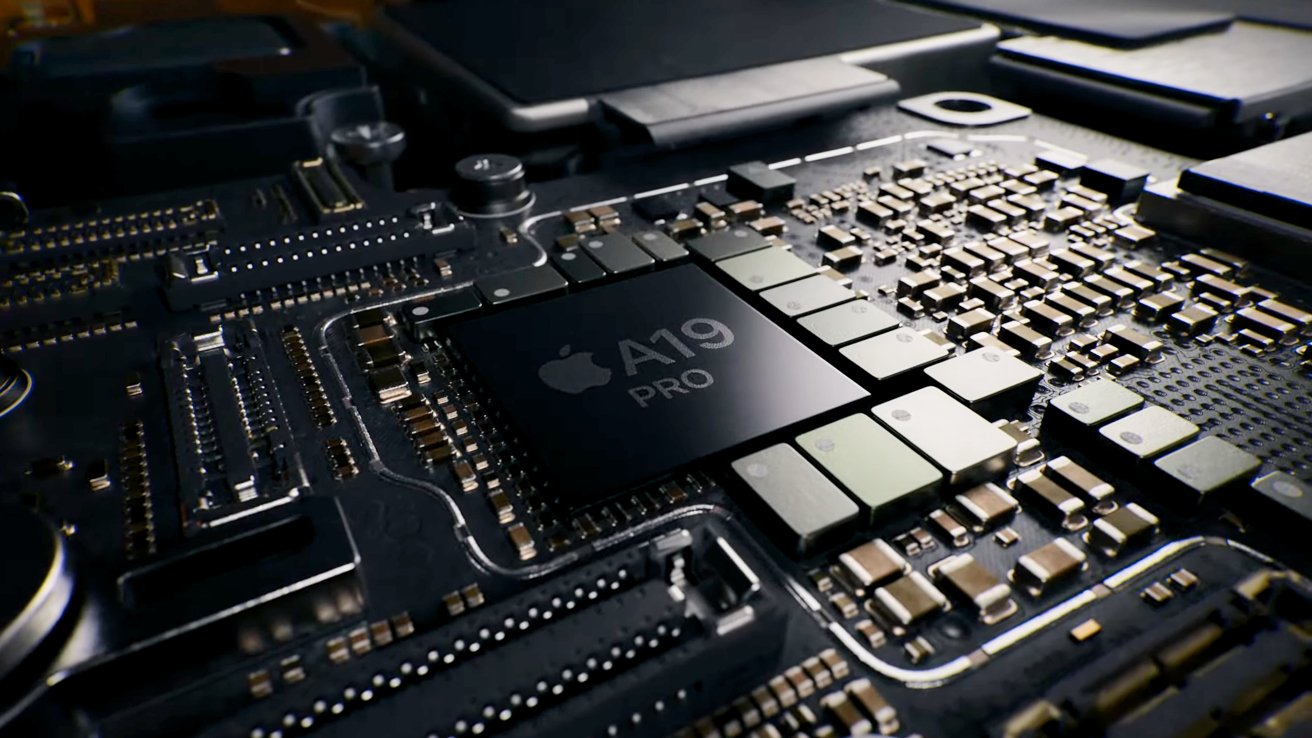

But, in the A19 Pro, Apple has moved to a new chip architecture that builds upon its existing Neural Engine processing. This time, Apple's added neural accelerators to the GPU cores, which will help speed up machine learning-based tasks.

Millet insists that Apple is "building the best on-device AI capability that anyone else has." The new iPhones will also be capable of "important on-device AI workloads" that are coming in the future.

Though the privacy that comes with on-device processing is important, Millet says that efficiency and responsiveness are also benefits of the enhanced level of control.

With the neural processing in the iPhone reaching MacBook Pro-class performance, Millet says it is a "big, big step forward in ML compute." The "dense matrix math" in the Neural Engine wasn't previously available in the GPU, but it is now in the A19 Pro.

With neural accelerators apparently working similarly to tensor cores on Nvidia's AI chips, Millet points to the benefits in the future.

The neural processing is implemented so that a program can be written for a small processor whose instruction set has been expanded to use a "new class of computer." That software could potentially switch between 3D rendering instructions and neural processing instructions, Millet proposes.