The iPhone may soon support the Model Context Protocol, a move that could make third-party AI tools more useful on Apple devices than ever before.

On Monday, Apple released the first developer beta of iOS 26.1. Code in the beta suggests that Apple is working on support for the Model Context Protocol, or MCP.

In a nutshell, MCP gives AI systems a universal way to access and interact with the data and tools they need, rather than relying on a custom integration for each data source.
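To show what "universal protocol" means in practice, here is a minimal Python sketch of the kind of message an AI client sends to an MCP server. MCP messages are JSON-RPC 2.0, and `tools/call` is the protocol's method for asking a server to run one of its tools. The tool name `get_weather` and its arguments are hypothetical; a real server advertises its own tools in response to a `tools/list` request.

```python
import json


def make_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 request asking an MCP server to run a tool."""
    message = {
        "jsonrpc": "2.0",        # MCP messages use JSON-RPC 2.0
        "id": request_id,        # lets the client match the server's reply
        "method": "tools/call",  # MCP method for invoking a tool
        "params": {
            "name": tool_name,       # which tool to run
            "arguments": arguments,  # tool-specific inputs
        },
    }
    return json.dumps(message)


# "get_weather" is a hypothetical tool used only for illustration.
print(make_tool_call(1, "get_weather", {"city": "Cupertino"}))
```

Because every server speaks the same message format, a client written once can talk to any MCP server, which is the point of the protocol.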

As 9to5Mac notes, the Model Context Protocol was proposed by Anthropic but has since been adopted by leading AI companies such as OpenAI and Google. Based on the first iOS 26.1 developer beta, Apple appears set to become the latest tech giant to support MCP.

App Intents, Siri, and MCP

By supporting the Model Context Protocol, Apple would make it possible for third-party AI systems to interact with iPhone, iPad, and Mac apps. Apple's existing App Intents system offers clues to how this might work.

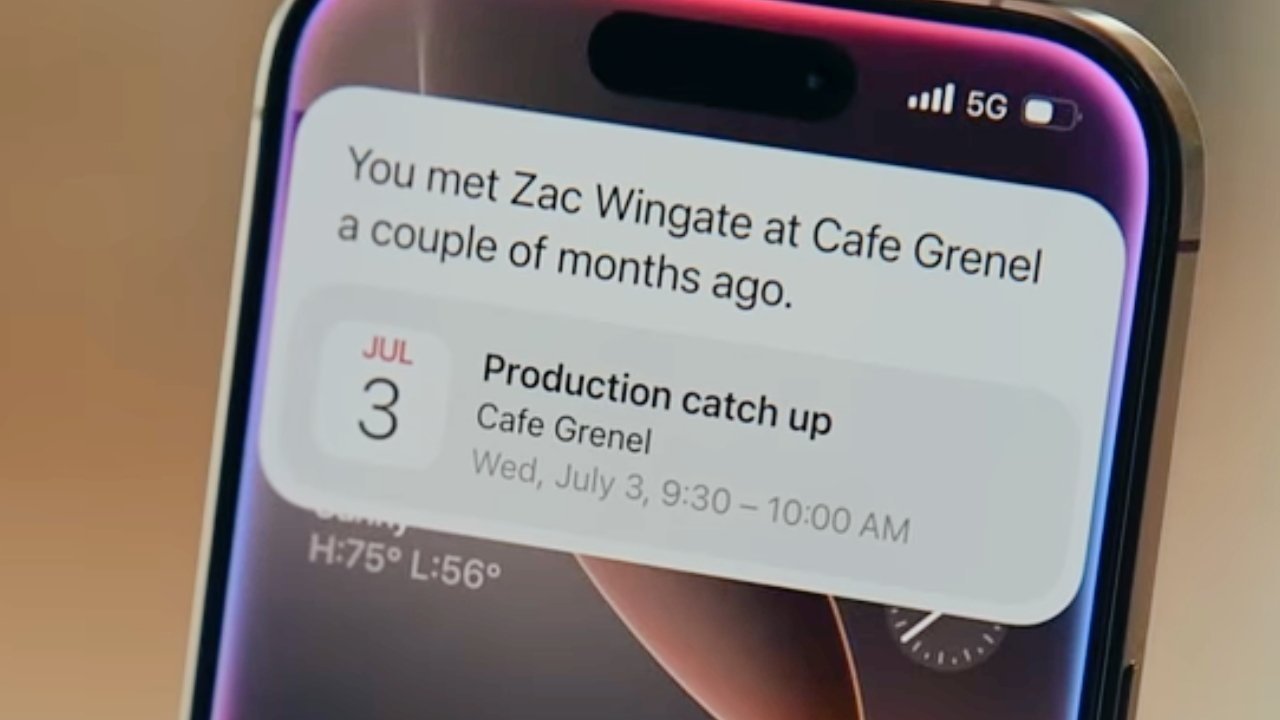

App Intents let Siri perform actions inside apps, and the same capability is available to Apple's Shortcuts app. Apple has been improving the system, and those improvements are expected to underpin the long-awaited Siri overhaul.

According to developer documentation released by Apple, developers can test app intents that make onscreen content available to Siri and Apple Intelligence. Put simply, users will be able to send whatever is on screen to AI for parsing.

Siri might one day be able to answer questions about a webpage on screen, or edit a photo and then send it at the user's request. The assistant might also gain the ability to add a comment to an Instagram post or add items to an online shopping cart, all without the user touching the display.

If Apple implements the Model Context Protocol, as it apparently plans to, third-party tools could interact with apps in the same way. In theory, users could ask OpenAI's ChatGPT to perform in-app actions on iPhone, iPad, and Mac.
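To make that concrete, here is a minimal Python sketch of how an app action might be exposed as an MCP tool. It is not how Apple would implement it; the `add_to_cart` tool, its arguments, and the in-memory cart are all hypothetical. The server advertises the tool (name, description, and a JSON Schema for its inputs) in reply to `tools/list`, and runs it in reply to `tools/call`, returning text content the AI model can read.

```python
import json

# Hypothetical tool: an app advertising an "add to cart" action,
# much as it might expose the same action through an App Intent.
ADD_TO_CART_TOOL = {
    "name": "add_to_cart",
    "description": "Add a product to the user's shopping cart.",
    "inputSchema": {  # JSON Schema describing the tool's arguments
        "type": "object",
        "properties": {
            "product_id": {"type": "string"},
            "quantity": {"type": "integer", "minimum": 1},
        },
        "required": ["product_id"],
    },
}

cart: dict[str, int] = {}  # in-memory stand-in for the app's real cart


def add_to_cart(product_id: str, quantity: int = 1) -> str:
    cart[product_id] = cart.get(product_id, 0) + quantity
    return f"Cart now holds {cart[product_id]} x {product_id}"


HANDLERS = {"add_to_cart": add_to_cart}


def error(request_id, code: int, message: str) -> dict:
    return {"jsonrpc": "2.0", "id": request_id,
            "error": {"code": code, "message": message}}


def handle_request(raw: str) -> dict:
    """Answer a JSON-RPC 2.0 request for tools/list or tools/call.

    Arguments are not validated against inputSchema in this sketch.
    """
    request = json.loads(raw)
    method, request_id = request["method"], request["id"]
    if method == "tools/list":
        result = {"tools": [ADD_TO_CART_TOOL]}
    elif method == "tools/call":
        params = request["params"]
        handler = HANDLERS.get(params["name"])
        if handler is None:
            # -32602 is JSON-RPC's standard "invalid params" code
            return error(request_id, -32602, f"Unknown tool: {params['name']}")
        text = handler(**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}]}
    else:
        # -32601 is JSON-RPC's standard "method not found" code
        return error(request_id, -32601, "Method not found")
    return {"jsonrpc": "2.0", "id": request_id, "result": result}


reply = handle_request(json.dumps({
    "jsonrpc": "2.0", "id": 2, "method": "tools/call",
    "params": {"name": "add_to_cart",
               "arguments": {"product_id": "case-123", "quantity": 2}},
}))
print(reply["result"]["content"][0]["text"])  # Cart now holds 2 x case-123
```

The design mirrors App Intents in spirit: the app declares what it can do and what inputs each action needs, and the assistant decides when to call it.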

There's another potential application, however: MCP might also make it possible for Siri to gather more data from the web. Previous rumors have suggested that Siri's search features will be built on Apple's own models.

Apple's plans for a revamped Siri

According to a September 2025 rumor, Apple plans to introduce a web search feature powered by Apple Foundation Models. Reportedly, it will be able to call on Google Gemini to improve Siri's ability to gather and summarize information.

The new Siri will reportedly have three core components: a planner, a search operator, and a summarizer. Apple Foundation Models will act as the planner and search operator, since those roles involve handling on-device personal data.

Retrieving and collating data from the web may fall to Google's model. Google has reportedly provided a version of Gemini that runs on Private Cloud Compute and acts as the web summarizer.
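The rumored three-stage design can be pictured as a simple pipeline. The Python sketch below is purely illustrative: every function is a hypothetical stand-in with trivial logic, and nothing here reflects how Apple's or Google's models actually work. It only shows how a planner, a search operator, and a summarizer would hand work to one another.

```python
from dataclasses import dataclass


@dataclass
class Plan:
    query: str
    needs_web: bool


def plan(request: str) -> Plan:
    # Stand-in for the planner: a naive keyword rule decides whether
    # the request needs web data at all.
    words = ("news", "latest", "today")
    needs_web = any(word in request.lower() for word in words)
    return Plan(query=request, needs_web=needs_web)


def search(p: Plan) -> list[str]:
    # Stand-in for the search operator: returns a canned snippet
    # instead of querying the web.
    if not p.needs_web:
        return []
    return [f"snippet about {p.query!r}"]


def summarize(snippets: list[str]) -> str:
    # Stand-in for the summarizer, the role the rumor assigns to a
    # Gemini model running on Private Cloud Compute.
    if not snippets:
        return "Answered on device without a web search."
    return f"Summary of {len(snippets)} source(s): " + "; ".join(snippets)


def answer(request: str) -> str:
    # Each stage feeds the next: plan -> search -> summarize.
    return summarize(search(plan(request)))


print(answer("latest news"))
print(answer("set a timer"))
```

Splitting the work this way keeps the stage that touches personal data separate from the stage that summarizes web results, which matches the division of labor the rumor describes.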

In theory, MCP support on iOS could let Google Gemini do much more on iPhone than provide search results, such as performing in-app actions across third-party apps.

Apple previously promised that Siri would be able to perform advanced in-app tasks, but the upgrade has been delayed. Letting third-party tools do the same could serve as a stopgap, and it would give consumers more freedom of choice.

At the same time, MCP might let Siri obtain information from the web without relying on Google Gemini or other AI products, because the protocol is designed to let AI systems access data from many different sources.

Currently, Siri can only perform a basic, privacy-preserving web search when it needs information. More advanced requests are handed off to ChatGPT, with the user's consent.

Ultimately, Apple appears to be looking to collaborate with AI companies rather than build a copycat product. The approach is arguably unique, and it could encourage meaningful development while offering users multiple AI options.

The revamped Siri is expected to debut in the spring of 2026, likely with the iOS 26.4 update.
