In a sign of Apple Intelligence's worth, third-party developers are now using it to make their apps more personal and their users more productive.

Apple opened up Apple Intelligence to third-party developers at WWDC 2025, giving them access to what it calls the Foundation Models framework. That means developers can call on the same Apple Intelligence that runs on-device on iPhones, iPads, and Macs.

Now that iOS 26, iPadOS 26, and macOS Tahoe have been officially released, developers are updating their apps to take advantage of the AI features provided via Apple Intelligence.

"We're excited to see developers around the world already bringing privacy-protected intelligence features into their apps," said Susan Prescott, Apple's vice president of Worldwide Developer Relations, in a statement. "From generating journaling prompts that will spark creativity in Stoic, to conversational explanations of scientific terms in CellWalk, it's incredible to see the powerful new capabilities that are already enhancing the apps people use every day."

What developers get

The Foundation Models framework gives developers an API for passing prompts to Apple Intelligence. Requests are handled privately, with specific limitations but also specific freedoms:

- No limit on user requests

- No tokens or API keys for the user to install

- Access to the same Apple Intelligence on device

That last part is significant, because it is both a limitation and a guarantee of privacy. Developers can't use the full Apple Intelligence LLM in the cloud, nor can they use extensions such as sending requests directly to ChatGPT.
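For a sense of what passing a prompt looks like in practice, here is a minimal Swift sketch. It assumes the `LanguageModelSession` and `SystemLanguageModel` types from Apple's FoundationModels framework on iOS 26 or macOS 26. The helper names `journalingRequest(topic:)` and `journalingPrompt(topic:)`, and the instruction text, are hypothetical and are not taken from any app mentioned here.

```swift
#if canImport(FoundationModels)
import FoundationModels
#endif

/// Hypothetical helper: builds the text sent to the model.
/// Kept separate so it can be checked without a device.
func journalingRequest(topic: String) -> String {
    "Suggest one short journaling prompt about \"\(topic)\"."
}

#if canImport(FoundationModels)
/// Hypothetical helper: asks the on-device model for a journaling prompt.
/// Returns nil when Apple Intelligence is not available on this device.
@available(iOS 26.0, macOS 26.0, *)
func journalingPrompt(topic: String) async throws -> String? {
    // The model may be unavailable, for example on unsupported hardware
    // or when Apple Intelligence is switched off.
    guard case .available = SystemLanguageModel.default.availability else {
        return nil
    }

    // Illustrative instructions only. Note there is no API key or token.
    let session = LanguageModelSession(
        instructions: "You are a thoughtful journaling assistant."
    )

    // The request is sent to the on-device model.
    let response = try await session.respond(to: journalingRequest(topic: topic))
    return response.content
}
#endif
```

An app would call `try await journalingPrompt(topic: "gratitude")` from an async context and fall back to its existing behavior when the result is `nil`.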

Example features

Nonetheless, developers have been implementing Apple Intelligence across a wide range of apps. Apple has now picked out more than 20 to champion, ranging from To Do apps to mental health ones.

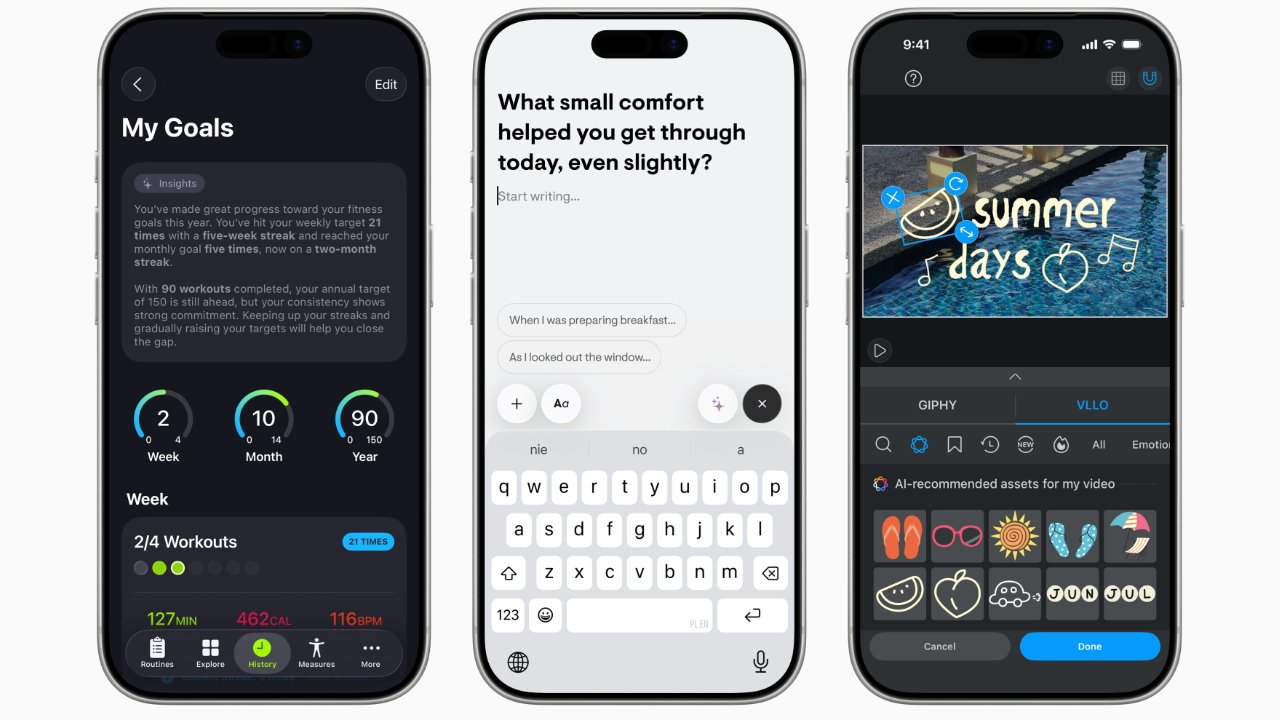

Significant examples include SmartGym, which uses the feature to learn from a user's workouts and recommend changes.

"The Foundation Models framework enables us to deliver on-device features that were once impossible," said Matt Abras, SmartGym's CEO. "It's simple to implement, yet incredibly powerful in its capabilities."

Similarly, the Stoic mental health app can automatically respond with a compassionate, encouraging message if a user logs a low mood.

"What amazed me was how quickly we could build these ideas," said Maciej Lobodzinski, Stoic's founder. "[It] let our small team deliver huge value fast while keeping every user's data private, with all insights and prompts generated without anything ever leaving their device."

Education and productivity

CellWalk takes users through a 3D journey around molecules. Now it can automatically tailor its explanations to the user's level of knowledge.

"Our visuals have always been interactive, but with the Foundation Models framework, the text itself comes alive," said CellWalk's Tim Davison. "Scientific data hidden in our app becomes a dynamic system that adapts to each learner, and the reliable structured data produced by the model made integration with our app seamless."

Apple also says that the task manager app Stuff lets a user write "Call Sophia Friday," and it fills in the right details. That's the kind of natural language processing that has long been in calendar apps, but it is now in a basic To Do app.

More heavyweight To Do apps such as OmniFocus are adopting Apple Intelligence too. In OmniFocus, it can generate whole projects and suggested tasks to help a user get started.